Clear Sky Science · en

Designing an explainable algorithm based on XGBoost and genetic algorithm for predicting hospitalization needs of COVID-19 patients

Why this matters for everyday care

During the COVID-19 pandemic, doctors often had to decide very quickly who needed a hospital bed and who could safely recover at home. This paper describes a computer-based tool designed to help with that decision. It tries to combine two important qualities: strong accuracy in spotting patients at risk and clear, simple explanations that doctors can actually trust and use.

Turning patient records into early warnings

The researchers analyzed medical records from 1,278 adults with COVID-19 seen at a single hospital in Iran between April 2020 and March 2021. For each person, they collected 27 pieces of information, including age, oxygen level, blood tests such as C-reactive protein and D-dimer, symptoms like fever or shortness of breath, and existing illnesses such as diabetes or high blood pressure. Only records with solid laboratory or scan evidence of COVID-19 and reasonably complete data were kept. The team carefully cleaned the dataset, filled in some missing values with statistical methods, removed obvious errors, and then split the data into separate groups for building and testing their models.
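The summary does not say which statistical methods filled the missing values or how the split was made, so the following is only a minimal sketch of one common approach: a reproducible shuffle-and-split followed by median imputation. The field names, the 80/20 ratio, and the choice of the median are all assumptions made for illustration. Medians are computed from the training group only, a common precaution against leaking test information, and the paper's own order of operations may differ.

```python
import random
from statistics import median

FEATURES = ["age", "spo2", "crp"]  # hypothetical subset of the 27 variables

def split_records(rows, test_fraction=0.2, seed=0):
    """Shuffle reproducibly and return (train, test) lists."""
    rng = random.Random(seed)
    shuffled = list(rows)
    rng.shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

def fit_medians(rows, columns):
    """Median of the observed (non-missing) values in each column."""
    return {c: median(r[c] for r in rows if r[c] is not None) for c in columns}

def impute(rows, medians):
    """Return copies of the rows with missing values replaced by the medians."""
    return [{**r, **{c: m for c, m in medians.items() if r[c] is None}} for r in rows]

# Made-up records; None marks a missing measurement.
records = [
    {"age": 64, "spo2": 88, "crp": 95.0, "hospitalized": 1},
    {"age": 41, "spo2": None, "crp": 12.0, "hospitalized": 0},
    {"age": 72, "spo2": 90, "crp": 70.0, "hospitalized": 1},
    {"age": 35, "spo2": 97, "crp": None, "hospitalized": 0},
    {"age": 58, "spo2": 93, "crp": 40.0, "hospitalized": 0},
    {"age": 67, "spo2": 87, "crp": 120.0, "hospitalized": 1},
    {"age": 29, "spo2": 98, "crp": 5.0, "hospitalized": 0},
    {"age": 50, "spo2": 95, "crp": 15.0, "hospitalized": 0},
    {"age": 80, "spo2": 91, "crp": 60.0, "hospitalized": 1},
    {"age": 45, "spo2": 96, "crp": 20.0, "hospitalized": 0},
]

train, test = split_records(records, test_fraction=0.2, seed=0)
medians = fit_medians(train, FEATURES)   # learned from training rows only
train_clean = impute(train, medians)
test_clean = impute(test, medians)       # the same medians are reused for the test rows
```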

Building a powerful prediction engine

At the heart of the system is a machine-learning method called XGBoost, which builds many small decision trees in sequence and is well suited to finding patterns in complex tabular data. The tool learns from earlier patients which combinations of measurements tend to signal a need for hospital care. When tested 100 times on data held out from training, it separated higher-risk from lower-risk patients with an area under the curve (AUC) of 0.85, on a scale where 0.5 is no better than chance and 1.0 is perfect ranking. It identified roughly three out of four patients who truly needed hospitalization and correctly reassured about nine out of ten people who did not. Compared with the other approaches tested (logistic regression, random forests, a simple neural network, and another tree-based method called LightGBM), XGBoost gave the best mix of accuracy and reliability.
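To make the three reported quantities concrete, here is a small sketch of how sensitivity ("three out of four who needed hospitalization"), specificity ("nine out of ten who did not"), and the area under the curve can be computed from true outcomes and predicted risks. The ten patients, their scores, and the 0.5 cutoff are invented for illustration and are not the study's data.

```python
def sensitivity_specificity(y_true, y_pred):
    """Share of true admissions caught, and share of non-admissions correctly cleared."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)

def auc(y_true, scores):
    """Probability that a random admitted patient gets a higher score than a
    random non-admitted one (ties count as half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Invented example: 4 admitted patients (1) and 6 not admitted (0).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.4, 0.2, 0.2, 0.1, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed cutoff of 0.5

sens, spec = sensitivity_specificity(y_true, y_pred)
area = auc(y_true, scores)
```

The AUC depends only on how patients are ranked, whereas sensitivity and specificity change with the chosen cutoff, which is why the article reports them separately.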

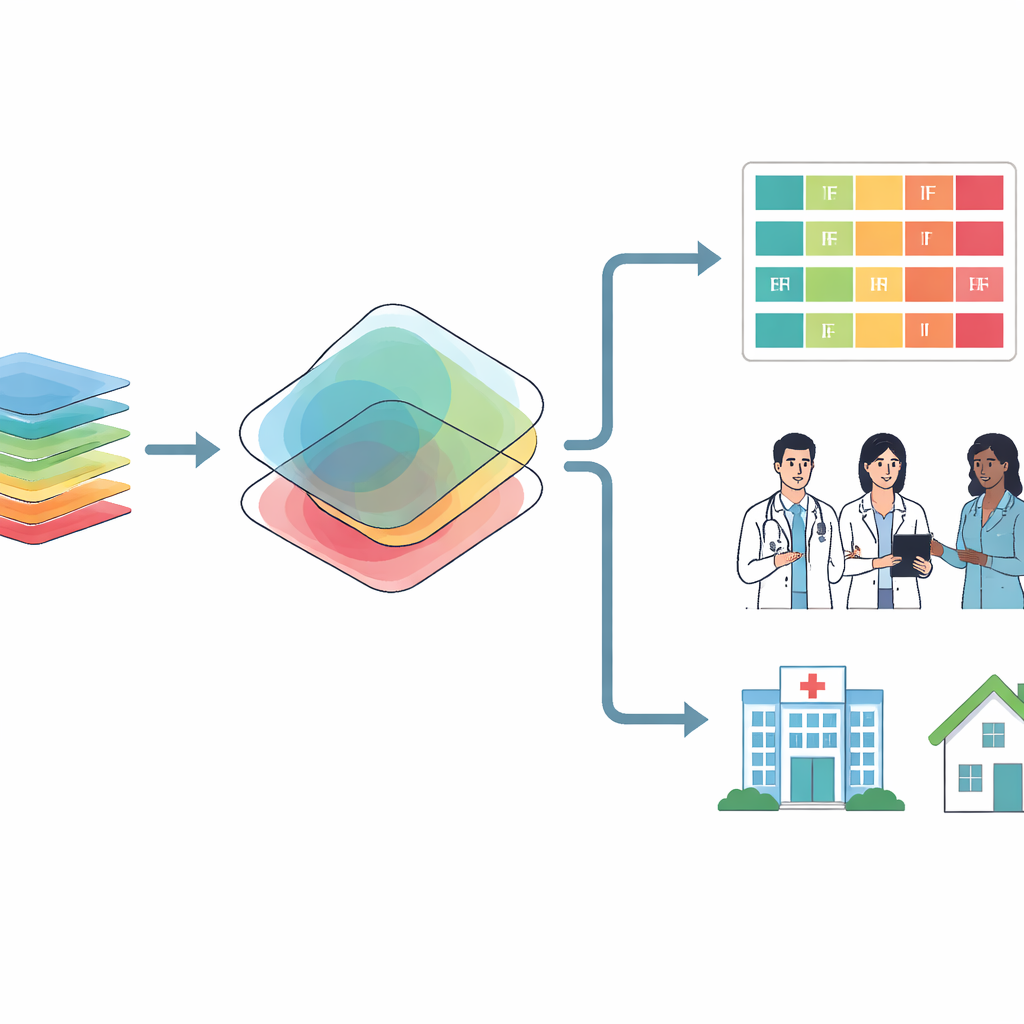

From black box to clear rules for doctors

Purely statistical models can feel like a black box: they give a risk score but not a human-friendly reason. To open that box, the team added a second layer that turns the model’s behavior into short, easy-to-read rules of the form “IF these conditions are present, THEN hospitalization is likely.” They first trained a set of small decision trees that use only a few conditions at a time, then treated each path through these trees as a candidate rule. A genetic algorithm—an optimization method inspired by evolution—was used to trim and refine these rules, keeping only those that were both accurate and applied to enough patients to be useful. Finally, ten physicians from relevant specialties graded the rules, keeping only ones that were medically sensible and clear. This process produced 40 final rules, 20 pointing toward hospitalization and 20 toward safe outpatient care.
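The idea of trimming a candidate rule with a genetic algorithm can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: the fitness function (precision minus a small length penalty, zero below a minimum coverage), the population size, the mutation rate, and the toy patients are all invented here. Each individual is a bitmask saying which conditions of a rule to keep, and the rule is assumed to have at least two conditions.

```python
import operator
import random

OPS = {"<": operator.lt, ">=": operator.ge}

def matches(patient, conditions):
    """True if the patient satisfies every (feature, operator, threshold) condition."""
    return all(OPS[op](patient[feat], thr) for feat, op, thr in conditions)

def fitness(mask, rule, patients, labels, min_coverage=0.2):
    """Assumed fitness: precision of the trimmed rule, lightly penalized for length,
    and zero if the rule is empty or applies to too few patients."""
    kept = [c for c, keep in zip(rule, mask) if keep]
    covered = [y for p, y in zip(patients, labels) if matches(p, kept)]
    if not kept or len(covered) / len(patients) < min_coverage:
        return 0.0
    return sum(covered) / len(covered) - 0.01 * len(kept)

def trim_rule(rule, patients, labels, generations=30, pop_size=12, seed=0):
    """Evolve bitmasks over the rule's conditions and return the best mask found."""
    rng = random.Random(seed)
    n = len(rule)
    score = lambda m: fitness(m, rule, patients, labels)
    # Start from the untrimmed rule plus random masks.
    population = [tuple([1] * n)]
    population += [tuple(rng.randint(0, 1) for _ in range(n)) for _ in range(pop_size - 1)]
    for _ in range(generations):
        population.sort(key=score, reverse=True)
        next_gen = population[:2]  # elitism: the two best masks survive unchanged
        while len(next_gen) < pop_size:
            a = max(rng.sample(population, 3), key=score)  # tournament selection
            b = max(rng.sample(population, 3), key=score)
            cut = rng.randint(1, n - 1)                    # one-point crossover
            child = tuple(bit ^ (rng.random() < 0.1) for bit in a[:cut] + b[cut:])
            next_gen.append(child)
        population = next_gen
    return max(population, key=score)

# Toy data: label 1 = hospitalized. Values are invented.
patients = [
    {"spo2": 88, "crp": 90, "cough": 1}, {"spo2": 90, "crp": 70, "cough": 0},
    {"spo2": 91, "crp": 60, "cough": 1}, {"spo2": 89, "crp": 20, "cough": 1},
    {"spo2": 96, "crp": 80, "cough": 1}, {"spo2": 97, "crp": 10, "cough": 0},
    {"spo2": 95, "crp": 15, "cough": 1}, {"spo2": 98, "crp": 5, "cough": 0},
    {"spo2": 87, "crp": 120, "cough": 0}, {"spo2": 94, "crp": 30, "cough": 1},
]
labels = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]

# Candidate rule as it might come from one path of a small tree.
rule = [("spo2", "<", 92), ("crp", ">=", 50), ("cough", ">=", 1)]
best_mask = trim_rule(rule, patients, labels)
trimmed = [c for c, keep in zip(rule, best_mask) if keep]
```

Because the untrimmed rule is part of the starting population and the best masks are always carried forward, the result can never score worse than the original rule under this fitness. The physician review described above would still be a separate, human step.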

What the model learned about risk

When the researchers probed which measurements mattered most, a small group stood out. Low oxygen saturation, high C-reactive protein, older age, increased D-dimer, high ferritin, and low lymphocyte percentage had the biggest impact on predictions, matching frontline experience that oxygen levels and signs of inflammation or clotting are crucial. Conditions like diabetes, significant lung involvement on CT scans, and shortness of breath also played a role but were somewhat less central. Common symptoms such as cough or muscle aches contributed little to deciding who needed a hospital bed. The team also checked performance across men and women, younger and older patients, and those with or without major chronic diseases. Differences were small and not statistically significant, suggesting the tool behaved fairly across these groups, at least in this dataset.

How this could help in future outbreaks

In practice, the system would work in two stages. First, the XGBoost model calculates a hospitalization risk from a patient’s basic information, vital signs, and routine blood tests. Second, the tool looks for one of the expert-approved rules that matches this patient—such as a certain combination of low oxygen, high inflammatory markers, and age. If a matching rule is found that agrees with the model’s prediction, the tool presents that rule to the clinician as the reasoning behind the suggested decision. The authors argue that this two-part design—accurate prediction plus simple, vetted rules—could make artificial intelligence more acceptable in real clinics. Because the rule-generation process is modular, similar systems could be retrained quickly for new infectious diseases using locally collected data, helping hospitals triage patients and manage scarce resources during future health crises.
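The two-stage flow described above can be sketched in a few lines. Everything specific here is hypothetical: `risk_score` is a hand-written stand-in for the trained XGBoost model with made-up coefficients, the two rules and their thresholds are invented examples rather than any of the 40 published rules, and the 0.5 cutoff is an assumption.

```python
import math
import operator

OPS = {"<": operator.lt, ">=": operator.ge}

def risk_score(patient):
    """Stand-in for the trained model: a logistic score with invented coefficients."""
    z = (0.25 * (94 - patient["spo2"])
         + 0.02 * (patient["crp"] - 40)
         + 0.03 * (patient["age"] - 55))
    return 1 / (1 + math.exp(-z))

# Hypothetical expert-approved rules; thresholds are illustrative only.
RULES = [
    {"if": [("spo2", "<", 92), ("crp", ">=", 50)], "then": "hospitalize"},
    {"if": [("spo2", ">=", 95), ("age", "<", 50)], "then": "outpatient"},
]

def triage(patient, threshold=0.5):
    """Stage 1: score the patient. Stage 2: find a rule that matches the patient
    and agrees with the model's decision. Returns (decision, risk, rule or None)."""
    risk = risk_score(patient)
    decision = "hospitalize" if risk >= threshold else "outpatient"
    for rule in RULES:
        agrees = rule["then"] == decision
        applies = all(OPS[op](patient[feat], thr) for feat, op, thr in rule["if"])
        if agrees and applies:
            return decision, risk, rule
    return decision, risk, None  # no supporting rule: the score is shown without one

severe = triage({"spo2": 88, "crp": 90, "age": 70})
mild = triage({"spo2": 97, "crp": 10, "age": 35})
borderline = triage({"spo2": 93, "crp": 45, "age": 60})
```

In this sketch a patient for whom no agreeing rule applies still receives a risk score, just without an accompanying explanation. How the real tool handles that case is not stated in the summary.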

Citation: Abkar, A., Mehrabi, M., Golabpour, A. et al. Designing an explainable algorithm based on XGBoost and genetic algorithm for predicting hospitalization needs of COVID-19 patients. Sci Rep 16, 10210 (2026). https://doi.org/10.1038/s41598-026-40120-6

Keywords: COVID-19 triage, hospitalization prediction, explainable AI, clinical decision support, machine learning in healthcare