Clear Sky Science · en

LLM-DWA: a hybrid path planning framework combining large language models with the dynamic window approach

Smarter routes for everyday robots

From vacuum cleaners to warehouse carts, mobile robots are becoming common in homes and workplaces. Yet even these high‑tech helpers can get stuck in awkward corners or maze‑like hallways. This study introduces a new way to help robots choose better routes by combining a fast, traditional navigation method with the reasoning power of large language models, the same technology behind modern chatbots.

Why robots get stuck in tricky spaces

Most robots split navigation into two jobs. A global planner first sketches a rough route across a map, and then a local planner reacts to nearby walls, furniture, and people using live sensor data. A widely used local method, called the Dynamic Window Approach, considers only the speeds and turning rates the robot can actually reach in the next instant. It predicts where each option would carry the robot over a short horizon and picks a safe short‑term motion that also heads toward the goal. This works well in open spaces. However, because the method looks only a short distance ahead, it struggles in layouts with U‑shaped obstacles or tight mazes. In such cases, the robot can end up circling inside a dead end or hugging sharp corners, wasting time or failing to reach its goal at all.
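To make the idea of a "dynamic window" concrete, here is a minimal sketch of one Dynamic Window Approach step. It assumes a unicycle‑style robot, point obstacles, and hand‑picked scoring weights. The names (`DWAConfig`, `dwa_step`), the limits, and the three scoring terms (heading, clearance, speed) are illustrative assumptions in the spirit of the textbook method. They are not the study's code, which used a controller from standard open‑source robotics software.

```python
import math
from dataclasses import dataclass

@dataclass
class DWAConfig:
    # Hypothetical limits and weights, chosen only for illustration.
    max_speed: float = 0.5       # m/s
    max_yaw_rate: float = 1.5    # rad/s
    max_accel: float = 0.5       # m/s^2
    max_yaw_accel: float = 3.0   # rad/s^2
    dt: float = 0.1              # control period, s
    horizon: float = 2.0         # forward-simulation time, s
    v_samples: int = 11
    w_samples: int = 21
    robot_radius: float = 0.2    # m
    w_heading: float = 1.0
    w_clearance: float = 1.0
    w_speed: float = 0.5

def simulate(state, v, w, cfg):
    """Roll a unicycle model forward at constant (v, w) and return the poses."""
    x, y, th = state
    traj = []
    for _ in range(round(cfg.horizon / cfg.dt)):
        th += w * cfg.dt
        x += v * math.cos(th) * cfg.dt
        y += v * math.sin(th) * cfg.dt
        traj.append((x, y, th))
    return traj

def min_clearance(traj, obstacles):
    """Smallest distance between any predicted pose and any point obstacle."""
    return min((math.hypot(px - ox, py - oy)
                for px, py, _ in traj for ox, oy in obstacles), default=math.inf)

def dwa_step(state, v0, w0, goal, obstacles, cfg=None):
    """Return the best collision-free (v, w) command inside the dynamic window."""
    if cfg is None:
        cfg = DWAConfig()
    # Dynamic window: velocities reachable from (v0, w0) within one control period.
    v_lo = max(0.0, v0 - cfg.max_accel * cfg.dt)
    v_hi = min(cfg.max_speed, v0 + cfg.max_accel * cfg.dt)
    w_lo = max(-cfg.max_yaw_rate, w0 - cfg.max_yaw_accel * cfg.dt)
    w_hi = min(cfg.max_yaw_rate, w0 + cfg.max_yaw_accel * cfg.dt)
    best, best_score = (0.0, 0.0), -math.inf  # fall back to stopping
    for i in range(cfg.v_samples):
        v = v_lo + (v_hi - v_lo) * i / (cfg.v_samples - 1)
        for j in range(cfg.w_samples):
            w = w_lo + (w_hi - w_lo) * j / (cfg.w_samples - 1)
            traj = simulate(state, v, w, cfg)
            clearance = min_clearance(traj, obstacles)
            if clearance <= cfg.robot_radius:
                continue  # predicted collision: discard this command
            x, y, th = traj[-1]
            # Heading term: 1.0 when the final pose points straight at the goal.
            err = math.atan2(goal[1] - y, goal[0] - x) - th
            heading = 1.0 - abs(math.atan2(math.sin(err), math.cos(err))) / math.pi
            score = (cfg.w_heading * heading
                     + cfg.w_clearance * min(clearance, 1.0)
                     + cfg.w_speed * v / cfg.max_speed)
            if score > best_score:
                best, best_score = (v, w), score
    return best
```

Because each step only scores a two‑second look‑ahead, nothing in this loop can "see" that the straight‑ahead option leads into the closed end of a U‑shaped obstacle. That short horizon is the weakness the hybrid design targets.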

Letting language models think about space

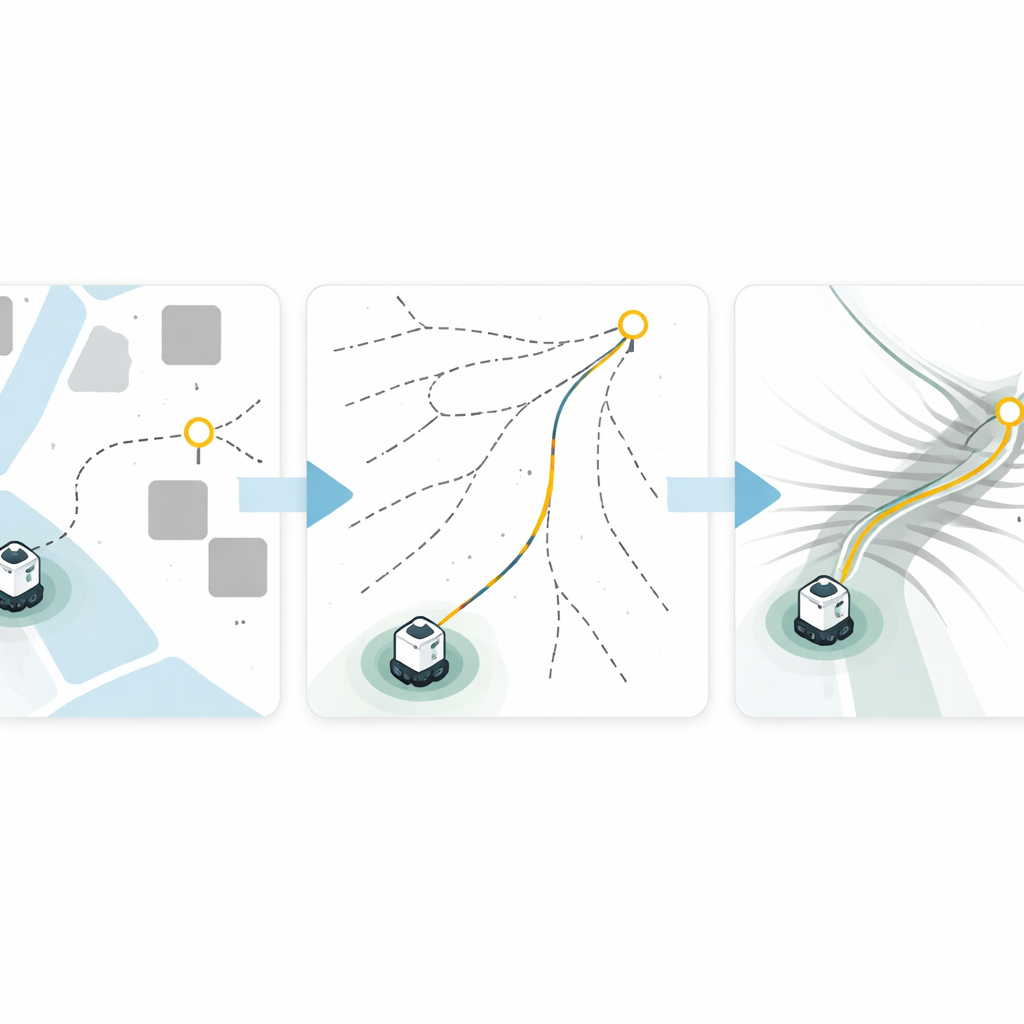

The authors propose adding a large language model (LLM) as a high‑level guide on top of the existing local controller. Instead of directly steering the robot, the LLM receives a description of the environment—either as coordinates of walls or as a simple map image—along with the robot’s start and goal locations. Using its pattern‑matching and reasoning skills, the LLM outputs a small list of intermediate “waypoints” that snake through key gaps and bottlenecks, like doorways or corridor turns. The familiar Dynamic Window Approach then handles the fine‑grained motion from one waypoint to the next using real‑time sensor readings, preserving safety and responsiveness while following the LLM’s broader guidance.
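The division of labour described above (waypoints from the LLM, moment‑to‑moment motion from the local controller) can be sketched as a simple loop. This is a hedged illustration, not the authors' implementation. `ask_llm_for_waypoints` is a hypothetical stub that returns a fixed answer instead of calling a real model, and `toward` is a toy straight‑line controller standing in for the Dynamic Window Approach. The switching tolerance `tol` is likewise an assumption.

```python
import math
from typing import Callable, List, Tuple

Point = Tuple[float, float]

def ask_llm_for_waypoints(walls, start: Point, goal: Point) -> List[Point]:
    """Hypothetical stand-in for the LLM call. A real system would describe the
    walls (or send a map image) plus start and goal, then parse the reply."""
    return [(-1.0, 2.0), (-1.0, 5.0), (3.0, 5.0)]  # fixed example answer

def toward(pos: Point, target: Point, max_step: float = 0.2) -> Point:
    """Toy local controller standing in for DWA: move at most max_step toward target."""
    dx, dy = target[0] - pos[0], target[1] - pos[1]
    dist = math.hypot(dx, dy)
    if dist <= max_step:
        return target
    return (pos[0] + max_step * dx / dist, pos[1] + max_step * dy / dist)

def run_hybrid(start: Point, goal: Point, walls,
               local_step: Callable[[Point, Point], Point] = toward,
               tol: float = 0.1, max_iters: int = 1000) -> List[Point]:
    """LLM waypoints give the big picture; the local controller handles each leg."""
    targets = ask_llm_for_waypoints(walls, start, goal) + [goal]
    pos, path = start, [start]
    for _ in range(max_iters):
        if not targets:
            break  # every waypoint and the goal have been reached
        pos = local_step(pos, targets[0])
        path.append(pos)
        if math.dist(pos, targets[0]) < tol:
            targets.pop(0)  # current waypoint reached: aim for the next one
    return path
```

Because the controller is passed in as `local_step`, a real DWA step (or another local planner) could replace the toy one without touching the waypoint logic.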

How the hybrid planner was built and tested

The team first validated this pipeline in a simple two‑dimensional grid world and then in a realistic three‑dimensional simulator using a TurtleBot3 robot. The LLM, accessed through an application programming interface, was given carefully crafted prompts so that it always returned clean lists of waypoints. The low‑level controller came from standard open‑source robotics software, making the overall design modular: in principle, different language models or local controllers could be swapped in without redesigning the whole system.
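The summary notes that the prompts were crafted so the model returned clean lists of waypoints. Below is a minimal sketch of such a prompt‑and‑parse step, assuming the model is asked for a JSON array of `[x, y]` pairs. The prompt wording, the wall‑segment encoding, and the names `build_prompt` and `parse_waypoints` are hypothetical. The study's actual prompts are not reproduced here.

```python
import json
import re
from typing import List, Tuple

Point = Tuple[float, float]

def build_prompt(walls, start: Point, goal: Point) -> str:
    """Hypothetical prompt template; the study's actual wording is not reproduced."""
    wall_text = "; ".join(f"({x1}, {y1})-({x2}, {y2})" for (x1, y1), (x2, y2) in walls)
    return (
        "You are planning a route for a mobile robot on a 2D map.\n"
        f"Wall segments: {wall_text}\n"
        f"Start: {tuple(start)}  Goal: {tuple(goal)}\n"
        "Reply with ONLY a JSON array of intermediate waypoints, "
        "for example [[1.0, 2.0], [3.5, 2.0]]."
    )

def parse_waypoints(reply: str) -> List[Point]:
    """Pull the first [[x, y], ...] array out of the reply and validate it."""
    match = re.search(r"\[\s*\[.*?\]\s*\]", reply, re.DOTALL)
    if match is None:
        raise ValueError("no waypoint list found in reply")
    waypoints = []
    for p in json.loads(match.group(0)):
        # Each entry must be a two-element list of real numbers (bools rejected).
        ok = (isinstance(p, list) and len(p) == 2 and
              all(isinstance(c, (int, float)) and not isinstance(c, bool) for c in p))
        if not ok:
            raise ValueError(f"malformed waypoint: {p!r}")
        waypoints.append((float(p[0]), float(p[1])))
    return waypoints
```

Validating the reply before it reaches the controller means a formatting slip fails loudly instead of sending the robot toward a meaningless coordinate.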

Beating dead ends and shaving off travel time

Across a series of tests, the hybrid “LLM‑DWA” method was compared with common baselines that pair a global Dijkstra planner with either the Dynamic Window Approach or an optimization‑heavy controller. In a U‑shaped obstacle course, the plain local planner failed to reach the goal, and the global‑plus‑local baseline collided with corners. The LLM‑guided method, by contrast, produced waypoints that steered the robot cleanly around the trap and completed the route. In three‑dimensional worlds—including a copy of the U‑shape, a complex maze, and a house‑like layout—the new framework often cut travel time roughly in half while maintaining similar path lengths, and it was the only method to solve the most complicated maze. Repeated trials showed that, despite the language model’s built‑in randomness, success rates and travel times remained stable.

Limits today and room to grow

The approach is not without drawbacks. Describing cluttered rooms to a language model using only numbers or a single overhead image can miss important details, sometimes leading to waypoints placed inside obstacles or ambiguous paths. The current system also asks the LLM for waypoints only once at the start, so it cannot yet rethink the route in the middle of a run when unexpected obstacles appear. The authors argue that tighter coupling between perception, geometry, and language—as well as calling the LLM again during navigation—could further boost reliability.

What this means for future robot helpers

Overall, the study shows that language models can act as a kind of high‑level “navigator’s brain,” sketching out sensible intermediate goals while proven low‑level controllers keep the robot safe moment to moment. By combining big‑picture reasoning with fast, physics‑aware motion planning, this hybrid design helps robots escape common traps and move more efficiently through challenging spaces. As multimodal language models grow better at understanding maps and scenes, such reasoning modules may become a standard part of robust, adaptable robot navigation systems.

Citation: Seo, J., Kim, E. & Choi, A.J. LLM-DWA: a hybrid path planning framework combining large language models with the dynamic window approach. Sci Rep 16, 9898 (2026). https://doi.org/10.1038/s41598-026-39524-1

Keywords: robot navigation, path planning, large language models, mobile robots, hybrid control