Clear Sky Science

Integrated spatio-temporal modeling with hybrid graph convolutions and the graph Fourier neural operator for traffic prediction

Why predicting traffic far ahead matters

Anyone who has sat in a sudden traffic jam knows that today’s navigation apps are still mostly reactive: they warn you when congestion has already formed. This paper explores how to look much further into the future—tens of minutes to hours—so that cities and drivers can avoid gridlock before it starts. The authors introduce a new artificial intelligence framework that learns how traffic ripples across entire road networks over time, doing so more accurately and efficiently than many current deep‑learning approaches.

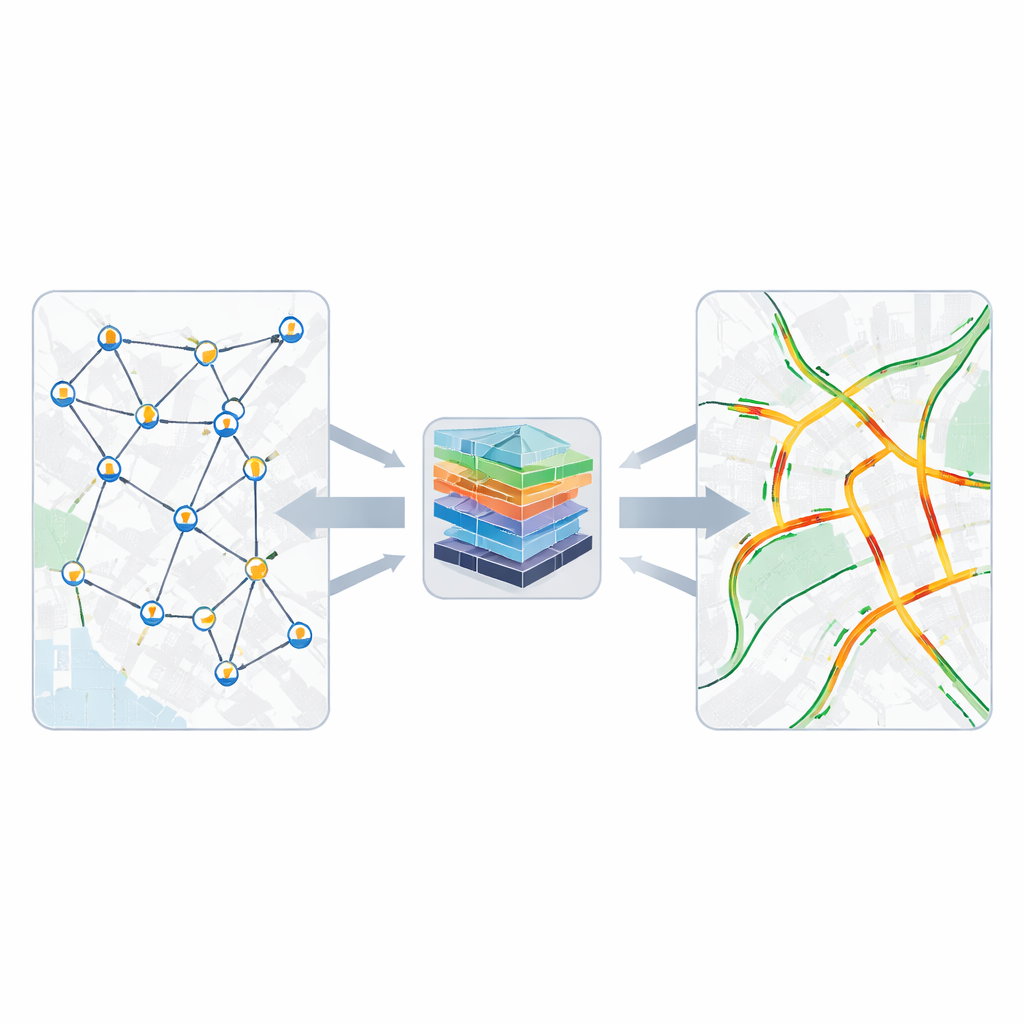

Seeing roads as a living network

Instead of viewing each road sensor as an isolated counter, the study treats the whole city as a network of connected points, much like a web of friends on a social platform. Each sensor’s readings are influenced not only by nearby roads but also by more distant, changing patterns such as commuting flows, events, and weather. Traditional methods either assume simple, fixed relationships or focus only on short stretches of history, which makes long‑term forecasting unreliable. By contrast, the new model is designed to learn both how traffic travels across the map and how it evolves over extended periods, capturing daily rush hours as well as rare disruptions.

Blending fixed maps with changing patterns

The first building block of the proposed system focuses on space: where roads and sensors are located and how they influence one another. The model starts from the physical layout of the road network, which provides a stable skeleton of who is connected to whom. On top of this, it learns an extra, flexible layer of connections that can change with the data itself, discovering hidden relationships such as roads that behave similarly even if they are not physically adjacent. A clever parameter‑sharing trick lets each sensor develop its own behavior pattern without exploding the number of model parameters, so the system remains compact enough for real‑world deployment.
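To make the idea concrete, here is a minimal NumPy sketch of one hybrid graph layer. It is an illustration under stated assumptions, not the authors' implementation: the learned connections are assumed to come from per-sensor embedding vectors (`node_emb`), the two graphs are simply averaged, and the parameter sharing is assumed to work by mixing a small pool of shared weight matrices (`weight_pool`). All function names, variable names, and sizes are hypothetical.

```python
import numpy as np

def row_softmax(scores):
    """Softmax over each row, shifted for numerical stability."""
    shifted = scores - scores.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def fixed_adjacency(road_links):
    """Row-normalized road-map adjacency with self-loops (the stable skeleton)."""
    with_loops = road_links + np.eye(road_links.shape[0])
    return with_loops / with_loops.sum(axis=1, keepdims=True)

def learned_adjacency(node_emb):
    """Data-driven adjacency: sensors with similar embeddings become linked."""
    return row_softmax(np.maximum(node_emb @ node_emb.T, 0.0))

def hybrid_graph_conv(x, road_links, node_emb, weight_pool):
    """One hybrid layer (assumed form).

    x: (N, F_in) sensor features, road_links: (N, N) physical map,
    node_emb: (N, d) sensor embeddings, weight_pool: (d, F_in, F_out).
    """
    # Average the messages passed over the fixed map and the learned graph.
    mixed = 0.5 * (fixed_adjacency(road_links) @ x + learned_adjacency(node_emb) @ x)
    # Parameter sharing: each sensor's weight matrix is a mix of d shared matrices.
    node_weights = np.einsum("nd,dio->nio", node_emb, weight_pool)
    return np.einsum("ni,nio->no", mixed, node_weights)

rng = np.random.default_rng(0)
n_sensors, f_in, f_out, emb_dim = 5, 4, 3, 2
# Hypothetical road map: five sensors in a line along one freeway.
road_links = np.diag(np.ones(n_sensors - 1), 1) + np.diag(np.ones(n_sensors - 1), -1)
x = rng.normal(size=(n_sensors, f_in))
node_emb = rng.normal(size=(n_sensors, emb_dim))
weight_pool = rng.normal(size=(emb_dim, f_in, f_out))

out = hybrid_graph_conv(x, road_links, node_emb, weight_pool)
shared_params = node_emb.size + weight_pool.size   # embeddings + shared pool
per_node_params = n_sensors * f_in * f_out         # one full matrix per sensor
print(out.shape, shared_params, per_node_params)
```

With these toy sizes the shared scheme needs 34 numbers where a separate weight matrix per sensor would need 60. The gap widens as the number of sensors grows, because each additional sensor adds only one short embedding vector.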

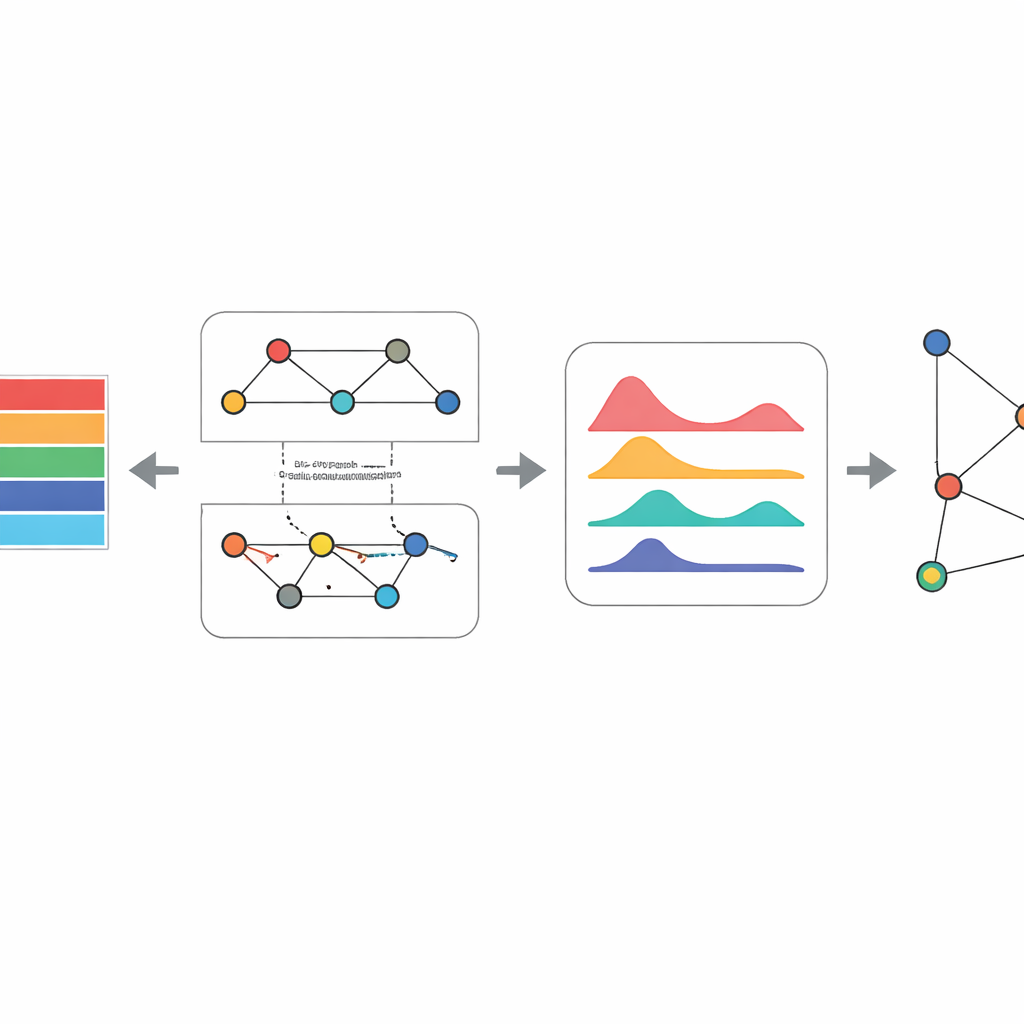

Turning time into compact signals

The next challenge is time: traffic measurements arrive every few minutes, creating long sequences for each sensor. Many popular deep‑learning models, such as recurrent networks and Transformers, handle this by stepping through each time point or comparing all time points with each other. That approach becomes slow and memory‑hungry as sequences grow longer. Here, the authors use a different idea. For each sensor, they compress its entire recent history into a single dense “token,” a numerical summary that still preserves the overall trend and variations. These tokens feed into the heart of the model, where both space and time are handled together.
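The compression step can be sketched as a single linear projection applied to each sensor's whole history window. This is a hedged illustration: the article does not say how the token is computed, so the projection, the window length of 12 readings, and the token size of 8 are all assumptions, and the random matrix stands in for weights that a real model would learn.

```python
import numpy as np

def sequence_to_token(history, proj, bias):
    """Map each sensor's full history to one dense token.

    history: (N, T) readings, proj: (T, D), bias: (D,)  ->  tokens: (N, D)
    """
    return history @ proj + bias

rng = np.random.default_rng(0)
n_sensors, n_steps, token_dim = 5, 12, 8   # hypothetical sizes
history = rng.normal(size=(n_sensors, n_steps))
# Random stand-in for a learned projection (assumption, not from the paper).
proj = rng.normal(size=(n_steps, token_dim)) / np.sqrt(n_steps)
bias = np.zeros(token_dim)

tokens = sequence_to_token(history, proj, bias)
print(tokens.shape)
```

After this step, later layers work with one token per sensor instead of one value per sensor per time step, so they never have to compare time points pairwise.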

Listening to traffic in the frequency world

At the core of the framework is a component called the Graph Fourier Neural Operator. Instead of examining raw traffic values directly, it transforms the network‑wide signals into a kind of frequency space, analogous to separating a piece of music into its bass, mid, and treble components. In this transformed view, repeating rush‑hour patterns and slower background changes become easier to isolate and adjust. The model learns which frequencies to emphasize or dampen and then converts the signals back to the road network, combining this global view with a simpler local path. This design captures long‑range, city‑wide dependencies while keeping the computational cost roughly proportional to the length of the input history, rather than growing quadratically with it, as happens when every time point is compared with every other.
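The transform, filter, and inverse-transform loop can be sketched with the standard graph Fourier transform, which uses the eigenvectors of the graph Laplacian as its frequency basis. Whether the paper uses exactly this basis is an assumption, as are the function names, the per-mode gains (`spectral_gain`), and the linear local path (`local_weight`). The sketch also assumes a symmetric adjacency matrix.

```python
import numpy as np

def graph_fourier_basis(adj):
    """Eigen-decomposition of the Laplacian L = D - A for a symmetric adjacency.

    Eigenvalues come back in ascending order, so the first columns of the
    basis are the smoothest, lowest-frequency patterns on the network.
    """
    laplacian = np.diag(adj.sum(axis=1)) - adj
    eigvals, eigvecs = np.linalg.eigh(laplacian)
    return eigvals, eigvecs

def spectral_layer(tokens, basis, spectral_gain, local_weight):
    """Global spectral path plus a simple local path (assumed form).

    tokens: (N, D), basis: (N, N), spectral_gain: (K, D), local_weight: (D, D).
    Only the K lowest-frequency modes are kept.
    """
    n_modes = spectral_gain.shape[0]
    modes = basis[:, :n_modes]                    # (N, K)
    coeffs = modes.T @ tokens                     # forward graph Fourier transform
    filtered = modes @ (spectral_gain * coeffs)   # emphasize or dampen, then invert
    return filtered + tokens @ local_weight       # add the local path

rng = np.random.default_rng(0)
n_sensors, token_dim = 5, 8
# Hypothetical road map: five sensors in a line along one freeway.
adj = np.diag(np.ones(n_sensors - 1), 1) + np.diag(np.ones(n_sensors - 1), -1)
tokens = rng.normal(size=(n_sensors, token_dim))
eigvals, basis = graph_fourier_basis(adj)

# Sanity check: all modes kept, unit gains, no local path -> input is recovered.
identity_out = spectral_layer(
    tokens, basis, np.ones((n_sensors, token_dim)), np.zeros((token_dim, token_dim))
)

# Low-pass example: keep only the two smoothest network-wide patterns.
low_pass_out = spectral_layer(
    tokens, basis, np.ones((2, token_dim)), np.zeros((token_dim, token_dim))
)
print(np.allclose(identity_out, tokens), low_pass_out.shape)
```

In a trained model the gains and the local weights would be learned rather than fixed. The eigen-decomposition depends only on the graph, so for a fixed road map it can be computed once and reused for every forecast.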

Proving accuracy and efficiency on real data

To test their approach, the researchers evaluated it on four standard freeway datasets from California’s traffic monitoring system. They compared their framework against a wide range of strong competitors, including recent Transformer architectures, state‑space models such as Mamba, and other graph‑based traffic predictors. Across different forecast horizons—from short‑term (about an hour) out to much longer windows—the new model consistently produced lower errors. It also used fewer parameters and less memory, and trained faster than attention‑heavy or strictly sequential baselines. Careful ablation and sensitivity studies showed that each piece of the design—the hybrid graph module, the sequence‑as‑token step, and the spectral operator—contributes meaningfully to this performance.

What this means for everyday travel

In practical terms, the study shows that it is possible to build traffic prediction systems that see further ahead without demanding enormous computing resources. By weaving together knowledge of the road map, observed data patterns, and a frequency‑based view of time, the proposed framework offers more stable and accurate long‑term forecasts than many state‑of‑the‑art alternatives. For city planners and intelligent transportation systems, this could translate into better timing of signals, smarter routing, and more resilient responses to accidents and special events—bringing us closer to cities where traffic is managed proactively rather than reactively.

Citation: Hosseini, SM., Rahmatinia, S.M. & Hosseini-Seno, SA. Integrated spatio-temporal modeling with hybrid graph convolutions and the graph fourier neural operator for traffic prediction. Sci Rep 16, 12945 (2026). https://doi.org/10.1038/s41598-026-38563-y

Keywords: traffic forecasting, graph neural networks, smart cities, spatio-temporal modeling, Fourier neural operator