Clear Sky Science · en

Spatial-spectral resolution analysis using drone hyperspectral and satellite multispectral imagery for shallow coastal water monitoring

Why this coastal study matters

Shallow coastal waters are home to seagrass meadows, reefs, and algae beds that protect shorelines, support fisheries, and store carbon. Yet these habitats are hard to map and monitor frequently using boats alone. This study shows how flying a hyperspectral camera on a drone and combining it with satellite-style views can deliver detailed, repeatable maps of water depth and seafloor habitats, helping communities manage fragile coasts under growing human and climate pressures.

Looking at the sea from above

The researchers focused on Las Canteras beach, an urban shore in Gran Canaria protected for its rich marine life but heavily used by residents and tourists. A natural rock bar calms the water and makes the seabed clearly visible from above, ideal for optical remote sensing. Historically, the area hosted extensive seagrass meadows, which have largely vanished as coastal construction altered sand movement. Today, patches of green, brown, and red algae, along with sand and rock, form a mosaic on the seafloor that reflects both natural change and human impact.

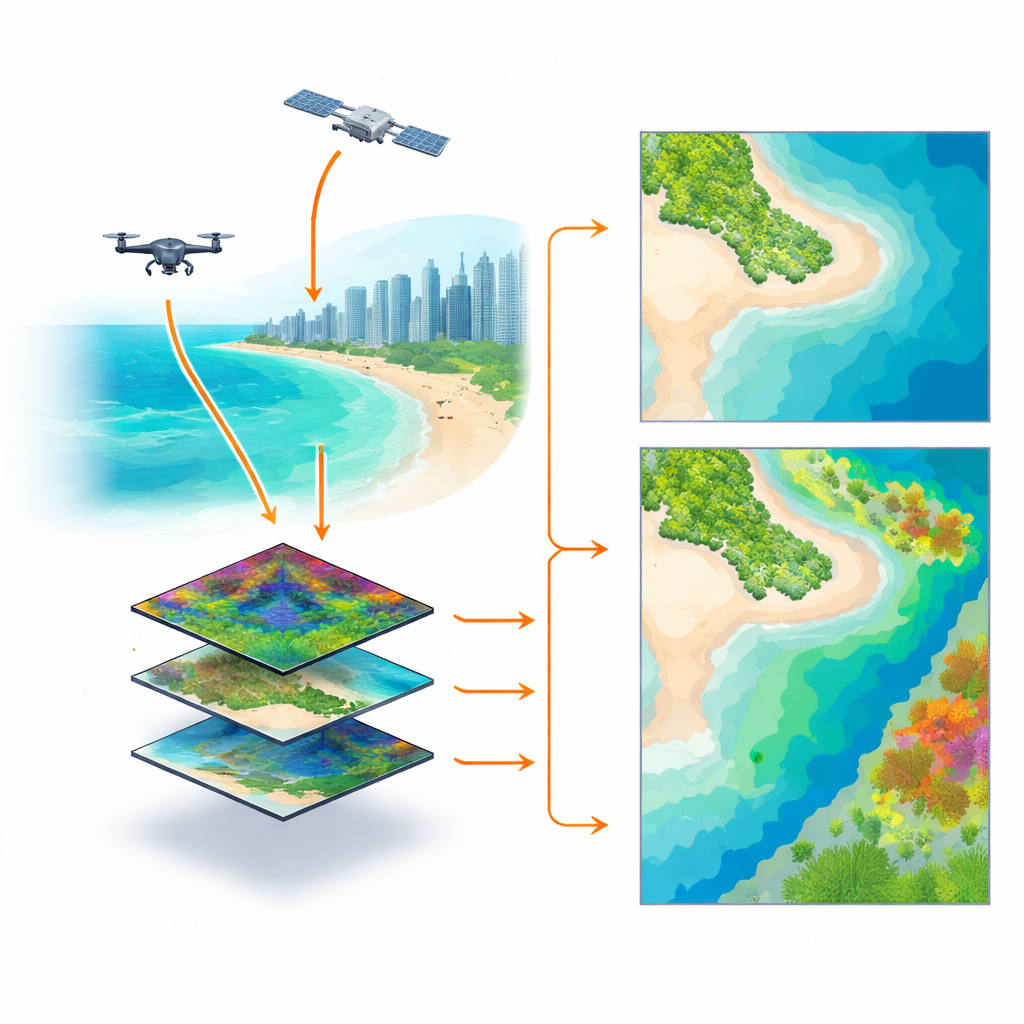

Testing different “eyes” on the water

At the heart of the work is a clever experiment. Instead of comparing different cameras flown or orbited at different times, the team started from one very detailed drone image captured by a hyperspectral sensor (97 narrow color bands at 10-centimeter resolution). From this single reference, they mathematically “downgraded” the data to mimic a commercial satellite with 8 broader bands and a simple RGB camera with only 3 bands. Each of the three spectral versions (hyperspectral, multispectral, and RGB) was then prepared at both a fine (10 cm) and a coarser (2 m) pixel size. This produced six controlled versions of the same scene, all free from differences in weather, water clarity, or tides. They then used these images to estimate water depth (bathymetry) and classify seafloor types (vegetation, sand, and rock) using a mix of well-known formulas and modern machine-learning methods.
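The summary does not say exactly how the bands were simulated. The sketch below shows one common way to do this kind of "downgrading". It assumes each broad band can be approximated by a Gaussian spectral response (real sensors publish their own response curves) and that coarser pixels are formed by simple block averaging. The 5 nm wavelength grid, the band center, the band width, and the helper names are all hypothetical and chosen only for illustration.

```python
import math

def gaussian_srf(wavelengths, center, fwhm):
    """Assumed Gaussian spectral response of one broad band (all values in nm)."""
    sigma = fwhm / (2.0 * math.sqrt(2.0 * math.log(2.0)))
    return [math.exp(-0.5 * ((w - center) / sigma) ** 2) for w in wavelengths]

def simulate_band(spectrum, wavelengths, center, fwhm):
    """Broad-band value as the response-weighted mean of the narrow bands."""
    weights = gaussian_srf(wavelengths, center, fwhm)
    return sum(w * r for w, r in zip(weights, spectrum)) / sum(weights)

def block_average(image, factor):
    """Coarsen a 2-D grid by averaging non-overlapping factor x factor blocks.

    Edge rows/columns that do not fill a complete block are dropped.
    """
    rows, cols = len(image), len(image[0])
    coarse = []
    for i in range(0, rows - rows % factor, factor):
        coarse_row = []
        for j in range(0, cols - cols % factor, factor):
            block = [image[y][x]
                     for y in range(i, i + factor)
                     for x in range(j, j + factor)]
            coarse_row.append(sum(block) / len(block))
        coarse.append(coarse_row)
    return coarse

# Hypothetical 97-band grid: 400-880 nm in 5 nm steps.
wavelengths = [400 + 5 * k for k in range(97)]
# Toy reflectance spectrum for one pixel (not real data).
spectrum = [0.02 + 0.0001 * (w - 400) for w in wavelengths]
# Hypothetical green band centred at 545 nm with a 70 nm width.
green = simulate_band(spectrum, wavelengths, center=545.0, fwhm=70.0)

# 10 cm -> 2 m means averaging 20 x 20 blocks of fine pixels.
fine = [[float(x // 20 + 2 * (y // 20)) for x in range(40)] for y in range(40)]
coarse = block_average(fine, 20)
```

Block averaging is the simplest choice for spatial degradation; a more faithful simulation would also model the target sensor's blur (point spread function) before resampling.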

Reading depth and habitats from color

To recover depth, the study compared an established mathematical approach that relates light in two color bands to depth with a more flexible machine-learning model that learns patterns from many bands at once. For seafloor habitats, they trained two types of classifiers, support vector machines and feedforward neural networks, both guided by in-water surveys and seabed photos. Across all tests, the drone-based hyperspectral data gave the most accurate results, with water depth errors as low as about 15 centimeters and around 94% correct habitat classification. Multispectral imagery, similar to that from common commercial satellites, performed nearly as well, offering a strong compromise between detail, coverage, and cost. The simpler RGB version did an acceptable job for depth but struggled to reliably separate bottom types in this complex habitat, especially similar algae species.
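The two-band approach is not named in this summary. A widely used method of this kind is the log-ratio model of Stumpf and colleagues, in which depth is modeled as z = m1 · ln(n·R_blue) / ln(n·R_green) - m0, where R are water reflectances, n is a fixed constant that keeps both logarithms positive, and m1 and m0 are fitted against known depths. The sketch below assumes that model, a blue/green band pair, n = 1000, and made-up calibration points; the function names are hypothetical.

```python
import math

def log_ratio(r_blue, r_green, n=1000.0):
    """Ratio of log-scaled reflectances; n (assumed 1000) keeps both logs positive."""
    return math.log(n * r_blue) / math.log(n * r_green)

def fit_linear(x, z):
    """Least-squares fit of z = m1 * x - m0 against known depths; returns (m1, m0)."""
    k = len(x)
    mean_x, mean_z = sum(x) / k, sum(z) / k
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxz = sum((xi - mean_x) * (zi - mean_z) for xi, zi in zip(x, z))
    m1 = sxz / sxx
    m0 = m1 * mean_x - mean_z
    return m1, m0

def rmse(predicted, measured):
    """Root-mean-square error, one common way to summarize depth errors."""
    return math.sqrt(sum((p - m) ** 2 for p, m in zip(predicted, measured)) / len(measured))

# Hypothetical calibration points: log-ratio values and surveyed depths (m).
ratios = [1.0, 1.1, 1.2, 1.3]
depths = [1.0, 2.0, 3.0, 4.0]
m1, m0 = fit_linear(ratios, depths)
predicted = [m1 * x - m0 for x in ratios]
error = rmse(predicted, depths)
```

The appeal of the ratio is that only two coefficients need calibrating, which is why it works even with RGB data; a machine-learning regressor instead learns a more flexible mapping from all available bands.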

What matters more: sharpness or color richness?

One of the key questions was whether finer pixels or richer color information makes the bigger difference. For depth, the answer is surprising: changing the pixel size from 10 cm to 2 m barely affected accuracy. Because depth estimates rely mainly on how light fades as it travels through more water, very high spatial detail adds little when the water is only a few meters deep and the bottom varies relatively smoothly in depth. For habitat mapping, however, both spatial and spectral detail matter more. Finer pixels reduce the mixing of sand, rock, and plants within each pixel, making classes easier to separate. And when the goal is to tease apart similar algae types, having more spectral bands clearly helps. The neural network took best advantage of this extra information, outperforming the more traditional support vector machine classifier.
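The effect of pixel size on mixing can be illustrated with a toy label map. The sketch below uses a hypothetical helper, mixed_fraction, and a made-up sand/rock boundary to count how many coarse blocks would contain more than one bottom type if 10 cm pixels were grouped into 2 m pixels. It only illustrates the idea of mixed pixels; it is not the classification procedure used in the study.

```python
def mixed_fraction(labels, factor):
    """Share of factor x factor blocks that contain more than one class."""
    rows, cols = len(labels), len(labels[0])
    total = mixed = 0
    for i in range(0, rows - rows % factor, factor):
        for j in range(0, cols - cols % factor, factor):
            classes = {labels[y][x]
                       for y in range(i, i + factor)
                       for x in range(j, j + factor)}
            total += 1
            if len(classes) > 1:
                mixed += 1
    return mixed / total

# Toy 40 x 40 map of 10 cm pixels: sand on the left, rock from column 30 on.
labels = [["sand" if x < 30 else "rock" for x in range(40)] for _ in range(40)]
at_10cm = mixed_fraction(labels, 1)   # every pixel is pure
at_2m = mixed_fraction(labels, 20)    # the right-hand 2 m blocks straddle the edge
```

In a real mosaic of algae patches, sand, and rock, boundaries are far more frequent than in this toy map, so the share of mixed 2 m pixels can be much higher.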

Tracking a worrying loss of marine greenery

Beyond the methods test, the team also compared a true satellite image from 2016 with the 2023 drone data, adjusted to match the satellite’s coarser resolution. They estimated a loss of about 7,200 square meters of marine vegetation, much of it likely from meadows of the green alga Cymopolia barbata. Taken together with the already documented disappearance of the historic seagrass, a local warming trend in sea-surface temperature, heavy recreational use, and periodic water-quality issues, the results suggest that this urban reef is under significant stress.
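Change estimates like this typically come from comparing two classified maps on a common grid. The sketch below shows that bookkeeping, assuming both maps are co-registered on the same grid and using a hypothetical 2 m pixel. At that pixel size each pixel covers 4 square meters, so 7,200 square meters would correspond to about 1,800 pixels that switched from vegetation to another class. The function name and class labels are hypothetical.

```python
def vegetation_loss_area(before, after, pixel_size_m, veg="vegetation"):
    """Area (m^2) of pixels labelled vegetation in `before` but not in `after`.

    Assumes both maps are co-registered class grids of identical shape.
    """
    lost_pixels = sum(
        1
        for row_before, row_after in zip(before, after)
        for b, a in zip(row_before, row_after)
        if b == veg and a != veg
    )
    return lost_pixels * pixel_size_m ** 2

# Toy 2 x 2 example on a hypothetical 2 m grid.
before = [["vegetation", "vegetation"], ["sand", "vegetation"]]
after = [["sand", "vegetation"], ["sand", "rock"]]
loss = vegetation_loss_area(before, after, pixel_size_m=2.0)
```

This counts only pixels that lost vegetation; a net figure would also subtract pixels that gained it.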

What this means for coasts and communities

For non-specialists, the message is twofold. First, modern imaging from drones and satellites, analyzed with machine learning, can now deliver accurate, repeatable maps of shallow-water depth and seafloor life without constant boat surveys. This makes it practical to watch how coastal habitats change over years or even seasons. Second, the case of Las Canteras shows why such monitoring is urgent: valuable underwater “forests” can shrink quietly under the combined weight of warming seas and local human pressure. The approach presented here offers a practical toolkit for cities and conservation agencies to keep a closer eye on their underwater neighborhoods and act before key habitats are lost.

Citation: Mederos-Barrera, A., Eugenio, F. & Marcello, J. Spatial-spectral resolution analysis using drone hyperspectral and satellite multispectral imagery for shallow coastal water monitoring. Sci Rep 16, 14511 (2026). https://doi.org/10.1038/s41598-026-38166-7

Keywords: coastal mapping, drone hyperspectral imaging, shallow water bathymetry, benthic habitat monitoring, marine vegetation loss