Joint block estimation and attention-based long short-term memory network for doppler shift mitigation in UWA communication systems

Listening Clearly Underwater

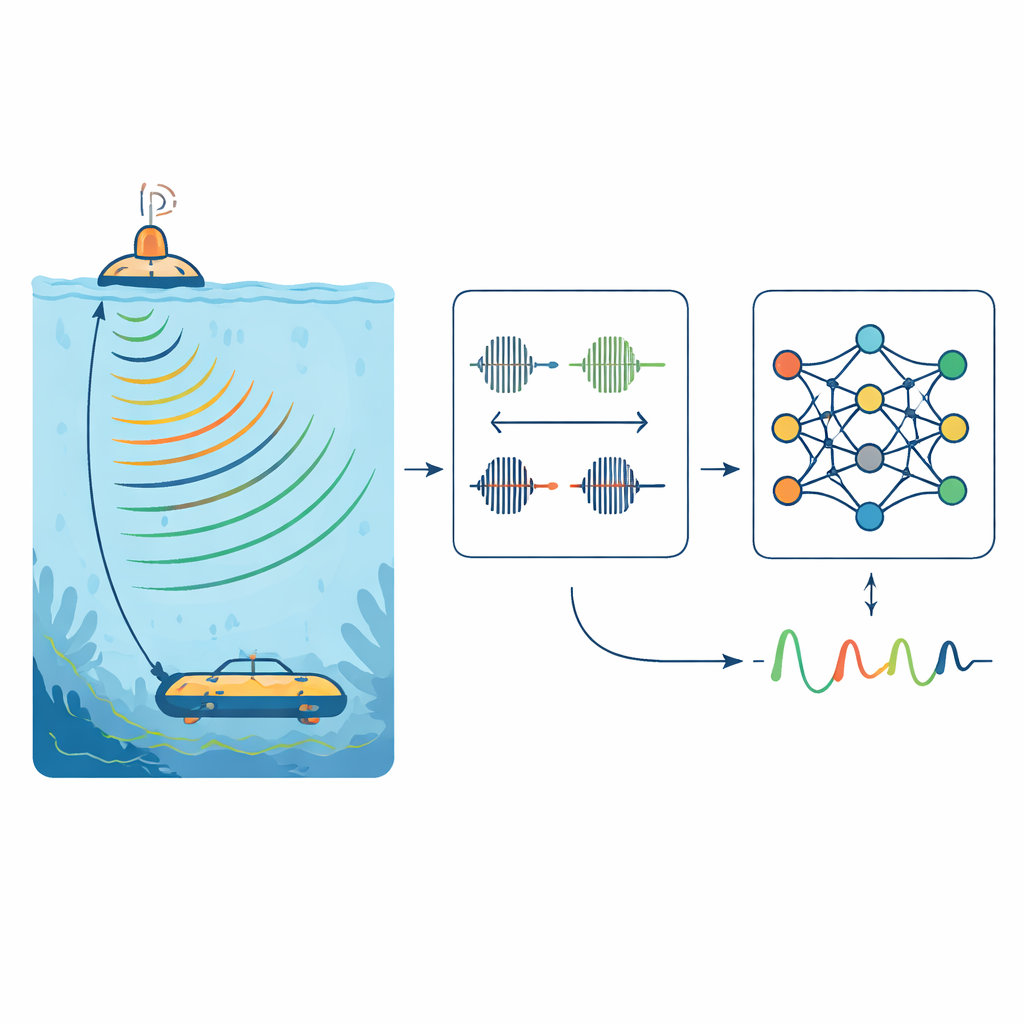

As oceans fill with sensors, robots, and research instruments, sending information reliably through water has become increasingly important. Yet underwater sound signals are easily warped when waves, currents, or moving vehicles shift their frequency, a phenomenon known as Doppler shift. This paper introduces a two-stage way to correct those shifts for orthogonal frequency-division multiplexing (OFDM), a popular signaling scheme in underwater acoustic (UWA) communication. It combines a traditional physics-based correction with a modern attention-enhanced neural network to keep underwater messages clear even in rough, noisy seas.

Why Underwater Signals Get Garbled

Unlike radio waves in air, sound in the ocean must navigate a constantly changing environment. When a ship, buoy, or underwater vehicle moves, or when wind and waves reshape the sea surface, acoustic signals are squeezed or stretched in time, which shifts their frequencies. In OFDM systems, where many closely spaced tones carry data side by side, even small shifts break the orthogonality that keeps those tones from interfering with each other. The result is crosstalk between subcarriers (inter-carrier interference) and higher error rates in the received data stream. Existing correction methods can estimate these Doppler shifts, but they often trade accuracy for simplicity, or fail when motion is fast, the channel changes quickly, or the signal-to-noise ratio is low.
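To make the crosstalk effect concrete, the following minimal sketch measures how much of one tone's energy spills into neighboring frequency bins when a frequency offset is present. The function name `ici_leakage`, the 64-subcarrier size, and the choice of a single active tone are illustrative assumptions, not details from the paper.

```python
import numpy as np

def ici_leakage(n_sub=64, active=10, eps=0.0):
    """Fraction of one subcarrier's energy that lands in other FFT bins.

    eps is a frequency offset in units of the subcarrier spacing
    (an assumed, simplified stand-in for residual Doppler).
    """
    n = np.arange(n_sub)
    # A single OFDM tone sitting exactly on bin `active`.
    tone = np.exp(2j * np.pi * active * n / n_sub)
    # The same tone after a frequency offset of eps subcarrier spacings.
    shifted = tone * np.exp(2j * np.pi * eps * n / n_sub)
    power = np.abs(np.fft.fft(shifted) / n_sub) ** 2
    # Everything that is not in the intended bin is interference.
    return 1.0 - power[active] / power.sum()

for eps in (0.0, 0.1, 0.3):
    print(f"offset {eps:.1f} spacings -> leaked fraction {ici_leakage(eps=eps):.4f}")
```

With no offset the tone stays in its own bin. An offset of a tenth of the subcarrier spacing already leaks roughly 3 percent of the energy, and the leakage grows quickly from there.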

Blending a Simple Ruler with a Smart Learner

The authors propose a two-step strategy that marries a simple, interpretable measurement with a powerful learning model. First, they insert special probe sounds, linear frequency modulation (LFM) chirps, at the beginning and end of each data frame. Because these chirps spread their energy across a wide range of frequencies, they remain easy to match even after the ocean has distorted them. By measuring how much the interval between the received chirps is stretched or compressed in time, the system obtains a coarse estimate of the overall Doppler factor and resamples the incoming data to undo most of the distortion. This step is relatively inexpensive to compute and robust to noise, and it reduces a complicated wideband distortion to a small residual frequency offset that is easier to handle.
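The coarse step can be sketched as follows, under stated assumptions. The sample rate, chirp band, frame layout, the Doppler model `r[n] = s((1 + a) n)`, and the use of linear interpolation as the resampler are all illustrative choices rather than the paper's settings. Peaks are picked at integer-sample resolution, so the estimate is deliberately coarse.

```python
import numpy as np

FS = 16_000  # assumed sample rate in Hz

def lfm_chirp(f0=1000.0, f1=3000.0, duration=0.025, fs=FS):
    """Complex LFM chirp sweeping f0 -> f1 (assumed band and length)."""
    t = np.arange(int(duration * fs)) / fs
    k = (f1 - f0) / duration  # sweep rate in Hz/s
    return np.exp(2j * np.pi * (f0 * t + 0.5 * k * t**2))

def build_frame(chirp, n_data=4000, guard=100, seed=0):
    """Frame = guard | chirp | random data | chirp | guard (assumed layout)."""
    rng = np.random.default_rng(seed)
    data = (rng.standard_normal(n_data) + 1j * rng.standard_normal(n_data)) / np.sqrt(2)
    pad = np.zeros(guard, dtype=complex)
    frame = np.concatenate([pad, chirp, data, chirp, pad])
    return frame, len(chirp) + n_data  # frame and transmitted chirp spacing

def time_scale(x, factor):
    """Return y[n] = x(factor * n) using linear interpolation."""
    n_out = int((len(x) - 1) / factor) + 1
    query = np.arange(n_out) * factor
    grid = np.arange(len(x))
    return np.interp(query, grid, x.real) + 1j * np.interp(query, grid, x.imag)

def chirp_spacing(rx, chirp):
    """Distance in samples between the two matched-filter peaks."""
    corr = np.abs(np.correlate(rx, chirp, mode="valid"))
    mid = len(corr) // 2
    first = np.argmax(corr[:mid])          # leading chirp
    second = mid + np.argmax(corr[mid:])   # trailing chirp
    return second - first

def estimate_doppler(rx, chirp, spacing_tx):
    """Coarse Doppler factor from how much the chirp spacing shrank or grew."""
    return spacing_tx / chirp_spacing(rx, chirp) - 1.0

chirp = lfm_chirp()
frame, spacing_tx = build_frame(chirp)
a_true = 0.01                                     # assumed Doppler factor
rx = time_scale(frame, 1.0 + a_true)              # simulate compression in time
a_hat = estimate_doppler(rx, chirp, spacing_tx)
corrected = time_scale(rx, 1.0 / (1.0 + a_hat))   # resample to undo it
print(f"true a = {a_true}, estimated a = {a_hat:.5f}")
print("chirp spacing after resampling:", chirp_spacing(corrected, chirp))
```

After resampling, the spacing between the chirps returns to roughly its transmitted value. Whatever mismatch remains is the small residual offset that the second stage targets.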

Teaching a Neural Network to Fine‑Tune the Signal

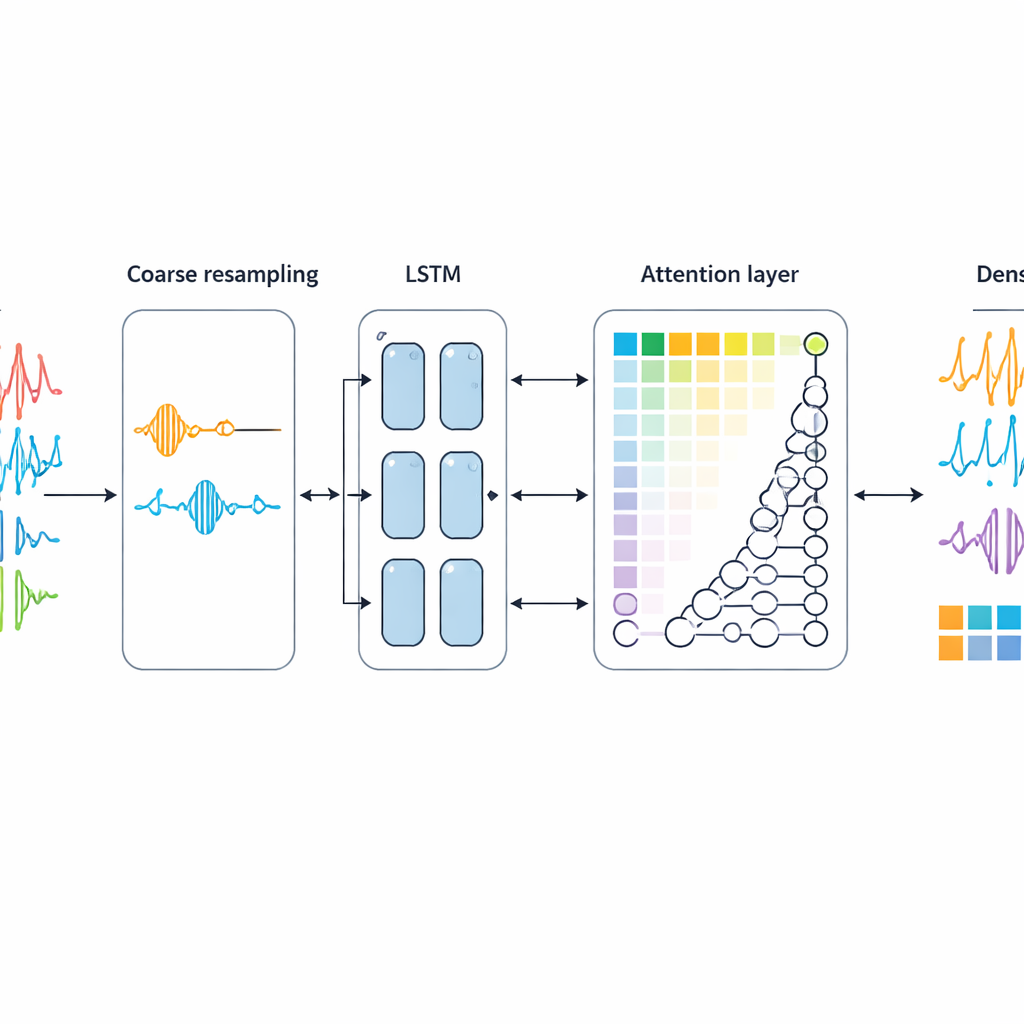

After this first correction, a subtler residue remains: a small carrier frequency offset that still twists the phase of the OFDM tones. To remove it, the authors treat the problem as a pattern-recognition task on time series. They feed the resampled OFDM symbols into a neural network built from long short-term memory (LSTM) units enhanced with an attention mechanism. The input is not the full complex waveform but primarily the phase of each subcarrier, where Doppler-induced distortions show up most clearly. The LSTM cells learn how these phases evolve across consecutive symbols, while the attention layer highlights the most informative time steps, mimicking how human perception focuses on key details. The network outputs a single normalized offset value, which is then used to apply a final phase correction to all subcarriers.
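The data flow described above (subcarrier phases in, LSTM over symbols, attention pooling over time steps, one scalar out) can be sketched as a NumPy forward pass. This is not the authors' architecture: the layer sizes, the additive form of the attention scores, the `0.5 * tanh` output squashing, and every parameter name are assumptions. The weights are random, so the predicted offset is meaningless until the model is trained.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_states(x, W, U, b):
    """Run one LSTM layer over x of shape (T, d_in); return states (T, d_h)."""
    d_h = U.shape[0]
    h, c = np.zeros(d_h), np.zeros(d_h)
    states = []
    for x_t in x:
        # Input, forget, candidate, and output pre-activations in one product.
        i, f, g, o = np.split(x_t @ W + h @ U + b, 4)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        states.append(h)
    return np.stack(states)

def attention_pool(H, Wa, v):
    """Additive attention over time steps; returns (context, weights)."""
    scores = np.tanh(H @ Wa) @ v
    weights = np.exp(scores - scores.max())  # numerically stable softmax
    weights /= weights.sum()
    return weights @ H, weights

def predict_offset(phases, p):
    """Map a (T, K) array of subcarrier phases to one normalized offset."""
    H = lstm_states(phases, p["W"], p["U"], p["b"])
    context, weights = attention_pool(H, p["Wa"], p["v"])
    # Assumed output range: offset normalized to (-0.5, 0.5) subcarrier spacings.
    eps = 0.5 * np.tanh(context @ p["w_out"] + p["b_out"])
    return eps, weights

rng = np.random.default_rng(0)
T, K, d_h, d_a = 8, 16, 12, 6  # symbols, subcarriers, hidden size, attention size
params = {
    "W": 0.1 * rng.standard_normal((K, 4 * d_h)),
    "U": 0.1 * rng.standard_normal((d_h, 4 * d_h)),
    "b": np.zeros(4 * d_h),
    "Wa": 0.1 * rng.standard_normal((d_h, d_a)),
    "v": 0.1 * rng.standard_normal(d_a),
    "w_out": 0.1 * rng.standard_normal(d_h),
    "b_out": 0.0,
}
symbols = rng.standard_normal((T, K)) + 1j * rng.standard_normal((T, K))
eps_hat, attn = predict_offset(np.angle(symbols), params)
print("attention weights per symbol:", np.round(attn, 3))
print("untrained offset prediction:", float(eps_hat))
```

The attention weights sum to one across the symbols, so they can be read as how much each time step contributes to the final estimate.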

Testing in Virtual Oceans

To see how well their hybrid method works, the researchers simulate underwater channels using a widely used acoustic propagation model that includes both shallow coastal waters and deep ocean conditions. They generate tens of thousands of synthetic OFDM frames with varying Doppler shifts and noise levels, using part of this data for training and the rest for testing. They compare their attention-based LSTM approach, preceded by the LFM-based coarse step, against several alternatives: purely traditional methods based on cyclic prefixes or unused subcarriers, and neural networks without recurrent layers or without attention. Across a range of signal-to-noise ratios and Doppler strengths, their method produces lower frequency-estimation errors and significantly fewer bit errors, especially in challenging low-SNR or high-Doppler scenarios.

Balancing Performance and Practicality

Although the new approach requires more computation than the simplest classical techniques, it remains practical when the neural network is trained offline and only used for prediction at run time. The authors analyze the number of operations for each competing method and show that while their model is heavier than search-based estimators at coarse resolution, it avoids the explosion of cost that would come from making those searches very fine. It also uses fewer parameters than a competing attention-based network, which is important given the scarcity of labeled underwater data. Overall, the design strikes a balance between accuracy, robustness, and efficiency that is suitable for real-world embedded systems on underwater platforms.
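The cost argument for search-based estimators can be illustrated with a generic null-subcarrier grid search. This is a textbook-style sketch, not the baseline as implemented in the paper. The subcarrier count, null positions, QPSK data, and noise-free channel are assumptions. Each candidate offset costs one derotation and one FFT, so the work grows in proportion to how fine the grid is.

```python
import numpy as np

N_SUB = 64
NULL_IDX = np.array([0, 16, 32, 48])  # assumed positions of unused subcarriers

def make_rx_symbol(eps, seed=0):
    """One noise-free OFDM symbol with a normalized frequency offset eps."""
    rng = np.random.default_rng(seed)
    X = (rng.choice([-1.0, 1.0], N_SUB) + 1j * rng.choice([-1.0, 1.0], N_SUB)) / np.sqrt(2)
    X[NULL_IDX] = 0.0  # leave the null subcarriers empty
    x = np.fft.ifft(X)
    n = np.arange(N_SUB)
    return x * np.exp(2j * np.pi * eps * n / N_SUB)

def grid_search_cfo(rx, n_candidates):
    """Pick the candidate offset that leaves the least energy on the nulls.

    Returns (estimate, number of FFTs performed).
    """
    n = np.arange(N_SUB)
    grid = np.linspace(-0.5, 0.5, n_candidates)
    costs = np.empty(n_candidates)
    for i, cand in enumerate(grid):
        # Undo the candidate offset, then check what leaked into the nulls.
        Y = np.fft.fft(rx * np.exp(-2j * np.pi * cand * n / N_SUB))
        costs[i] = np.sum(np.abs(Y[NULL_IDX]) ** 2)
    return grid[np.argmin(costs)], n_candidates

rx = make_rx_symbol(eps=0.13)
coarse, coarse_ffts = grid_search_cfo(rx, 11)    # grid step 0.1
fine, fine_ffts = grid_search_cfo(rx, 101)       # grid step 0.01
print(f"coarse: {coarse:+.2f} using {coarse_ffts} FFTs")
print(f"fine:   {fine:+.2f} using {fine_ffts} FFTs")
```

Making the grid ten times finer buys ten times the resolution at ten times the FFT count, whereas a trained network's inference cost is fixed regardless of the desired precision.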

Clearer Conversations Beneath the Waves

In essence, this work shows that combining a straightforward physical measurement with a focused, sequence-aware neural network can dramatically improve how well underwater communication systems cope with motion and turbulence. The coarse LFM block estimation handles the bulk of the Doppler distortion, while the attention-based LSTM cleans up the remaining frequency offset by learning subtle patterns in the signal’s phase. Together, they cut error rates and make digital conversations beneath the waves more dependable, pointing the way toward smarter acoustic links for ocean science, offshore engineering, and autonomous marine vehicles.

Citation: Zeng, Q., Guo, T., Peng, G. et al. Joint block estimation and attention-based long short-term memory network for doppler shift mitigation in UWA communication systems. Sci Rep 16, 11328 (2026). https://doi.org/10.1038/s41598-026-38112-7

Keywords: underwater acoustic communication, Doppler shift compensation, OFDM, deep learning, LSTM attention network