Clear Sky Science

Deep learning techniques for crop classification in complex agricultural landscapes

Why smarter crop maps matter

As climate change, water shortages, and rising food demand put pressure on farmers, knowing exactly what is growing where, and how well it is growing, has become essential. This study shows how a new blend of satellite imagery and advanced deep learning can more accurately tell different crops apart in crowded, mixed fields. By teaching computers to pay special “attention” to key moments in a plant’s growth, the researchers move a step closer to real-time, field‑level crop monitoring that can support better yields and more sustainable farming.

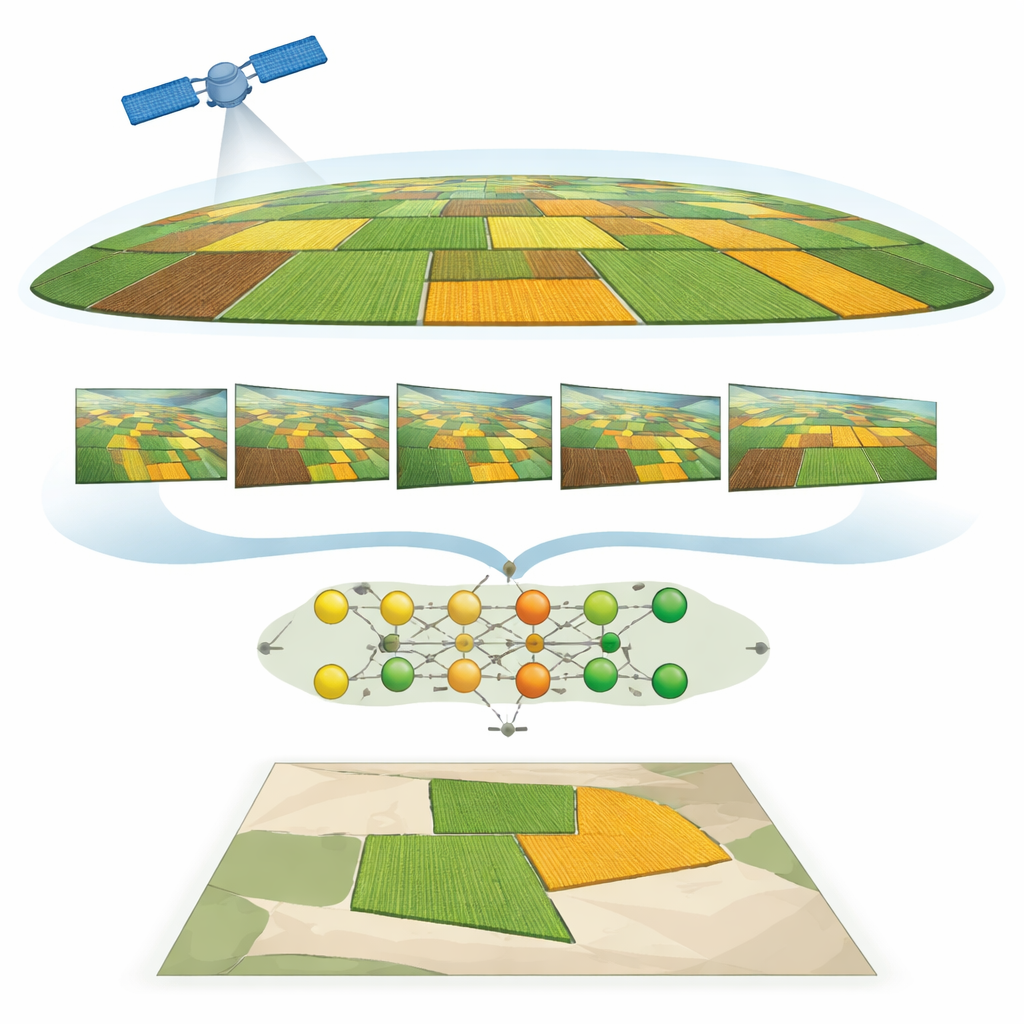

Watching fields from space over time

The work focuses on farms around Hoskote near Bengaluru, India, where two staple crops—ragi (finger millet) and beans—often grow in a patchwork of small plots. Traditional mapping struggles here because the fields are small, the landscape is varied, and the crops can look very similar, especially early in the growing season. To tackle this, the team used high‑resolution PlanetScope satellite images taken several times between October and January. Each image captures multiple colors of light, including parts of the spectrum that the human eye cannot see but plants reflect strongly, providing clues about plant health and growth stage.

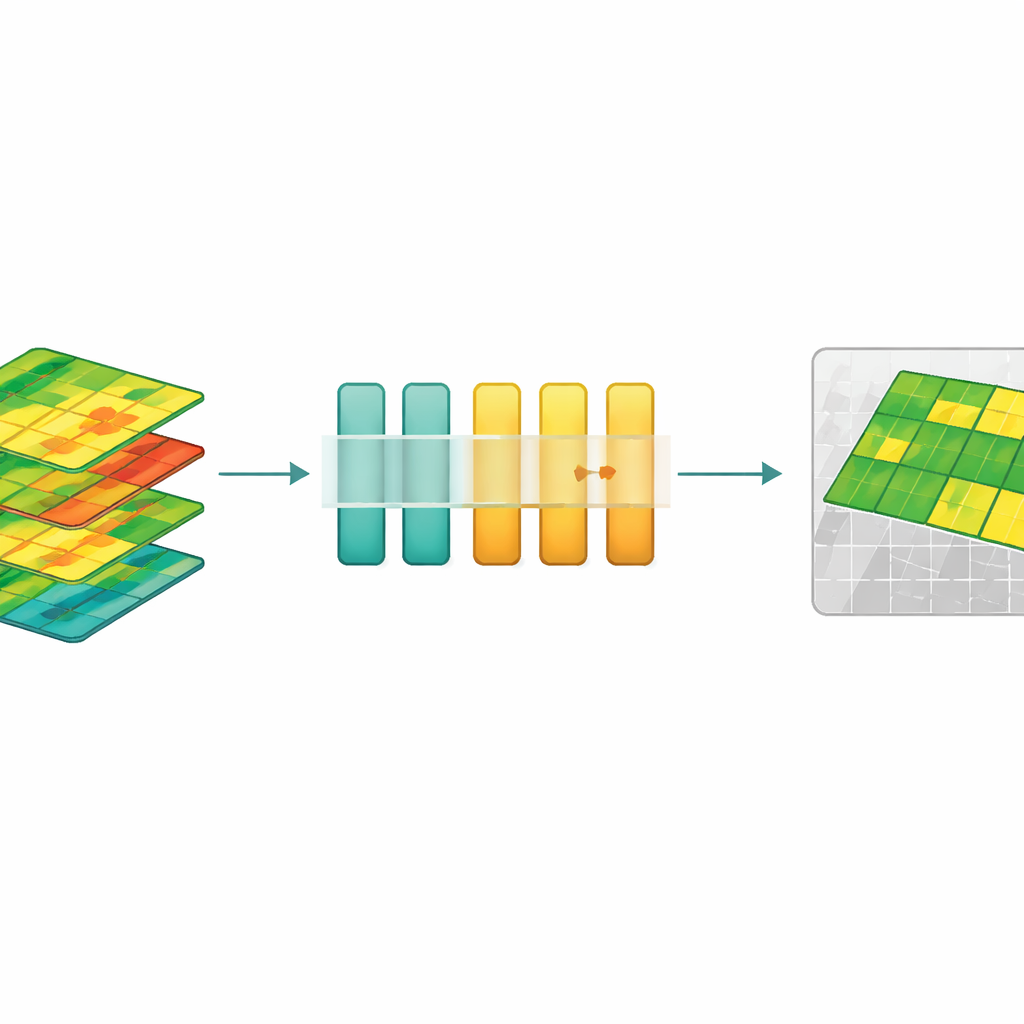

Turning light into plant health signals

Instead of working only with raw satellite colors, the researchers transformed the data into “vegetation indices” that distill how green, dense, and vigorous the plants are. Well‑known indices such as NDVI, EVI, GNDVI, NDRE, and MCARI convert combinations of red, green, blue, near‑infrared, and red‑edge light into numerical measures linked to leaf chlorophyll, canopy density, and growth stage. By stacking these indices across multiple dates, the team built a time‑lapse portrait of how each field’s health signal rises and falls as the crop develops. This makes it easier to tell crops apart based on how they grow over time, not just how they look on a single day.
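
To make the idea concrete, here is a minimal Python sketch of how a few of these indices can be computed for one pixel and stacked across dates. The formulas are the standard published definitions of NDVI, GNDVI, NDRE, and EVI; this summary does not say which exact variants or band combinations the authors used, so treat that as an assumption. The function names and the reflectance values are invented for illustration, and MCARI is left out for brevity.

```python
def normalized_difference(a, b, eps=1e-9):
    """Generic (a - b) / (a + b) index; eps guards against division by zero."""
    return (a - b) / (a + b + eps)


def vegetation_indices(blue, green, red, red_edge, nir):
    """Compute several indices from surface reflectances in the 0-1 range."""
    return {
        "NDVI": normalized_difference(nir, red),        # overall greenness
        "GNDVI": normalized_difference(nir, green),     # chlorophyll-sensitive
        "NDRE": normalized_difference(nir, red_edge),   # uses the red-edge band
        # Standard EVI coefficients (gain 2.5, C1 = 6, C2 = 7.5, L = 1).
        "EVI": 2.5 * (nir - red) / (nir + 6.0 * red - 7.5 * blue + 1.0),
    }


def index_time_series(observations):
    """Turn a list of per-date band readings into a list of per-date indices."""
    return [vegetation_indices(**obs) for obs in observations]


# Hypothetical reflectances for one pixel on two dates (early and mid season).
season = [
    {"blue": 0.05, "green": 0.08, "red": 0.10, "red_edge": 0.15, "nir": 0.20},
    {"blue": 0.04, "green": 0.07, "red": 0.05, "red_edge": 0.20, "nir": 0.45},
]
series = index_time_series(season)
for date_index, values in enumerate(series):
    print(date_index, {name: round(v, 3) for name, v in values.items()})
```

In this toy example NDVI rises between the two dates as the canopy fills in. A classifier that sees the whole sequence can use the shape of that rise, not just a single value.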

Teaching the model what to focus on

To read these plant health movies, the study uses a deep learning model built around a type of network called an LSTM, which is good at handling sequences. On top of this, the authors added several forms of “attention” mechanisms—mathematical tools that let the model decide which time points are most important for making a decision. A key innovation is a version of self‑attention that uses the tanh activation function. This design dampens extreme values and helps the network capture subtle but meaningful changes in the plant health curves. The system also includes careful preprocessing: aligning images, correcting for lighting, filtering out non‑vegetation, and normalizing all features so that no single index dominates.
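
As a rough illustration of attention over time steps, the sketch below scores each LSTM output with a tanh-squashed projection, turns the scores into weights with a softmax, and returns the weighted average of the outputs. This is one common tanh-based attention form and is only an assumption about the general idea; the summary does not give the authors' exact equations. The function name, the weights `W`, `b`, `v`, and the hidden-state values are hypothetical (in a real model the weights would be learned).

```python
import math


def tanh_attention(hidden_states, W, b, v):
    """Score each time step with v . tanh(W h + b), then softmax over time.

    hidden_states: T x d list of LSTM outputs (one vector per date)
    W: k x d projection, b: length-k bias, v: length-k scoring vector
    Returns (weights over time steps, weighted-average context vector).
    """
    scores = []
    for h in hidden_states:
        # tanh bounds each projected value to (-1, 1), damping extremes.
        projected = [
            math.tanh(sum(w_ij * h_j for w_ij, h_j in zip(row, h)) + b_i)
            for row, b_i in zip(W, b)
        ]
        scores.append(sum(v_i * p_i for v_i, p_i in zip(v, projected)))

    # Numerically stable softmax so the weights sum to 1.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    dim = len(hidden_states[0])
    context = [
        sum(w * h[j] for w, h in zip(weights, hidden_states)) for j in range(dim)
    ]
    return weights, context


# Hypothetical LSTM outputs for three dates, two features each.
hidden_states = [[0.1, 0.0], [0.9, 0.8], [0.2, 0.1]]
W = [[1.0, 0.0], [0.0, 1.0]]  # identity projection, for illustration only
b = [0.0, 0.0]
v = [1.0, 1.0]

weights, context = tanh_attention(hidden_states, W, b, v)
print([round(w, 3) for w in weights], [round(c, 3) for c in context])
```

Here the second date gets the largest weight because its hidden state has the strongest signal. The context vector would then feed a final classification layer.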

Sharper maps and fewer false alarms

When the different attention variants were compared, tanh‑based self‑attention came out on top, reaching 88.89% accuracy in separating ragi from beans. That is more than eight percentage points better than a strong object‑based Random Forest baseline, and ahead of other attention types such as multiplicative, global, and soft attention. The model performed well for both crops, with balanced precision and recall, and handled look‑alike fields during early growth better than previous methods. A confidence threshold labeled pixels with uncertain predictions as background rather than forcing a guess, reducing misclassifications by about 12%. Simple spatial filtering then smoothed the maps so that they show realistic field shapes rather than speckled noise.
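
The two post-processing steps described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the authors' implementation: the 0.6 threshold, the label ids, the 3×3 majority filter, and the function names are all hypothetical, since the summary gives neither the threshold value nor the type of spatial filter.

```python
from collections import Counter

BACKGROUND, RAGI, BEANS = 0, 1, 2  # hypothetical label ids


def classify_with_threshold(prob_map, threshold=0.6):
    """Assign the most likely class, or BACKGROUND if confidence is too low.

    prob_map: H x W grid where each cell is [p_ragi, p_beans].
    """
    labels = []
    for row in prob_map:
        out = []
        for probs in row:
            best = max(range(len(probs)), key=probs.__getitem__)
            out.append(best + 1 if probs[best] >= threshold else BACKGROUND)
        labels.append(out)
    return labels


def majority_filter(labels, radius=1):
    """Replace each pixel with the most common label in its neighborhood."""
    height, width = len(labels), len(labels[0])
    smoothed = [row[:] for row in labels]
    for i in range(height):
        for j in range(width):
            window = [
                labels[y][x]
                for y in range(max(0, i - radius), min(height, i + radius + 1))
                for x in range(max(0, j - radius), min(width, j + radius + 1))
            ]
            smoothed[i][j] = Counter(window).most_common(1)[0][0]
    return smoothed


# Toy 3 x 3 probability map: mostly confident ragi, one isolated beans pixel,
# and one pixel where the model is unsure.
ragi, beans, unsure = [0.9, 0.1], [0.2, 0.8], [0.55, 0.45]
prob_map = [
    [ragi, ragi, ragi],
    [ragi, beans, ragi],
    [ragi, ragi, unsure],
]

labels = classify_with_threshold(prob_map)
smoothed = majority_filter(labels)
print(labels)
print(smoothed)
```

In this toy grid the unsure pixel is first marked as background, and the majority filter then absorbs both it and the isolated beans pixel into the surrounding ragi field. A majority filter trades small details for cleaner field shapes, so the window size matters where plots are very small.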

What this means for future farming

In plain terms, the study shows that teaching neural networks not only to see but also to pay attention to the right growth moments leads to much more reliable crop maps from space. Although the work focuses on ragi and beans in one region and one season, the same approach could be extended to other crops, climates, and satellite systems. For farmers, agencies, and insurers, such tools promise earlier and more accurate information on what is planted where and how it is performing, enabling better planning, more targeted inputs, and improved food security with less environmental impact.

Citation: Sharma, M., Kumar, A., Muthuraman, S. et al. Deep learning techniques for crop classification in complex agricultural landscapes. Sci Rep 16, 8831 (2026). https://doi.org/10.1038/s41598-026-37806-2

Keywords: remote sensing, crop mapping, deep learning, precision agriculture, vegetation indices