Clear Sky Science

A boosting strategy based on feature mimicking with attention for visual anomaly detection

Why spotting odd patterns in images matters

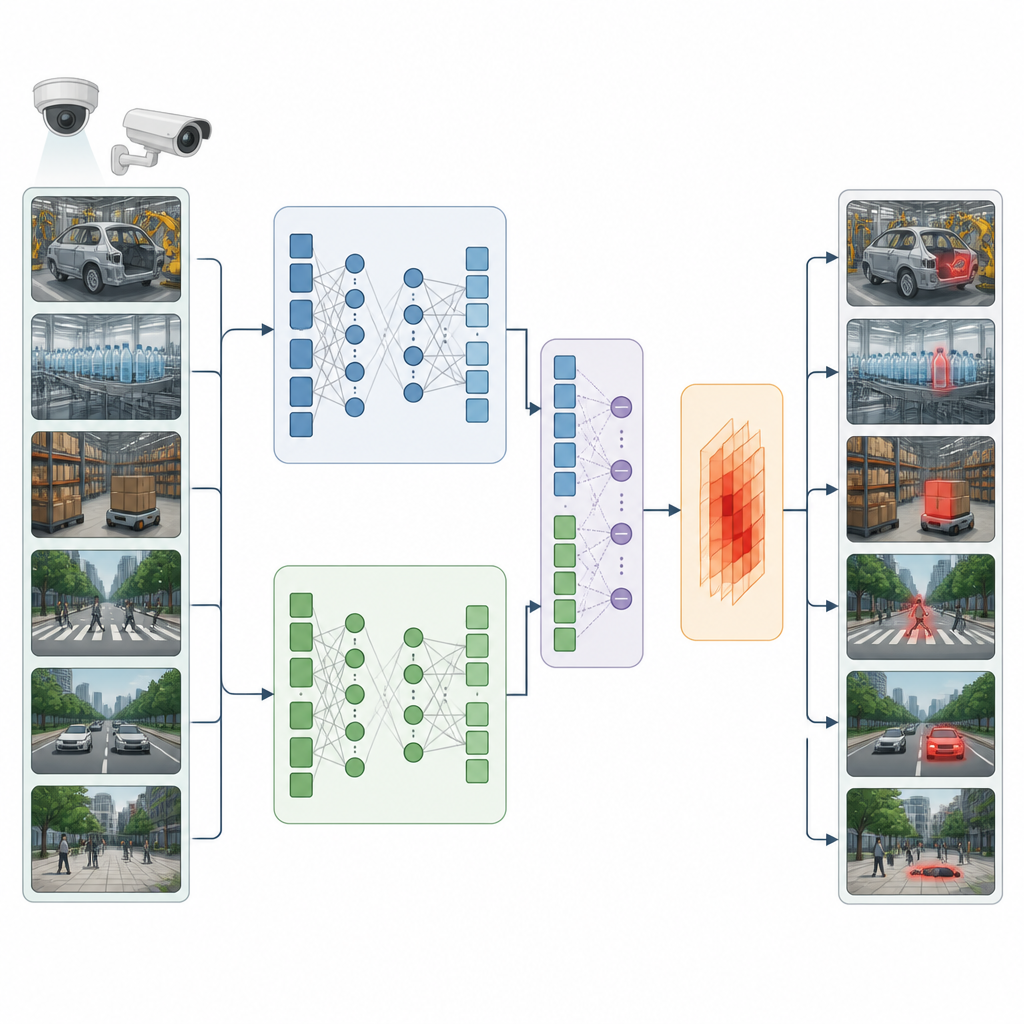

From keeping factory products free of tiny defects to watching for unusual events in city streets, computers are increasingly asked to flag anything that looks out of place. This paper introduces a new way to help artificial intelligence tell normal scenes from suspicious ones more reliably, even when the system has only ever seen normal examples during training.

Teaching a computer what normal looks like

In many real settings, true anomalies are rare and hard to label by hand. As a result, most systems learn only from normal images and videos, then try to spot anything that does not fit what they have seen before. A common approach is to train a model to rebuild, or “reconstruct,” its input images and then treat large reconstruction errors as warning signs. But modern models are so powerful that they sometimes rebuild abnormal scenes too well, causing dangerous mistakes where faulty products or strange events are passed off as ordinary.
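The reconstruction idea can be illustrated with a small Python sketch. It scores an image by its mean squared reconstruction error and flags the image when that score exceeds a threshold. The function names, the tiny 2×2 "images," and the threshold value are assumptions chosen for illustration and do not come from the paper.

```python
def reconstruction_error(image, reconstruction):
    """Mean squared difference between an image and its rebuilt version."""
    pixels = [p for row in image for p in row]
    rebuilt = [r for row in reconstruction for r in row]
    return sum((p - r) ** 2 for p, r in zip(pixels, rebuilt)) / len(pixels)


def is_anomalous(image, reconstruction, threshold):
    """Flag the image when its reconstruction error exceeds the threshold."""
    return reconstruction_error(image, reconstruction) > threshold


THRESHOLD = 0.01  # hypothetical value; in practice tuned on held-out data

# A normal image that the model rebuilds almost perfectly.
normal = [[0.1, 0.2], [0.3, 0.4]]
normal_rebuilt = [[0.1, 0.2], [0.3, 0.5]]

# An image with one bright defect pixel.
defect = [[0.1, 0.2], [0.3, 0.9]]
defect_rebuilt_as_normal = [[0.1, 0.2], [0.3, 0.4]]  # model "repairs" the defect
defect_rebuilt_too_well = [[0.1, 0.2], [0.3, 0.88]]  # model copies the defect

print(is_anomalous(normal, normal_rebuilt, THRESHOLD))
print(is_anomalous(defect, defect_rebuilt_as_normal, THRESHOLD))
print(is_anomalous(defect, defect_rebuilt_too_well, THRESHOLD))
```

The last case shows the failure described above: when the model rebuilds the defect faithfully, the error stays small and the defect goes unflagged.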

Learning from a stronger guide

The authors tackle this problem by pairing two models, called the teacher and the student. The teacher is a pre-trained network that already knows how to handle the reconstruction task on normal data. Instead of only asking the student to reconstruct images, the new method also asks it to imitate the inner features of the teacher. These hidden features capture the overall meaning and structure of normal scenes. When an abnormal image is shown, the student, trained only on normal data, struggles to copy the teacher’s inner responses. This mismatch becomes a powerful extra clue that something is wrong, beyond simple pixel-level differences.
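One plausible way to write down this two-part training goal is sketched below in Python. It assumes the student's loss is a reconstruction term plus a weighted feature-mimicking term, both measured as mean squared error. The exact loss used in the paper is not given in this summary, so the formula, the `mimic_weight` parameter, and all names here are illustrative assumptions.

```python
def mse(a, b):
    """Mean squared error between two equal-length lists of numbers."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def student_loss(image, student_output, teacher_features, student_features,
                 mimic_weight=1.0):
    """Assumed combined loss: rebuild the image AND imitate the teacher.

    mimic_weight is a hypothetical knob balancing the two terms.
    """
    reconstruction_term = mse(image, student_output)
    mimic_term = mse(teacher_features, student_features)
    return reconstruction_term + mimic_weight * mimic_term


# Toy example with flattened values: the student rebuilds the image exactly
# but its hidden features drift away from the teacher's.
image = [0, 1]
student_output = [0, 1]
teacher_features = [1, 2]
student_features = [1, 4]

loss = student_loss(image, student_output, teacher_features, student_features,
                    mimic_weight=0.5)
print(loss)
```

In this toy case the reconstruction term is zero, so the whole loss comes from the feature mismatch. That is the extra signal the method relies on when reconstruction alone looks fine.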

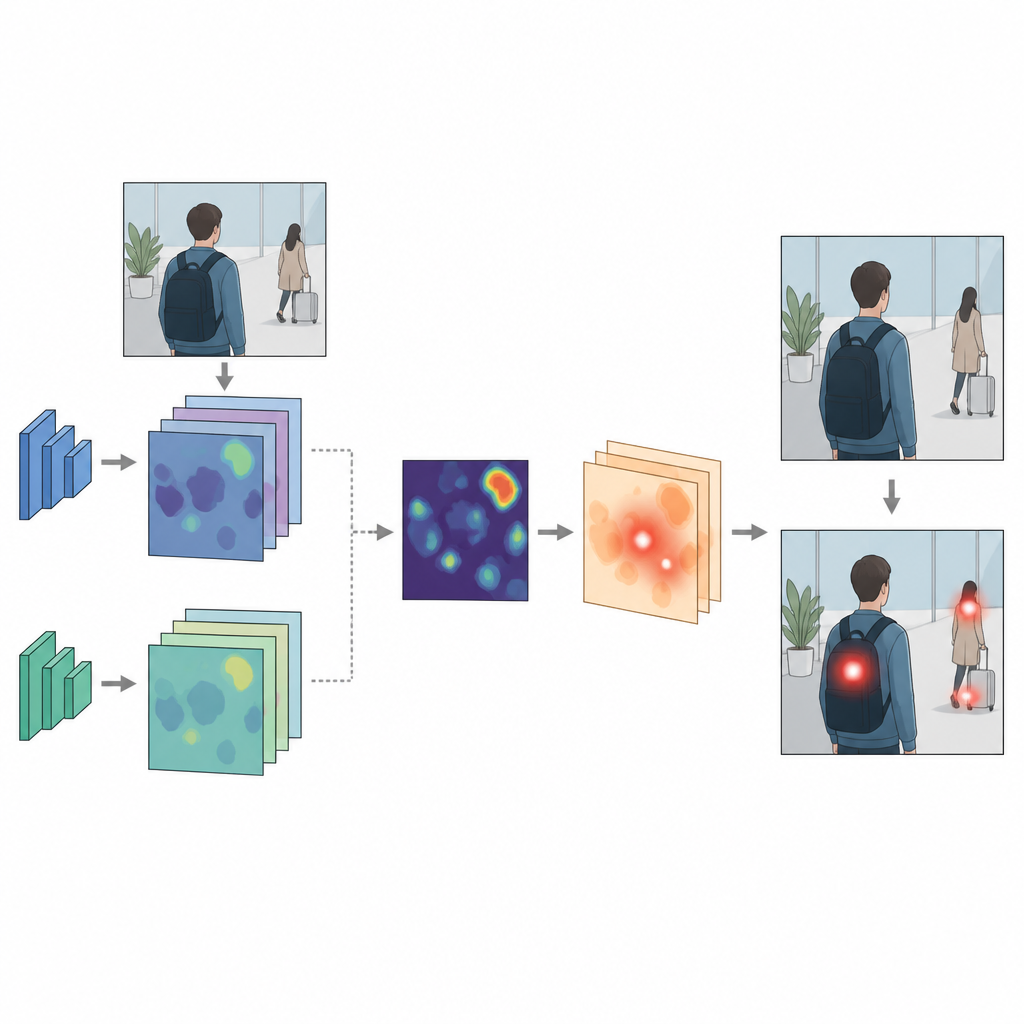

Letting attention follow the mismatch

To make the most of this teacher-student disagreement, the paper adds a special attention module guided by feature inconsistency. It starts by computing a “difference map” between the features produced by the teacher and the student. This map tends to be small and smooth for normal inputs, but lights up around truly abnormal regions. The attention module then uses this map to strengthen or weaken parts of the student’s features, nudging the system to focus on regions where the mismatch is highest. Unlike traditional attention, which usually highlights visually striking areas, this attention is driven purely by semantic inconsistency between teacher and student, making it more tightly linked to anomalies.
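The steps above can be sketched in a few lines of Python. The sketch assumes the difference map is the per-location Euclidean distance between teacher and student feature vectors, and that attention simply scales student features by one plus the normalized mismatch. The paper's actual attention module is likely more elaborate (this summary does not specify it), so every function and formula here is a simplified stand-in.

```python
import math


def difference_map(teacher, student):
    """Per-location distance between teacher and student feature vectors.

    Both inputs are H x W grids whose cells are lists of floats.
    """
    return [[math.dist(t, s) for t, s in zip(t_row, s_row)]
            for t_row, s_row in zip(teacher, student)]


def attention_weights(diff):
    """Rescale the difference map to [0, 1] by dividing by its peak value."""
    peak = max(max(row) for row in diff)
    if peak == 0:
        return [[0.0 for _ in row] for row in diff]
    return [[d / peak for d in row] for row in diff]


def apply_attention(student, weights):
    """Amplify student features where the mismatch is high (assumed rule)."""
    return [[[v * (1 + w) for v in feat] for feat, w in zip(f_row, w_row)]
            for f_row, w_row in zip(student, weights)]


# A 1 x 2 feature grid: the first location agrees, the second does not.
teacher = [[[1.0, 0.0], [0.0, 0.0]]]
student = [[[1.0, 0.0], [3.0, 4.0]]]

diff = difference_map(teacher, student)
weights = attention_weights(diff)
attended = apply_attention(student, weights)
print(diff, weights, attended)
```

The location where teacher and student agree keeps its features unchanged, while the mismatched location is amplified, which mirrors the idea of steering attention toward inconsistency.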

Proving the idea on videos and factory images

The researchers plug their feature mimicking and attention scheme into several leading anomaly detection systems for both surveillance videos and industrial product images. They test the combined methods on three challenging benchmarks: Avenue and ShanghaiTech for unusual events in campus scenes, and MVTec AD for subtle defects in objects and textures such as carpets, metal parts, and toothbrushes. Across these tests, the enhanced systems consistently outperform their original versions, catching more anomalies while keeping false alarms in check. In some categories, the accuracy of pinpointing defect regions improves by more than twenty percentage points, showing that the extra guidance from feature inconsistency and attention significantly sharpens the model’s eye.

What this means for reliable automatic monitoring

For a lay reader, the main message is that this work gives computers a better sense of what truly “does not belong” in an image or video. By asking a student model not only to copy what it sees, but also to mimic how a trusted teacher thinks internally, and then steering attention toward areas where they disagree, the method reduces the risk that unusual events or defects slip through unnoticed. This makes automated inspection lines and surveillance systems more dependable without requiring large sets of labeled abnormal examples.

Citation: Zheng, B., Gan, Y., Wang, L. et al. A boosting strategy based on feature mimicking with attention for visual anomaly detection. Sci Rep 16, 15084 (2026). https://doi.org/10.1038/s41598-026-37667-9

Keywords: visual anomaly detection, teacher-student network, attention mechanism, industrial inspection, video surveillance