Clear Sky Science

Explainable hybrid AI CAD framework for advanced prediction of steel surface defects

Why tiny flaws in steel should matter to you

From cars and ships to skyscrapers and robots, many things we rely on every day are built from flat steel plates. If tiny cracks, pits, or scratches slip through the factory unnoticed, they can weaken these structures, shorten product lifetimes, and drive up costs. This paper describes a new kind of artificial intelligence (AI) system that acts like a super-attentive inspector, spotting and explaining subtle defects in steel surfaces more accurately than today’s tools, while still being fast enough for use on real production lines.

How steel is checked today—and why it falls short

Traditional steel inspection depends on human workers or simple image-processing rules. Humans tire quickly and may miss faint or irregular flaws. Rule-based systems struggle whenever lighting, material, or defect shape changes even slightly, which happens often in real factories. In recent years, deep-learning models, especially the YOLO family of object detectors, have been trained to find defects automatically. But these one-step systems try to do two very different jobs at once: drawing boxes tightly around defects and deciding which type each one is. When defects are tiny, oddly shaped, or look similar to the background steel, this coupled approach tends to either miss flaws or confuse their categories.

Splitting the job in two for sharper eyes

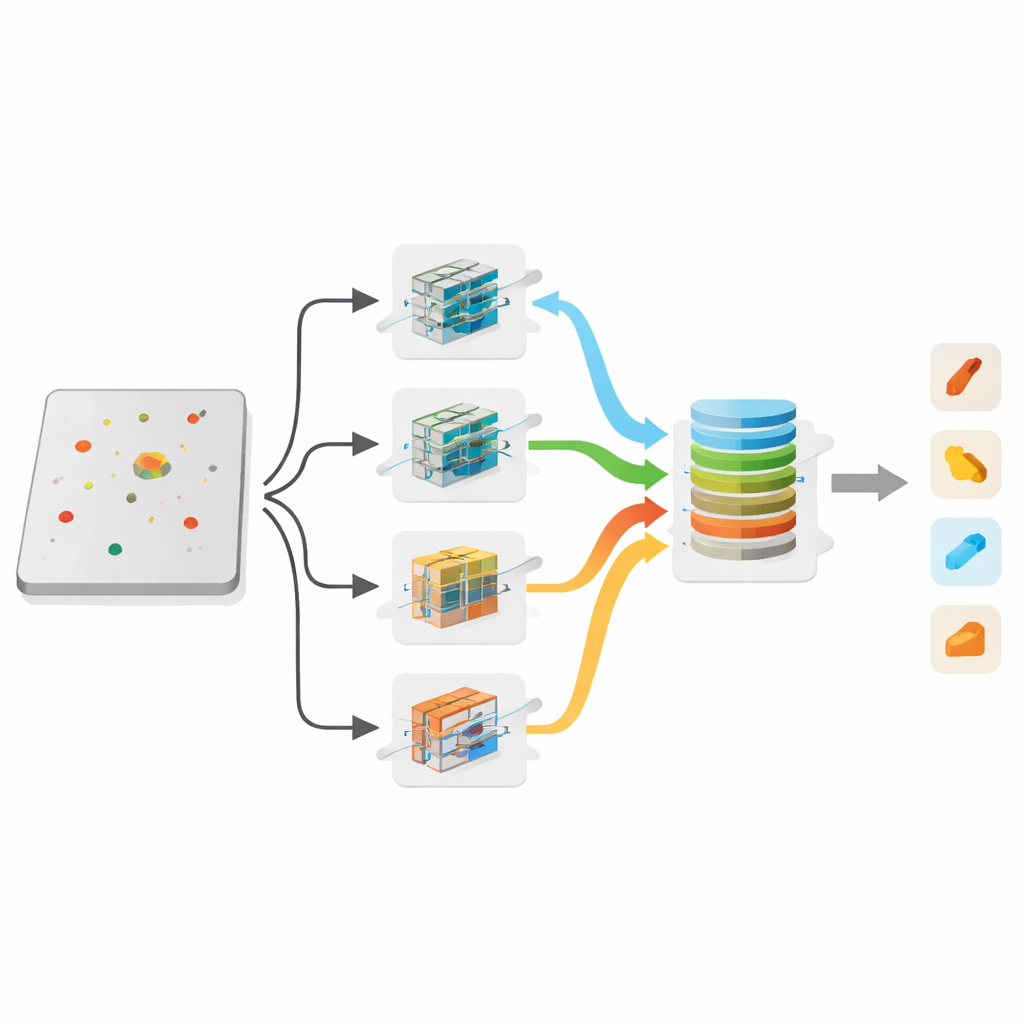

The authors propose a "hybrid" computer-aided diagnosis (CAD) framework that deliberately separates finding defects from naming them. First, an improved detector called Fusion YOLO focuses only on answering a simple yes-or-no question for each region: is there some kind of defect here? It combines three optimized YOLO-based models, including a custom design called DCBS-YOLO, and merges their suggestions using a technique that averages overlapping boxes instead of discarding them. This lets the system draw more reliable outlines around suspicious regions, especially when defects are small, oddly shaped, or faint against the background.
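The idea of averaging overlapping boxes rather than discarding them can be sketched in a few lines. This is a simplified illustration, not the authors' implementation: the function names, the `(x1, y1, x2, y2)` box format, the confidence weighting, and the 0.5 overlap threshold are all assumptions made for the example.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def weighted_average(cluster):
    """Confidence-weighted mean of the box coordinates in one cluster."""
    total = sum(score for _, score in cluster)
    return tuple(
        sum(box[k] * score for box, score in cluster) / total for k in range(4)
    )


def fuse_boxes(detections, iou_threshold=0.5):
    """Group overlapping boxes and replace each group by its weighted average.

    detections: list of (box, score) pairs pooled from all detectors.
    Returns a list of (fused_box, mean_score) pairs.
    """
    clusters = []  # each cluster is a list of (box, score)
    fused = []     # current fused box for each cluster
    # Visit the most confident detections first.
    for box, score in sorted(detections, key=lambda d: d[1], reverse=True):
        for idx, fused_box in enumerate(fused):
            if iou(box, fused_box) >= iou_threshold:
                clusters[idx].append((box, score))
                fused[idx] = weighted_average(clusters[idx])
                break
        else:
            # No existing cluster overlaps enough, so start a new one.
            clusters.append([(box, score)])
            fused.append(tuple(box))
    return [
        (fused[i], sum(s for _, s in c) / len(c)) for i, c in enumerate(clusters)
    ]


# Illustrative detections (made up): two detectors agree on one defect,
# and a third box marks a separate region.
pooled = [
    ((10, 10, 50, 50), 0.9),
    ((12, 12, 52, 52), 0.6),
    ((200, 200, 240, 240), 0.8),
]
merged = fuse_boxes(pooled)
```

Unlike suppression schemes that keep only the highest-scoring box, every overlapping proposal here nudges the final outline, which is why disagreeing detectors can still yield a tighter box.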

Teaching the system to see both details and the big picture

Once likely defect areas are located, a second stage takes over to decide what kind of flaw each one is: for example, a crack-like "crazing" mark, a pit, a patch, or a scratch. Here the framework combines several convolutional neural networks (CNNs), which are good at picking up fine textures, with a Vision Transformer, which excels at seeing larger patterns and long-range relationships across the image. Their feature maps are fused so that local details and global context are considered together. This arrangement sharply reduces mix-ups between defect types that look alike to both humans and machines. After comparing several families of CNNs, the authors report that the best-performing trio, combined with the Transformer and trained end-to-end, achieved near-perfect classification scores on benchmark datasets.
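One common way to fuse features from several networks is to pool each CNN feature map into a vector, concatenate those vectors with the Transformer's embedding, and pass the result to a classifier. The sketch below assumes that concatenation scheme; the article does not say exactly how the fusion is done, and all names, shapes, and numbers here are hypothetical.

```python
import math


def global_average_pool(feature_map):
    """Collapse a feature map [channels][height][width] to one value per channel."""
    return [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]


def fuse_features(cnn_feature_maps, vit_embedding):
    """Concatenate pooled CNN features (local texture) with a ViT embedding (global context)."""
    fused = []
    for feature_map in cnn_feature_maps:
        fused.extend(global_average_pool(feature_map))
    fused.extend(vit_embedding)
    return fused


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(fused, weights, biases):
    """Linear layer over the fused vector followed by softmax."""
    logits = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, biases)
    ]
    return softmax(logits)


# Toy inputs (made up): two CNN branches with 2 channels of 2x2 each,
# and a 3-dimensional Transformer embedding.
cnn_a = [[[1, 1], [1, 1]], [[0, 2], [2, 0]]]
cnn_b = [[[0, 0], [0, 4]], [[2, 2], [2, 2]]]
vit = [0.5, -0.5, 0.0]

fused_vector = fuse_features([cnn_a, cnn_b], vit)

# Hypothetical classifier weights for two defect classes.
weights = [[0.1] * 7, [-0.1] * 7]
biases = [0.0, 0.0]
probabilities = classify(fused_vector, weights, biases)
```

In a trained system the pooling, fusion, and classifier weights would all be learned jointly, which is what "end-to-end" refers to.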

Cleaning the view and tuning the models automatically

To give the AI its best chance to succeed, the authors design a preprocessing pipeline that gently enhances the steel images. By adjusting brightness and contrast, reducing noise, and sharpening edges, all while carefully preserving overall image quality, the system makes faint defects stand out without introducing spurious artifacts. On top of that, an MLOps-based workflow automatically searches through many training settings, such as learning rates and batch sizes, to find the most effective combinations for both detection and classification. This automation reduces trial-and-error and helps bring the final models close to their best achievable performance on the task.
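An automated search over training settings can be as simple as trying every combination and keeping the best one. The sketch below shows a plain grid search; the article does not specify which search strategy or tooling the authors used, and `train_and_evaluate` is a hypothetical stand-in whose scoring formula is invented so the example runs.

```python
import itertools
import math


def train_and_evaluate(learning_rate, batch_size):
    """Hypothetical placeholder for a full training run.

    A real version would train a model and return a validation metric.
    The formula here is invented purely for illustration and is not real data.
    """
    lr_penalty = abs(math.log10(learning_rate) + 3) * 0.1
    batch_penalty = abs(batch_size - 16) / 320
    return 1.0 - lr_penalty - batch_penalty


def grid_search(learning_rates, batch_sizes):
    """Evaluate every (learning rate, batch size) pair and return the best."""
    best_config, best_score = None, float("-inf")
    for lr, bs in itertools.product(learning_rates, batch_sizes):
        score = train_and_evaluate(lr, bs)
        if score > best_score:
            best_config = {"learning_rate": lr, "batch_size": bs}
            best_score = score
    return best_config, best_score


best_config, best_score = grid_search(
    learning_rates=[1e-2, 1e-3, 1e-4],
    batch_sizes=[8, 16, 32],
)
```

A production workflow would typically also record every run so that results can be compared and reproduced later.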

Opening the black box with visual explanations

Because industrial users must trust the system before putting it on a line, the framework includes explainable AI tools. After a defect is labeled, a method called Grad-CAM produces a heat map that highlights which parts of the image influenced the decision most strongly. These colorful overlays show inspectors exactly where the AI "looked" when it called something a crack or a pit. In cases where the first detection stage misses a flaw, the classification stage and its heat maps can still reveal suspicious regions, acting as a safety net and helping engineers understand remaining blind spots.
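The core Grad-CAM computation is short: average each channel's gradient to get a channel weight, take the weighted sum of the activation maps, and keep only the positive part. The sketch below assumes the activations and gradients of the last convolutional layer have already been extracted by a deep-learning framework; the function name and the toy numbers are illustrative only.

```python
def grad_cam(activations, gradients):
    """Compute a Grad-CAM heat map.

    activations, gradients: nested lists shaped [channels][height][width],
    taken from the last convolutional layer for the predicted class.
    Returns a [height][width] heat map scaled to the range 0..1.
    """
    height, width = len(activations[0]), len(activations[0][0])

    # Channel weight = gradient averaged over all spatial positions.
    weights = [
        sum(sum(row) for row in grad) / (height * width) for grad in gradients
    ]

    # Weighted sum of activation maps, then ReLU to keep positive evidence only.
    heat = [
        [
            max(0.0, sum(w * act[i][j] for w, act in zip(weights, activations)))
            for j in range(width)
        ]
        for i in range(height)
    ]

    # Normalize so the strongest location equals 1 (skip if the map is all zero).
    peak = max(max(row) for row in heat)
    if peak > 0:
        heat = [[v / peak for v in row] for row in heat]
    return heat


# Toy example (made up): channel 0 supports the predicted class,
# channel 1 argues against it.
activations = [[[1, 0], [0, 0]], [[0, 0], [0, 1]]]
gradients = [[[1, 1], [1, 1]], [[-1, -1], [-1, -1]]]
heat_map = grad_cam(activations, gradients)
```

In practice the small heat map is upsampled to the input image size and drawn as a color overlay, producing the highlighted regions inspectors see.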

What the results mean for real-world factories

Tested on two widely used steel-defect datasets, the new framework outperformed both standard YOLO models and several recent research systems, reaching high detection accuracy and classification scores while generalizing well to new types of defects. Although the two-stage design is more computationally demanding and just shy of ideal real-time speed, it already approaches the frame rates needed on many production lines. With further engineering, the authors argue, this approach could become a practical inspection assistant: one that catches more subtle flaws, explains its reasoning, and helps manufacturers deliver safer, more reliable steel-based products.

Citation: Moon, C., Al-antari, M.A. & Gu, Y.H. Explainable hybrid AI CAD framework for advanced prediction of steel surface defects. Sci Rep 16, 10796 (2026). https://doi.org/10.1038/s41598-025-34320-9

Keywords: steel surface inspection, defect detection, deep learning, computer vision, explainable AI