Clear Sky Science

A Smartphone-based Comprehensive Dataset of Annotated Oral Cavity Images for Enhanced Oral Disease Diagnosis

Why your phone could help spot mouth cancer

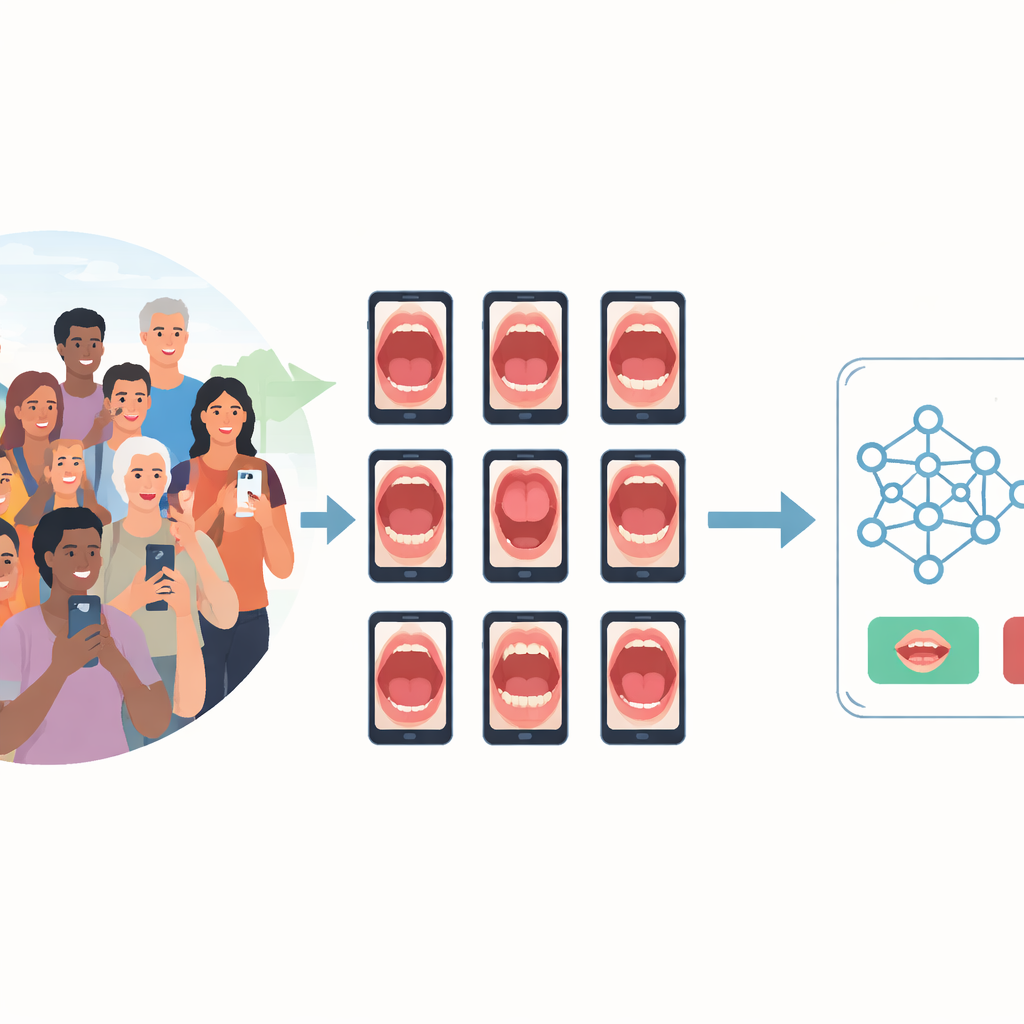

Most of us carry a powerful camera in our pocket, yet it is rarely used to look for early signs of disease inside the mouth. Oral cancer and its warning spots often go unnoticed until they are advanced, especially in communities with few specialists. This study describes SMART‑OM, a carefully curated collection of photographs of the inside of the mouth, all taken with ordinary smartphones and meticulously labeled by dental experts. The goal is to give researchers the raw material they need to build artificial‑intelligence tools that can flag worrisome changes long before they turn deadly.

The global problem inside the mouth

Cancers of the lips and oral cavity kill nearly two hundred thousand people worldwide each year, and hundreds of thousands of new cases are diagnosed annually. The burden is especially heavy in low‑ and middle‑income countries. Tobacco, alcohol, and areca nut chewing are major drivers of risk, and men and older adults are particularly affected. Early diagnosis greatly improves survival, but in many regions it is hard to reach a specialist, and routine oral checks rely on a dentist’s eye and experience. As a result, subtle patches or rough areas can be missed, and different clinicians may disagree about what they see. A low‑cost, widely available way to record and review the inside of the mouth could make a real difference.

Turning smartphones into screening tools

The SMART‑OM project set out to capture high‑quality images of the oral cavity in real‑world community settings using just two common smartphones, one Android and one iPhone. Community health workers and dentists in southern India visited homes and dental camps, recruiting adults who agreed to be examined. For each participant, eight standard views were photographed, covering the tongue, cheeks, lips, and upper and lower dental arches. Care was taken to use mostly natural light, position the camera just a few centimeters away, and employ simple tools such as mouth mirrors or wooden sticks to gently hold cheeks and lips aside. Blurry or poorly lit shots were repeated, and all data collection followed strict ethical rules, with faces kept out of frame and personal details fully anonymized.

From raw pictures to rich, labeled data

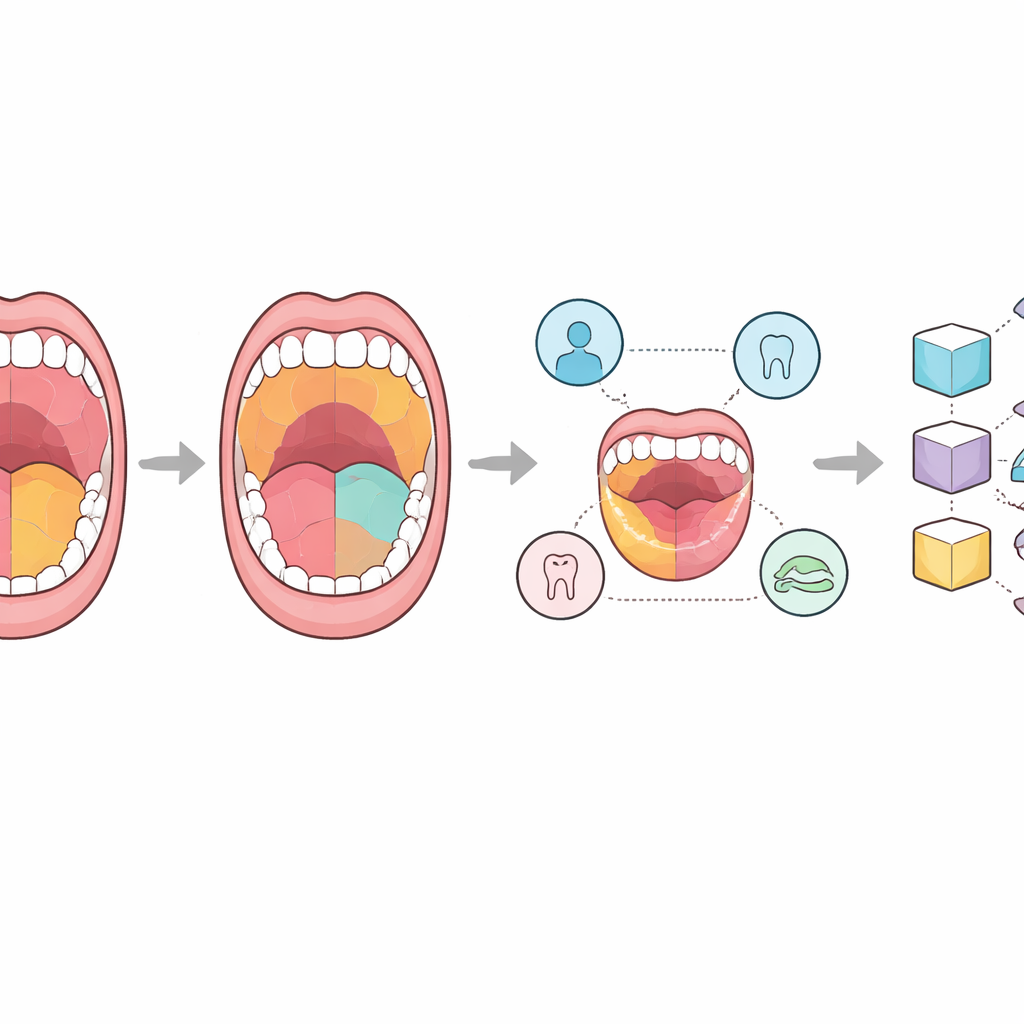

In total, the team assembled 2,469 images from 331 people. Each picture was placed into one of four groups: completely healthy tissue; harmless variations from the usual look; potentially malignant disorders that may turn into cancer; and confirmed oral cancer. Expert dental surgeons then went beyond simple labels by tracing detailed outlines on the images using an open‑source annotation tool. Some versions mark only the main region of interest, others map every visible structure, and special lesion‑focused versions trace suspicious patches and growths. Alongside the pictures, the dataset includes spreadsheet files listing what each drawn region represents and separate tables describing each person’s age, sex, habits such as smoking or betel chewing, and clinical findings. This combination of visuals and context is designed to support both image‑only and multi‑modal AI systems.
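For readers who think in code, the way pictures, outlines, and participant details fit together can be sketched as a few linked records. This is a minimal illustration only: every class name, field name, and value below is a hypothetical stand-in, since the summary does not give the dataset's actual file layout or column names.

```python
from dataclasses import dataclass, field
from enum import Enum


class Diagnosis(Enum):
    # The four groups named in the text; the identifiers are illustrative.
    HEALTHY = "healthy"
    BENIGN_VARIATION = "harmless variation"
    POTENTIALLY_MALIGNANT = "potentially malignant disorder"
    ORAL_CANCER = "oral cancer"


@dataclass
class Region:
    label: str                          # what the drawn outline represents
    polygon: list[tuple[float, float]]  # outline vertices in pixel coordinates


@dataclass
class Participant:
    participant_id: str                 # hypothetical anonymized key
    age: int
    sex: str
    habits: list[str] = field(default_factory=list)


@dataclass
class ImageRecord:
    image_file: str
    participant_id: str                 # links the image to a Participant
    diagnosis: Diagnosis
    regions: list[Region] = field(default_factory=list)


def attach_context(images, participants):
    """Pair each image with its participant row (a simple key join)."""
    by_id = {p.participant_id: p for p in participants}
    return [(img, by_id[img.participant_id])
            for img in images if img.participant_id in by_id]


# Toy values invented for illustration only; they are not taken from SMART-OM.
participants = [Participant("P001", 54, "M", ["smoking"])]
images = [
    ImageRecord("P001_view1.jpg", "P001", Diagnosis.POTENTIALLY_MALIGNANT,
                [Region("lesion", [(10.0, 12.0), (40.0, 12.0), (25.0, 30.0)])]),
]
pairs = attach_context(images, participants)
```

An image-only model would read just the picture and its diagnosis, while a multi-modal model could also use the paired participant record, which is the distinction the paragraph above draws.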

Putting AI models to the test

To show how useful SMART‑OM can be, the researchers trained several popular deep‑learning models, originally developed for general image recognition, on two tasks: telling normal from abnormal images, and distinguishing between the four diagnostic groups. They split the dataset into training and test portions and fine‑tuned models such as ResNet, VGG, EfficientNet, and a Vision Transformer. Although the data are dominated by healthy images and contain relatively few cancer and high‑risk cases, the best model, a relatively compact ResNet variant, correctly classified close to nine out of ten test images overall. It was especially reliable at recognizing healthy mouths and reasonably good, though less consistent, at flagging abnormal ones, reflecting the natural imbalance and subtle visual differences between categories.
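The effect of class imbalance on a headline accuracy figure can be illustrated with a small sketch. It assumes a stratified split (the summary does not say how the authors divided the data) and uses invented toy labels, not the SMART‑OM class counts. The function names are hypothetical helpers written with the Python standard library only.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) keeping each class's share roughly equal."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    train, test = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        # Keep at least one test example per class, even for rare classes.
        n_test = max(1, round(len(idxs) * test_fraction))
        test.extend(idxs[:n_test])
        train.extend(idxs[n_test:])
    return sorted(train), sorted(test)


def per_class_recall(y_true, y_pred):
    """Fraction of each true class that was predicted correctly."""
    total = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {c: hits[c] / total[c] for c in total}


# Toy labels, invented to mimic an imbalanced set; NOT the SMART-OM counts.
labels = ["normal"] * 80 + ["variation"] * 10 + ["opmd"] * 6 + ["cancer"] * 4
train_idx, test_idx = stratified_split(labels, test_fraction=0.25)

# A trivial "model" that always answers "normal" shows why accuracy can mislead.
y_true = [labels[i] for i in test_idx]
y_pred = ["normal"] * len(y_true)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
recall = per_class_recall(y_true, y_pred)
balanced_accuracy = sum(recall.values()) / len(recall)
```

On these toy labels the always-"normal" baseline reaches an accuracy of 0.8 while its balanced accuracy (the mean of the per-class recalls) is only 0.25. That gap is why results on imbalanced medical image sets are usually read class by class rather than from overall accuracy alone.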

What this means for everyday care

For non‑specialists, the key message is that SMART‑OM lays the groundwork for turning an everyday smartphone into an aid for early oral‑cancer screening. By making this large, carefully annotated and fully anonymized dataset publicly available, the authors give researchers around the world a shared resource to train and compare AI tools that can highlight suspicious regions, combine image cues with lifestyle risk data, and help decide who needs a closer look by a dentist or pathologist. Although the current collection still contains relatively few true cancer cases, it closely mirrors what is seen in communities and will grow over time. As it expands, SMART‑OM is poised to support more accurate, accessible, and affordable checks for dangerous mouth changes, potentially saving lives through earlier diagnosis.

Citation: Madan Kumar, P.D., Ranganathan, K., Lavanya, C. et al. A Smartphone-based Comprehensive Dataset of Annotated Oral Cavity Images for Enhanced Oral Disease Diagnosis. Sci Data 13, 676 (2026). https://doi.org/10.1038/s41597-026-06954-5

Keywords: oral cancer, smartphone imaging, medical AI, oral lesions, screening dataset