Clear Sky Science

Tomato Multi-Angle Multi-Pose Dataset for Fine-Grained Phenotyping

Why Tomatoes and Smart Cameras Matter

Tomatoes are not just a salad staple; they are one of the world’s most important crops and a workhorse of plant science. Breeders and researchers constantly examine tomato plants in detail—how leaves grow, when flowers open, how fruits change color—to create hardier, tastier, and more resilient varieties. Yet this close-up inspection is usually done by eye, which is slow, hard to repeat, and can differ from one observer to another. This article introduces TomatoMAP, a large, carefully designed collection of tomato images that lets computers inspect plants from many angles, helping to take human guesswork out of plant assessment.

A New Picture Library of Tomato Growth

TomatoMAP is a comprehensive image dataset focused on the cultivated tomato, Solanum lycopersicum. It contains 68,080 color photographs covering the life of 101 greenhouse-grown plants over more than five months. Instead of a few snapshots, each plant is photographed again and again as it grows, capturing different stages such as flowering and fruit ripening. For every image, experts provide rich labels: simple boxes marking seven key regions of interest—leaves, flower clusters, fruit clusters, shoots, and more—and growth-stage tags based on a standardized scale commonly used by agronomists. In a separate set of close-up images, individual buds, flowers, and fruits are outlined down to the level of individual pixels, allowing extremely fine-grained analysis.

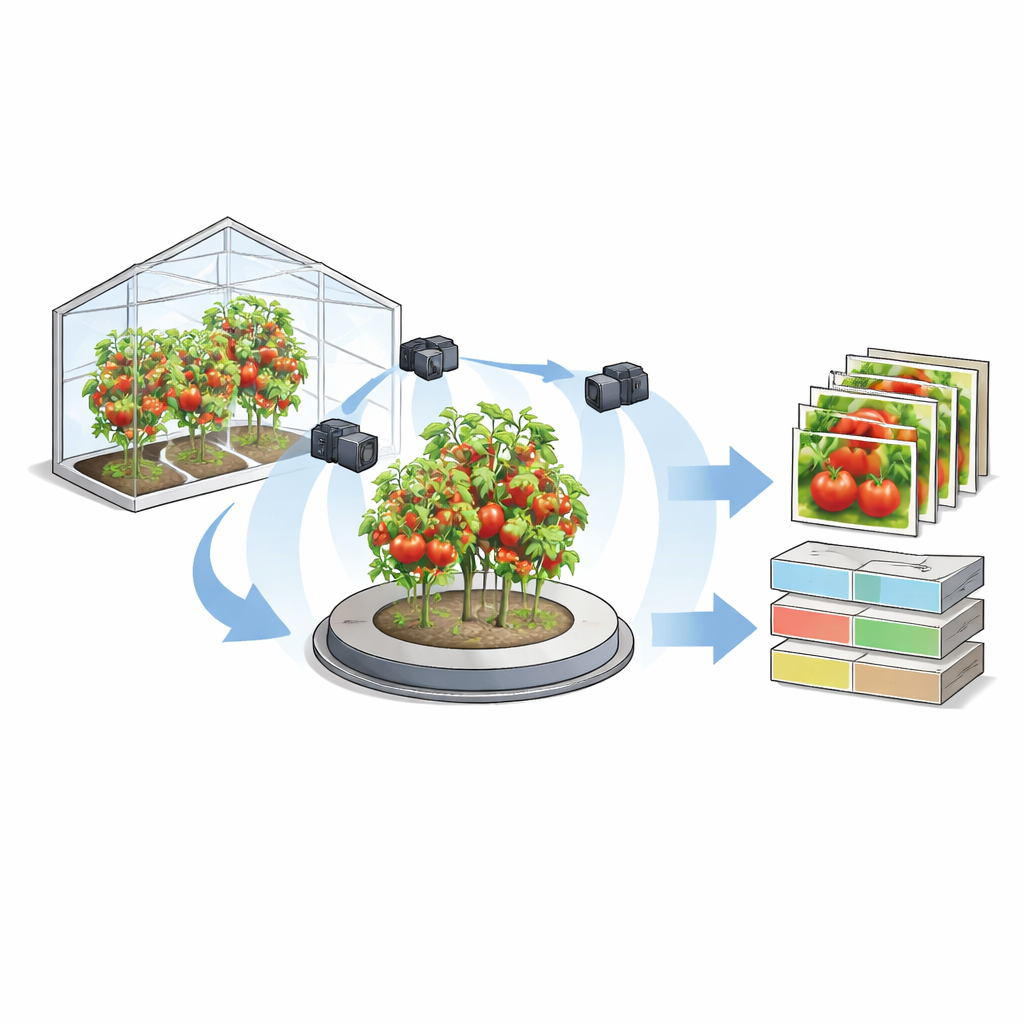

Seeing Plants from Every Side

To collect this dataset, the researchers built a dedicated imaging station that combines a rotating platform with four synchronized cameras. Tomato plants grown under controlled greenhouse conditions are placed on the turntable, which rotates in 30-degree steps to complete a full circle, giving 12 poses per rotation. At each step, cameras positioned at four heights and angles capture images at the same time, producing a multi-angle view of the same plant pose. Over 163 days, this setup produced 64,464 moderate-resolution images for growth-stage classification and organ detection, plus 3,616 high-resolution close-ups for detailed segmentation, for 68,080 images in total. This multi-view design preserves three-dimensional structure, such as how leaves overlap or how clusters of flowers and fruits are arranged, which is difficult to capture with single, flat images.
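The capture geometry described above can be checked with a little arithmetic. The Python sketch below enumerates the turntable poses and camera views for one imaging session and compares them with the image counts quoted in the article. It assumes that every session captured all views and that the close-ups account for the remainder of the 68,080 total; the camera indices are placeholders, not the authors' naming.

```python
from itertools import product

STEP_DEG = 30        # turntable step stated in the article
NUM_CAMERAS = 4      # synchronized cameras stated in the article
TOTAL_IMAGES = 68_080
CLOSE_UPS = 3_616

# Turntable poses for one full rotation: 0, 30, ..., 330 degrees.
poses = list(range(0, 360, STEP_DEG))

# Each (pose, camera) pair is one image of the plant in one session.
# Camera indices 0..3 are illustrative placeholders.
views = list(product(poses, range(NUM_CAMERAS)))

# Assumption: the multi-view set is everything except the close-ups.
multi_view_images = TOTAL_IMAGES - CLOSE_UPS

# Assumption: every imaging session produced the full set of views.
sessions, leftover = divmod(multi_view_images, len(views))

print(len(poses), len(views))   # 12 48
print(multi_view_images)        # 64464
print(sessions, leftover)       # 1343 0
```

Under these assumptions the multi-view images divide evenly into 1,343 sessions of 48 views each, which is consistent with the setup the article describes.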

Teaching Computers to Read Plant Traits

TomatoMAP is not just a photo gallery; it is also a testbed for modern artificial intelligence. The team trained and evaluated lightweight, fast computer vision models chosen for potential real-time use in greenhouses. A compact image-classification network learned to assign plant growth stages. An efficient object-detection model learned to locate plant parts like leaves, flower clusters, and fruit clusters in each frame. For the close-up images, an instance-segmentation model traced the precise outline of individual buds, flowers, and fruits, and distinguished between early and late stages of development based on size and color. The authors show that these models reach high accuracy, especially for larger flowers and fruits, and can run quickly enough to be practical for continuous monitoring.

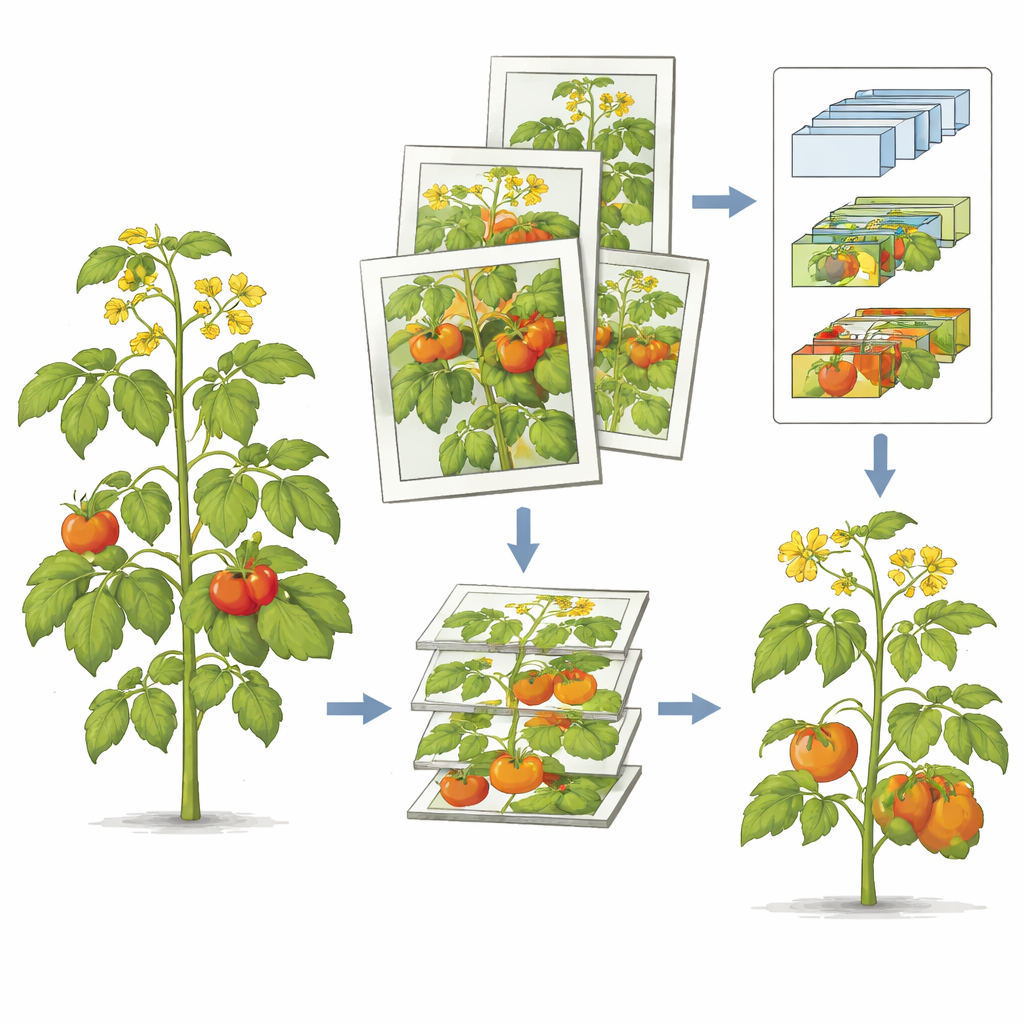

Building a Step-by-Step Digital Workflow

To make automated phenotyping more reliable, the researchers designed a three-level “cascading” workflow. First, data are organized from simple whole-plant images to detailed segmentations. Second, models are arranged in a chain: a growth-stage classifier guides which plants or time points are passed on to a detector, which then highlights the most relevant regions for the segmentation model to refine. Finally, the outputs of all models are combined into a consolidated description of each plant’s traits, such as how many fruits are present and which stages they are in. By structuring both the data and the models this way, errors are less likely to snowball, and each step can be improved or replaced without rebuilding the entire system.
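The chain of models can be pictured as a small pipeline. The Python sketch below is only an illustration of the cascading idea, not the authors' implementation: the function `run_cascade`, the `Detection` and `Instance` records, the stage names, and the stub models are all hypothetical stand-ins.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Tuple

Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2) in pixels


@dataclass
class Detection:
    label: str  # region of interest, e.g. "fruit cluster"
    box: Box


@dataclass
class Instance:
    label: str  # organ type, e.g. "fruit"
    stage: str  # development stage, e.g. "early" or "late"


def run_cascade(image, classify, detect, segment, stages_of_interest):
    """Classifier gates the detector; detector regions feed the segmenter."""
    stage = classify(image)
    report = {"growth_stage": stage, "regions": [], "organ_counts": {}}
    if stage not in stages_of_interest:
        return report  # later models are skipped for this image

    detections = detect(image)
    counts = Counter()
    for det in detections:
        # The segmenter only looks inside regions the detector flagged.
        for inst in segment(image, det.box):
            counts[(inst.label, inst.stage)] += 1

    report["regions"] = [det.label for det in detections]
    report["organ_counts"] = dict(counts)
    return report


# Stub models so the sketch runs; trained models would replace these.
def fake_classify(image):
    return "fruiting"


def fake_detect(image):
    return [Detection("fruit cluster", (10, 10, 50, 50))]


def fake_segment(image, box):
    return [Instance("fruit", "early"),
            Instance("fruit", "late"),
            Instance("fruit", "late")]


report = run_cascade(None, fake_classify, fake_detect, fake_segment,
                     stages_of_interest={"fruiting"})
print(report["organ_counts"])
```

Because each model is passed in as a plain function, any one of them can be swapped out without touching the others, which is the modularity the workflow is meant to provide.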

How Well Machines Match Human Eyes

Because human experts do not always agree with one another, the team carefully checked how closely AI models and specialists align. They compared hundreds of images that were labeled independently by five experts and by a trained detection model. Using a standard measure of agreement, both expert–expert and AI–expert comparisons showed “almost perfect” consistency. This suggests that, at least for the structures and stages studied here, the automated methods can match the reliability of trained human observers while avoiding fatigue and inconsistency.
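The article does not name the agreement measure. "Almost perfect" is the label that the widely used Landis and Koch scale gives to kappa values above 0.80, so the Python sketch below assumes a kappa-style statistic and computes Cohen's kappa for two raters. The labels are invented for illustration and are not data from the study; with five experts, the authors may equally have used pairwise comparisons or a multi-rater variant.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)

    # Observed agreement: share of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement: from each rater's own label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)

    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Invented example labels: one hypothetical expert and one hypothetical model.
expert = ["flower", "flower", "fruit", "fruit", "leaf",
          "leaf", "fruit", "flower", "leaf", "fruit"]
model = ["flower", "flower", "fruit", "fruit", "leaf",
         "leaf", "fruit", "flower", "leaf", "flower"]

kappa = cohen_kappa(expert, model)
print(round(kappa, 3))  # 0.851
```

In this toy case the two raters disagree on one item out of ten, giving raw agreement of 90 percent and a kappa of about 0.85 once chance agreement is removed, which would fall in the "almost perfect" band.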

What This Means for Future Crops

TomatoMAP demonstrates that with the right imaging setup and careful annotation, computers can track tomato growth in rich detail from many angles, and do so in a way that closely mirrors expert judgment. For plant breeders and farmers, this opens the door to faster, more objective screening of new varieties and growing conditions, from assessing fruit load to spotting subtle differences in plant architecture. While some plant organs remain harder to capture perfectly and more work is needed to tailor models to specific devices, this dataset lays a foundation for scalable, bias-reducing digital phenotyping that could eventually help bring more resilient and productive crops from greenhouse experiments to the dinner table.

Citation: Zhang, Y., Struckmeyer, S., Kolb, A. et al. Tomato Multi-Angle Multi-Pose Dataset for Fine-Grained Phenotyping. Sci Data 13, 309 (2026). https://doi.org/10.1038/s41597-026-06926-9

Keywords: tomato phenotyping, plant imaging, multi-view dataset, computer vision in agriculture, crop breeding