Clear Sky Science · en

A Comprehensive Dataset for Word-Wheel Water Meter Reading Under Challenging Conditions

Why old water meters still matter

Many cities dream of "smart" infrastructure, but under streets and in basements, countless old mechanical water meters are still doing the real work of tracking how much water we use. Replacing all of them with modern smart meters is expensive, especially for smaller towns. This paper introduces a large, carefully built image dataset that helps computers read these traditional word-wheel meters automatically, even when dirt, shadows, blur, and glare make the job hard for both people and machines.

The problem with reading real-world meters

Reading a mechanical water meter from a photograph might sound as simple as spotting a line of numbers, but real installations are messy. Meters are often buried in underground boxes or cramped corners, surrounded by soil, leaves, or trash. Their glass covers may be stained or fogged, and lighting is rarely ideal; shadows, low light, or harsh reflections from flash or sunlight are common. On top of that, photos taken by workers in the field can be off-angle or out of focus, leaving the number wheels blurry or distorted. All of these factors confuse standard computer vision systems that expect clean, front-facing images.

Building a realistic picture collection

To tackle this, the authors collected more than 50,000 photos from real manual meter-reading work in Hangzhou, a major Chinese city with a complex underground water network and many aging meters. They first removed unusable images and resized the rest to a standard format so that algorithms could handle them consistently. For each image, they marked the exact area where the reading appears, creating a "cut-out" mask that shows the meter’s window and nothing else. They also tagged each photo with simple yes-or-no flags that describe its challenges: whether it is clear, blurry, stained, soil-covered, dark, or reflective, and whether it comes from a meter with six digits. This multi-label setup reflects the reality that a single photo can be, for example, both blurry and dark at the same time.
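As a rough illustration of the multi-label idea, the sketch below stores each photo's yes-or-no flags as a set of tags and shows how photos with a given combination of conditions could be selected. The tag names, the `MeterPhoto` class, and the file names are hypothetical; the dataset's actual annotation format is not described here.

```python
from dataclasses import dataclass, field

# Hypothetical tag names, based on the conditions listed in the text.
TAGS = ("clear", "blurry", "stained", "soil_covered",
        "dark", "reflective", "six_digit")


@dataclass
class MeterPhoto:
    """One photo plus the set of condition tags that apply to it."""
    filename: str
    tags: frozenset = field(default_factory=frozenset)

    def __post_init__(self):
        self.tags = frozenset(self.tags)
        unknown = self.tags - set(TAGS)
        if unknown:
            raise ValueError(f"unknown tags: {sorted(unknown)}")

    def as_vector(self):
        """Return the yes/no flags as a 0/1 list in TAGS order."""
        return [int(tag in self.tags) for tag in TAGS]


def select(photos, *required):
    """Return the photos that carry all of the required tags."""
    need = set(required)
    return [p for p in photos if need <= p.tags]


# Made-up examples: one photo can carry several tags at once.
photos = [
    MeterPhoto("a.jpg", {"clear"}),
    MeterPhoto("b.jpg", {"blurry", "dark"}),
    MeterPhoto("c.jpg", {"dark", "six_digit"}),
]

dark_and_blurry = select(photos, "dark", "blurry")
```

Keeping the flags independent, rather than forcing each photo into a single category, is what lets a photo count as both blurry and dark at once.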

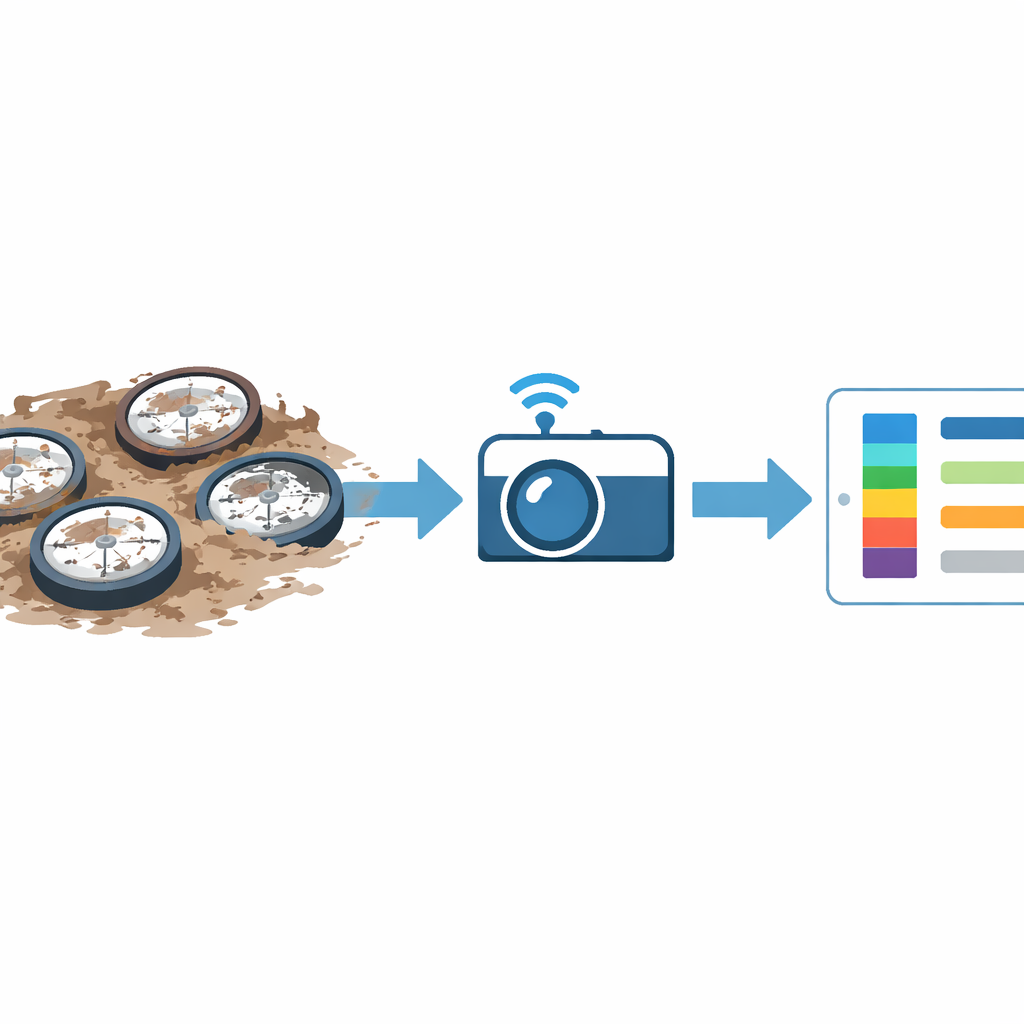

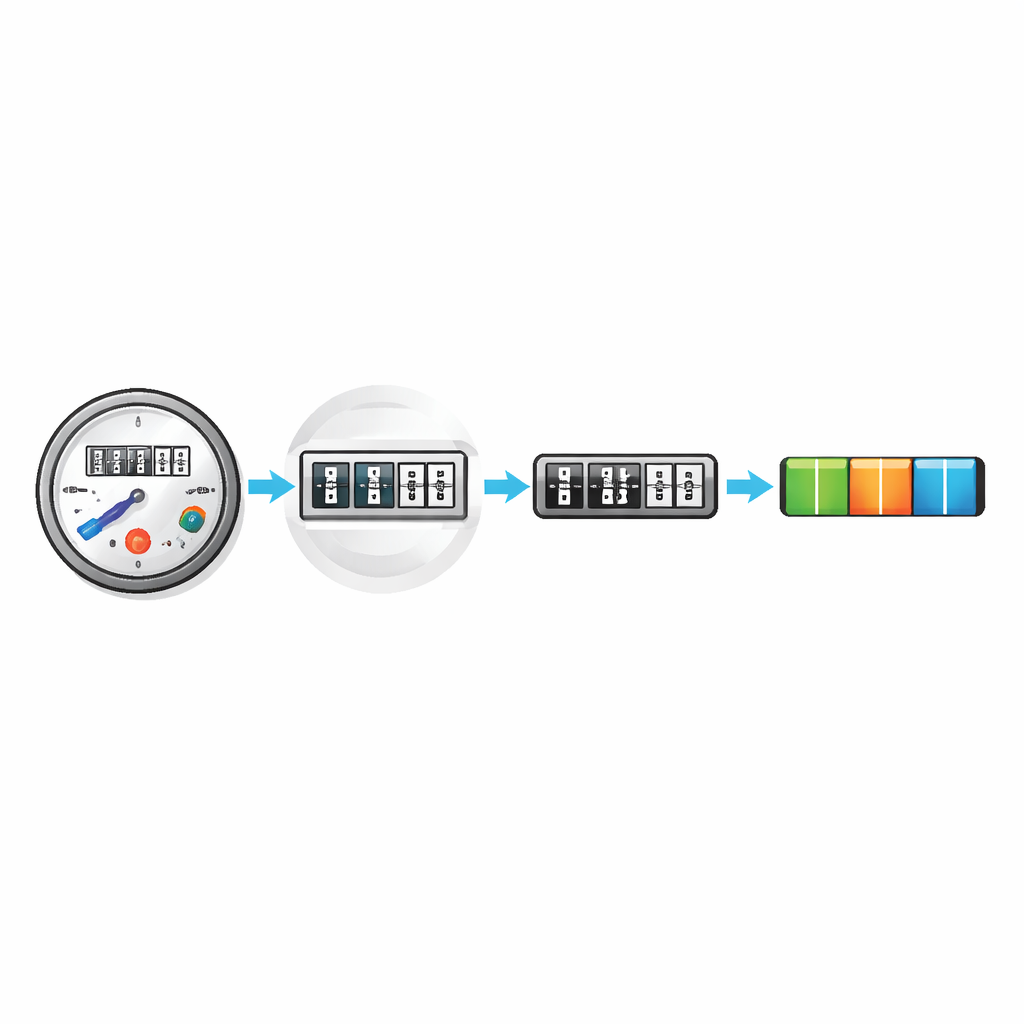

From locating the dial to reading the wheels

Automatic reading involves two linked tasks: first, finding the small window that shows the rotating number wheels, and second, recognizing the digits themselves. For the first step, the dataset provides full images plus masks that outline the reading area, so models can learn to detect and segment that region. For the second step, the authors crop these regions and transform them into straight, rectangular slices where the digit wheels line up neatly. They then supply the correct five- or six-digit reading for each slice, along with extra flags that describe tricky cases such as reversed strips, partially turned wheels that show "half" digits, and six-digit meters. This structure lets researchers train and test systems that mimic the real workflow of a utility: find the dial, straighten it, then read the numbers.
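The "find the dial, then straighten it" step can be sketched in a much-simplified form. The example below assumes the image and mask are plain nested lists of equal size, uses an axis-aligned bounding box in place of the geometric straightening the authors describe, and treats a "reversed" strip as one that is upside down and needs a 180-degree turn. The function names and the toy data are hypothetical.

```python
def bounding_box(mask):
    """Return (top, bottom, left, right) of the nonzero mask area.

    Bottom and right are exclusive, so they can be used directly as slices.
    """
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        raise ValueError("mask is empty")
    width = len(mask[0])
    cols = [c for c in range(width) if any(row[c] for row in mask)]
    return rows[0], rows[-1] + 1, cols[0], cols[-1] + 1


def crop_strip(image, mask, reversed_strip=False):
    """Cut the reading window out of the image using its mask.

    Assumption: a simple rectangular crop stands in for the real
    straightening step. If reversed_strip is set, rotate the crop 180 degrees.
    """
    top, bottom, left, right = bounding_box(mask)
    strip = [row[left:right] for row in image[top:bottom]]
    if reversed_strip:
        strip = [row[::-1] for row in strip[::-1]]
    return strip


# Toy 4x5 "image" whose nonzero pixels sit inside the masked window.
image = [
    [0, 0, 0, 0, 0],
    [0, 1, 2, 3, 0],
    [0, 4, 5, 6, 0],
    [0, 0, 0, 0, 0],
]
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]

strip = crop_strip(image, mask)
flipped = crop_strip(image, mask, reversed_strip=True)
```

A real pipeline would also need to correct tilt and perspective for off-angle photos, which a rectangular crop cannot do.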

Testing how well computers can learn

To show that the dataset is useful, the authors ran several well-known image segmentation and recognition models on it. For locating the reading area, four different segmentation approaches all quickly reached high accuracy, correctly capturing nearly all of the meter window in most test images. By grouping results according to the scenario tags, such as dark or reflective, they could see which conditions hurt performance the most and by how much. Dark scenes, for instance, caused noticeably more errors. For reading the digits, they compared classic and more advanced deep-learning models. Simpler networks ran fast but made more mistakes, while deeper designs such as ResNet and DenseNet recognized almost all readings correctly, especially when a reading that is off by just one digit in tough cases is still counted as correct.
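The per-condition comparison and the "off by one digit" allowance can be illustrated with a small scoring sketch. It assumes that readings are digit strings and that the tolerance means at most one digit position may differ; the paper's exact definition may be different. The sample predictions below are invented for illustration and are not results from the dataset.

```python
from collections import defaultdict


def digit_errors(pred, truth):
    """Count digit positions where the prediction differs from the truth.

    A length mismatch is treated as every digit being wrong.
    """
    if len(pred) != len(truth):
        return max(len(pred), len(truth))
    return sum(p != t for p, t in zip(pred, truth))


def accuracy_by_tag(samples, tolerance=0):
    """Share of correct readings per condition tag.

    samples: iterable of (predicted, true, tags) triples.
    tolerance: number of differing digit positions still counted as
    correct (an assumed reading of "off by just one digit").
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for pred, truth, tags in samples:
        ok = digit_errors(pred, truth) <= tolerance
        for tag in tags:
            totals[tag] += 1
            hits[tag] += ok
    return {tag: hits[tag] / totals[tag] for tag in totals}


# Invented examples, not taken from the dataset.
samples = [
    ("00123", "00123", {"clear"}),
    ("00457", "00456", {"dark", "blurry"}),
    ("01999", "02000", {"dark"}),
    ("123456", "123456", {"clear", "six_digit"}),
]

strict = accuracy_by_tag(samples)
lenient = accuracy_by_tag(samples, tolerance=1)
```

The third sample shows why the definition matters: 01999 and 02000 differ by only one unit numerically, yet four digit positions disagree, so a position-based tolerance still counts it as wrong.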

What this means for everyday water use

In plain terms, this work introduces not a new gadget or app but a shared "training ground" that others can use to build and compare automated reading systems for old-style water meters. Because the images capture the real-world messiness of dirt, blur, darkness, and glare, models that perform well on this dataset are more likely to work reliably in the field. That, in turn, could help utilities move to more efficient, less error-prone, and less labor-intensive water monitoring without immediately replacing millions of existing meters, making smarter water management more affordable and widely accessible.

Citation: Zhao, S., Gao, Y., Liu, F. et al. A Comprehensive Dataset for Word-Wheel Water Meter Reading Under Challenging Conditions. Sci Data 13, 479 (2026). https://doi.org/10.1038/s41597-026-06809-z

Keywords: water meters, computer vision, smart cities, image recognition, dataset