Clear Sky Science

AI-enabled flexible electronic systems via near-sensor and in-sensor computing

Smarter Skin for Our Gadgets and Ourselves

Imagine a bandage-like patch that not only feels your heartbeat, motion, and temperature, but also thinks about that data on the spot and reacts instantly—without needing a phone, cloud, or bulky computer. This review article explores how scientists are bringing artificial intelligence (AI) directly into soft, bendable electronics. These "AI-enabled flexible electronic systems" promise wearable health patches, robot skin, and smart aircraft surfaces that sense, decide, and act almost as seamlessly as human skin and nerves.

From Simple Sensing to Thinking Surfaces

Traditional sensors are like simple microphones: they collect signals but rely on a distant computer to interpret them. As AI and the Internet of Things have spread, this old model has run into problems—too much data to transmit, slow responses, high power use, and privacy risks when sensitive information leaves the body or machine. Flexible electronics add a twist: sensors are made from soft materials or clever geometries so they can wrap around skin, joints, or aircraft wings. The article explains that next-generation systems follow a "sense-think-act" loop on a flexible platform: soft sensors pick up signals, a compact intelligence unit close by interprets them, and flexible actuators or devices respond, forming a fast, closed feedback loop.

Thinking Near the Sensing Point

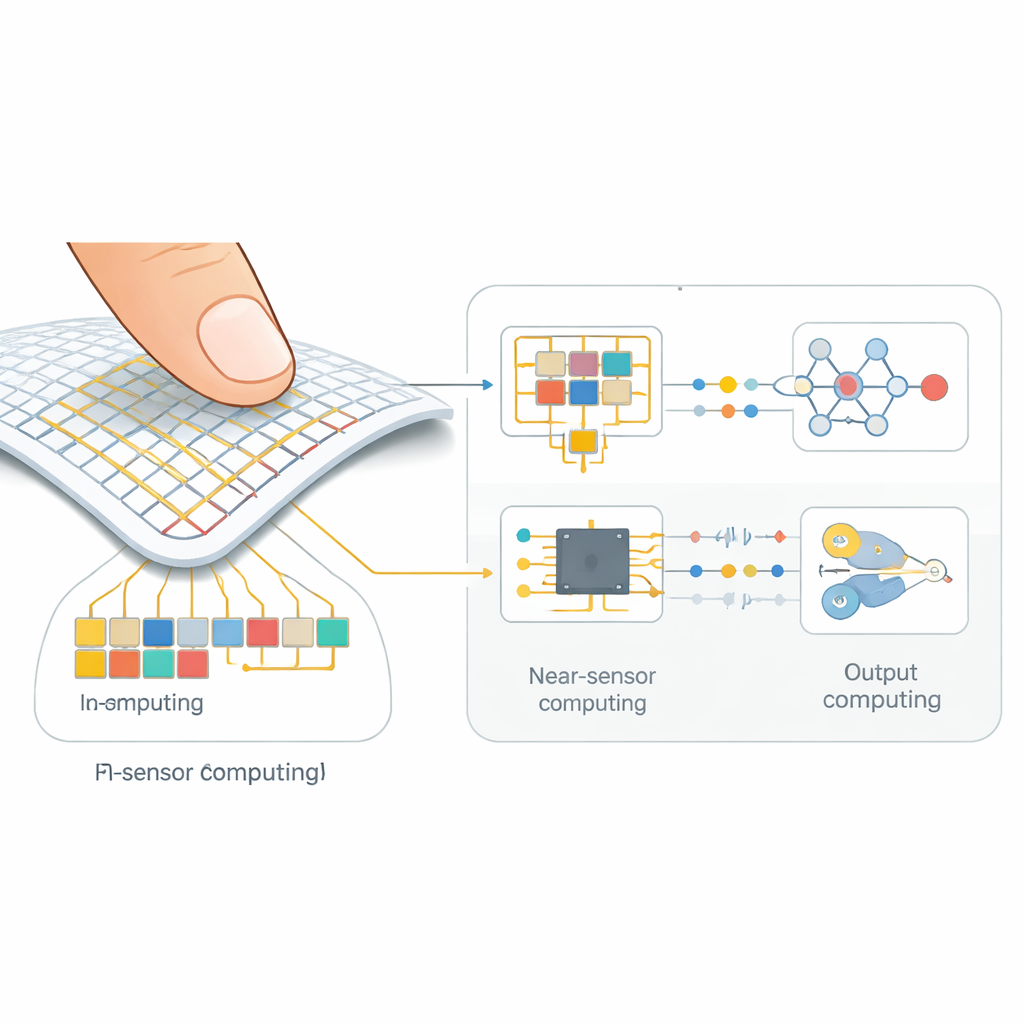

One major pathway is called near-sensor computing. Here, data still pass through basic circuits that convert analog signals into digital form, but the main processing happens on tiny chips located right beside the sensor array rather than in a remote computer. Microcontrollers, neural-network accelerators, and other processors run streamlined algorithms, ranging from simple filters to compact neural networks and "hyperdimensional" schemes that represent information as very long strings of bits. This reduces the amount of raw data that must be transmitted and enables real-time behavior. The review describes practical examples: wearable heart and brain monitors that clean and interpret signals on the device, gesture-recognizing armbands that decode muscle activity, and electronic skins that let robots identify objects by touch or adjust their grip automatically.
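
To make the "hyperdimensional" idea more concrete, here is a minimal Python sketch. It is not taken from the review. It assumes binary hypervectors of 2,048 bits, majority-vote bundling, and Hamming-distance matching, which is one common way such schemes are built. The gesture labels, noise levels, and every function name are hypothetical choices made for illustration.

```python
import random

DIM = 2048  # hypervector length in bits (illustrative assumption)

def random_hv(rng):
    """Return a random binary hypervector as a list of 0/1 bits."""
    return [rng.getrandbits(1) for _ in range(DIM)]

def bundle(hvs):
    """Combine hypervectors by per-bit majority vote (ties go to 0)."""
    half = len(hvs) / 2
    return [1 if sum(bits) > half else 0 for bits in zip(*hvs)]

def hamming(a, b):
    """Count the bit positions where two hypervectors differ."""
    return sum(x != y for x, y in zip(a, b))

def noisy(hv, flip_fraction, rng):
    """Flip a fixed fraction of randomly chosen bits to imitate sensor noise."""
    out = list(hv)
    for i in rng.sample(range(DIM), int(flip_fraction * DIM)):
        out[i] ^= 1
    return out

def classify(query, prototypes):
    """Return the label whose prototype is closest in Hamming distance."""
    return min(prototypes, key=lambda label: hamming(query, prototypes[label]))

rng = random.Random(0)

# Hypothetical "true" patterns for two gestures.
base = {"fist": random_hv(rng), "open_hand": random_hv(rng)}

# Training: bundle five noisy recordings of each gesture into one prototype.
prototypes = {
    label: bundle([noisy(hv, 0.10, rng) for _ in range(5)])
    for label, hv in base.items()
}

# Inference: a new, noisier recording of the "fist" gesture.
query = noisy(base["fist"], 0.20, rng)
predicted = classify(query, prototypes)
print(predicted)
```

Every step here is a bit flip, a count, or a comparison, which is why this style of computing is considered a good fit for small processors sitting beside a sensor array.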

When the Sensor Itself Starts to Compute

The second pathway, in-sensor computing, goes further by merging sensing, memory, and computation into the same physical structure. Instead of acting like a camera that sends every pixel to a computer, an in-sensor device might detect, compress, and partially interpret a scene before any data leave the array. Researchers achieve this by integrating flexible transistors and new kinds of memory directly with sensing materials. Some devices mimic brain-like connections, where the electrical pathways themselves strengthen or weaken based on past activity, storing "experience" in the hardware. Others use light-sensitive or pressure-sensitive layers whose response can be tuned and reused, allowing them to both feel and remember. These designs dramatically reduce power use and delay, which is crucial for implanted devices, artificial skin, and other always-on systems.
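
The idea of a sensing element that strengthens or weakens with past activity can be illustrated with a toy software model. The Python sketch below is an assumption-laden simplification, not a model of any device in the review: the class name `AdaptiveTouchPixel`, the `gain` and `forget` parameters, and the update rule are all hypothetical.

```python
class AdaptiveTouchPixel:
    """Toy sensing element whose sensitivity depends on its stimulation history."""

    def __init__(self, gain=0.3, forget=0.05):
        self.weight = 0.0      # stored "experience", stays between 0 and 1
        self.gain = gain       # how strongly each stimulus reinforces the weight
        self.forget = forget   # fraction of the weight lost at every time step

    def step(self, stimulus):
        """Take a stimulus in [0, 1], return the response, then update the weight."""
        response = stimulus * (1.0 + self.weight)
        self.weight += self.gain * stimulus * (1.0 - self.weight)  # strengthen
        self.weight *= 1.0 - self.forget                           # slowly fade
        return response

pixel = AdaptiveTouchPixel()

# Ten identical touches: the response grows as the element "learns".
responses = [pixel.step(1.0) for _ in range(10)]
trained_weight = pixel.weight

# Fifty quiet time steps: the stored weight fades again.
for _ in range(50):
    pixel.step(0.0)
rested_weight = pixel.weight

print(round(responses[0], 3), round(responses[-1], 3))
print(round(trained_weight, 3), round(rested_weight, 3))
```

In this sketch the same object both senses (it produces a response) and remembers (it keeps a weight), so no separate memory or processor is involved. Real in-sensor devices achieve a comparable effect through material properties rather than software.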

New Brains and Nerves for Soft Electronics

To make these soft systems truly intelligent, the hardware is paired with tailored AI models. Classic neural networks are slimmed down to run on tiny processors using tricks like compression and low-precision arithmetic. Spiking neural networks, inspired by the brief electrical pulses that neurons use to communicate, promise ultra-low power operation, while hyperdimensional computing trades heavy math for simple bit operations that are easy to implement in hardware. The review compares these approaches in terms of speed, energy, and complexity, and maps them onto real uses: health monitors that adapt to changing signal quality, brain–computer interfaces that decode intent from scalp patches, and smart skins for aircraft that read airflow and detect damage in flight. Together, they form a toolbox for matching the right algorithm to each flexible platform.
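
As an illustration of what "low-precision arithmetic" can mean in practice, the Python sketch below applies symmetric 8-bit quantization to a handful of made-up network weights. The summary does not say which scheme the reviewed systems use, so this particular method, the example values, and the function names are assumptions.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor.

    Assumes at least one weight is non-zero, otherwise the scale would be 0.
    """
    scale = max(abs(w) for w in weights) / 127
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]

weights = [0.82, -0.41, 0.05, -1.27, 0.33]  # made-up example weights

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case rounding error introduced by storing 8-bit integers.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q)
print(max_error <= scale / 2 + 1e-12)
```

Each weight now fits in one byte instead of the four or eight bytes a floating-point number typically needs, at the cost of a rounding error of at most half the scale factor. That trade is what lets a slimmed-down network fit on a tiny processor.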

Hurdles on the Road to Everyday Smart Skins

Despite rapid progress, many obstacles remain before AI-rich flexible electronics become commonplace. On the hardware side, engineers must combine soft sensors, processors, power sources, and wireless links without sacrificing comfort, durability, or accuracy. Materials need to be safe on or in the body and keep working under sweat, motion, and long-term use. On the software side, AI models must be lighter, more energy-efficient, and able to learn or adapt on the device with limited memory and data. The authors argue that the future will blend three levels of computing: traditional cloud and edge computers for heavy analysis, near-sensor processing for fast local decisions, and in-sensor logic for instant, low-energy reflexes—much like the relationship between our brain, spinal cord, and skin.

Everyday Devices That Feel and Respond Like Skin

In plain terms, this article shows how bringing AI right up to—and even inside—flexible sensors can turn passive patches into active, learning devices. By cutting data traffic, saving energy, and protecting privacy, near-sensor and in-sensor computing open the door to medical patches that quietly track health and deliver therapy, soft robot skins that sense and react to touch, and aircraft surfaces that "feel" the air and adjust themselves in real time. The conclusion is that intelligent, flexible electronics will increasingly blur the line between sensing and thinking, making our technology more like a living, responsive skin than a rigid box of parts.

Citation: Xu, Z., Xie, E., Hou, C. et al. AI-enabled flexible electronic systems via near-sensor and in-sensor computing. npj Flex Electron 10, 52 (2026). https://doi.org/10.1038/s41528-026-00544-6

Keywords: flexible electronics, in-sensor computing, wearable health monitoring, electronic skin, neuromorphic hardware