Clear Sky Science

Complementary visual localization and tactile mapping approach for robotic perception of millimeter-sized objects with irregular surfaces

Robots That Can See and Feel

In many dangerous places—from space stations to nuclear accident sites—humans rely on robots to handle tiny switches, pills, screws, and buttons. But ordinary robot “eyes” often fail when lighting is poor or objects are very small and bumpy. This paper introduces a robot sensing system that combines sight and touch, inspired by the way people first look at an object and then explore it with their fingertips.

Why Vision Alone Is Not Enough

Most modern robots depend on cameras and depth sensors to recognize objects and decide how to move. These visual tools work well in clean, well-lit factories, but struggle when the scene is dark, crowded, or partly hidden. The authors show that even powerful camera systems can lose track of small items or miss fine surface details, especially under low light or glare. In such cases, a robot might know roughly where something is, but not whether it has tiny bumps, recesses, or irregular edges that are crucial for gripping or pressing precisely.

Building a Finger That Can Feel Tiny Details

To tackle this problem, the researchers built a soft, skin-like touch sensor that behaves more like a human fingertip. Using inkjet printing, they deposited flexible metal traces onto a stretchy rubber-like material, forming a grid of pressure-sensitive pixels. Between the metal layers sits a textured film made using ordinary sandpaper, giving the sensor a fine, irregular structure that boosts its sensitivity. When the sensor is pressed against an object, its electrical signal changes with pressure. This lets it detect very light touches, on the order of a small grain of rice resting on it, and survive thousands of press-and-release cycles without losing performance.

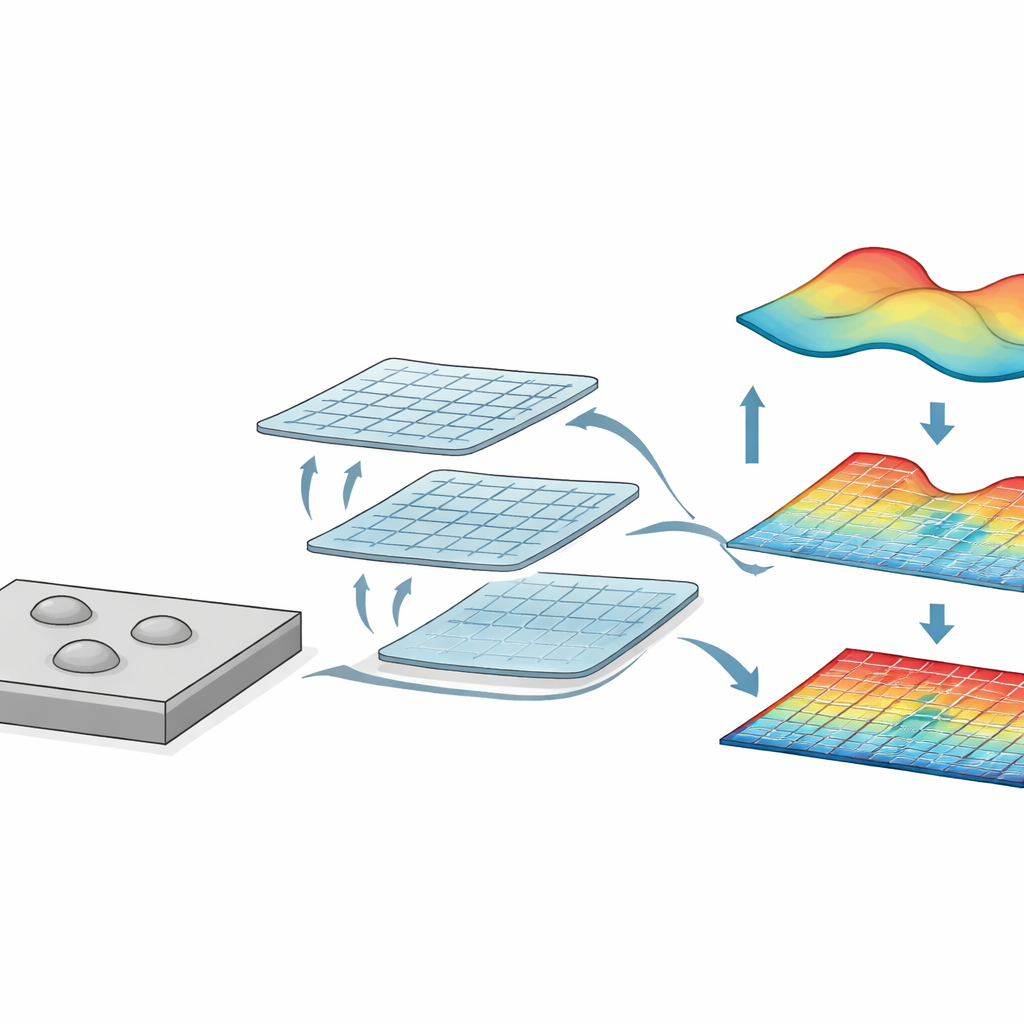

Turning Touch into Shape Maps

The soft sensor was then expanded into a small array that can capture patterns of pressure over an area, much like a low-resolution image. When the team pressed ring-shaped or other complex objects onto the sensor, the resulting pressure maps clearly revealed their outlines and hollow regions, showing that the sensor can “see” shapes through touch. Computer simulations confirmed that the soft material concentrates stress locally, similar to human skin, which helps it pick up fine differences in height and texture on millimeter-sized features such as tiny bumps or protrusions on a surface.
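To make the idea of a pressure map revealing outlines and hollow regions concrete, here is a minimal sketch in Python. It is not the authors' processing pipeline: the grid size, the readings, the threshold, and the function names `contact_mask` and `enclosed_holes` are all assumptions chosen for illustration. It marks which pixels are in contact, then finds untouched pixels that are fully surrounded by contact, as the center of a ring would be.

```python
from collections import deque


def contact_mask(pressure, threshold):
    """Mark each sensor pixel True where its reading exceeds the threshold."""
    return [[value > threshold for value in row] for row in pressure]


def enclosed_holes(mask):
    """Return non-contact cells that cannot be reached from the grid border."""
    rows, cols = len(mask), len(mask[0])
    outside = set()
    queue = deque()
    # Seed the flood fill with every non-contact cell on the border.
    for r in range(rows):
        for c in range(cols):
            on_border = r in (0, rows - 1) or c in (0, cols - 1)
            if on_border and not mask[r][c]:
                outside.add((r, c))
                queue.append((r, c))
    # Spread through 4-connected non-contact neighbors.
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside_grid = 0 <= nr < rows and 0 <= nc < cols
            if inside_grid and not mask[nr][nc] and (nr, nc) not in outside:
                outside.add((nr, nc))
                queue.append((nr, nc))
    # Whatever is untouched and not "outside" is a hollow region.
    return {
        (r, c)
        for r in range(rows)
        for c in range(cols)
        if not mask[r][c] and (r, c) not in outside
    }


# Hypothetical 5x5 reading (arbitrary units) for a ring pressed on the array.
ring = [
    [0, 0, 0, 0, 0],
    [0, 9, 8, 9, 0],
    [0, 8, 1, 8, 0],
    [0, 9, 8, 9, 0],
    [0, 0, 0, 0, 0],
]
mask = contact_mask(ring, threshold=5)
holes = enclosed_holes(mask)
```

With these made-up readings, the eight pixels under the ring are marked as contact and the single center pixel is reported as a hollow region. A real array would also need calibration and noise handling, which this sketch leaves out.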

Letting Vision and Touch Work Together

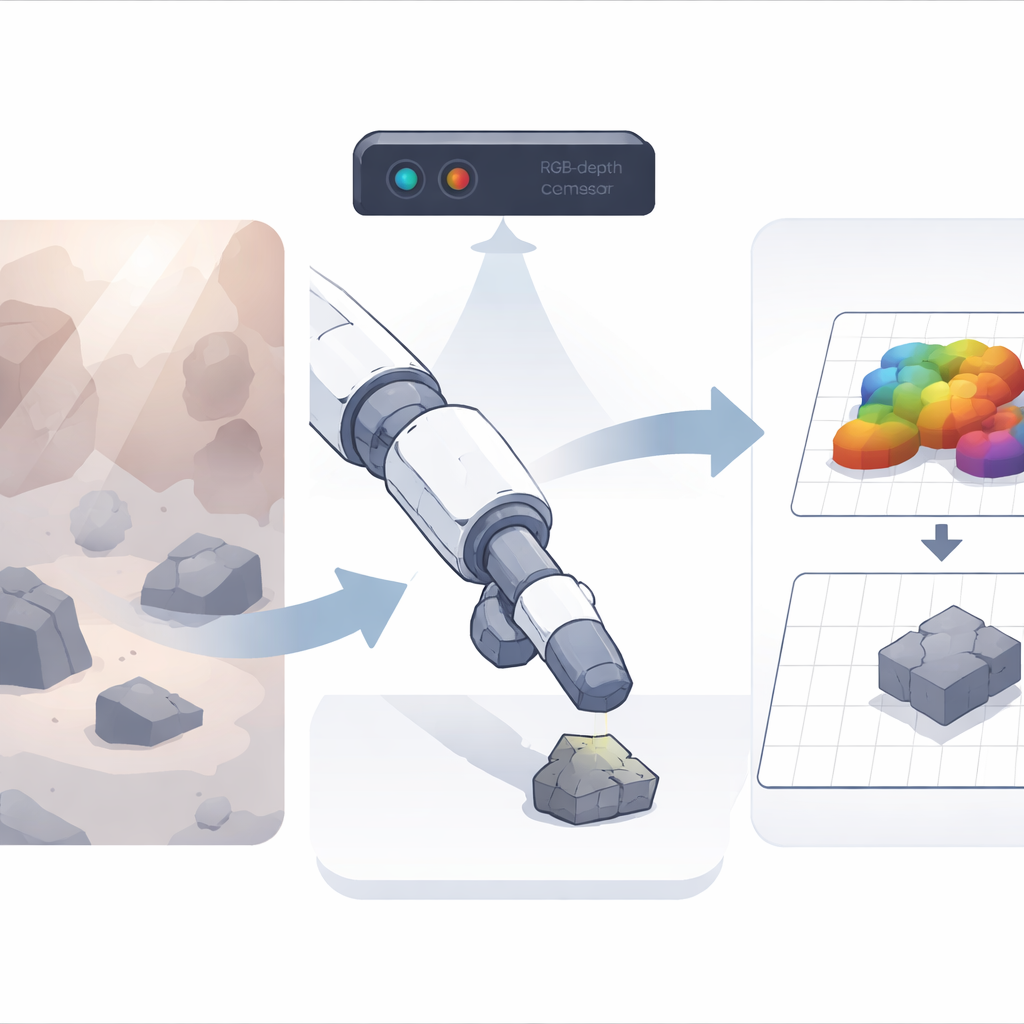

The complete system uses an RGB-depth camera to find where an object is in space and a soft tactile pad to explore its surface. First, the camera estimates the object’s position and overall shape from a distance, much like a person glancing at a table before reaching out. When visual information becomes unreliable because of shadows, glare, or focus problems, the robot brings its tactile sensor into contact with the object. By scanning the pad over different parts of the surface and stitching together the pressure data, the system reconstructs a three-dimensional profile of features just a few millimeters across, such as the raised domes of pills in a blister pack or small bumps on a control panel.
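The two steps in this section, falling back to touch when vision is unreliable and stitching several tactile readings into one map, can be sketched roughly as follows. This is an illustration under stated assumptions, not the method from the paper: the confidence score, the 0.6 cutoff, the patch offsets, and the names `pick_modality` and `stitch_patches` are hypothetical, and overlapping readings are simply averaged. Converting pressure into height is not covered here.

```python
def pick_modality(visual_confidence, min_confidence=0.6):
    """Use vision when its confidence is high enough, otherwise fall back to touch.

    The confidence score and the 0.6 cutoff are assumed for illustration.
    """
    return "vision" if visual_confidence >= min_confidence else "touch"


def stitch_patches(patches, rows, cols):
    """Merge tactile patches into one map, averaging cells that overlap.

    Each patch is (row_offset, col_offset, grid). Cells that no patch
    covered are left as None.
    """
    total = [[0.0] * cols for _ in range(rows)]
    count = [[0] * cols for _ in range(rows)]
    for row_off, col_off, grid in patches:
        for r, line in enumerate(grid):
            for c, value in enumerate(line):
                total[row_off + r][col_off + c] += value
                count[row_off + r][col_off + c] += 1
    return [
        [total[r][c] / count[r][c] if count[r][c] else None for c in range(cols)]
        for r in range(rows)
    ]


# Two hypothetical 2x2 pad readings that overlap by one column.
patches = [
    (0, 0, [[1.0, 2.0], [1.0, 2.0]]),
    (0, 1, [[4.0, 6.0], [4.0, 6.0]]),
]
stitched = stitch_patches(patches, rows=2, cols=3)
mode_in_glare = pick_modality(visual_confidence=0.3)
```

Here the shared middle column ends up as the average of the two readings, and a low visual confidence selects touch. In practice the patch offsets would come from the robot's known pad positions, which this sketch takes as given.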

What This Means for Future Robots

By fusing camera-based localization with detailed touch-based mapping, this work shows how robots can handle tiny, irregular objects even when they cannot fully rely on their “eyes.” The study demonstrates that a simple, low-cost printed sensor can both back up and, when necessary, stand in for vision. This lays the groundwork for future robots that adapt to changing conditions, combining sight and touch the way humans do to carry out precise tasks in messy, unpredictable, or hazardous environments.

Citation: Jang, J., Park, BS., Oh, K.T. et al. Complementary visual localization and tactile mapping approach for robotic perception of millimeter-sized objects with irregular surfaces. Microsyst Nanoeng 12, 91 (2026). https://doi.org/10.1038/s41378-026-01190-8

Keywords: humanoid robots, tactile sensing, multimodal perception, micromanipulation, RGB-depth vision