Clear Sky Science

Single-shot, reference-less computational wavefront sensing for complex optical fields

Seeing the Shape of Light in a Single Glance

Every beam of light carries a hidden landscape: tiny hills and valleys in its wavefront that reveal how it has travelled, what it has passed through, and what it has touched. Measuring this landscape is crucial for everything from sharpening telescope images of distant galaxies to peering deep into living tissue. This paper introduces a new way to read that hidden map from a single snapshot, using a compact sensor and smart computation to decode even extraordinarily tangled light fields that defeat most existing instruments.

Why Measuring Light’s Shape Matters

Light does much more than simply brighten a scene. Its detailed structure encodes information about lenses in a microscope, turbulence in the atmosphere, imperfections in a manufactured surface, or even the inner arrangement of biological cells. To recover that information, researchers need to know both the brightness and the precise shape of the light wavefront. Traditional tools, such as interferometers or Shack–Hartmann sensors, can do this, but often at a cost: they may require a separate reference beam, multiple exposures, or bulky optics, and they struggle when the wavefront becomes highly distorted, full of sharp twists, breaks, and swirling singularities. As modern applications demand higher resolution and more complex beams, these older methods run into fundamental limits.

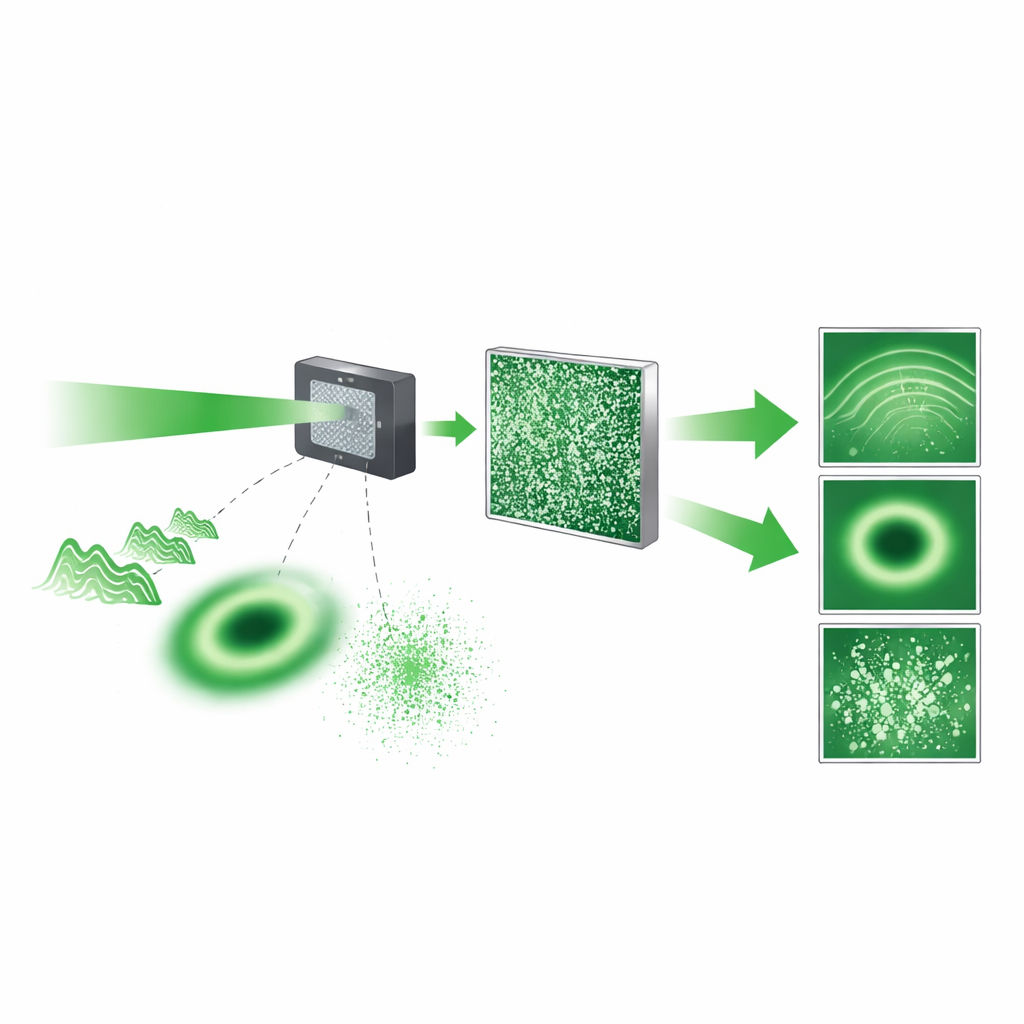

A Compact Sensor That Scrambles to Understand

The authors combine a bare image chip with a thin patterned plate called a diffuser to build an unusually simple wavefront sensor. Instead of forming a clear image, the diffuser deliberately scrambles the incoming light into a grainy speckle pattern on the detector. While this pattern looks random, it is in fact a precise fingerprint of the incoming wavefront: its brightness and fine structure are determined by how the original light field interacts with the known pattern of the diffuser and then propagates through space. Because the detector captures this scrambled pattern in one exposure and no separate reference beam is needed, the hardware is compact and mechanically straightforward, resembling a slightly thickened image sensor.
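
The scrambling step described above can be imitated numerically. The following is only a minimal sketch under stated assumptions, not the authors' model: it treats the diffuser as a thin phase-only mask, propagates the light with the standard angular spectrum method, and uses hypothetical values for grid size, pixel pitch, wavelength, and diffuser-to-detector distance. The function names `angular_spectrum_transfer` and `speckle_intensity` are invented for illustration.

```python
import numpy as np

def angular_spectrum_transfer(n, pixel, wavelength, distance):
    """Free-space transfer function on an n x n grid (angular spectrum method)."""
    f = np.fft.fftfreq(n, d=pixel)                  # spatial frequencies, cycles per metre
    fx, fy = np.meshgrid(f, f, indexing="ij")
    arg = 1.0 - (wavelength * fx) ** 2 - (wavelength * fy) ** 2
    # Negative values (evanescent waves) are clamped to zero; with the pixel
    # pitch assumed below (larger than wavelength / 2) none occur.
    kz = 2.0 * np.pi / wavelength * np.sqrt(np.maximum(arg, 0.0))
    return np.exp(1j * kz * distance)

def speckle_intensity(field, diffuser_phase, transfer):
    """Intensity at the detector after the diffuser and a short propagation."""
    scrambled = field * np.exp(1j * diffuser_phase)             # assumed thin phase-only diffuser
    at_detector = np.fft.ifft2(np.fft.fft2(scrambled) * transfer)
    return np.abs(at_detector) ** 2                             # the chip records intensity only

# Hypothetical parameters, chosen only for illustration.
rng = np.random.default_rng(0)
n, pixel, wavelength, distance = 128, 5e-6, 0.5e-6, 1e-3
diffuser_phase = rng.uniform(0.0, 2.0 * np.pi, size=(n, n))    # stands in for the known diffuser pattern
coords = (np.arange(n) - n / 2) * pixel
xx, yy = np.meshgrid(coords, coords, indexing="ij")
field = np.exp(1j * 2e7 * (xx ** 2 + yy ** 2))                 # gently curved test wavefront
transfer = angular_spectrum_transfer(n, pixel, wavelength, distance)
speckle = speckle_intensity(field, diffuser_phase, transfer)
```

In this toy model the incoming phase never appears directly in `speckle`; it only shapes the grain of the pattern, which is why a reconstruction step is needed to get it back.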

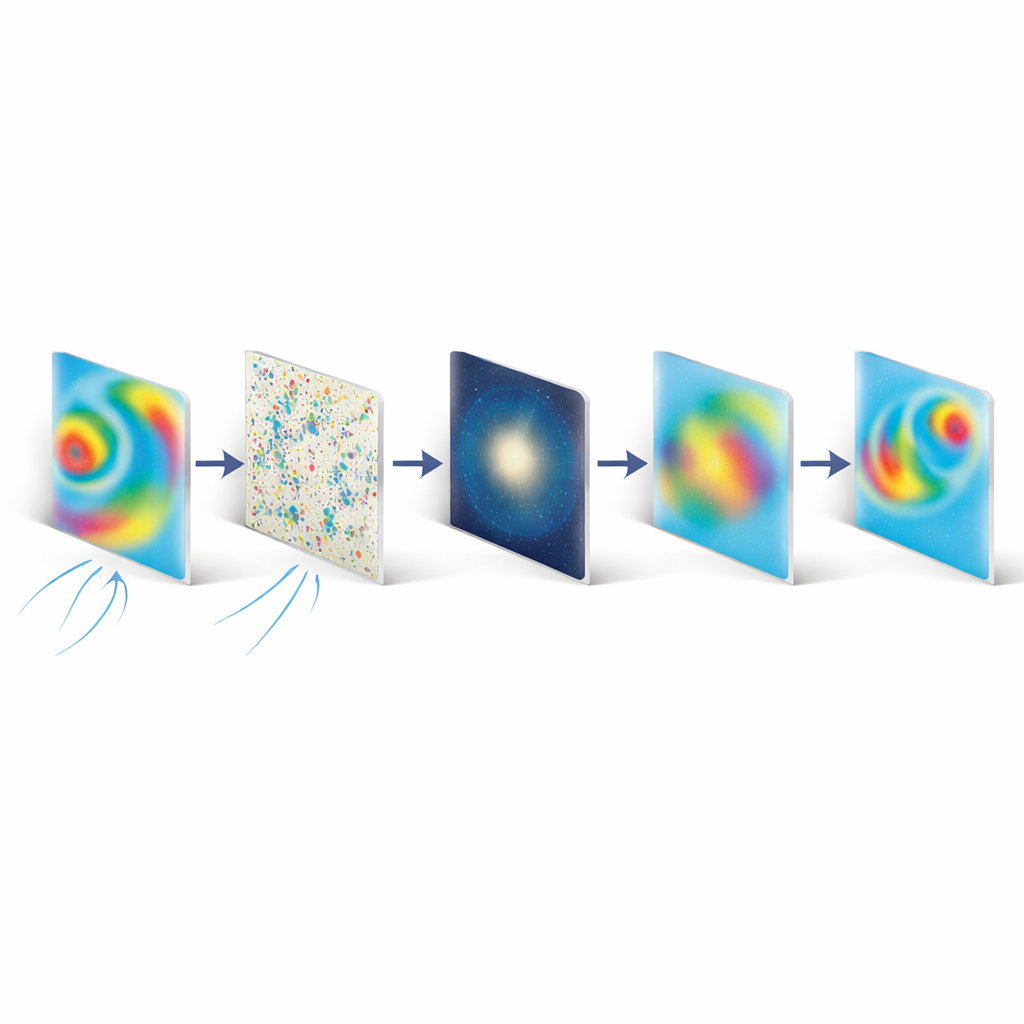

SAFARI: Letting Physics Guide the Reconstruction

Turning that single speckle pattern back into the full complex wavefront is a mathematically difficult task known as phase retrieval. The core advance of this work is a computational strategy called SAFARI (Spatial And Fourier-domain Regularized Inversion). SAFARI takes in the captured speckle pattern and a physical model of how the diffuser and free-space propagation transform light. It then searches for the wavefront that best explains the measurement, while enforcing two simple but powerful expectations: that the wavefront is relatively smooth in space and that most of its energy lies in low spatial frequencies when viewed in the Fourier (frequency) domain. These expectations are built into the algorithm as soft and hard filters, which stabilize the reconstruction and make a notoriously ill-posed problem reliably solvable from a single frame.
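
To make the idea of "soft and hard filters" concrete, here is a toy reconstruction loop. It is not the SAFARI algorithm as published; it is a generic amplitude-fitting iteration, assuming a phase-only diffuser and lossless propagation, in which each update is followed by a hard low-pass cutoff and a soft damping of higher spatial frequencies. All names (`build_model`, `reconstruct`, `cutoff`, `mu`) and all numerical values are hypothetical.

```python
import numpy as np

def build_model(n, pixel, wavelength, distance, diffuser_phase):
    """Forward model (diffuser, then propagation) and its adjoint."""
    f = np.fft.fftfreq(n, d=pixel)
    fx, fy = np.meshgrid(f, f, indexing="ij")
    k2 = fx ** 2 + fy ** 2
    arg = np.maximum(1.0 - wavelength ** 2 * k2, 0.0)
    transfer = np.exp(2j * np.pi / wavelength * distance * np.sqrt(arg))
    mask = np.exp(1j * diffuser_phase)

    def forward(x):
        return np.fft.ifft2(np.fft.fft2(mask * x) * transfer)

    def adjoint(u):
        return np.conj(mask) * np.fft.ifft2(np.fft.fft2(u) * np.conj(transfer))

    return forward, adjoint, k2

def reconstruct(amplitude, forward, adjoint, k2, cutoff, mu=0.1, iters=100):
    """Fit the measured amplitude while filtering the estimate in Fourier space."""
    weight = k2 / cutoff ** 2
    hard = (k2 <= cutoff ** 2).astype(float)     # hard filter: drop frequencies beyond the cutoff
    soft = 1.0 / (1.0 + mu * weight)             # soft filter: damp higher frequencies
    x = np.full(amplitude.shape, amplitude.mean(), dtype=complex)
    history = []
    for _ in range(iters):
        u = forward(x)
        spectrum = np.fft.fft2(x, norm="ortho")
        misfit = 0.5 * np.sum((np.abs(u) - amplitude) ** 2)
        penalty = 0.5 * mu * np.sum(weight * np.abs(spectrum) ** 2)
        history.append(misfit + penalty)
        # Keep the current phase at the detector, impose the measured amplitude,
        # map back to the sensor input, then apply both filters.
        target = amplitude * np.exp(1j * np.angle(u))
        x = np.fft.ifft2(np.fft.fft2(adjoint(target)) * hard * soft)
    return x, history

# Hypothetical test case: a band-limited random field behind a random phase diffuser.
rng = np.random.default_rng(1)
n, pixel, wavelength, distance, cutoff = 64, 5e-6, 0.5e-6, 1e-3, 2e4
forward, adjoint, k2 = build_model(
    n, pixel, wavelength, distance, rng.uniform(0.0, 2.0 * np.pi, size=(n, n)))
noise = rng.normal(size=(n, n)) + 1j * rng.normal(size=(n, n))
truth = np.fft.ifft2(np.fft.fft2(noise) * (k2 <= cutoff ** 2))
amplitude = np.abs(forward(truth))               # square root of the recorded intensity
estimate, history = reconstruct(amplitude, forward, adjoint, k2, cutoff)
```

With these assumptions the recorded objective (data misfit plus smoothness penalty) does not increase from one iteration to the next, but the problem is non-convex, so the sketch does not guarantee that `estimate` matches `truth`. Any result is also defined only up to a constant phase offset.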

Pushing Into Extreme Optical Complexity

To test this approach, the team challenged their sensor with three demanding classes of light fields. First, they created synthetic optical distortions, similar to those caused by imperfect lenses or atmospheric turbulence, combining up to around 200 basic shape components. SAFARI recovered these distortions with high accuracy over a large range of strengths. Second, they generated “structured light” beams whose phases wind around in spirals or form intricate lattices: waves carrying high “topological charge” or arranged in families such as Laguerre–Gaussian and Bessel–Gaussian modes. The system could faithfully reconstruct beams with very high charge (up to 150) and even mixtures of more than 200 different modes at once. Finally, they measured dense speckle fields similar to those that arise when light scatters in fog or tissue or off rough surfaces. Here the sensor resolved on the order of 190,000 independent spatial modes, surpassing the capability of many specialized instruments by more than an order of magnitude.
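
For readers unfamiliar with the term, "topological charge" counts how many full turns the phase makes on one trip around the beam axis. The short sketch below illustrates that definition only; it is not taken from the paper. It builds an idealized phase-only vortex and recovers its charge by summing wrapped phase steps around a circle. The helper names `vortex_phase` and `measured_charge` are hypothetical.

```python
import numpy as np

def vortex_phase(n, charge):
    """Idealized vortex on an n x n grid: the phase winds `charge` times around the centre."""
    coords = np.linspace(-1.0, 1.0, n)
    x, y = np.meshgrid(coords, coords)           # x along columns, y along rows
    return np.exp(1j * charge * np.arctan2(y, x))

def measured_charge(field, radius=0.8, samples=4096):
    """Count phase windings along a counterclockwise circle around the grid centre."""
    n = field.shape[0]
    t = np.linspace(0.0, 2.0 * np.pi, samples, endpoint=False)
    col = np.rint((radius * np.cos(t) + 1.0) / 2.0 * (n - 1)).astype(int)
    row = np.rint((radius * np.sin(t) + 1.0) / 2.0 * (n - 1)).astype(int)
    ring = field[row, col]                       # nearest-pixel samples on the circle
    steps = np.angle(np.roll(ring, -1) * np.conj(ring))   # wrapped phase differences
    return int(np.rint(steps.sum() / (2.0 * np.pi)))

beam = vortex_phase(256, 5)
charge = measured_charge(beam)
```

The count is only reliable while the phase changes by less than half a turn between neighbouring pixels on the circle, so higher charges need finer grids. This gives a rough feel for why beams with charges in the hundreds are demanding to measure.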

From Lab Prototype to Future Imaging Tools

The authors show that their diffuser-based sensor and SAFARI algorithm together rival or exceed many state-of-the-art, task-specific wavefront sensors in resolution, accuracy, and range, all while remaining broadly applicable to very different kinds of optical fields. The main trade-off is computation: solving the inverse problem takes seconds on a modern laptop, which may be too slow for some real-time uses, but could be accelerated with optimized code or physics-aware machine learning. Even in its current form, this single-shot, reference-less method opens a path to simpler and more versatile instruments for beam diagnostics, high-resolution phase microscopy, imaging through scattering media, and the rapidly growing field of structured light, where the shape of the wave is as important as its brightness.

Citation: Gao, Y., Cao, L. & Tsai, D.P. Single-shot, reference-less computational wavefront sensing for complex optical fields. Light Sci Appl 15, 174 (2026). https://doi.org/10.1038/s41377-026-02241-5

Keywords: wavefront sensing, computational imaging, diffuser-based sensor, structured light, speckle fields