Clear Sky Science

Generating archaeological line drawings from limited reference images

Turning Ancient Art into Clear Line Images

Archaeologists rely on careful black-and-white line drawings of artifacts to communicate shape, decoration, and wear in a way that photographs often cannot. But producing these drawings by hand is slow, specialized work that doesn’t scale to the huge number of objects in museum collections and excavation reports. This paper presents a new artificial intelligence method that can turn ordinary photos of artifacts into expert-quality line drawings, even when only a small number of example drawings are available for training.

Why Simple Lines Matter for the Past

Line drawings are the quiet workhorses of archaeology. By stripping away distracting shadows, corrosion, and background clutter, they highlight an object’s outline, decorative patterns, and key manufacturing features that specialists use to classify and compare finds. However, creating them has traditionally required many hours of skilled illustration for each artifact, and different illustrators may use slightly different styles. As large-scale digitization of collections accelerates, this manual process has become a bottleneck: there are far more photographs of artifacts than there are experts who can turn them into consistent, publication-ready drawings.

Teaching an AI to Draw Like an Archaeologist

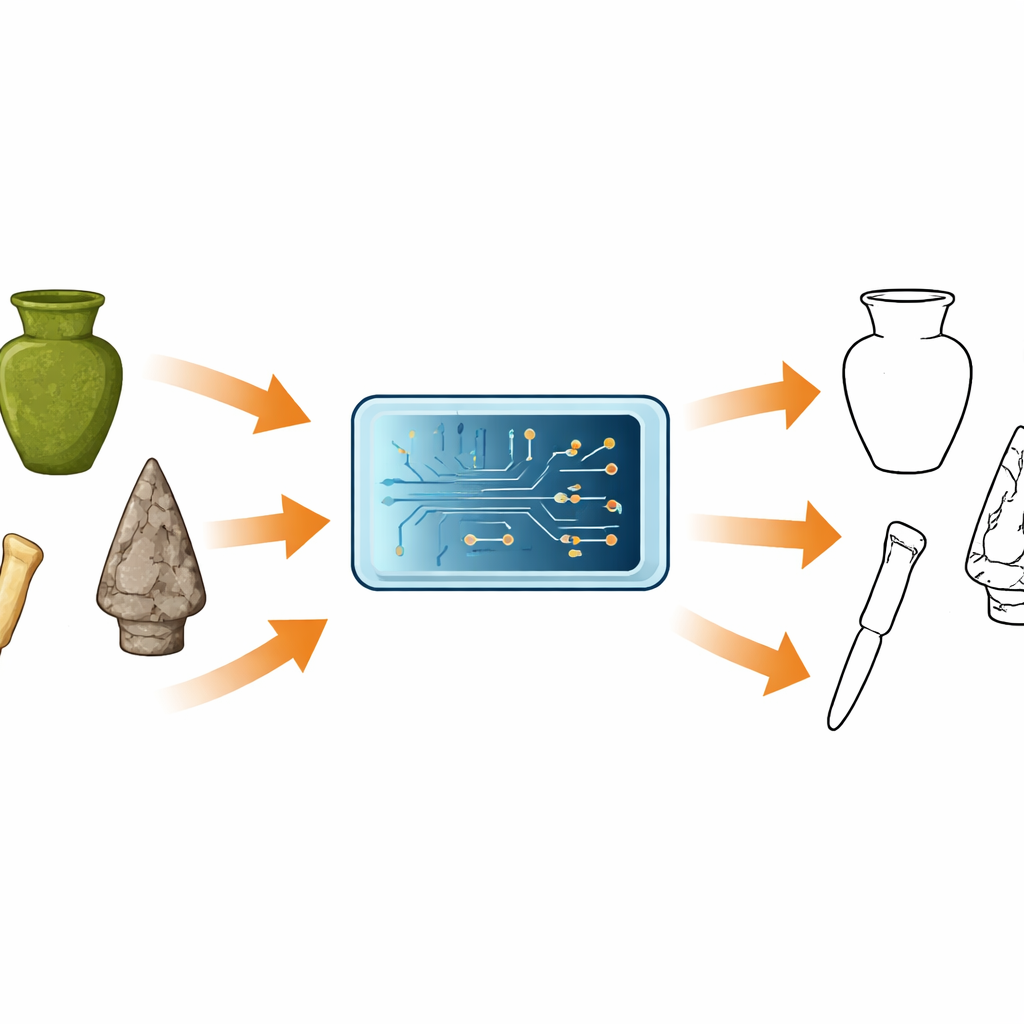

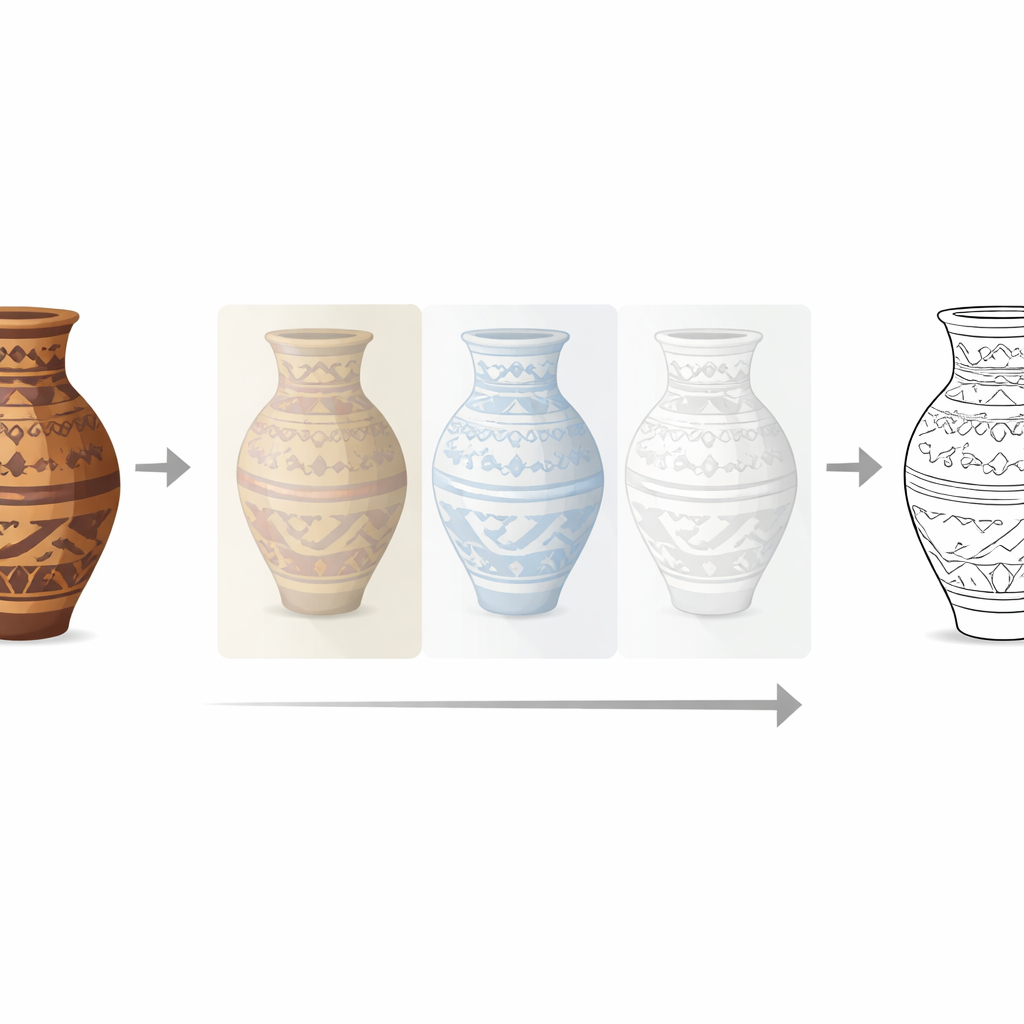

The authors adapt a modern “diffusion” image generator, originally designed to create pictures from text prompts, and retrain it to specialize in archaeological drawing. Their system takes two inputs: a photo of a single artifact and a short text description that encodes the desired drawing style. Inside the model, the photo is turned into a compact internal code and mixed with the style information, and the drawing is then built up step by step, starting from random noise and ending as a clean black-and-white outline. A lightweight add-on technique called LoRA (low-rank adaptation) lets the team fine-tune only a small portion of the model’s parameters, so it can learn the conventions of archaeological illustration without needing a huge dataset or massive computing power.
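The summary does not spell out how LoRA is configured in this work, but the general idea behind it can be sketched in a few lines of Python. In standard LoRA, a pretrained weight matrix W is frozen and only two small matrices, B and A, are trained; their product, scaled by alpha/r, is added to W. The helper names below and the 768-by-768, rank-8 example are illustrative assumptions, not values taken from the paper.

```python
"""Minimal sketch of a LoRA-style low-rank weight update (illustrative only)."""
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def lora_merge(w, a, b, alpha):
    """Return W + (alpha / r) * (B @ A) without modifying the frozen W.

    W is d x k, B is d x r and A is r x k; in LoRA only A and B are trained.
    """
    r = len(a)
    delta = matmul(b, a)
    scale = alpha / r
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


def param_counts(d, k, r):
    """Trainable parameters: full fine-tuning of W versus a rank-r LoRA update."""
    return d * k, r * (d + k)


if __name__ == "__main__":
    random.seed(0)
    d, k, r = 4, 3, 1
    w = [[random.gauss(0, 1) for _ in range(k)] for _ in range(d)]
    a = [[random.gauss(0, 1) for _ in range(k)] for _ in range(r)]
    b = [[0.0] * r for _ in range(d)]  # B starts at zero, so the merged weights equal W at first
    assert lora_merge(w, a, b, alpha=1.0) == w
    # Hypothetical layer size and rank, chosen only to show the scale of the savings.
    print(param_counts(768, 768, 8))
```

For a single 768-by-768 layer, full fine-tuning would touch 589,824 weights, while a rank-8 update trains 12,288, about 2% as many. That kind of saving is what makes training on a few dozen examples with modest hardware plausible.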

Doing More with Very Few Examples

Instead of thousands of training samples, the researchers work with just 30 carefully chosen image–drawing pairs for each of three artifact types: bronze vessels, stone tools, and bone tools. They align, clean, and resize each photograph and its matching line drawing, standardizing brightness and removing messy backgrounds. Despite this limited data, the adapted model learns to respect the strict structural rules archaeologists expect: continuous, unbroken outlines, accurate proportions, and ornament that is neither over-simplified nor drowned in surface noise. Tests show that even with as few as 10 training examples, the system already rivals or surpasses several state-of-the-art sketching methods developed for general images.
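The paper's exact preprocessing pipeline is not described in this summary, so the following is only a rough sketch. It assumes grayscale images stored as lists of pixel rows with values from 0 to 255. It covers two of the steps mentioned, resizing a photo and its drawing to a shared resolution and standardizing brightness, and it also thresholds the drawing to pure black and white. Alignment and background removal are left out. All function names and the 512-pixel default size are hypothetical.

```python
"""Hypothetical preprocessing for one photo/drawing training pair."""


def normalize_brightness(img):
    """Linearly stretch pixel values so the darkest becomes 0 and the brightest 255."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:
        # A flat image has no contrast to stretch; return all zeros.
        return [[0 for _ in row] for row in img]
    return [[round((p - lo) * 255 / (hi - lo)) for p in row] for row in img]


def resize_nearest(img, out_h, out_w):
    """Nearest-neighbour resize so the photo and drawing share one resolution."""
    in_h, in_w = len(img), len(img[0])
    return [[img[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
            for y in range(out_h)]


def binarize_drawing(img, threshold=128):
    """Map a scanned line drawing to black (0) lines on a white (255) background."""
    return [[0 if p < threshold else 255 for p in row] for row in img]


def preprocess_pair(photo, drawing, size=(512, 512)):
    """Resize both images, stretch the photo's brightness, binarize the drawing."""
    h, w = size
    clean_photo = normalize_brightness(resize_nearest(photo, h, w))
    clean_drawing = binarize_drawing(resize_nearest(drawing, h, w))
    return clean_photo, clean_drawing


if __name__ == "__main__":
    photo = [[40, 90], [140, 190]]    # tiny, dim "photo"
    drawing = [[20, 230], [240, 15]]  # tiny "scanned drawing"
    p, d = preprocess_pair(photo, drawing, size=(4, 4))
    print(p)
    print(d)
```

A real pipeline would more likely use an image library with smoother interpolation, but the point is the same: every pair ends up at one size and one tonal range, so the model learns drawing conventions rather than differences in lighting or scale.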

Cleaner Results Than Existing Tools

The team compares their method to a suite of existing edge detectors, sketch extractors, and generative models. Standard tools either trace every speck of texture and corrosion, producing cluttered lines, or over-smooth the image and lose crucial details. In contrast, the new system produces bronze vessel drawings that keep the correct tripod shapes and decorative bands, stone tool drawings that clearly show flake scars and ridges, and bone tool drawings with smooth, continuous silhouettes and just enough interior lines to suggest volume and wear. Quantitative measures of visual similarity and structure favor the new approach, as does a blinded user study with 12 archaeologists and heritage professionals, in which it was most often ranked best among the competing methods.
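The summary does not name the quantitative measures used, so as a stand-in, here is one simple way to score a generated drawing against an expert plate: pixel-level precision and recall on line pixels. This is an illustrative assumption, not necessarily one of the paper's metrics, but it captures the two failure modes described above. Tracing corrosion adds spurious lines and lowers precision, while over-smoothing drops real lines and lowers recall.

```python
"""Illustrative pixel-level scoring of a binary line map against a reference."""


def line_f1(pred, ref):
    """Return (precision, recall, F1) for binary line maps, where 1 marks a line pixel."""
    tp = fp = fn = 0
    for p_row, r_row in zip(pred, ref):
        for p, r in zip(p_row, r_row):
            if p and r:
                tp += 1  # line drawn where the expert drew one
            elif p:
                fp += 1  # spurious line, e.g. traced corrosion
            elif r:
                fn += 1  # missed line, e.g. detail lost to smoothing
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    reference = [[1, 1, 0, 0]]
    cluttered = [[1, 1, 1, 1]]     # traces extra texture
    oversmoothed = [[1, 0, 0, 0]]  # loses a real line pixel
    print(line_f1(cluttered, reference))
    print(line_f1(oversmoothed, reference))
```

In this toy example the cluttered and over-smoothed outputs end up with the same F1 score for opposite reasons, which is why precision and recall are usually reported alongside it. Practical edge-detection benchmarks also tolerate small positional offsets instead of demanding exact pixel matches.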

Limits, Practical Use, and Future Directions

Like human illustrators, the AI struggles when photographs are extremely degraded, heavily corroded, or badly lit—situations where the underlying shapes are genuinely ambiguous. Generating each high-resolution image also takes up to a minute on a powerful graphics card, which could slow adoption in resource-limited settings. The authors suggest two next steps: converting the generated drawings into editable vector graphics and building interactive tools so experts can quickly correct uncertain regions instead of redrawing from scratch.

What This Means for Understanding the Past

In plain terms, this work shows that a carefully adapted image generator can learn to “draw like an archaeological illustrator” from only a handful of examples. The system turns ordinary artifact photos into clear, standardized line drawings that closely match expert plates, potentially saving specialists many hours of routine drafting. As museums and field projects seek to document ever-growing collections, such tools could make it far easier to record and share the shapes and decorations of objects that carry clues to ancient technologies, trade, and culture—helping line drawings remain a precise, accessible language for understanding the past.

Citation: Xue, J., Wang, X., Zhang, Q. et al. Generating archaeological line drawings from limited reference images. npj Herit. Sci. 14, 247 (2026). https://doi.org/10.1038/s40494-026-02526-3

Keywords: archaeology, line drawings, diffusion models, heritage digitization, computer vision