Clear Sky Science

Dual-stream disentangled super-resolution for bamboo slip text images

Bringing Faded Bamboo Texts Back to Life

For centuries, Chinese officials and scholars wrote laws, letters, and ideas on thin strips of bamboo and wood. Today, many of these ancient records survive only as fragile, darkened pieces whose ink has blurred and whose surfaces have cracked. Historians and conservators rely on digital images of these slips, but the text is often too fuzzy to read clearly or restore. This study introduces a new imaging approach that sharpens those characters while respecting the original material, opening a clearer window into early Chinese history.

Why Old Bamboo Records Are So Hard to Read

Bamboo and wooden slips are organic, and time has not been kind to them. Exposure to moisture, mold, and physical stress causes fibers to fray, ink to spread, and surfaces to stain. Even with good cameras and infrared imaging, the resulting pictures can show smeared strokes, noisy textures, and missing details. Simple digital tricks like enlarging or smoothing an image tend to blur the edges of the writing even more. Modern artificial intelligence methods for sharpening text images have mostly been trained on street signs and printed words in everyday scenes, not on fragile, vertically written calligraphy on aging bamboo. As a result, they often either smooth away important stroke shapes or invent misleading structures.

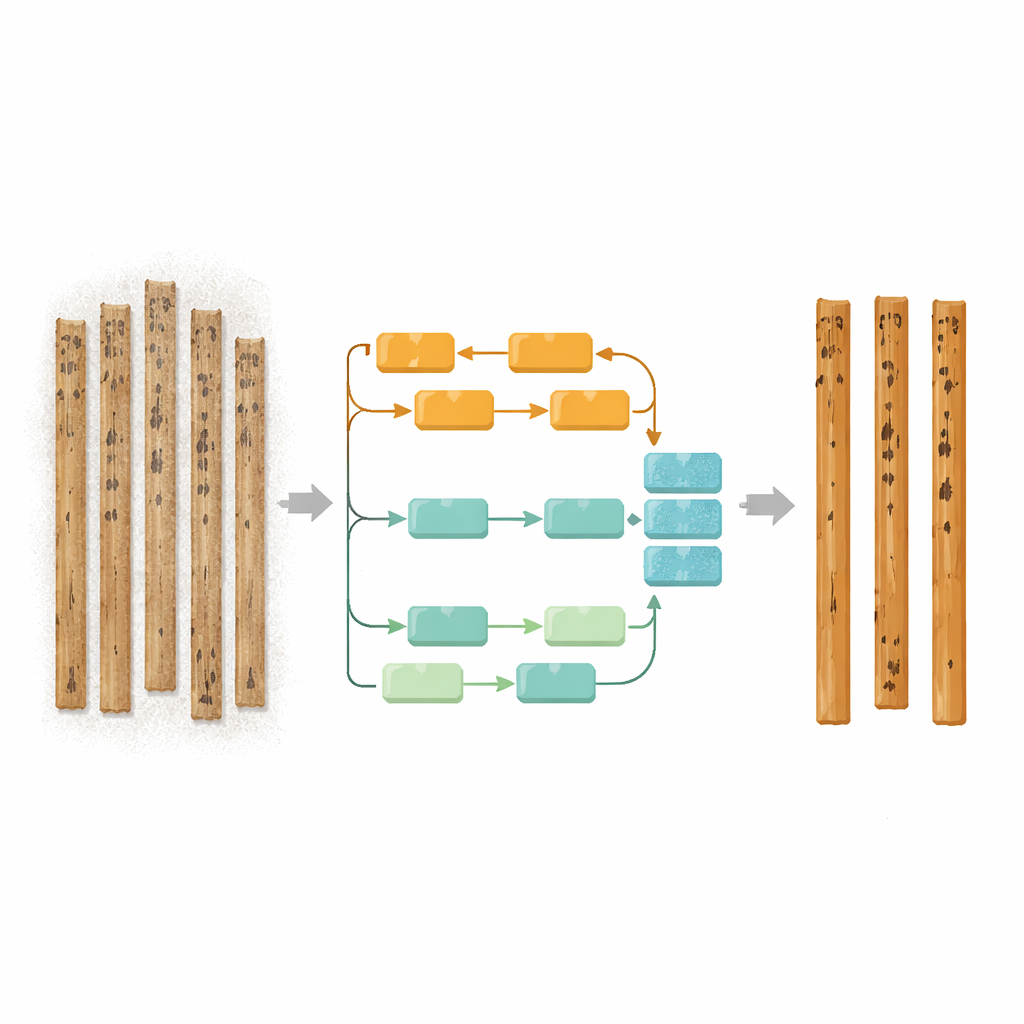

A Two-Path Approach to Text and Background

The researchers propose a new image reconstruction model called DSF-SRFormer that treats the writing and the bamboo surface as two different, but related, problems. Instead of processing everything in a single bundle of features, the system first separates what belongs to the characters from what belongs to the background. One pathway focuses on the dark ink strokes, while the other focuses on the lighter fibers, stains, and textures of the slip itself. Inside the text pathway, a special module pays attention to the main brush directions found in Chinese calligraphy—horizontal, vertical, and diagonal strokes—so that the model can reinforce the correct shapes where lines bend, intersect, or taper. In parallel, the background pathway works across multiple scales to understand both fine fibers and broader stains, making it possible to clean up noise without erasing meaningful marks.
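To make the idea of direction-aware stroke processing concrete, here is a minimal sketch in Python with NumPy. It is not the DSF-SRFormer module, whose internals this summary does not describe. It only shows how fixed 3x3 line filters respond to horizontal, vertical, and diagonal strokes in an ink map. The kernel values and the names `KERNELS`, `correlate3x3`, and `direction_responses` are illustrative assumptions.

```python
import numpy as np

# Hypothetical hand-written line detectors, one per stroke direction.
# A learned model would replace these with trained filters.
KERNELS = {
    "horizontal": np.array([[-1, -1, -1], [2, 2, 2], [-1, -1, -1]], dtype=float),
    "vertical": np.array([[-1, 2, -1], [-1, 2, -1], [-1, 2, -1]], dtype=float),
    "diagonal_down": np.array([[2, -1, -1], [-1, 2, -1], [-1, -1, 2]], dtype=float),
    "diagonal_up": np.array([[-1, -1, 2], [-1, 2, -1], [2, -1, -1]], dtype=float),
}


def correlate3x3(img, kernel):
    """Slide a 3x3 kernel over a 2-D image, padding the border by edge replication."""
    padded = np.pad(img, 1, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=float)
    for i in range(3):
        for j in range(3):
            out += kernel[i, j] * padded[i:i + h, j:j + w]
    return out


def direction_responses(ink):
    """Return one response map per stroke direction for a 2-D ink map."""
    ink = np.asarray(ink, dtype=float)
    return {name: correlate3x3(ink, k) for name, k in KERNELS.items()}


# Usage: a single horizontal stroke excites the horizontal filter most.
ink = np.zeros((7, 7))
ink[3, :] = 1.0
responses = direction_responses(ink)
strongest = max(responses, key=lambda name: responses[name][3, 3])
```

In this toy case `strongest` is `"horizontal"`, because the other three filters cancel out along the stroke. A real text pathway would learn such responses from data rather than hard-coding them.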

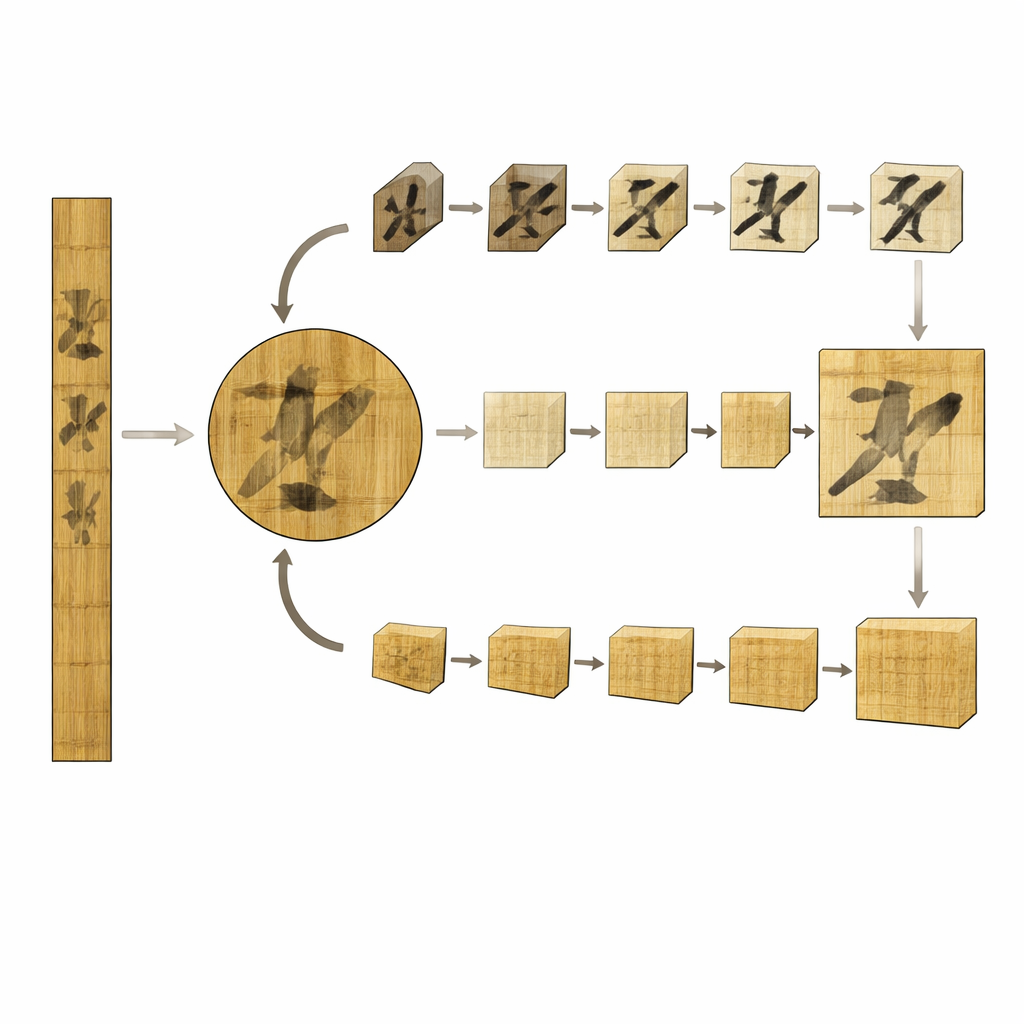

Handling Long Strips of Vertical Writing

Beyond individual characters, complete bamboo slips carry tall columns of text that run the full length of the strip. To cope with this layout, the authors design a “long strip” attention mechanism that slices each image into overlapping vertical bands. Within each band, the model learns how strokes continue along rows and across columns, then gently fuses neighboring bands so that characters remain consistent across the entire slip. This design captures long-range relationships, such as how strokes in one part of a character relate to others further down, while keeping the computation manageable. By reconstructing at both the character level and the full-slip level, the method aims to produce images that are sharper and more readable for human experts.
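The band-and-fuse idea can be sketched in a few lines of Python with NumPy. This is an illustration under stated assumptions, not the authors' attention mechanism: bands are assumed to be cut along the slip's long axis (axis 0 here), the per-band step `fn` stands in for whatever the model does inside a band and must return an array of the same shape, and overlaps are fused by plain averaging rather than a learned blend. The names `band_starts` and `process_in_bands` are hypothetical.

```python
import numpy as np


def band_starts(length, band, overlap):
    """Start offsets of overlapping bands that together cover [0, length)."""
    if band >= length:
        return [0]
    step = band - overlap
    if step <= 0:
        raise ValueError("overlap must be smaller than band")
    starts = list(range(0, length - band, step))
    starts.append(length - band)  # final band sits flush with the end
    return starts


def process_in_bands(image, band, overlap, fn):
    """Apply fn to each band along axis 0 and average where bands overlap."""
    image = np.asarray(image, dtype=np.float64)
    height = image.shape[0]
    size = min(band, height)
    out = np.zeros(image.shape, dtype=np.float64)
    # Per-row count of how many bands touched that row.
    weight = np.zeros((height,) + (1,) * (image.ndim - 1))
    for s in band_starts(height, band, overlap):
        out[s:s + size] += fn(image[s:s + size])
        weight[s:s + size] += 1.0
    return out / weight


# Usage: with an identity step, fusing the bands reproduces the input.
slip = np.arange(40, dtype=float).reshape(10, 4)
restored = process_in_bands(slip, band=4, overlap=2, fn=lambda x: x)
```

Because each band is processed on its own, the cost per band stays fixed no matter how long the slip is, and the overlap gives neighboring bands shared rows on which to agree.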

Measuring Sharper Strokes and Cleaner Surfaces

To train and test their system, the team assembled a large set of character images from bamboo slips, silk manuscripts, and handwritten drafts, then generated realistic digital damage that mimics fiber patterns, ink spread, aging spots, and other defects. They also prepared full-slip images derived from an existing collection of excavated texts. During training, the model is guided not only to match the original pixels, but also to keep edges crisp and overall appearance pleasing to the eye. The authors compare DSF-SRFormer with many established sharpening methods, from classic interpolation to advanced deep learning and diffusion models. Across several test sets and for both moderate and strong upscaling, the new model consistently produces higher scores for fidelity and visual quality, and visual examples show more continuous strokes and fewer distracting artifacts.
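A training objective that rewards both pixel accuracy and crisp edges can be sketched as follows. This Python and NumPy example is an assumption-laden illustration, not the loss used in the paper: it combines a mean absolute pixel error with a mean absolute error on finite-difference gradients, and it leaves out any perceptual term. The function names and the `edge_weight` default are hypothetical.

```python
import numpy as np


def gradients(img):
    """Finite-difference gradients along rows (dy) and columns (dx)."""
    return np.diff(img, axis=0), np.diff(img, axis=1)


def pixel_edge_loss(pred, target, edge_weight=0.1):
    """Mean absolute pixel error plus a weighted mean absolute gradient error."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    pixel = np.mean(np.abs(pred - target))
    pdy, pdx = gradients(pred)
    tdy, tdx = gradients(target)
    edge = np.mean(np.abs(pdy - tdy)) + np.mean(np.abs(pdx - tdx))
    return pixel + edge_weight * edge


# Usage: a sharp vertical stroke versus a flattened guess at the same stroke.
target = np.zeros((8, 8))
target[:, 4] = 1.0
blurred = np.full((8, 8), target.mean())
loss_sharp = pixel_edge_loss(target, target)
loss_blurred = pixel_edge_loss(blurred, target)
```

A perfect reconstruction scores zero, while the flattened guess is penalized twice: once for wrong pixel values and once for the missing stroke edges. That second penalty is what pushes a model away from overly smooth output.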

From Digital Cleanup to Historical Insight

The findings suggest that carefully separating the writing from the support material, and tailoring the processing to the unique layout of bamboo slips, leads to clearer reconstructions than treating everything as generic imagery. While the approach still faces limits—such as handling very different text sizes or expanding beyond infrared images—it already improves the legibility of difficult sources and also performs competitively on modern street-text benchmarks. For historians, archaeologists, and conservators, this means sharper digital views of ancient documents, making it easier to identify characters, restore missing parts, and interpret long-silent voices from early China.

Citation: Wang, W., Wang, T., Hu, X. et al. Dual-stream disentangled super-resolution for bamboo slip text images. npj Herit. Sci. 14, 229 (2026). https://doi.org/10.1038/s40494-026-02495-7

Keywords: bamboo slips, ancient manuscripts, image super-resolution, digital restoration, Chinese calligraphy