Clear Sky Science

Restoration of Liye Qin slips characters using generative adversarial network with effective connected component constraint

Ancient words brought back to life

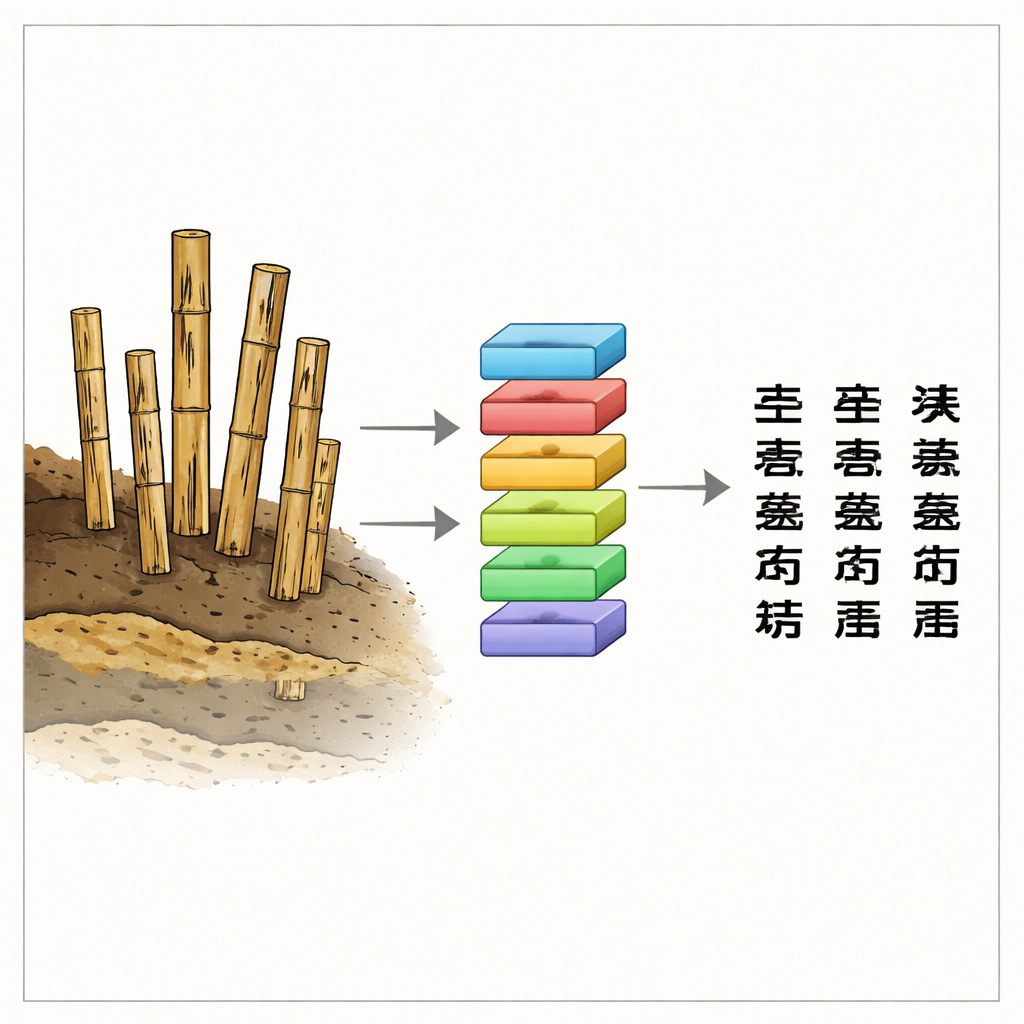

Long before paper, Chinese officials wrote on slim strips of bamboo and wood. Thousands of these fragile records, known as the Liye Qin Slips, were pulled from an abandoned well after more than two millennia underground. They preserve everyday orders, accounts, and reports from the first unified Chinese empire. But water, mud, and microbes have left much of the ink blurred or missing, making the slips painfully slow to read by hand. This study shows how a modern artificial intelligence system can digitally "clean" these damaged characters, helping historians recover voices from the distant past.

Why these buried records matter

The Liye Qin Slips are not royal decrees or grand inscriptions; they are the paperwork of a working government. Over 30,000 slips record taxes, labor, and routine administration in one county at the edge of the Qin empire. Unlike similar slips found in dry tombs, the Liye pieces lay in wet sediment at the bottom of a well. Many are warped, cracked, and stained; the brush strokes are smudged or eaten away. Deciphering a single batch can take experts years, and the growing number of finds has outpaced what human eyes alone can handle. Automating part of the restoration process could dramatically speed up research while preserving the subtle shapes that make each character readable.

Teaching a computer to see through the damage

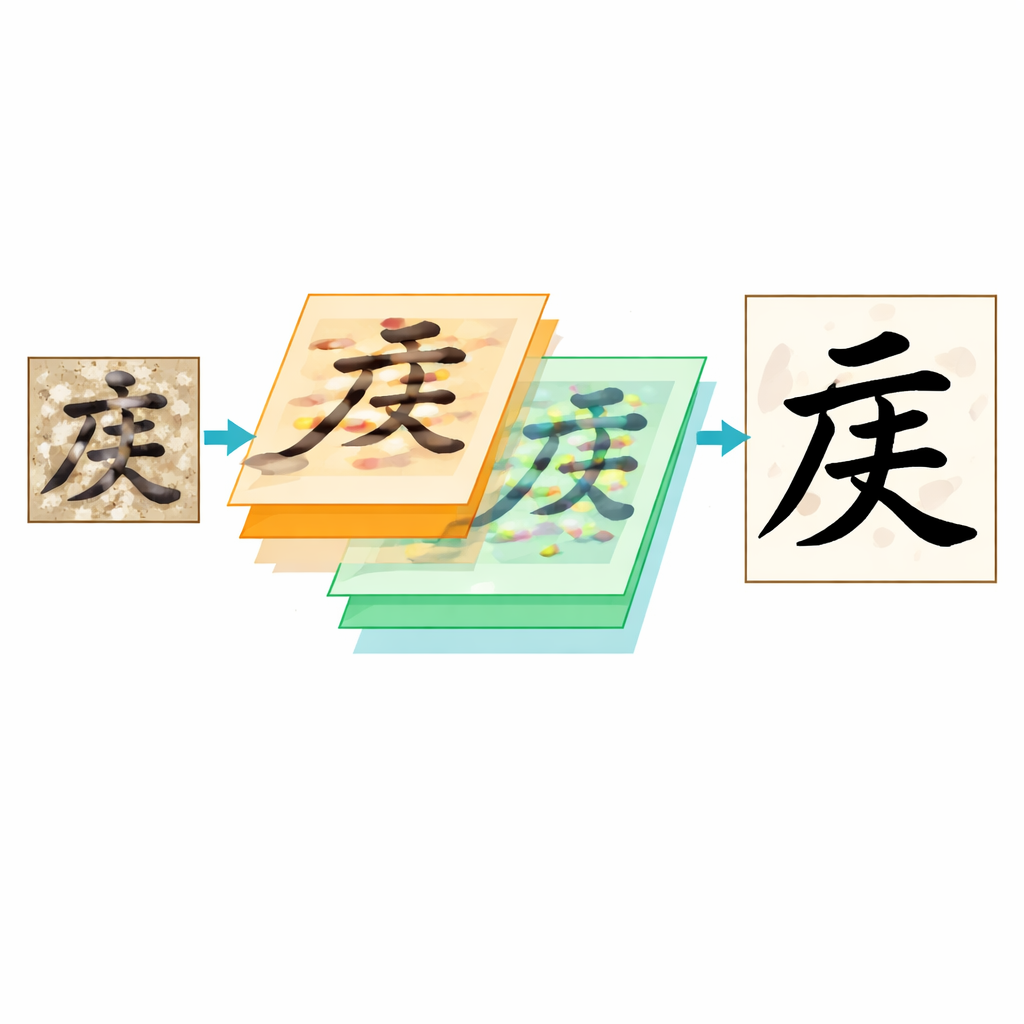

The authors treat restoration as a kind of image translation: the computer receives a small, noisy picture of a character and is asked to produce what that character would look like if it were clean and sharp. To do this, they build on a type of AI called a generative adversarial network, or GAN. One network (the “generator”) tries to turn a damaged image into a clear one, while another (the “discriminator”) judges whether the result looks like a genuine, well-written character. Through this back-and-forth contest, the generator gradually learns to produce more convincing restorations that fool the critic.
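For readers who want to see this contest in concrete terms, here is a minimal sketch of the two opposing scores in a standard GAN setup. It is not the authors' code, and it rests on several assumptions. The discriminator outputs a probability that an image is a genuine clean character, and both sides are scored with binary cross-entropy. The generator also pays an L1 pixel penalty against the paired expert restoration, in the style of the well-known pix2pix image-translation model. The function names and the weight `l1_weight=100.0` are hypothetical, and this summary does not give the paper's exact loss.

```python
import math

def bce(p, target, eps=1e-7):
    """Binary cross-entropy for one predicted probability p and a 0/1 target."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))

def discriminator_loss(d_real, d_fake):
    """The critic is rewarded for scoring expert restorations as real (1)
    and the generator's restorations as fake (0)."""
    return bce(d_real, 1.0) + bce(d_fake, 0.0)

def generator_loss(d_fake, restored, target, l1_weight=100.0):
    """The generator is rewarded when the critic scores its output as real.

    Assumption (pix2pix-style, not confirmed by the source): an L1 term also
    pulls each restored pixel toward the paired expert restoration.
    """
    adversarial = bce(d_fake, 1.0)  # non-saturating form: -log D(G(x))
    l1 = sum(abs(a - b) for a, b in zip(restored, target)) / len(target)
    return adversarial + l1_weight * l1
```

A critic that cannot tell real from fake (a score of 0.5 for both) pays 2·ln 2 ≈ 1.386. As the restorations improve, the critic's score for them drifts upward and the generator's adversarial term shrinks.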

Sharper attention to tiny brush strokes

Standard image tools often miss the finest details of ink on bamboo, especially when the background is mottled and the ink is faint. The team builds the generator, the heart of the GAN, on a U-shaped design known as U-Net. One path shrinks the image to capture broad context, a mirror path rebuilds it, and shortcut connections between the two carry pixel-level detail across. They replace the usual building blocks in this network with specially designed Local Residual Dense Blocks. These blocks encourage the system to reuse useful patterns while avoiding the over-smoothing that can cause nearby strokes to blur together. A strengthened “bottleneck” section in the middle of the network further refines the most important features, helping the model distinguish true strokes from noise even when the original is heavily degraded.
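The summary does not spell out what is inside a Local Residual Dense Block. The sketch below therefore shows only the generic residual dense block pattern that the name points to. Each layer receives the block's input plus the outputs of every earlier layer (dense connections, the "reuse of useful patterns"). A fusion step then squeezes the accumulated features back to the original width, and the block's input is added back at the end (the local residual). To stay dependency-free, it works on a single pixel's channel vector with 1×1 "convolutions" and random weights. The class name, layer sizes, and seed are hypothetical. A real generator would use 3×3 convolutions in a deep-learning framework.

```python
import random

def relu(vector):
    return [max(0.0, v) for v in vector]

def pointwise(weights, vector):
    """A 1x1 'convolution' at one pixel: each output channel is a weighted sum of input channels."""
    return [sum(w * v for w, v in zip(row, vector)) for row in weights]

def random_weights(rng, out_dim, in_dim, scale=0.1):
    return [[rng.uniform(-scale, scale) for _ in range(in_dim)] for _ in range(out_dim)]

class ResidualDenseBlock:
    """Toy residual dense block (hypothetical stand-in for the paper's Local Residual Dense Block)."""

    def __init__(self, channels, growth, num_layers, seed=0):
        rng = random.Random(seed)
        # Layer i sees the block input plus the outputs of all i earlier layers.
        self.layers = [random_weights(rng, growth, channels + i * growth) for i in range(num_layers)]
        # Local feature fusion: squeeze every accumulated feature back to `channels`.
        self.fusion = random_weights(rng, channels, channels + num_layers * growth)

    def __call__(self, x):
        features = list(x)
        for weights in self.layers:
            # Dense connection: append each new layer's output to everything seen so far.
            features = features + relu(pointwise(weights, features))
        fused = pointwise(self.fusion, features)
        # Local residual: the block only has to learn a correction to its input.
        return [a + b for a, b in zip(x, fused)]
```

The output has the same number of channels as the input, so blocks like this can be stacked inside U-Net stages without changing the surrounding layers. Zeroing the fusion weights reduces the block to an identity map, which is one reason residual designs are easy to stack deeply.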

Keeping each character’s skeleton intact

A key innovation is what the authors call an Effective Connected Domain constraint (the “effective connected component constraint” of the paper’s title). Rather than judging every pixel on its own, this rule looks at the main ink regions in a character: how large they are and where their centers lie. The model compares these coarse “islands” of ink in its output to those in carefully restored reference images, focusing only on the largest few, which form the character’s core skeleton. If a major stroke is missing, merged with another, or shifted out of place, the system is penalized and forced to adjust. The authors report that this simple geometric check stays stable even when strokes are broken, edges are ragged, or ink has bled into the background.
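Here is a minimal sketch of this kind of check, built on assumptions the summary leaves open. Pixels at or above 0.5 count as ink, and ink regions are 8-connected. The three largest regions on each side are paired up by size rank. Each pair adds a normalized area difference and a normalized centroid shift to the penalty, and a major region present on only one side adds a fixed penalty of 1. The names `connected_components` and `effective_cc_loss` and the parameters `k` and `threshold` are hypothetical. The hard threshold here is not differentiable, and this summary does not describe how the paper turns the constraint into a trainable loss.

```python
import math
from collections import deque

def connected_components(binary):
    """Return (area, (row_centroid, col_centroid)) for each 8-connected ink region."""
    h, w = len(binary), len(binary[0])
    seen = [[False] * w for _ in range(h)]
    comps = []
    for r in range(h):
        for c in range(w):
            if binary[r][c] and not seen[r][c]:
                # Breadth-first flood fill from an unvisited ink pixel.
                seen[r][c] = True
                queue, pixels = deque([(r, c)]), []
                while queue:
                    y, x = queue.popleft()
                    pixels.append((y, x))
                    for dy in (-1, 0, 1):
                        for dx in (-1, 0, 1):
                            ny, nx = y + dy, x + dx
                            if 0 <= ny < h and 0 <= nx < w and binary[ny][nx] and not seen[ny][nx]:
                                seen[ny][nx] = True
                                queue.append((ny, nx))
                area = len(pixels)
                cy = sum(p[0] for p in pixels) / area
                cx = sum(p[1] for p in pixels) / area
                comps.append((area, (cy, cx)))
    return comps

def effective_cc_loss(output, reference, k=3, threshold=0.5):
    """Compare the k largest ink regions of output and reference (hypothetical formulation)."""
    def top_k(img):
        binary = [[v >= threshold for v in row] for row in img]  # assume ink = high values
        return sorted(connected_components(binary), key=lambda comp: -comp[0])[:k]

    h, w = len(reference), len(reference[0])
    diag = math.hypot(h, w)
    out_cc, ref_cc = top_k(output), top_k(reference)
    loss = 0.0
    for i in range(k):
        if i < len(out_cc) and i < len(ref_cc):
            (a_out, c_out), (a_ref, c_ref) = out_cc[i], ref_cc[i]
            loss += abs(a_out - a_ref) / (h * w)  # size mismatch
            loss += math.dist(c_out, c_ref) / diag  # centroid shift
        elif i < len(out_cc) or i < len(ref_cc):
            loss += 1.0  # a major region exists on only one side
    return loss
```

A missing stroke costs a full penalty unit, while a stroke that is present but slightly displaced or resized costs only a small fraction. This mirrors the article's point that the constraint cares most about a character's major strokes, not individual pixels.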

How well the digital repairs work

Because no suitable data existed, the team created their own paired dataset of 600 character images from the Liye slips, each matched to a painstaking, expert-made restoration. On this benchmark, their method outperforms several state-of-the-art approaches, including other GANs, a Transformer-based model, and a diffusion model, according to three standard image-quality measures. Visual side-by-side comparisons show fewer broken strokes, cleaner separation between close brush lines, and less background clutter. Calligraphy instructors who reviewed the results in a blind test gave high marks for stroke continuity, structural accuracy, and overall readability, and separate tests suggest the model does not simply memorize the training data.
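The summary does not name the three image-quality measures. Peak signal-to-noise ratio (PSNR) is one widely used measure for restoration benchmarks like this one, so the sketch below shows how it is computed. Whether it is among the paper's three metrics is an assumption, not something this summary states. Images are taken as equal-size grids of grayscale values in [0, 1], and `psnr` is a hypothetical helper name.

```python
import math

def psnr(restored, reference, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equal-size grayscale images."""
    # Mean squared error over every pixel of the two grids.
    diffs = [(a - b) ** 2 for row_a, row_b in zip(restored, reference) for a, b in zip(row_a, row_b)]
    mse = sum(diffs) / len(diffs)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)
```

Higher is better. Halving the root-mean-square error raises PSNR by about 6 dB, and an exact match gives infinity. Pixel-level scores like this say little about stroke structure, which is one reason the authors also asked calligraphy instructors to judge the results.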

Bringing buried writing back into focus

For non-specialists, the message is clear: by combining expert knowledge of ancient scripts with tailored AI designs, it is now possible to recover legible writing from bamboo slips that once seemed too damaged to use. The model preserves not just whether a character is present, but how its strokes relate in space, making the output meaningful for historians and language scholars. Although the approach still depends on limited training data and has yet to be adapted to other materials such as silk or stone, it points toward a future in which many fragile, hard-to-read texts can be digitally restored and studied at scale, opening new windows onto everyday life in early empires.

Citation: Li, X., Huang, Y., She, S. et al. Restoration of Liye Qin slips characters using generative adversarial network with effective connected component constraint. npj Herit. Sci. 14, 194 (2026). https://doi.org/10.1038/s40494-026-02434-6

Keywords: bamboo slip restoration, ancient Chinese manuscripts, generative adversarial networks, digital heritage preservation, handwritten character recovery