Clear Sky Science · en

Fine grained representation learning for low resource Yi script detection and dataset construction

Saving a Fragile Written Heritage

The Yi people of southwest China have preserved a rich written tradition for centuries, recording medicine, astronomy, religion, and daily life in their own script. Yet many of these manuscripts are fading, stained, or otherwise damaged, and the script itself is visually complex. Manually transcribing hundreds of thousands of characters is slow and expensive. This paper presents a new computer-vision system designed specifically to find and isolate Yi characters in digital images of old documents, laying groundwork for large-scale digitization and preservation of this endangered written heritage.

Why This Script Is So Hard for Computers

Unlike the more familiar Latin alphabet or even modern printed Chinese, Yi characters are built from dense, curved strokes that often weave around one another. Many different characters look extremely similar, and the same character can appear in slightly different shapes across time and manuscripts. Historical pages often use tight multi-column layouts, with irregular gaps and overlapping strokes. On top of that, ink can be faded, pages warped, and backgrounds blotchy. Older detection methods, which rely on fixed rules about spacing or on generic text-detection models, tend to merge neighboring characters, miss faint strokes, or mistake background noise for writing. The authors argue that Yi manuscripts represent a kind of “worst case” for text detection, and that solving this problem could help many other low-resource scripts.

A New Way to See Fine Details

To tackle these challenges, the researchers design a specialized neural network called FGRL-YiNet (Fine-Grained Representation Learning Network for Yi). At its heart is a twist on standard convolutional layers, the workhorse of modern image recognition. Instead of using one fixed filter pattern everywhere, FGRL-YiNet uses dynamic convolution: several candidate filters operate in parallel, and a small gating module decides, for each region of the image, how much to rely on each one. This allows the system to subtly adjust its “receptive field” to local stroke patterns, better capturing delicate curves and junctions without being thrown off by cluttered backgrounds or page damage. Built on a compact ResNet-18 backbone, the model is deliberately kept moderate in size so it can learn effectively from the relatively small amount of annotated Yi data.
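The gating idea can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not FGRL-YiNet's code: the helper names (`conv2d_same`, `dynamic_conv`) are hypothetical, the gate is modeled as one extra filter per candidate whose responses become per-pixel softmax weights, and in the real network both the candidate filters and the gate would be learned rather than hand-set. Many published dynamic-convolution layers compute a single set of mixing weights per image; the per-location gate here follows the description above and is an assumption about the paper's exact design.

```python
import math


def conv2d_same(img, kernel):
    """Plain 2-D cross-correlation with zero padding, so the output keeps the input size."""
    h, w = len(img), len(img[0])
    kh, kw = len(kernel), len(kernel[0])
    ph, pw = kh // 2, kw // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    yy, xx = y + i - ph, x + j - pw
                    if 0 <= yy < h and 0 <= xx < w:  # zero padding outside the image
                        acc += img[yy][xx] * kernel[i][j]
            out[y][x] = acc
    return out


def softmax(values):
    """Numerically stable softmax over a short list of scores."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def dynamic_conv(img, kernels, gate_kernels):
    """Blend K candidate filters with a per-pixel softmax gate (hypothetical gate design).

    kernels[k] is the k-th candidate filter; gate_kernels[k] produces the gate
    logit for that candidate at every pixel.
    """
    branches = [conv2d_same(img, k) for k in kernels]       # K parallel filter outputs
    logits = [conv2d_same(img, g) for g in gate_kernels]    # K gate score maps
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            weights = softmax([lg[y][x] for lg in logits])  # local mixing weights, sum to 1
            out[y][x] = sum(wk * br[y][x] for wk, br in zip(weights, branches))
    return out
```

Because convolution is linear in the kernel, weighting the K filter outputs at each pixel is equivalent to applying a pixel-specific blend of the K kernels, which is what lets the effective filter adapt to local stroke shapes.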

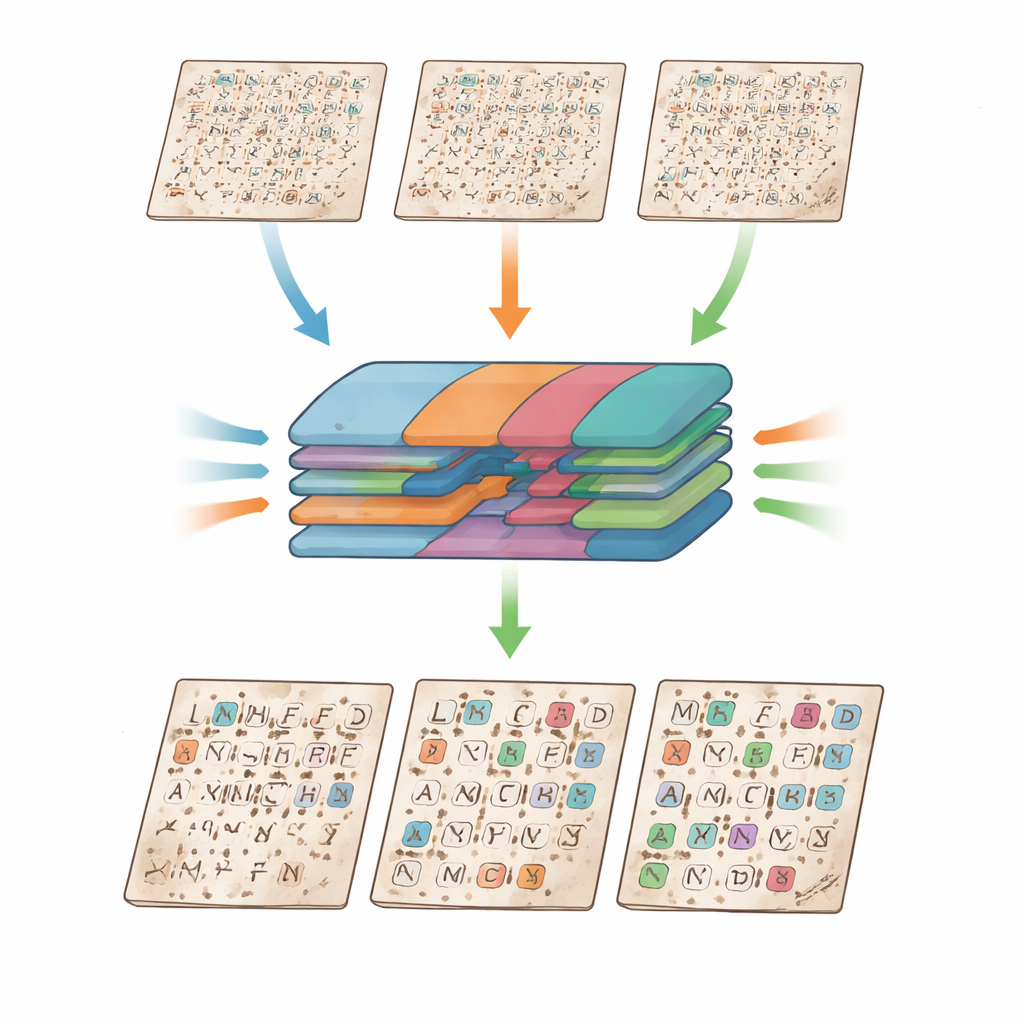

Combining Scales and Cleaning Up the Page

Detecting characters on a full manuscript page also requires understanding patterns at multiple sizes at once—from tiny wiggles in a single stroke to the layout of an entire column. FGRL-YiNet introduces an Adaptive Multi-Scale Fusion (AMSF) module to solve this. The network first extracts features at several resolutions, then uses a joint attention mechanism to decide which scale and which channels matter most at each location. One part of this attention focuses on “where” in the image fine details are important, while another focuses on “what” type of feature is useful—such as a particular stroke width or a small loop inside a character. In parallel, a differentiable binarization head learns to separate ink from background by predicting both a probability map and a locally varying threshold. Because this step is built into the network and trained end-to-end, it can preserve faint strokes that traditional black-and-white conversion would wash out, while suppressing speckle and stains.
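Both ideas in this paragraph can be illustrated with a small pure-Python sketch. Everything here is a hypothetical stand-in, not the paper's AMSF definition: `fuse_scales` assumes the multi-resolution features have already been resized to one grid, derives a per-channel "what" gate from each channel's average response, and computes a per-pixel "where" softmax over scales from the channel-averaged response. `differentiable_binarize` uses the approximate step function B = 1 / (1 + exp(-k(P - T))) from the original DBNet work, where the steepness k = 50 is that method's default; whether FGRL-YiNet uses the same value is an assumption.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fuse_scales(feats):
    """Hypothetical attention fusion. feats[s][c][y][x]: S scales, C channels, one H x W grid."""
    S, C = len(feats), len(feats[0])
    H, W = len(feats[0][0]), len(feats[0][0][0])
    # "What": one gate per (scale, channel) from that channel's global average response.
    chan = [[sigmoid(sum(map(sum, feats[s][c])) / (H * W)) for c in range(C)] for s in range(S)]
    fused = [[[0.0] * W for _ in range(H)] for _ in range(C)]
    for y in range(H):
        for x in range(W):
            # "Where": softmax over scales of the channel-averaged response at (y, x).
            scores = [sum(feats[s][c][y][x] for c in range(C)) / C for s in range(S)]
            m = max(scores)
            exps = [math.exp(v - m) for v in scores]
            total = sum(exps)
            for c in range(C):
                fused[c][y][x] = sum(exps[s] / total * chan[s][c] * feats[s][c][y][x] for s in range(S))
    return fused


def differentiable_binarize(prob, thresh, k=50.0):
    """Soft binarization B = sigmoid(k * (P - T)) with a per-pixel threshold map T."""
    return [[sigmoid(k * (p - t)) for p, t in zip(pr, tr)] for pr, tr in zip(prob, thresh)]


# Usage: one faint stroke value (0.4) judged against two different local thresholds.
print(differentiable_binarize([[0.4, 0.4]], [[0.3, 0.6]]))
```

Because the threshold map T varies by location, the same faint stroke value can be kept as ink where the local threshold is low and dropped where it is high. Because B is a smooth sigmoid rather than a hard cut-off, gradients flow through it during end-to-end training.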

Building a Benchmark for a Rare Script

A major obstacle for any specialized script is data: there are few high-quality digitized Yi manuscripts, and even fewer with precise labels for each character. The team addresses this by constructing the YiPrint-694 dataset from Liangshan Yi classics; it contains nearly 347,000 labeled characters across 694 page images and 1,165 character categories. They combine careful preprocessing (noise reduction, edge enhancement, and binarization) with a semi-automatic segmentation pipeline and painstaking manual checking by Yi language experts. To mimic the look of older, discolored pages, they create additional images with yellowed and browned backgrounds. This curated collection becomes both the training ground for FGRL-YiNet and a public benchmark for future research on Yi and related scripts.
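The discoloration step can be approximated by multiplying each grayscale pixel by an aged-paper color, so that blank paper takes on the tint while dark ink stays dark. This is a hypothetical sketch: the article does not describe how the yellowed and browned variants were produced, and the function name `tint_page` and the RGB values below are illustrative assumptions.

```python
def tint_page(gray, tint):
    """Recolor a grayscale page (0 = ink, 255 = blank paper) as aged paper.

    Each RGB channel is scaled by gray / 255, so blank paper (255) becomes
    the tint color and black ink (0) stays black.
    """
    return [[tuple(round(v / 255 * c) for c in tint) for v in row] for row in gray]


# Hypothetical tints; real values would be chosen to match scanned pages.
YELLOWED = (235, 215, 160)
BROWNED = (200, 160, 110)

page = [[255, 255, 0, 255], [255, 40, 0, 255]]  # tiny stand-in for a scanned page
aged_versions = [tint_page(page, t) for t in (YELLOWED, BROWNED)]
```

Multiplicative tinting preserves the ordering of ink and paper brightness; a fuller augmentation pipeline would likely also add stains or uneven lighting, which this sketch omits.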

How Well the System Performs

When tested against a broad set of state-of-the-art text detectors, including widely used models like Faster R-CNN, DBNet++, and PSENet, FGRL-YiNet achieves the best overall scores on YiPrint-694. It detects characters with an F-score of 94.7%, driven by very high precision (98.4%) and strong recall (91.3%), meaning it rarely mistakes background for text while still finding most characters on the page. Ablation experiments, in which individual components are removed, show that each innovation (dynamic convolution, adaptive multi-scale fusion, and differentiable binarization) contributes measurable gains, and that they work best in concert. The model also transfers well to the larger MTHv2 dataset of historical Chinese Buddhist texts, where it performs competitively with leading general-purpose detectors, highlighting its broader potential.
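The headline numbers are consistent with the standard F-score, the harmonic mean of precision and recall. A quick check in Python follows; the helper names are illustrative, and in detection benchmarks a prediction usually counts as a true positive only if it overlaps a ground-truth box sufficiently (often IoU ≥ 0.5), but the exact matching rule used for these results is not stated in this summary.

```python
def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def f_score(precision, recall):
    """Harmonic mean of precision and recall (the F1 score)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(f"{f_score(0.984, 0.913):.3f}")  # prints 0.947
```

Because the harmonic mean is pulled toward the smaller value, the 91.3% recall keeps the F-score slightly below the simple average of the two rates (94.85%).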

What This Means for Cultural Preservation

For non-specialists, the central message is that careful, targeted design can help computers read some of the world’s most challenging scripts, even when only limited training data exist. By combining adaptive filters, smart multi-scale fusion, and built-in cleaning of degraded pages, FGRL-YiNet can reliably pinpoint individual Yi characters in crowded, damaged manuscripts. This makes it far easier to build searchable digital archives, support linguistic and historical research, and safeguard the written record of the Yi people. The authors see their architecture and dataset as a blueprint for tackling other underserved scripts around the world, showing that advances in artificial intelligence can play a direct role in preserving fragile cultural heritage for future generations.

Citation: Sun, H., Ding, X., Yu, H. et al. Fine grained representation learning for low resource Yi script detection and dataset construction. npj Herit. Sci. 14, 183 (2026). https://doi.org/10.1038/s40494-026-02418-6

Keywords: Yi script, historical manuscripts, text detection, digital heritage, deep learning