Clear Sky Science

Single-shot full-Stokes imaging through scattering media

Seeing Clearly Through the Fog

A self-driving car in heavy rain, a doctor looking for a tumor deep inside tissue, and a wildlife camera peering through underbrush all face the same obstacle: light gets scrambled as it passes through messy, cloudy material. This scrambling turns crisp images into grainy speckle, hiding important details. The work in this paper shows a new way to recover not just brightness but the full polarization state of light (information about how the light waves wiggle) after it has passed through a very strongly scattering layer. That extra information can reveal hidden objects and subtle differences that ordinary cameras miss.

Why Ordinary Cameras Get Lost in the Glare

When light travels through fog, tissue, or frosted glass, it bounces around randomly. The once-smooth wavefront that carried a clear picture breaks up into a noisy speckle pattern. Standard imaging tricks can sometimes reverse this scrambling, but only when scattering is mild. Once the scattering becomes strong, the few “ballistic” photons that remember where they came from are drowned out by noise. Traditional cameras also record only intensity, that is, how bright the light is at each point, and throw away the polarization, which can encode how light interacted with materials along the way. As a result, scenes behind thick scattering layers often look like formless fuzz, no matter how smart the image-processing software is.

Using the Shape of Light as an Extra Clue

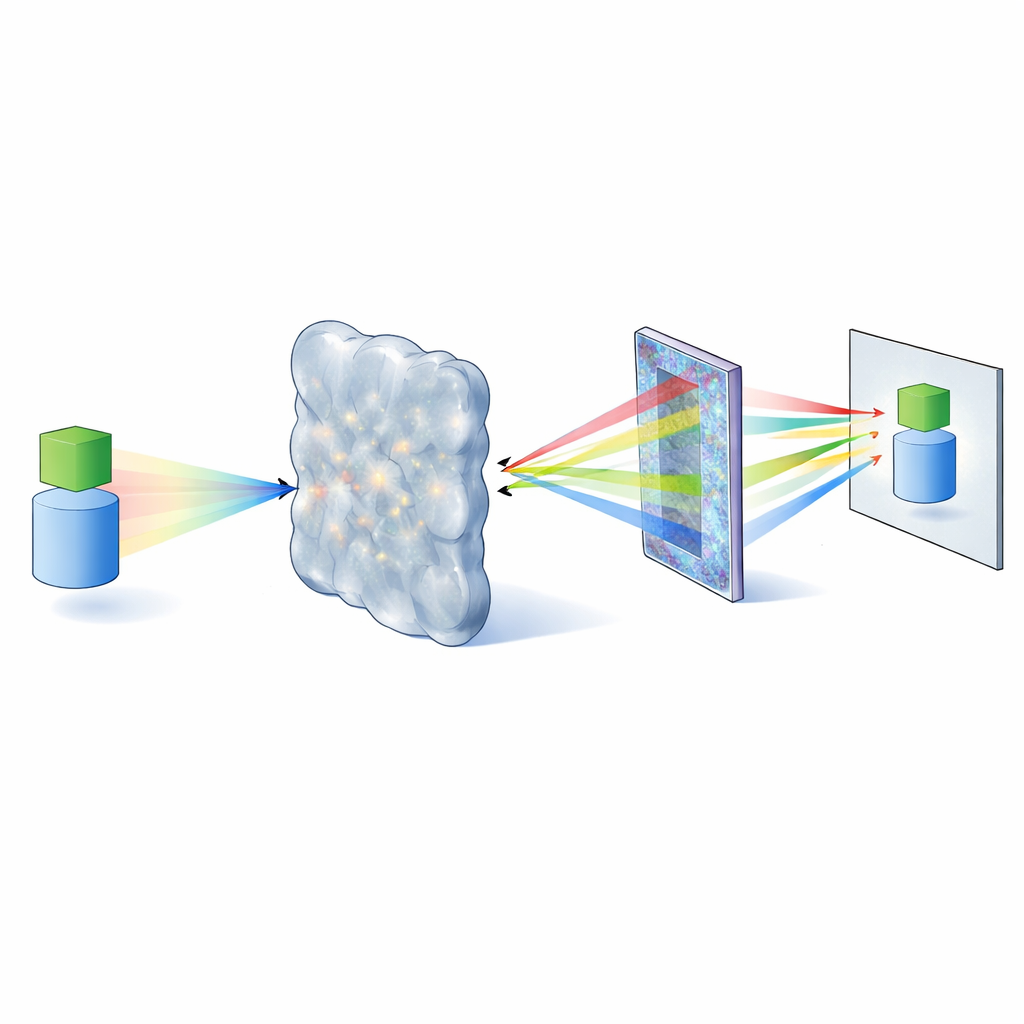

Light waves can vibrate in different directions, and this polarization carries a kind of fingerprint of the objects and materials they have touched. The full description of polarization at each point is captured by the so-called Stokes parameters, four numbers that together describe total brightness and how much the light is linearly or circularly polarized. Recent advances in flat optical components called metasurfaces—nanostructured films thinner than a human hair—make it possible to measure all four Stokes parameters in a single snapshot. The authors designed such a metasurface that splits incoming light into six spots, each corresponding to a different polarization channel. From a single exposure, they can reconstruct the full-Stokes polarization image with high precision, even for complex patterns and real-world samples like butterfly wings or eyeglass lenses.
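To make the four Stokes parameters concrete, here is a minimal sketch of how six polarization-resolved intensity images could be combined into a full-Stokes image. It assumes the six channels are the common analyzer set (horizontal, vertical, +45°, -45°, right-circular, left-circular); the article does not say which six channels the metasurface actually uses, and the function name and sign convention for circular polarization are illustrative choices, not the authors' code.

```python
import numpy as np

def stokes_from_six_channels(i_h, i_v, i_d, i_a, i_r, i_l):
    """Combine six analyzer intensity images into Stokes images S0..S3.

    Assumed channels (not confirmed by the article):
    i_h / i_v : horizontal / vertical linear analyzers
    i_d / i_a : +45 deg / -45 deg linear analyzers
    i_r / i_l : right / left circular analyzers
    """
    i_h, i_v, i_d, i_a, i_r, i_l = (
        np.asarray(x, dtype=float) for x in (i_h, i_v, i_d, i_a, i_r, i_l)
    )
    s0 = i_h + i_v  # total brightness
    s1 = i_h - i_v  # horizontal vs. vertical linear polarization
    s2 = i_d - i_a  # +45 deg vs. -45 deg linear polarization
    s3 = i_r - i_l  # right vs. left circular polarization
    return np.stack([s0, s1, s2, s3])

# Example: uniformly horizontally polarized light of unit intensity on a 2x2 sensor.
shape = (2, 2)
half = np.full(shape, 0.5)  # a 45 deg or circular analyzer passes half of this light
stokes = stokes_from_six_channels(
    i_h=np.ones(shape), i_v=np.zeros(shape),
    i_d=half, i_a=half, i_r=half, i_l=half,
)
print(stokes[:, 0, 0])  # Stokes vector at the top-left pixel: [1. 1. 0. 0.]
```

In this convention each pixel ends up with one brightness value and three signed differences, which is why six measurements are enough to pin down all four numbers.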

Teaching a Neural Network the Physics of Light

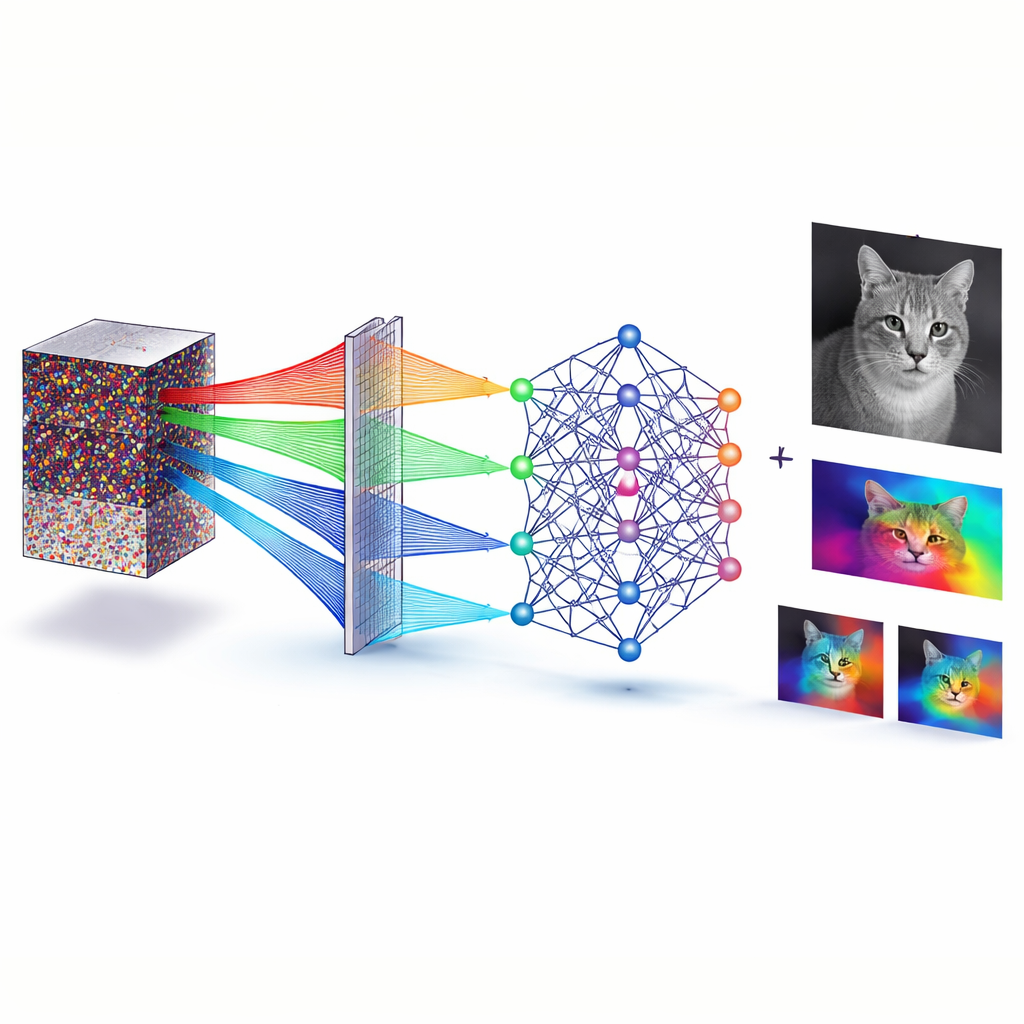

Capturing many polarization channels is only half the job; the other half is turning a scrambled speckle pattern back into a recognizable scene. For this, the team built a specialized deep neural network, called PdU-Net, that takes in the six polarization-resolved speckle images and predicts the clean full-Stokes images that would have been seen without the scattering layer. Instead of relying only on data, the network is trained with built-in physical rules about polarization. These rules act as guardrails, pushing the network’s outputs to obey the same relationships that real Stokes parameters must satisfy. By embedding these constraints directly into the loss function, the network learns to separate meaningful polarization structure from random noise, recovering fine details that a standard U-Net model or conventional speckle-correlation methods cannot retrieve at similar scattering strengths.
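One way to picture a physics-based guardrail in a loss function is sketched below. It uses a well-known property of Stokes parameters, namely that the polarized part of the light cannot exceed the total brightness, so S0² ≥ S1² + S2² + S3² at every pixel. Whether PdU-Net uses this particular relationship is an assumption; the article does not spell out the constraints, and the function names and the `weight` parameter here are hypothetical.

```python
import numpy as np

def physics_penalty(stokes):
    """Mean amount by which pixels violate S0^2 >= S1^2 + S2^2 + S3^2.

    `stokes` has shape (4, H, W). The penalty is zero for physically
    valid Stokes images and grows with the size of any violation.
    """
    s0, s1, s2, s3 = stokes
    violation = s1**2 + s2**2 + s3**2 - s0**2
    return float(np.mean(np.maximum(violation, 0.0)))

def constrained_loss(pred, target, weight=0.1):
    """Data-fit term (mean squared error) plus a weighted physics penalty.

    `weight` is a hypothetical tuning knob, not a value from the paper.
    """
    data_term = float(np.mean((pred - target) ** 2))
    return data_term + weight * physics_penalty(pred)

# A valid single-pixel Stokes image: fully horizontally polarized light.
valid = np.array([1.0, 1.0, 0.0, 0.0]).reshape(4, 1, 1)
# An impossible prediction: more polarized light than total light.
invalid = np.array([1.0, 1.0, 1.0, 0.0]).reshape(4, 1, 1)

print(physics_penalty(valid))    # 0.0
print(physics_penalty(invalid))  # 1.0
```

During training, a term like this would add to the loss whenever the network proposes a physically impossible polarization state, which nudges it toward outputs that real light could actually produce.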

Seeing Through Camouflage and Motion

To test their approach under harsh conditions, the researchers placed various diffusers between the metasurface and the target, reaching optical depths where earlier techniques fail completely. Even when the memory of the original wavefront is nearly erased, PdU-Net could reconstruct sharp images of digits and shapes, along with their full polarization maps, from a single shot. The team then created a camouflage scenario: two thin polarization elements moving and changing shape against a cluttered background, all viewed through strong scattering. In conventional intensity images, the objects blend into the surroundings. In contrast, the reconstructed maps of polarization angle and circular polarization clearly expose the objects and even trace their motion, because their polarization signatures differ from the background even when their brightness does not.
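The reason polarization maps can expose objects that brightness hides can be sketched with the standard textbook formulas for the angle of linear polarization, 0.5·atan2(S2, S1), and the degree of circular polarization, S3/S0. The three-pixel scene below is made up for illustration and is not data from the paper; the function name is likewise hypothetical.

```python
import numpy as np

def polarization_maps(stokes, eps=1e-12):
    """Return (angle of linear polarization, degree of circular polarization).

    `stokes` has shape (4, H, W). The angle is in radians; `eps` guards
    against division by zero in dark pixels.
    """
    s0, s1, s2, s3 = stokes
    aolp = 0.5 * np.arctan2(s2, s1)
    docp = s3 / np.maximum(s0, eps)
    return aolp, docp

# Made-up 1x3 scene with identical brightness everywhere:
# pixel 0 is unpolarized background, pixel 1 is a 45 deg linear polarizer,
# pixel 2 is a circularly polarizing element.
scene = np.array([
    [[1.0, 1.0, 1.0]],  # S0: same brightness, so an intensity image shows nothing
    [[0.0, 0.0, 0.0]],  # S1
    [[0.0, 1.0, 0.0]],  # S2
    [[0.0, 0.0, 1.0]],  # S3
])

aolp, docp = polarization_maps(scene)
print(np.degrees(aolp))  # the linear element stands out at 45 degrees
print(docp)              # the circular element stands out with value 1
```

All three pixels are equally bright, yet each object shows up in one of the two polarization maps, which is the effect the camouflage experiment relies on.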

What This Means for Future Imaging

The study shows that by co-designing the hardware that gathers light and the neural network that interprets it, we can see through highly scattering media in ways that were not possible before. The metasurface sorts photons by polarization in a compact, camera-friendly layer, while the physics-informed network uses those extra clues to undo severe scrambling and recover the full-Stokes polarization image in a single snapshot. For non-experts, the takeaway is simple: rather than measuring only how bright the light is, this method also measures how its waves vibrate, and then uses that rich information to cut through optical fog. This could help future systems detect hidden tumors, track animals in dense foliage, or guide vehicles in bad weather, all by reading subtle patterns in the shape of light itself.

Citation: Xiansong Ren, Ye Tian, Yanling Ren, Bo Wang, Shifeng Zhang, Anqi Hu, Kaveri A. Thakoor, and Xia Guo, "Single-shot full-Stokes imaging through scattering media," Optica 12, 1560-1568 (2025). https://doi.org/10.1364/OPTICA.572713

Keywords: polarization imaging, metasurface camera, imaging through scattering, physics-informed deep learning, camouflage detection