Clear Sky Science

Optical attention mechanism for high-resolution computational imaging

Sharper Pictures with Smaller Cameras

Why do great photos usually come from chunky cameras with thick glass lenses, while slim phones struggle in low light or at long zoom? This paper introduces a new way to design camera optics that borrows an idea from human attention: focus effort where it matters most, and relax elsewhere. By teaching a lens to “pay attention” only to the parts of its surfaces that best preserve fine detail, and then cleaning up the image with smart algorithms, the authors show that we can get sharp, high‑resolution pictures from much simpler, thinner lenses.

How Traditional Lenses Try to Do Everything

Conventional lens design follows a straightforward rule: every part of every glass surface must bend light rays so they all meet as perfectly as possible on the sensor. Engineers judge success by how tightly a point of light is focused and how well the lens transfers contrast from the scene to the sensor across different sizes of detail. In practice, however, the outer and inner parts of a lens surface do not behave equally well. For simple lenses in particular, forcing all areas to obey the same strict rules can backfire: fixing a badly behaving zone often spoils a better one. To avoid these trade‑offs, classic high‑end solutions stack many carefully shaped elements, which boosts performance but also adds size, weight, and cost.
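The two yardsticks mentioned above, spot tightness and contrast transfer, are linked: the contrast curve (the modulation transfer function, or MTF) is the magnitude of the Fourier transform of the focused spot (the point spread function, or PSF). The sketch below illustrates that link in one dimension. The Gaussian spot shape and the helper names `mtf_from_psf` and `gaussian_psf` are assumptions made for illustration, not anything taken from the paper.

```python
import numpy as np

def mtf_from_psf(psf):
    """Return (frequencies, MTF) for a 1-D PSF sampled once per pixel."""
    psf = np.asarray(psf, dtype=float)
    psf = psf / psf.sum()               # normalize so that MTF at zero frequency is 1
    otf = np.fft.rfft(psf)              # complex optical transfer function
    freqs = np.fft.rfftfreq(psf.size)   # cycles per pixel, from 0 up to 0.5 (Nyquist)
    return freqs, np.abs(otf)

def gaussian_psf(n, sigma):
    """Hypothetical spot shape: a Gaussian of width sigma (pixels) on n samples."""
    x = np.arange(n) - n // 2
    return np.exp(-0.5 * (x / sigma) ** 2)

# A tight spot versus a wide spot.
freqs, mtf_tight = mtf_from_psf(gaussian_psf(64, 0.5))
_, mtf_wide = mtf_from_psf(gaussian_psf(64, 2.0))

# The last entry of each curve is the contrast at the finest detail the sensor samples.
print("tight spot, contrast at Nyquist:", mtf_tight[-1])
print("wide spot,  contrast at Nyquist:", mtf_wide[-1])
```

In this toy model the tight spot keeps a useful share of contrast at the finest detail, while the wide spot loses almost all of it.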

Letting Optics and Algorithms Share the Work

Modern “computational imaging” offers a different bargain: allow some blur and distortion in the optics, then remove them afterward in software. Decades of work have mapped out which kinds of lens flaws can be undone and which destroy crucial fine detail forever. The key is whether the system still carries enough high‑frequency information (the tiny variations that define hair strands, text edges, and distant window frames) up to the finest detail the sensor can resolve. If that fine detail survives, sophisticated restoration methods can revive a crisp image; if not, no amount of processing will help. The remaining challenge is how to shape a real lens so that it keeps exactly the right kinds of imperfections: those that algorithms can fix without sacrificing the smallest visible details.
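A small numerical experiment can make the "survives or is lost forever" distinction concrete. The sketch below blurs a pattern of the finest possible stripes with two toy blurs and then applies a textbook Wiener-style inverse filter. Everything here is an illustrative assumption: the two PSFs, the noise level, the `nsr` regularization constant, and the helper names. The paper's own restoration algorithm is more sophisticated and is not reproduced here.

```python
import numpy as np

def wiener_restore(measured, H, nsr=1e-3):
    """Regularized inverse filter; nsr is an assumed noise-to-signal constant."""
    W = np.conj(H) / (np.abs(H) ** 2 + nsr)
    return np.real(np.fft.ifft(np.fft.fft(measured) * W))

def rms(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

n = 64
rng = np.random.default_rng(0)
fine_stripes = np.tile([1.0, -1.0], n // 2)   # pattern at the sensor's Nyquist limit

# Blur A: a 2-pixel box. Its transfer function is zero at Nyquist, so the stripes vanish.
box_psf = np.array([0.5, 0.5])

# Blur B: a sharp core (40% of the energy) plus a wide halo (60%).
# It looks hazy, but the core keeps about 40% contrast at Nyquist.
dist = np.minimum(np.arange(n), n - np.arange(n))   # circular distance from pixel 0
halo = np.exp(-0.5 * (dist / 4.0) ** 2)
halo_psf = 0.6 * halo / halo.sum()
halo_psf[0] += 0.4

errors = {}
for name, psf in (("box", box_psf), ("halo", halo_psf)):
    H = np.fft.fft(psf, n=n)                          # transfer function (zero-padded PSF)
    blurred = np.real(np.fft.ifft(np.fft.fft(fine_stripes) * H))
    measured = blurred + 0.01 * rng.standard_normal(n)  # small sensor noise
    errors[name] = rms(wiener_restore(measured, H), fine_stripes)

err_box, err_halo = errors["box"], errors["halo"]
print("restoration error, box blur: ", err_box)
print("restoration error, halo blur:", err_halo)
```

The box blur erases the stripes before they reach the sensor, so the filter has nothing to amplify. The halo blur lowers overall contrast but still passes the stripes, and the same filter brings them back.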

Teaching a Lens Where to Pay Attention

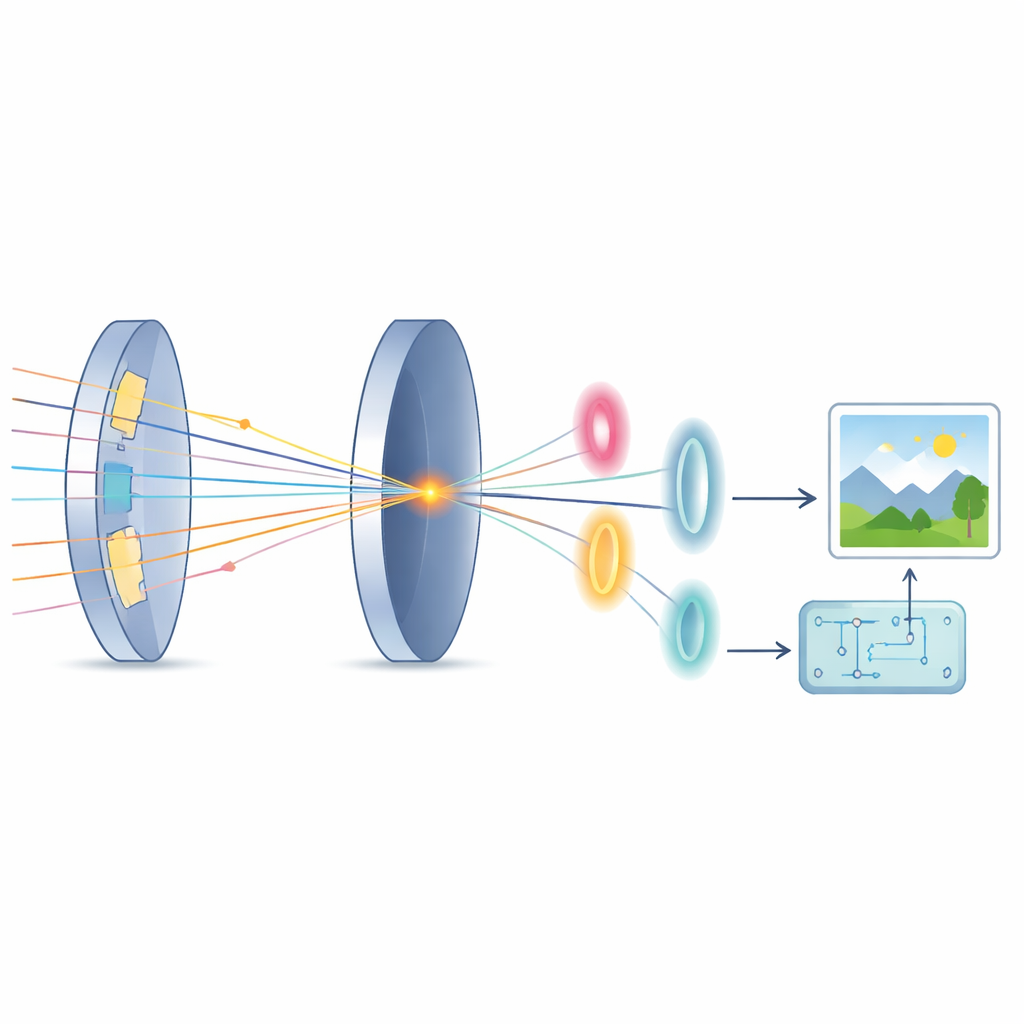

The authors propose an “optical attention” mechanism that imitates how our brains selectively process parts of a scene. They analyze each tiny patch of every lens surface and ask: if this spot alone handled the refraction, how close would it come to the ideal behavior? This measure becomes a kind of “attention score.” Zones that already bend light nearly perfectly are marked as attention regions and are refined to bring rays into sharp focus. Zones that struggle are tagged as non‑attention regions; instead of forcing them to focus, the design steers their rays so they miss the main focus in a controlled, harmless way. The physics analysis shows that if these misdirected rays land at special distances on the sensor, they barely disturb the highest spatial frequencies. A follow‑up restoration algorithm is then tuned, using modern optimization and deep learning tools, to remove the remaining low‑frequency blur while preserving the boosted fine detail.
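Why would the landing distance of stray rays matter? A deliberately simple toy model gives some intuition. It is not the paper's derivation. Assume a fraction of the light is perfectly focused and the rest is split equally into two points at a distance `offset_px` on either side. The transfer function of that spot pattern is the focused fraction plus the stray fraction times a cosine of frequency times offset, so at certain offsets the cosine returns to 1 at the sensor's finest frequency. The function name, the 60/40 energy split, and the neglect of pixel size are all assumptions.

```python
import numpy as np

def mtf_with_stray_rays(freq, focused_fraction, offset_px):
    """MTF of a toy PSF: a focused spike plus stray energy at +/- offset_px.

    freq is in cycles per pixel (0.5 is the sensor's Nyquist limit).
    """
    stray_fraction = 1.0 - focused_fraction
    otf = focused_fraction + stray_fraction * np.cos(2.0 * np.pi * freq * offset_px)
    return np.abs(otf)

nyquist = 0.5      # finest detail the sensor can sample
mid_freq = 0.25    # coarser detail, half the Nyquist frequency

for offset in (1, 2, 3, 4):
    at_nyquist = float(mtf_with_stray_rays(nyquist, 0.6, offset))
    at_mid = float(mtf_with_stray_rays(mid_freq, 0.6, offset))
    print(f"offset {offset} px: contrast at Nyquist {at_nyquist:.2f}, at mid frequency {at_mid:.2f}")
```

In this toy model, stray light landing an even number of pixels away leaves the contrast at the finest detail untouched, while contrast at coarser detail drops. That pattern is the kind of loss a restoration step is well placed to undo.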

From Bulky Glass Stacks to Smart Simple Lenses

To test this idea, the team redesigns two kinds of systems: a complex, multi‑element smartphone lens and a simple single lens. For the phone example, they replace a six‑element stack with just four elements, shortening the total length by nearly one‑fifth, yet achieve essentially the same sharpness after restoration. For the single‑lens case, they compare their method against both traditional design and a recent state‑of‑the‑art computational approach. Simulated and real images show that raw measurements from the attention‑based lens look blurrier at first glance, because some contrast at coarser scales of detail is sacrificed. But once processed, the recovered images are cleaner and more detailed, with significantly higher contrast at the finest resolvable patterns, in some cases more than doubling the ability to distinguish closely spaced lines across the field of view.

What This Means for Future Cameras

In everyday terms, this work says we can trade expensive glass for clever design and computation. By letting the lens concentrate its “effort” on the most useful parts of each surface, then relying on algorithms to tidy up the rest, cameras can become thinner and lighter without giving up fine detail. The proposed optical attention framework also offers a more transparent, physics‑based way to co‑design optics and software, rather than treating the lens as a black box. If further developed and adopted, this approach could help bring high‑performance imaging to smaller devices, from phones and drones to endoscopes and miniature scientific instruments.

Citation: Zongling Li, Fanjiao Tan, Rongshuai Zhang, and Qingyu Hou, "Optical attention mechanism for high-resolution computational imaging," Optica 12, 1647-1656 (2025). https://doi.org/10.1364/OPTICA.570600

Keywords: computational imaging, lens design, high-resolution cameras, image restoration, optical attention