Clear Sky Science

SCAD: self-supervised contrastive learning for allusion detection in Chinese poems

Hidden Messages in Ancient Verses

Classical Chinese poems are full of hidden references to famous stories, legends, and historical figures. These "allusions" add emotional depth and cultural richness, but they also make the poems hard to understand for modern readers—and for computers. This paper introduces a new artificial intelligence system, SCAD, that can automatically uncover these buried references at large scale, opening the door to smarter digital tools for reading, teaching, and researching Chinese literature.

Why Allusions Matter in Poetry

For centuries, Chinese poets have relied on allusions as a kind of literary shorthand. By hinting at a well-known tale—such as an idyllic hidden village or a grieving river goddess—they could express complex feelings in just a few characters. The trouble is that these hints are often subtle. A poem may never mention the name of the story it draws on; instead it evokes a place, an object, or an image tied to that tradition. Because the same word can point to different stories depending on context, even advanced computer systems struggle to reliably recognize which allusion a poem is using, especially when there are thousands of possible candidates and limited labeled training data.

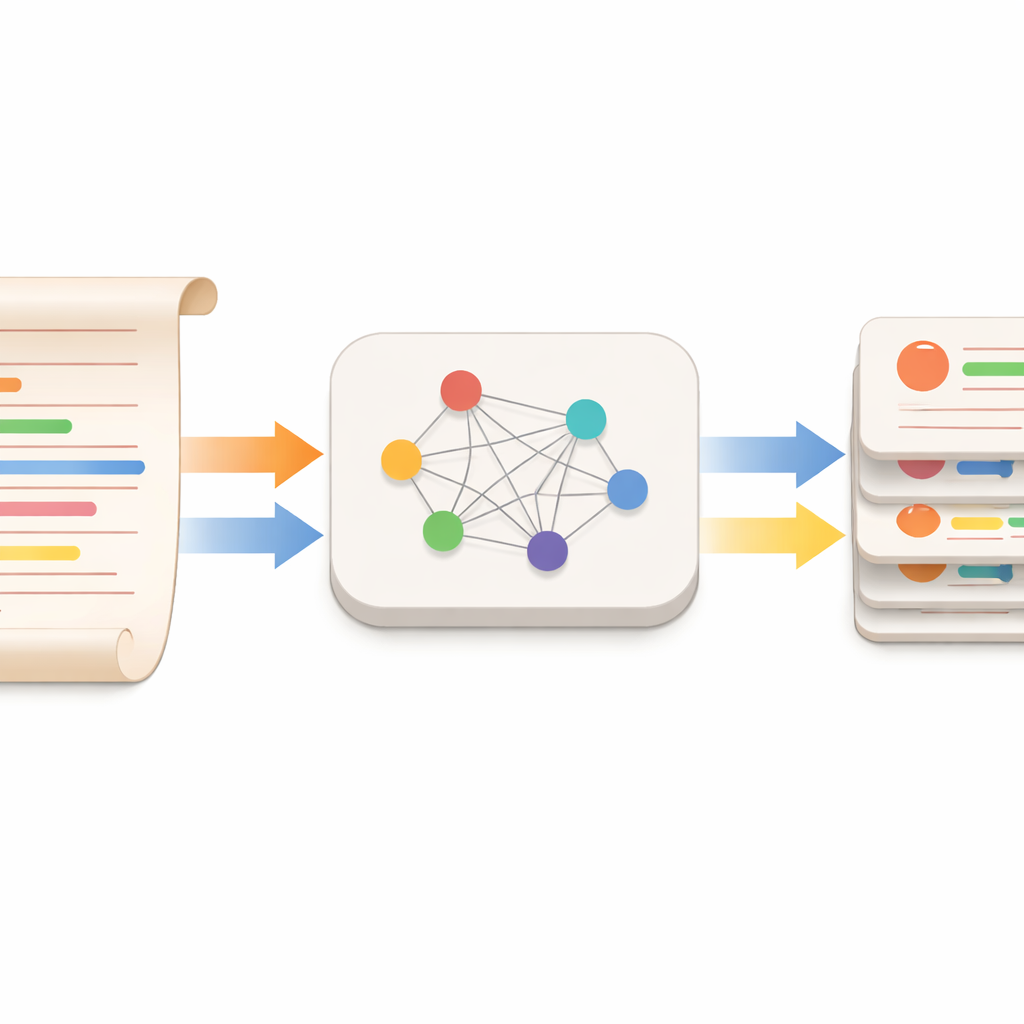

Teaching Machines to Learn from Comparisons

The authors tackle this challenge with a strategy called self-supervised contrastive learning, adapted specially for classical Chinese. Instead of asking humans to label every poem with the correct allusion, they build a large collection of poem–allusion pairs from a curated website that documents how over 14,000 poems quote 1,025 specific allusions. For each real pair—a poem that truly uses a certain story—they automatically generate "negative" pairs by matching the same poem with many unrelated allusions. SCAD learns to distinguish the genuine pair from the false ones by pulling related poem–allusion texts closer together in its internal representation space and pushing unrelated ones apart.
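The pairing step described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' pipeline: the function name `build_pairs`, the placeholder ids, the number of negatives per positive, and the use of uniform random sampling are all assumptions made for the example.

```python
import random

def build_pairs(poem_to_allusions, all_allusions, num_negatives, seed=0):
    """Return (poem_id, allusion_id, label) triples.

    label 1 marks a documented poem-allusion pair; label 0 marks a
    sampled pairing of the same poem with an allusion it is not
    recorded as using.
    """
    rng = random.Random(seed)
    pairs = []
    for poem_id, positives in sorted(poem_to_allusions.items()):
        positives = set(positives)
        # Negative candidates: every allusion not documented for this poem.
        candidates = sorted(a for a in all_allusions if a not in positives)
        k = min(num_negatives, len(candidates))
        for allusion_id in sorted(positives):
            pairs.append((poem_id, allusion_id, 1))
            for negative_id in rng.sample(candidates, k):
                pairs.append((poem_id, negative_id, 0))
    return pairs

# Toy data with placeholder ids (hypothetical, not taken from the dataset).
poem_to_allusions = {"poem_1": ["allusion_a"], "poem_2": ["allusion_b"]}
all_allusions = ["allusion_a", "allusion_b", "allusion_c", "allusion_d"]

pairs = build_pairs(poem_to_allusions, all_allusions, num_negatives=2)
for poem_id, allusion_id, label in pairs:
    print(poem_id, allusion_id, label)
```

Because the negatives are generated automatically from the existing poem-allusion records, no additional human labeling is needed to produce the contrast the model learns from.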

A Model Tuned for Ancient Chinese Texts

Under the hood, SCAD builds on SikuBert, a language model trained on large collections of premodern Chinese writing. The system feeds both the poem and the allusion (including its original source passage) into a joint encoder, allowing the model to focus on how specific phrases in a poem interact with details from the story. Lightweight "adapter" modules are added to this encoder so that only a small number of new parameters need to be trained, making fine-tuning efficient. An improved loss function gives extra weight to the hardest negative examples—the misleading allusions that the model is tempted to select—so that SCAD learns from its most common mistakes rather than from easy cases alone.
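To make the idea of reweighting hard negatives concrete, here is a small sketch of one common way to do it. The summary does not give SCAD's actual formula, so everything here is an assumption: the function name, the temperature `tau`, the sharpness parameter `beta`, and the choice of softmax-style weights over the negative scores.

```python
import math

def hard_negative_weighted_loss(pos_score, neg_scores, tau=0.1, beta=1.0):
    """Contrastive loss for one poem with extra weight on hard negatives.

    pos_score  : similarity between the poem and its true allusion
    neg_scores : similarities between the poem and unrelated allusions
    tau        : temperature (assumed value)
    beta       : how strongly high-scoring negatives are up-weighted;
                 beta = 0 gives every negative weight 1, which is the
                 plain InfoNCE-style loss
    """
    n = len(neg_scores)
    # Softmax-style weights over negatives, rescaled to sum to n.
    top = max(neg_scores)
    raw = [math.exp(beta * (s - top)) for s in neg_scores]
    total = sum(raw)
    weights = [n * r / total for r in raw]

    pos_term = math.exp(pos_score / tau)
    neg_term = sum(w * math.exp(s / tau) for w, s in zip(weights, neg_scores))
    return -math.log(pos_term / (pos_term + neg_term))

# Toy similarities: the first negative is a misleading near-miss.
pos = 0.8
negs = [0.7, 0.1, -0.2]
plain = hard_negative_weighted_loss(pos, negs, beta=0.0)
weighted = hard_negative_weighted_loss(pos, negs, beta=2.0)
print(plain, weighted)
```

In this sketch the near-miss negative dominates the weighted loss, so the gradient signal concentrates on the allusions the model is most likely to confuse with the correct one.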

Outperforming Existing Approaches

When tested against a range of alternatives—including earlier deep-learning systems, rule-based methods, and even large general-purpose language models—SCAD proves markedly more accurate at naming the correct allusion in a poem. It not only ranks the right answer higher on average but also identifies it as the top choice for roughly four out of five test cases, a clear gain over previous techniques. Ablation studies show that each design choice contributes: using classical rather than modern language pretraining, including the full source text of the allusion, adding adapters, and reweighting difficult negative examples all improve performance, especially on rare or subtle allusions.
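The two kinds of result mentioned above, average ranking quality and how often the right answer comes first, are typically measured with mean reciprocal rank and top-1 hit rate. The sketch below assumes those two metrics; the summary does not name the exact ones used, and the scores are toy values rather than results from the paper.

```python
def rank_of_gold(scores, gold):
    """1-based rank of the gold allusion when candidates are sorted by descending score."""
    ordered = sorted(scores, key=scores.get, reverse=True)
    return ordered.index(gold) + 1

def hits_at_k(ranks, k):
    """Fraction of test poems whose gold allusion is ranked within the top k."""
    return sum(r <= k for r in ranks) / len(ranks)

def mean_reciprocal_rank(ranks):
    """Average of 1/rank; higher means the gold allusion tends to rank nearer the top."""
    return sum(1.0 / r for r in ranks) / len(ranks)

# Toy model outputs: candidate allusion -> score, plus the gold label.
examples = [
    ({"a": 0.9, "b": 0.2, "c": 0.1}, "a"),
    ({"a": 0.3, "b": 0.8, "c": 0.1}, "a"),
    ({"a": 0.1, "b": 0.2, "c": 0.7}, "c"),
    ({"a": 0.5, "b": 0.4, "c": 0.1, "d": 0.05}, "d"),
]

ranks = [rank_of_gold(scores, gold) for scores, gold in examples]
hits1 = hits_at_k(ranks, 1)
mrr = mean_reciprocal_rank(ranks)
print(ranks, hits1, mrr)
```

A top-1 hit rate of about 0.8 would correspond to the "four out of five" figure quoted in the text.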

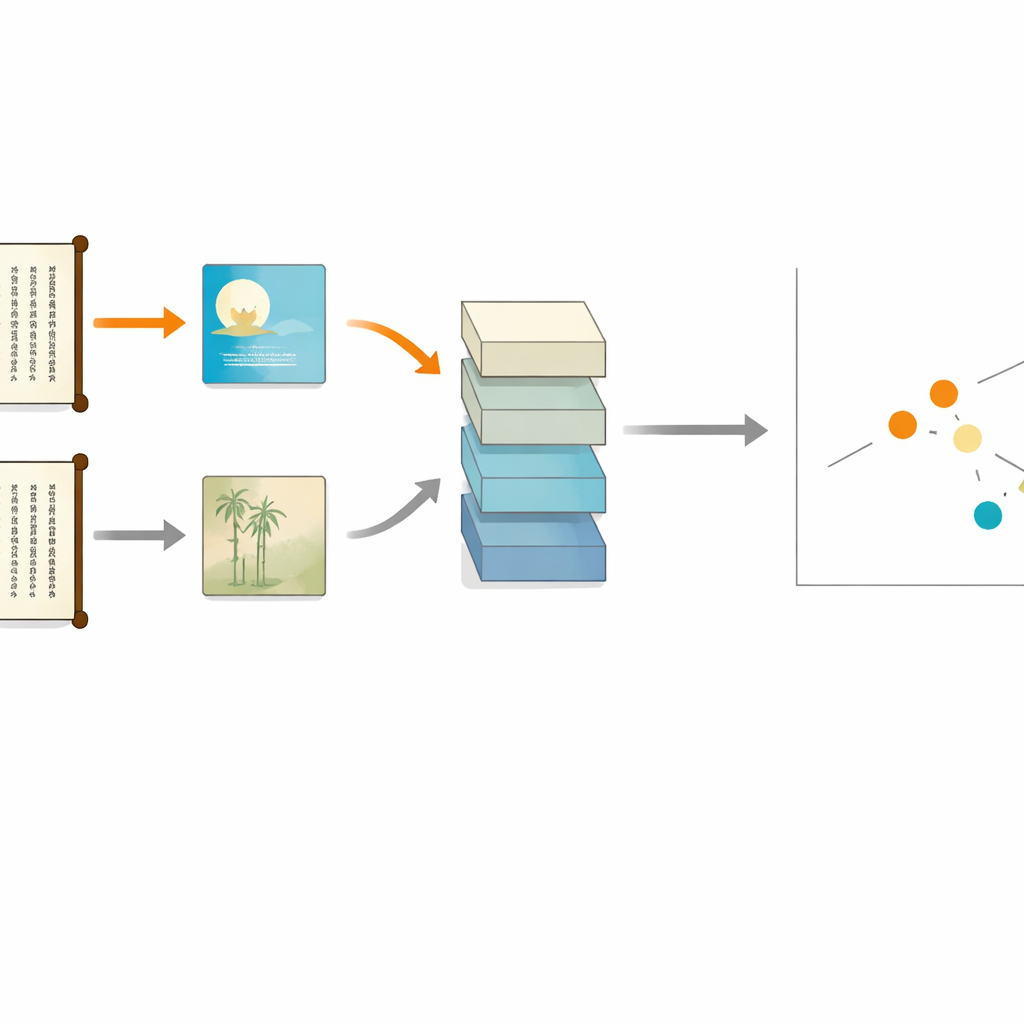

Discovering New Links and Building Knowledge Maps

Beyond raw accuracy, the authors explore how SCAD can generalize and explain its decisions. In "zero-shot" tests, they deliberately remove certain famous allusions and all related poems from training, then ask SCAD to recognize them anyway. The system still performs strongly, suggesting that it has learned general patterns about how poets hint at stories rather than memorizing a fixed checklist. To peer inside these decisions, the team applies an interpretability method called LIME, which highlights the specific words in a poem that most influence SCAD’s prediction. Using these signals, they extract nearly 10,000 "allusion words" and assemble them into a knowledge graph linking poems, evocative phrases, and the stories they recall—a resource that can power search, study tools, and interactive quizzes.
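The path from word-level explanations to a knowledge graph can be illustrated with a simplified stand-in. The sketch below does not implement LIME; it uses plain leave-one-word-out occlusion, a cruder relative of the same idea, and a toy scoring function in place of the trained model. The tokens, cue weights, threshold, and ids are all hypothetical.

```python
def occlusion_importance(tokens, score_fn):
    """Score drop caused by deleting each token (leave-one-out, not LIME)."""
    base = score_fn(tokens)
    importance = {}
    for i, token in enumerate(tokens):
        reduced = tokens[:i] + tokens[i + 1:]
        drop = base - score_fn(reduced)
        # Keep the largest drop if a token occurs more than once.
        importance[token] = max(importance.get(token, 0.0), drop)
    return importance

def toy_score(tokens):
    """Stand-in for the model's confidence that a poem uses one given allusion."""
    cues = {"fisherman": 0.6, "blossom": 0.3}  # hypothetical cue weights
    return sum(cues.get(t, 0.0) for t in set(tokens))

def graph_edges(poem_id, allusion_id, importance, threshold):
    """Emit (poem, word, allusion) triples for words above the threshold."""
    return [
        (poem_id, word, allusion_id)
        for word, value in sorted(importance.items())
        if value >= threshold
    ]

tokens = ["fisherman", "follows", "blossom", "stream"]
importance = occlusion_importance(tokens, toy_score)
edges = graph_edges("poem_1", "allusion_a", importance, threshold=0.2)
print(importance)
print(edges)
```

Collecting such triples across many poems yields the kind of structure described above, in which each evocative word links the poems that contain it to the story it recalls.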

Bringing Ancient Hints into the Digital Age

In essence, this work shows that with the right training signals and architecture, machines can begin to pick up on the literary winks and nods embedded in classical Chinese poetry. SCAD not only detects which story a poem is quietly invoking but can also generalize to new allusions and help map the intricate web of references that tie poems to one another and to the broader cultural tradition. For readers, students, and scholars, systems built on this approach could become guides that illuminate the hidden layers of meaning in some of the world’s most allusion-rich literature.

Citation: Shi, B., Bu, W., Li, X. et al. SCAD: self-supervised contrastive learning for allusion detection in Chinese poems. Humanit Soc Sci Commun 13, 293 (2026). https://doi.org/10.1057/s41599-026-06627-z

Keywords: classical Chinese poetry, literary allusions, contrastive learning, digital humanities, natural language processing