Clear Sky Science

Temporal domains of anticipatory nasal coarticulation: evidence from contrastive, phonologized and neutral nasal systems

How Our Voices Hint at What Comes Next

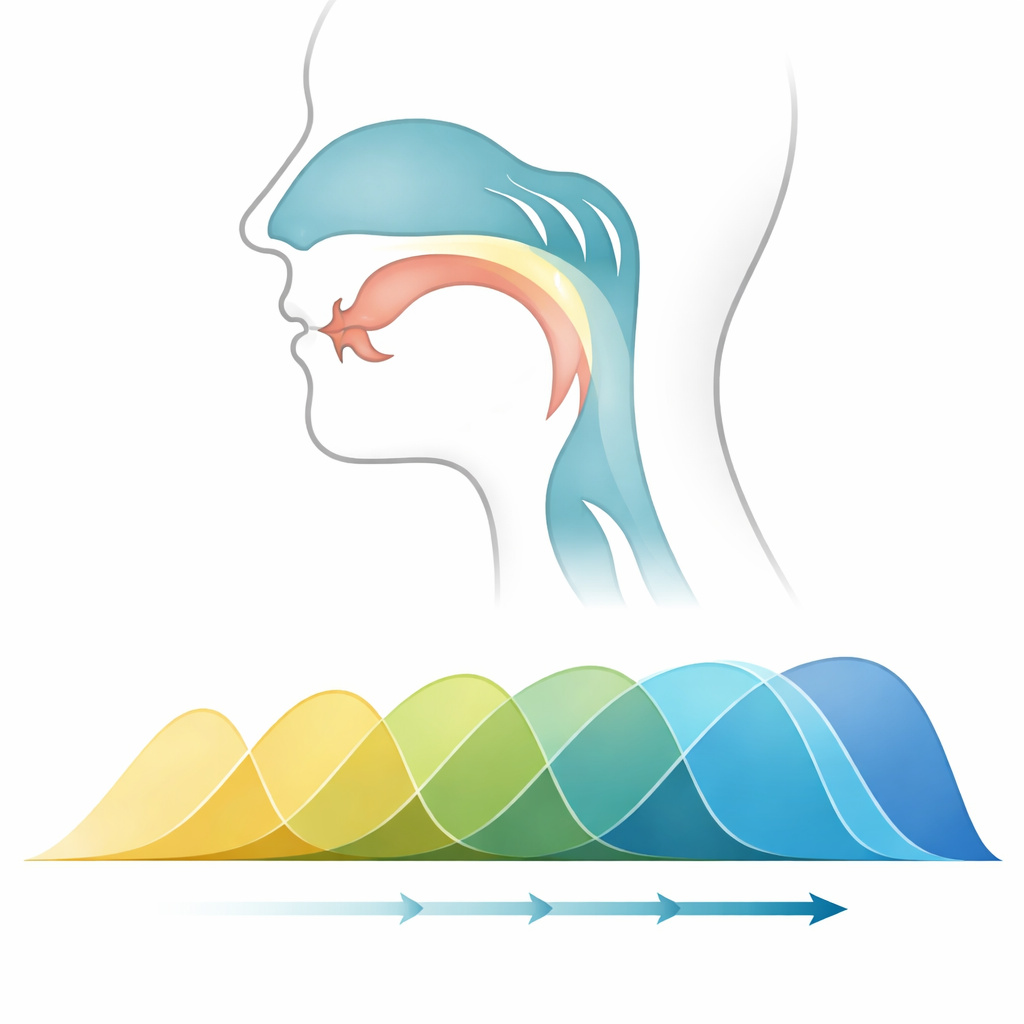

When we talk, our speech organs begin preparing for upcoming sounds before we actually produce them. This subtle "head start", especially for nasal sounds like m and n, is so automatic that we rarely notice it, yet it leaves a measurable trace in the sound waves of speech. This article explores how speakers of three major languages (American English, French, and German) use this hidden timing differently, and what that reveals about how languages shape both the way we move when we speak and the way we perceive speech.

Hidden Clues Before Nasal Sounds

Nasal sounds such as m and n are made by letting air flow through the nose. Well before those sounds arrive, the soft palate inside the mouth can begin to lower, quietly adding a nasal quality to earlier parts of the word. This study focuses on this early onset of nasal quality, known as "anticipatory nasal coarticulation", and asks a simple but powerful question: does a language’s sound system control how early this nasal cue begins, or is it just a side effect of how the speech organs move? The author compares three systems: French, where nasal vowels contrast with oral vowels and can distinguish words; American English, where vowels often become nasal before m or n without forming separate vowel categories; and German, whose sound system largely lacks dedicated nasal vowel patterns.

Careful Listening to Many Voices

To probe these differences, the researcher recorded 93 native speakers (roughly 30 per language) reading specially chosen word pairs, such as pairs that differ only in whether a consonant is nasal or oral. Recordings were made with equipment that captured sound from the mouth and from the nose on separate channels. Instead of relying on a human judge to decide by eye where nasalization began, the study used a mathematical curve-fitting technique to estimate the point at which nasal energy in nasal words began to pull away from that in otherwise similar oral words. This approach, built on sigmoid (S-shaped) curves, made it possible to compare timing patterns across thousands of spoken tokens and across languages in a uniform, objective way.
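For readers curious about how an S-curve method of this kind might work, here is a minimal Python sketch. It is not the study’s actual procedure: it assumes the nasal-word and oral-word energy curves have already been averaged and time-normalized to a 0 to 1 scale, fits a four-parameter logistic (sigmoid) curve to their difference by simple grid search, and defines the onset as the moment the fitted curve completes 10% of its rise. That threshold, the parameter grids, and the names `fit_sigmoid` and `divergence_onset` are hypothetical choices made purely for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class SigmoidFit:
    baseline: float   # b: level before the rise
    amplitude: float  # a: total height of the rise
    midpoint: float   # t0: time at which half of the rise is reached
    slope: float      # k: steepness of the rise
    sse: float        # sum of squared residuals for these parameters


def fit_sigmoid(times, values, midpoints, slopes):
    """Fit y = b + a / (1 + exp(-k * (t - t0))) by grid search over (t0, k).

    For a fixed (t0, k) the S-shape s(t) is known, so the best b and a
    follow from ordinary least-squares regression of y on s.
    """
    n = len(times)
    y_mean = sum(values) / n
    best = None
    for t0 in midpoints:
        for k in slopes:
            s = [1.0 / (1.0 + math.exp(-k * (t - t0))) for t in times]
            s_mean = sum(s) / n
            var_s = sum((si - s_mean) ** 2 for si in s)
            if var_s == 0.0:
                continue  # flat shape: the amplitude cannot be estimated
            cov = sum((si - s_mean) * (yi - y_mean) for si, yi in zip(s, values))
            a = cov / var_s
            b = y_mean - a * s_mean
            sse = sum((yi - (b + a * si)) ** 2 for si, yi in zip(s, values))
            if best is None or sse < best.sse:
                best = SigmoidFit(b, a, t0, k, sse)
    return best


def divergence_onset(times, nasal_curve, oral_curve, p=0.1):
    """Estimate when nasal-word energy starts to pull away from oral-word energy.

    Hypothetical onset criterion: the time at which the fitted sigmoid has
    completed a fraction p of its rise (solving s(t) = p for t).
    """
    diff = [nv - ov for nv, ov in zip(nasal_curve, oral_curve)]
    midpoints = [i / 100 for i in range(101)]  # candidate t0 values on 0..1
    slopes = range(1, 51)                       # candidate k values
    fit = fit_sigmoid(times, diff, midpoints, slopes)
    onset = fit.midpoint + math.log(p / (1 - p)) / fit.slope
    return onset, fit


if __name__ == "__main__":
    # Synthetic, noise-free example with invented numbers (not study data).
    times = [i / 100 for i in range(101)]
    oral = [0.05] * len(times)
    nasal = [0.05 + 0.30 / (1 + math.exp(-20 * (t - 0.6))) for t in times]
    onset, fit = divergence_onset(times, nasal, oral)
    print(round(fit.midpoint, 2), fit.slope, round(onset, 2))  # → 0.6 20 0.49
```

A grid search keeps the sketch dependency-free and easy to follow; a real analysis would more likely use a proper nonlinear optimizer and would have to cope with noisy recordings from many speakers.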

Three Languages, Three Timing Styles

The timing patterns that emerged were strikingly different. American English speakers showed the earliest and broadest spread of nasal influence: in many cases, the nasal quality began even before the vowel that directly precedes the nasal consonant, spilling back across multiple sounds. French speakers showed the tightest control, with nasalization starting closer to the nasal consonant, consistent with the need to keep nasal and oral vowels clearly distinct. German speakers fell in between in average timing, but with much greater person-to-person variability. In German, some speakers behaved more like English speakers, others more like French speakers, and many showed idiosyncratic patterns, suggesting that the language’s sound system constrains this timing only weakly.

From Body Mechanics to Learned Patterns

These timing patterns matter because they blur the line between raw physiology and learned structure. The broad, regular nasal spread in American English appears to be not just a mechanical lag of the soft palate but a stable, learned feature of the language: listeners in a follow-up test reliably used this early nasal cue to tell sounds apart in different contexts. By contrast, French seems to keep nasal spread on a short leash to protect its distinctive nasal vowels. German’s variability points to a system in which, lacking strong rules, speakers’ individual anatomy and habits play a larger role. The results also show that nasal influence often begins well before the pre-nasal vowel, contradicting models that assume coarticulatory effects stay neatly confined to a single adjacent segment.

Why This Matters for Learners and Machines

The findings have practical consequences outside the laboratory. For second-language learners, especially English speakers learning French, the deeply ingrained habit of letting nasalization spread early and widely may make it hard to adopt French’s stricter timing. For speech technologies, such as automatic speech recognition and synthesis, the study suggests that one-size-fits-all models of nasal timing are likely to fail: English systems must handle long-range nasal cues, French systems must keep them narrowly focused, and German systems must adapt to strong individual differences. By revealing how each language quietly choreographs the timing of nasal sounds, the study offers a window into how our sound systems harness both the flexibility of the body and the structure of the mind.

Citation: Lei, J. Temporal domains of anticipatory nasal coarticulation: evidence from contrastive, phonologized and neutral nasal systems. Humanit Soc Sci Commun 13, 255 (2026). https://doi.org/10.1057/s41599-026-06601-9

Keywords: speech production, nasalization, cross-linguistic phonetics, coarticulation, second language pronunciation