Clear Sky Science

Surgical RARP copilot: a vision language model for robot-assisted radical prostatectomy

Smarter Help in the Operating Room

Modern prostate cancer surgery is performed with sophisticated robots and cameras, yet surgeons still have to juggle complex decisions, fast-changing views, and constant questions from trainees and staff. This article introduces an artificial intelligence "copilot" that can watch the live surgery video and answer spoken questions on the spot, much like a highly knowledgeable assistant. For patients, this points toward safer, more consistent operations; for surgeons, it hints at a future where expert guidance and teaching are available in every operating room.

A Digital Assistant That Can See and Talk

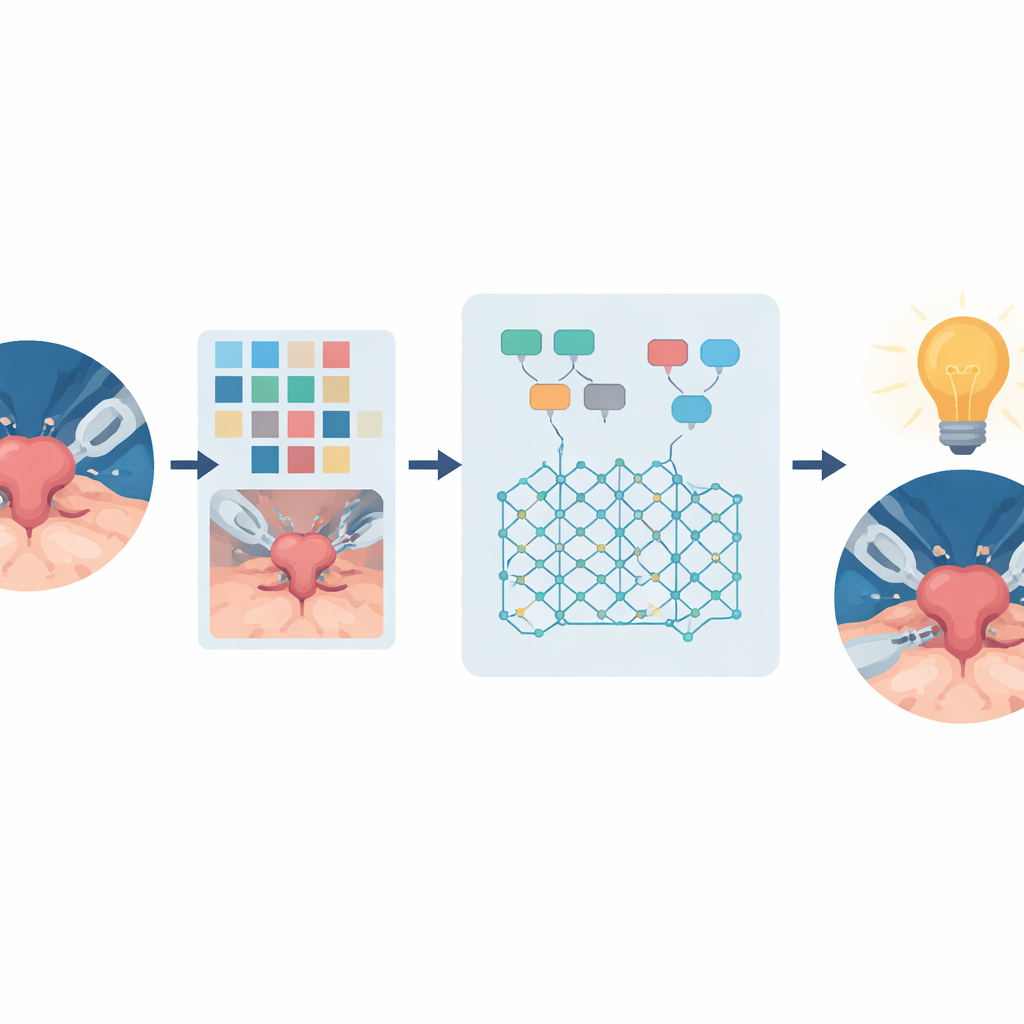

The researchers built the Surgical RARP Copilot for a specific operation: robot-assisted radical prostatectomy (RARP), the standard surgery for many men with localized prostate cancer. In this procedure, the surgeon controls a robotic system that removes the prostate through small keyhole incisions, guided by a high-definition camera inside the body. Conventional chat-based AI systems process only text, so they cannot interpret what the surgical camera shows. The Copilot instead combines computer vision with a large language model, allowing it to "see" the operative field and answer in natural language about what is happening, which instruments are in view, or what the next steps of the operation should be.

Teaching the Copilot Surgical Know-How

To give the Copilot meaningful surgical expertise, the team assembled a specialized training dataset rather than relying on general internet images. They collected nearly 20,000 labeled frames from recorded prostate operations, marking the positions of instruments and organs and noting the current step of the procedure. They also added approximate depth information, so the system could infer which objects are in front of, or touching, one another. Using expert-designed rules, these labels were converted into detailed written captions describing what each frame showed and where in the operation it occurred. Large language models were then prompted to adopt different "personas", ranging from senior surgeons to curious children, and to generate more than one million question–answer pairs from those captions. A separate model checked these pairs for logical consistency, and flawed examples were filtered out before training.
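The step that turns frame labels into written captions can be pictured with a minimal Python sketch. It assumes a hypothetical label format (object name, category, normalized bounding box, and a relative depth value) and two invented rules: overlapping objects at similar depth are described as touching, and otherwise the closer one is described as in front. The class names, the `touch_tol` threshold, and the example frame are all illustrative assumptions, not the authors' actual rules or data.

```python
from dataclasses import dataclass


# Hypothetical label schema for illustration; the study's real annotation format may differ.
@dataclass
class ObjectLabel:
    name: str                                # e.g. "needle driver" or "bladder"
    kind: str                                # "instrument" or "anatomy"
    box: tuple[float, float, float, float]   # (x_min, y_min, x_max, y_max), normalized to [0, 1]
    depth: float                             # approximate relative depth; smaller = closer to the camera


@dataclass
class FrameLabel:
    phase: str                               # annotated surgical step for this frame
    objects: list[ObjectLabel]


def boxes_overlap(a, b) -> bool:
    """Return True if two axis-aligned boxes intersect."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def spatial_relations(objects, touch_tol=0.05):
    """Illustrative rules: overlapping objects at similar depth 'touch';
    otherwise the one with the smaller depth is 'in front of' the other."""
    relations = []
    for i, a in enumerate(objects):
        for b in objects[i + 1:]:
            if not boxes_overlap(a.box, b.box):
                continue
            if abs(a.depth - b.depth) <= touch_tol:
                relations.append(f"the {a.name} is touching the {b.name}")
            else:
                front, back = (a, b) if a.depth < b.depth else (b, a)
                relations.append(f"the {front.name} is in front of the {back.name}")
    return relations


def caption(frame: FrameLabel) -> str:
    """Turn one frame's labels into a plain-language caption."""
    tools = [o.name for o in frame.objects if o.kind == "instrument"]
    anatomy = [o.name for o in frame.objects if o.kind == "anatomy"]
    parts = [f"Surgical phase: {frame.phase}."]
    if tools:
        parts.append("Instruments visible: " + ", ".join(tools) + ".")
    if anatomy:
        parts.append("Anatomy visible: " + ", ".join(anatomy) + ".")
    for rel in spatial_relations(frame.objects):
        parts.append(rel[0].upper() + rel[1:] + ".")
    return " ".join(parts)


# Made-up example frame (not data from the study).
frame = FrameLabel(
    phase="bladder neck dissection",
    objects=[
        ObjectLabel("needle driver", "instrument", (0.55, 0.50, 0.70, 0.70), 0.43),
        ObjectLabel("bladder", "anatomy", (0.30, 0.40, 0.80, 0.90), 0.45),
        ObjectLabel("suction irrigator", "instrument", (0.25, 0.35, 0.45, 0.55), 0.30),
    ],
)
print(caption(frame))
```

For this toy frame the needle driver overlaps the bladder at nearly the same depth, so it is described as touching, while the suction irrigator overlaps the bladder but sits closer to the camera, so it is described as in front. A text caption of this kind is what the persona-driven question generation would then work from.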

How Well the Copilot Performs

Once trained, the Copilot was tested in several ways. On a held-out set of synthetic question–answer pairs, fine-tuning raised the share of at least partially correct answers from about 61% to 83%, and the share of fully correct answers from 0% to 59%. Human reviewers then asked 650 questions about prerecorded surgical images; nearly seven in ten answers were judged fully correct. The system also handled classic computer-vision tasks without additional retraining: it identified which step of the prostatectomy was underway from a single video frame with 82% accuracy, recognized surgical instruments with an F1-score of 94%, and estimated how much time remained in the operation. These results suggest that a single, unified model can match specialized tools on multiple tasks while still engaging in open-ended conversation.
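The two vision scores can be made concrete with a small Python sketch. Phase accuracy is the fraction of frames assigned the correct surgical step. Instrument recognition is different because several instruments can be visible at once, so each instrument is scored separately; the F1-score is the harmonic mean of precision (how many reported instruments were really present) and recall (how many present instruments were found). The article does not say how the 94% figure was averaged, so the micro-averaging used here is an assumption, and all labels below are made up.

```python
def phase_accuracy(predicted, annotated):
    """Fraction of frames whose predicted surgical step matches the annotation."""
    if len(predicted) != len(annotated) or not annotated:
        raise ValueError("need two non-empty lists of equal length")
    return sum(p == a for p, a in zip(predicted, annotated)) / len(annotated)


def micro_f1(predicted_sets, annotated_sets):
    """Micro-averaged F1 for multi-label instrument presence.

    Counts true positives, false positives and false negatives over all
    frames, then takes the harmonic mean of precision and recall.
    """
    tp = fp = fn = 0
    for pred, gold in zip(predicted_sets, annotated_sets):
        tp += len(pred & gold)   # instruments correctly reported
        fp += len(pred - gold)   # instruments reported but not present
        fn += len(gold - pred)   # instruments present but missed
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy example with made-up labels.
acc = phase_accuracy(
    ["nerve sparing", "vesicourethral anastomosis", "vesicourethral anastomosis"],
    ["nerve sparing", "vesicourethral anastomosis", "bladder neck dissection"],
)  # 2 of 3 frames correct
f1 = micro_f1(
    [{"needle driver"}, {"monopolar scissors", "suction irrigator"}],
    [{"needle driver", "bipolar forceps"}, {"monopolar scissors"}],
)  # 2 hits, 1 false alarm, 1 miss
print(acc, f1)
```

Both toy scores come out at two thirds: the instrument example has 2 true positives, 1 false positive and 1 false negative, giving precision and recall of 2/3 each. F1 is preferred over plain accuracy here because most instruments are absent from most frames, so simply predicting "absent" would already look deceptively accurate.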

Putting AI Into a Live Operation

The most striking demonstration took place in a real operating room. The Copilot was deployed on a powerful edge computer connected directly to the robotic surgery video feed. During a live prostatectomy performed on a different robotic platform from the one used to record the training data, an audience of surgeons and engineers submitted 276 questions from their smartphones. After irrelevant and duplicate queries were filtered out, experts judged that the Copilot answered about 77% of the remaining questions correctly, comparable to its offline performance. The system began its reply after roughly half a second and generated text fast enough to feel interactive, all while applying safety filters and behaving conservatively when uncertain.
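The two speed figures correspond to two common ways of timing a streamed reply: time to first token (how long before the answer starts appearing) and the generation rate afterward. Below is a minimal Python sketch, assuming a hypothetical per-answer record of timestamps; the article reports only the roughly half-second start delay, so the timings are invented for illustration.

```python
from dataclasses import dataclass


# Hypothetical timing record for one streamed answer; all times in seconds.
@dataclass
class AnswerTiming:
    question_received: float    # when the question reached the model
    token_times: list[float]    # when each output token was emitted, in order


def time_to_first_token(t: AnswerTiming) -> float:
    """Delay the user waits before the answer starts appearing."""
    return t.token_times[0] - t.question_received


def tokens_per_second(t: AnswerTiming) -> float:
    """Streaming speed after the first token (tokens emitted per second)."""
    if len(t.token_times) < 2:
        raise ValueError("need at least two tokens to measure a rate")
    elapsed = t.token_times[-1] - t.token_times[0]
    return (len(t.token_times) - 1) / elapsed


# Made-up timings, not measurements from the study.
timing = AnswerTiming(question_received=0.0, token_times=[0.5, 0.55, 0.60, 0.65, 0.70])
print(time_to_first_token(timing), tokens_per_second(timing))
```

Here the answer starts after half a second and then streams at about 20 tokens per second. Reporting the two separately is useful because the initial wait largely determines whether a system feels responsive, while the streaming rate mainly needs to keep pace with a person reading the reply.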

What This Means for Future Surgery

For lay readers, the key message is that an AI system can now watch a delicate cancer operation in real time and give useful, context-aware answers about what is happening and what should happen next. The current Copilot is limited to one type of surgery, analyzes individual snapshots rather than remembering the full video, and cannot yet access complete medical records. Even so, it demonstrates that multimodal AI can be safely brought into the operating room. As similar systems are expanded to more procedures, connected to richer patient data, and rigorously tested for clinical impact, they could support training, improve team communication, and ultimately help make complex surgery safer and more transparent.

Citation: Bogaert, W., Remy, F., Tejero, J.G. et al. Surgical RARP copilot: a vision language model for robot-assisted radical prostatectomy. npj Digit. Surg. 1, 3 (2026). https://doi.org/10.1038/s44484-025-00003-1

Keywords: robotic surgery, prostate cancer, surgical AI, vision language model, operating room assistance