Clear Sky Science

Video-based cattle behaviour detection for digital twin development in precision dairy systems

Why Watching Cows Matters

On modern dairy farms, knowing what each cow is doing—eating, resting, drinking, or chewing her cud—is directly tied to milk yield, health, and welfare. Yet farmers rarely have the time to watch every animal around the clock. This study shows how ordinary barn video cameras, combined with advanced computer vision, can automatically track the daily lives of cows and feed that information into a digital “virtual twin” of the herd. Such systems could help farmers fine‑tune nutrition, catch illness earlier, and manage herds more efficiently, all without attaching gadgets to the animals.

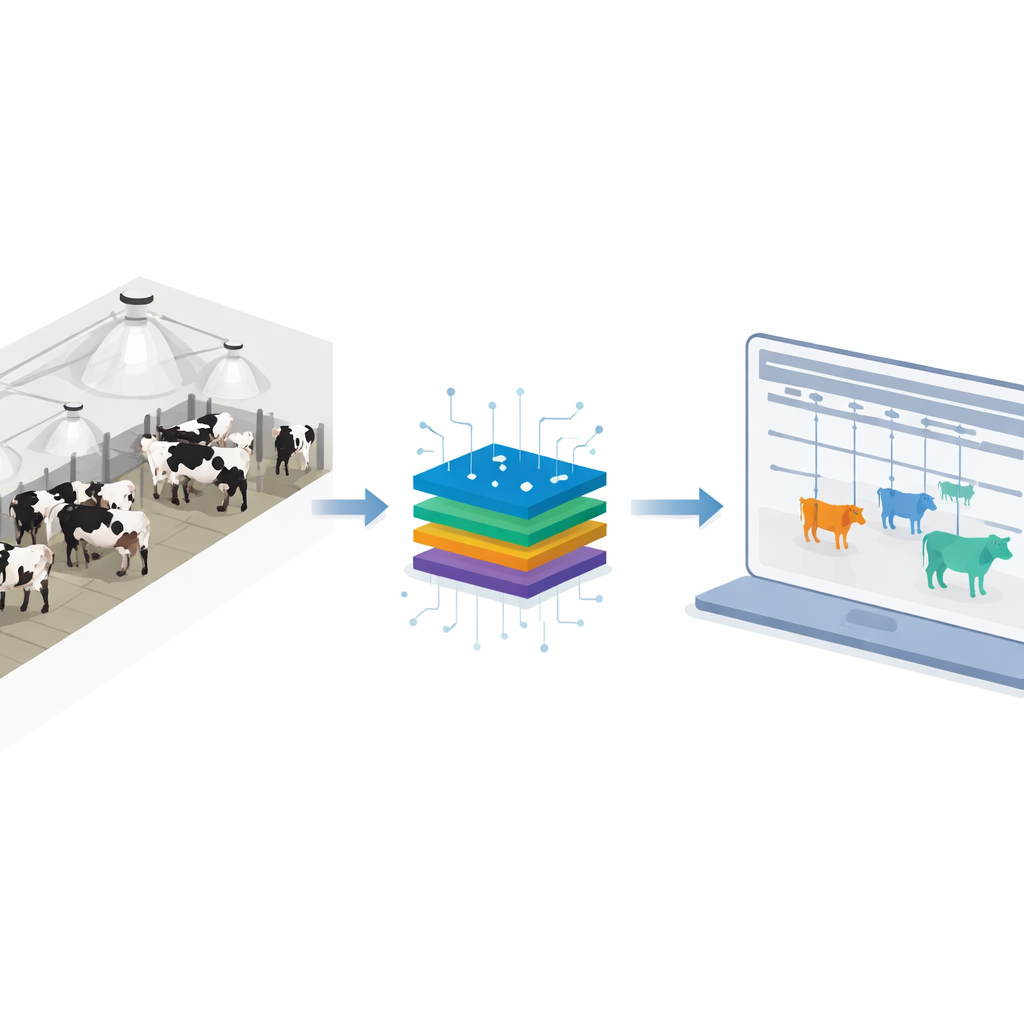

From Real Barn to Virtual Herd

The researchers set out to build the behavioural “eyes and ears” for a dairy digital twin—a virtual model of a barn and its cows that updates in near real time. They focused on seven everyday activities that matter most for health and production: standing, lying, feeding while standing, feeding while lying, drinking, and ruminating (chewing cud) while standing or lying. Instead of relying on wearable sensors, they used overhead and angled security cameras in a commercial-style tie‑stall barn housing about 80 Holstein cows. Continuous video was turned into short, 10‑second clips centered on individual cows, forming the raw material for teaching computers to recognize what each animal was doing.

Teaching Computers to Recognize Cow Behaviour

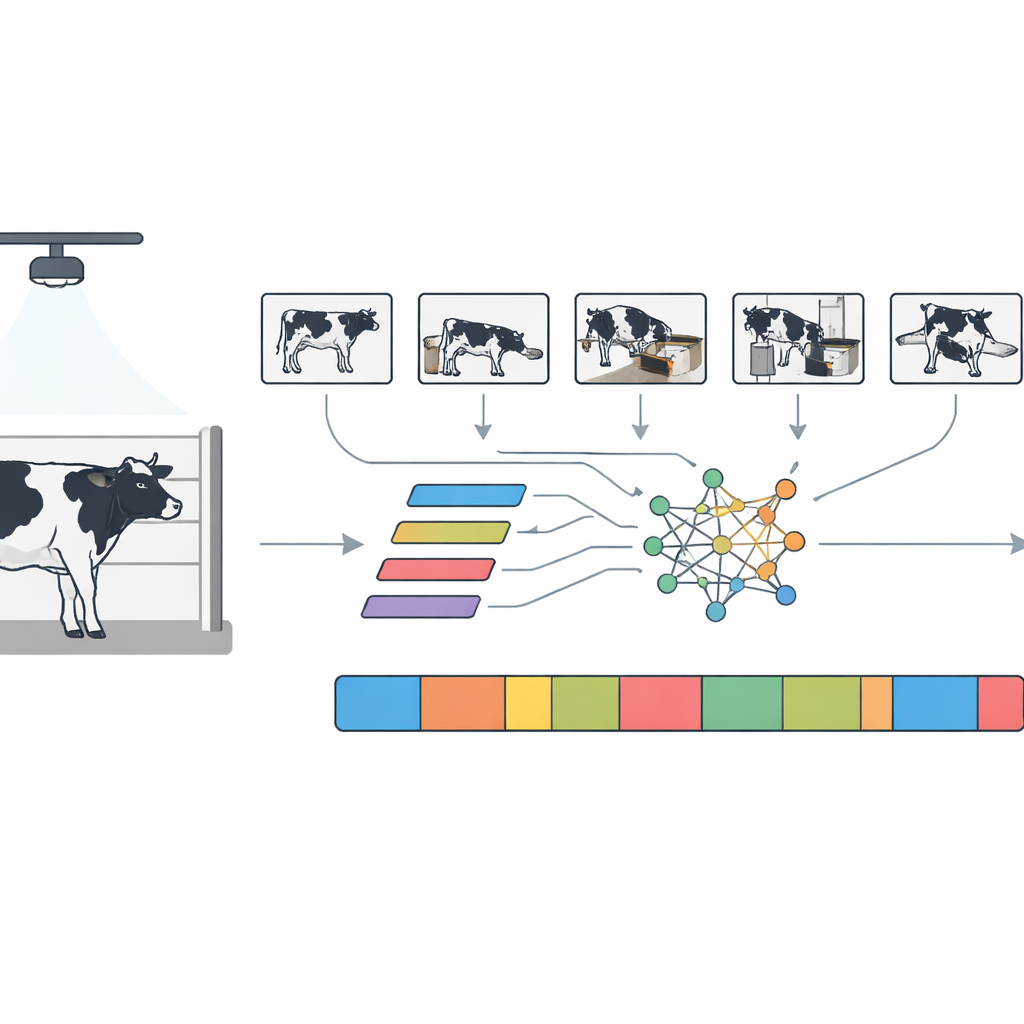

Turning raw footage into useful data required several steps. First, an object‑detection system automatically found cows in each frame, and a tracking algorithm kept each cow’s identity consistent as she moved, even when partly hidden. The program then cropped and resized each cow into standardized video clips. Human experts labeled nearly 5,000 of these clips with the correct behaviour, using clear visual rules and double‑checking each other’s work to ensure consistency. Because cows naturally spend more time lying and standing than drinking or ruminating, the team carefully expanded the rarer behaviours with digital “augmentation”—subtle flips, crops, brightness shifts, and timing tweaks—to create a more balanced training set of about 9,600 clips.
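The balancing step can be illustrated with a minimal sketch. It is not the study's code: the function names, the perturbation ranges, and the class counts in the usage line are hypothetical, and a clip is represented here as plain nested lists of grayscale pixels to keep the example self-contained. Random cropping is left out for brevity.

```python
import random

def augment_clip(clip, rng):
    """Return a perturbed copy of a clip given as frames -> rows -> pixels (0-255)."""
    flip = rng.random() < 0.5      # mirror left-to-right about half the time
    gain = rng.uniform(0.8, 1.2)   # brightness factor close to 1 (assumed range)
    shift = rng.randint(0, 2)      # timing tweak: drop up to two leading frames
    # Pad with the last frame so the clip keeps its original length.
    frames = clip[shift:] + [clip[-1]] * shift
    out = []
    for frame in frames:
        new_frame = []
        for row in frame:
            pixels = row[::-1] if flip else row
            # Scale brightness and clamp to the valid pixel range.
            new_frame.append([min(255, max(0, round(p * gain))) for p in pixels])
        out.append(new_frame)
    return out

def copies_needed(class_counts, target):
    """How many augmented clips each class needs to reach `target` clips."""
    return {label: max(0, target - n) for label, n in class_counts.items()}

# Hypothetical counts, for illustration only.
plan = copies_needed({"lying": 900, "drinking": 300}, target=1000)
```

Here `plan` says how many extra clips to generate per behaviour; rare classes receive more augmented copies than common ones, which is what evens out the training set.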

How the System Sees Patterns Over Time

To spot behaviours, the team compared two leading families of video‑analysis models. One, called SlowFast, mimics two viewing speeds at once: a “slow” pathway that sees posture over longer stretches, and a “fast” pathway that focuses on quick head movements. The other, TimeSformer, uses attention mechanisms originally developed for language models to look across space and time, deciding which parts of each frame and which moments in a clip matter most. When trained on the barn videos, TimeSformer slightly outperformed SlowFast, correctly classifying behaviours about 85% of the time and doing so fast enough for real‑time use on a single modern graphics processor. Visualizations showed that the model naturally focused on the cow’s head and muzzle during feeding and drinking, and on the torso and legs for lying or standing, matching how a human observer would judge behaviour.
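The two viewing speeds of SlowFast come down to sampling the same clip at two frame rates. The sketch below shows only that sampling idea; the function name, stride, and speed ratio are illustrative assumptions, not the settings used in the study.

```python
def two_rate_indices(num_frames, slow_stride=8, alpha=4):
    """Pick frame indices for a sparse 'slow' view and a dense 'fast' view.

    slow_stride: gap between frames seen by the slow pathway (assumed value).
    alpha: how many times more densely the fast pathway samples (assumed value).
    """
    fast_stride = max(1, slow_stride // alpha)
    slow = list(range(0, num_frames, slow_stride))
    fast = list(range(0, num_frames, fast_stride))
    return slow, fast

slow, fast = two_rate_indices(32)
```

With these assumed values the slow view keeps 4 of 32 frames, enough to read posture, while the fast view keeps 16 and can follow quick head movements.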

From Behaviour Streams to Farm Decisions

Once the system could recognize behaviours clip by clip, the researchers built a full pipeline that runs continuously on barn video. The program follows each cow over time, applies a sliding window to the video, and smooths out momentary misclassifications so that brief errors do not register as rapid changes of state. The output is a clean timeline for every animal: when she was feeding, lying, standing, drinking, or ruminating, along with how long each bout lasted and how confident the system was. These structured logs can be read directly by farm nutrition models that estimate feed intake from feeding time, and they can drive a 3D digital twin in a game‑like environment that shows virtual cows mirroring the actions of their real counterparts. In a 24‑hour case study of one cow, the system reconstructed her full day of activities and used feeding duration plus basic animal information to estimate how much dry feed she likely consumed.
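One simple way to turn per-clip predictions into a clean timeline is a majority vote over neighbouring clips, followed by merging runs of identical labels into bouts. The sketch below assumes one label per non-overlapping 10-second clip and uses a plain majority vote; the study's actual window length, overlap, and smoothing rule are not given here, so the function names and parameters are hypothetical.

```python
from collections import Counter
from itertools import groupby

def smooth_labels(labels, window=3):
    """Majority vote over a centred sliding window; ties keep the original label."""
    half = window // 2
    smoothed = []
    for i, current in enumerate(labels):
        votes = Counter(labels[max(0, i - half): i + half + 1])
        top = max(votes.values())
        winners = [lab for lab, n in votes.items() if n == top]
        smoothed.append(current if current in winners else winners[0])
    return smoothed

def to_bouts(labels, step_seconds=10):
    """Merge consecutive identical labels into (behaviour, start_s, duration_s)."""
    bouts, start = [], 0
    for label, run in groupby(labels):
        n = len(list(run))
        bouts.append((label, start * step_seconds, n * step_seconds))
        start += n
    return bouts

# Made-up predictions: a single 'standing' glitch inside a lying bout.
raw = ["lying"] * 4 + ["standing"] + ["lying"] * 3 + ["feeding"] * 4
timeline = to_bouts(smooth_labels(raw))
# timeline == [("lying", 0, 80), ("feeding", 80, 40)]
```

The isolated "standing" prediction is outvoted by its neighbours, so the timeline shows one 80-second lying bout rather than three short ones. Summing bout durations per behaviour would give the daily totals that a nutrition model needs.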

What This Means for Future Dairy Farms

The study demonstrates that inexpensive cameras and carefully designed video models can deliver continuous, per‑cow behaviour records accurate enough to serve as the sensory layer of a dairy digital twin. While the work does not yet automate decisions—such as changing rations or alerting staff to illness—it provides the crucial input stream on which those higher‑level tools depend. As the approach is extended to more open barn designs and combined with other sensors, farmers could gain a detailed, always‑on view of their animals’ daily rhythms, enabling gentler, more precise management that benefits both cows and the environment.

Citation: Rao, S., Garcia, E. & Neethirajan, S. Video-based cattle behaviour detection for digital twin development in precision dairy systems. npj Vet. Sci. 1, 3 (2026). https://doi.org/10.1038/s44433-026-00004-x

Keywords: precision livestock farming, computer vision, dairy cattle behavior, digital twin, animal welfare monitoring