Clear Sky Science

Modeling nursing care tasks in simulated emergency scenarios: insights for clinical training and practice

Why this research matters for patient care

When a patient in the emergency room suddenly takes a turn for the worse, nurses are often the first to notice and act. Their rapid decisions (what to check, whom to call, which treatment to start) can mean the difference between recovery and serious harm. Yet many of these choices are made so quickly and intuitively that even expert nurses have trouble explaining how they make them. This study explores whether modern artificial intelligence can learn the patterns behind expert nursing actions in realistic emergency simulations, with the goal of one day guiding less experienced nurses in high‑stakes situations.

How expert nurses think on their feet

Experienced nurses caring for very sick patients do far more than follow step‑by‑step checklists. They continuously combine readings from monitors, results in the chart, what they see and feel during a physical exam, and what patients tell them about how they feel. Much of this decision‑making is quick, intuitive, and hard to put into words. Novice nurses, in contrast, often cling tightly to written protocols and focus heavily on monitor numbers, which can leave them less able to adapt when a patient’s condition changes in unexpected ways. The researchers argued that if we can capture the sequence of visible actions nurses take—such as checking vital signs, talking to the patient, or calling a physician—we might be able to model this decision process well enough to support training and practice.

Simulated emergencies in a safe setting

To study these patterns without risking harm to real patients, the team used detailed simulations with life‑like manikins. Eleven experienced nurses and thirteen third‑year nursing students completed an emergency scenario in which a patient suddenly developed an ischemic stroke; the experienced nurses also completed an additional scenario involving severe Covid‑19 complications. Every action the participants took was captured on video, time‑stamped, and then carefully coded by clinical and human‑factors experts, yielding 19 distinct behaviors in total. These specific behaviors were then grouped into eight broader categories, such as checking vital signs, performing focused physical assessments, talking to the patient, consulting the chart, giving medications, calling a physician, ordering extra tests, and activating a rapid response team.
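As a rough illustration of this coding step, the sketch below turns time‑stamped behavior codes into an ordered sequence of broader categories. The category names follow the summary above, but the specific behavior labels, the `CodedEvent` type, and the sample episode are hypothetical stand‑ins, not the study's actual coding scheme or data.

```python
from dataclasses import dataclass

# Hypothetical mapping from fine-grained behavior codes to broader categories.
# Only the eight category names are taken from the article; the behavior
# labels on the left are invented for illustration.
CATEGORY_OF = {
    "read_heart_rate": "vital_signs",
    "read_blood_pressure": "vital_signs",
    "check_pupils": "physical_assessment",
    "test_grip_strength": "physical_assessment",
    "ask_about_symptoms": "talk_to_patient",
    "review_lab_results": "consult_chart",
    "give_medication": "medication",
    "page_physician": "call_physician",
    "order_ct_scan": "order_tests",
    "activate_rapid_response": "rapid_response",
}


@dataclass(frozen=True)
class CodedEvent:
    seconds: float  # time stamp taken from the video recording
    behavior: str   # fine-grained behavior code assigned by a human coder


def to_category_sequence(events):
    """Order events by time stamp and map each behavior to its category."""
    ordered = sorted(events, key=lambda e: e.seconds)
    return [CATEGORY_OF[e.behavior] for e in ordered]


# A made-up episode, deliberately listed out of chronological order.
episode = [
    CodedEvent(12.0, "ask_about_symptoms"),
    CodedEvent(3.5, "read_heart_rate"),
    CodedEvent(20.1, "check_pupils"),
    CodedEvent(31.0, "read_blood_pressure"),
    CodedEvent(45.2, "page_physician"),
]

sequence = to_category_sequence(episode)
print(sequence)
```

Reducing each episode to a plain list of category labels is what makes the later steps (counting transitions, predicting the next action) straightforward.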

What the data revealed about nursing patterns

Across 33 simulation episodes, the nurses and students performed 1,024 actions, an average of about 31 actions per episode. Checking vital signs was by far the most common behavior, followed by focused physical assessments and talking with the patient. A transition map showed that, no matter what nurses had just done, their next move was most likely to be checking the monitor, which suggests they regularly used numbers to confirm what they saw and heard. There were also notable differences between experts and students: experts balanced their time between monitors and hands‑on assessments, and they more often ordered extra tests and gave medications, while students relied more heavily on the monitor alone. These differences created a varied set of behavior patterns that could help a model learn more general rules for patient care.
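One common way to build a transition map like the one described above is to count how often each action is immediately followed by each other action, then normalize the counts into probabilities. The sketch below does this on two invented toy sequences. The function names and the data are assumptions for illustration, and the study's own analysis may have been computed differently.

```python
from collections import Counter, defaultdict


def transition_probabilities(sequences):
    """Estimate P(next action | current action) from adjacent pairs."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for current, nxt in zip(seq, seq[1:]):
            counts[current][nxt] += 1
    probs = {}
    for current, next_counts in counts.items():
        total = sum(next_counts.values())
        probs[current] = {a: c / total for a, c in next_counts.items()}
    return probs


def most_likely_next(probs, current):
    """Return the action that most often follows `current`."""
    options = probs[current]
    return max(options, key=options.get)


# Toy sequences (not study data) in which nurses keep returning to the monitor.
toy_sequences = [
    ["vitals", "assess", "vitals", "talk", "vitals", "call"],
    ["vitals", "talk", "vitals", "assess", "vitals", "meds"],
]

probs = transition_probabilities(toy_sequences)
print(most_likely_next(probs, "assess"))
print(most_likely_next(probs, "talk"))

# The reported average follows directly from the totals in the text.
average_actions = 1024 / 33
print(round(average_actions, 2))
```

In this toy data, both a physical assessment and a conversation with the patient are always followed by a return to the vital signs, which mirrors the pattern the transition map revealed.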

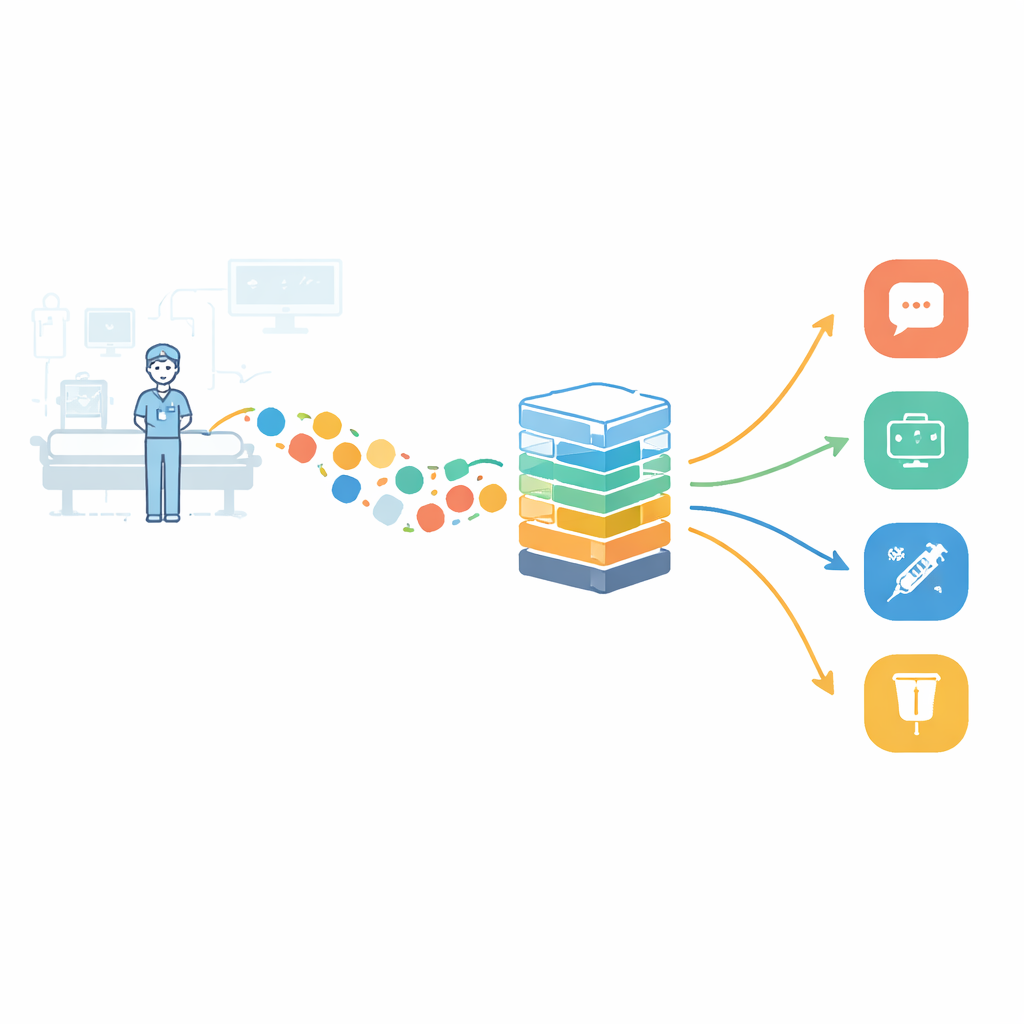

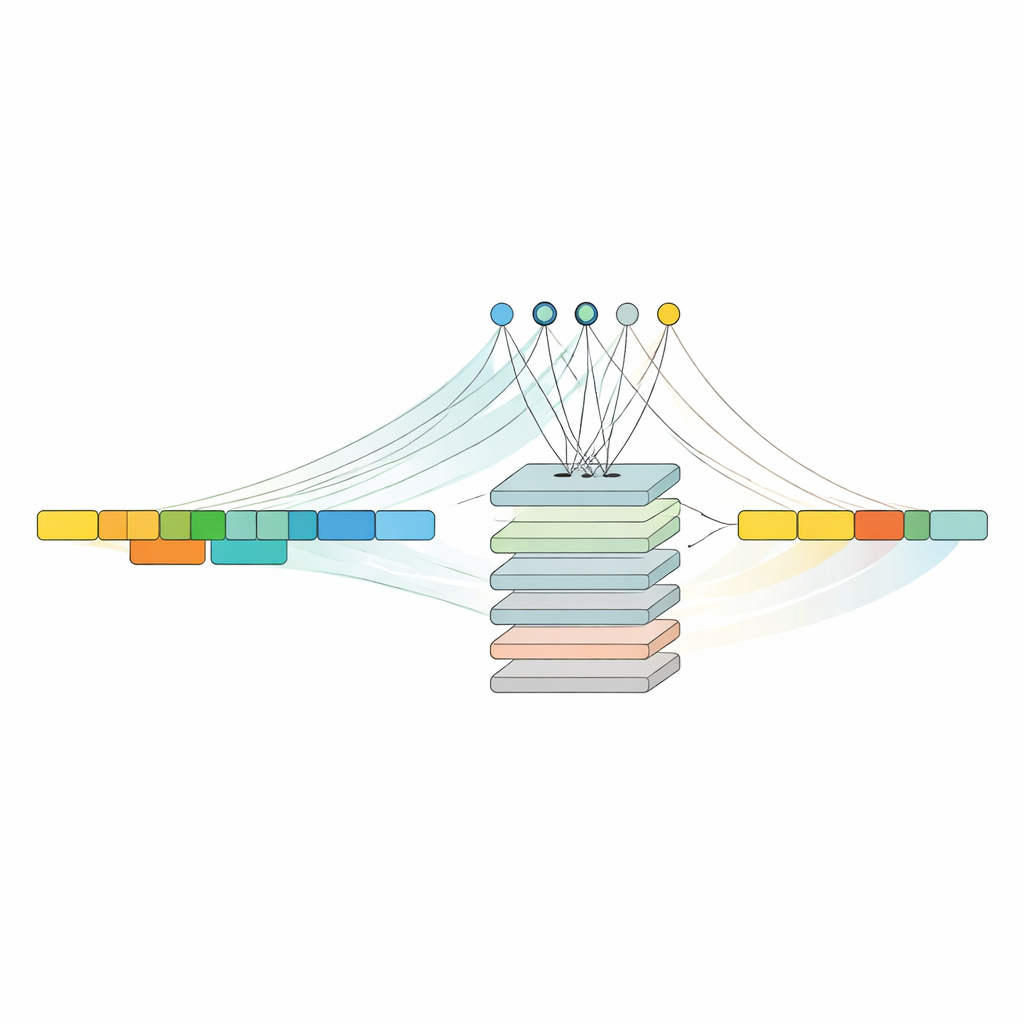

Teaching a model to predict the next nursing step

The central question was whether a modern AI approach known as an attention‑based transformer could learn to predict what action a nurse would take next, based only on the sequence of previous actions. The team trained this model on the coded simulation data and compared it with two more traditional sequence‑learning methods: a basic recurrent neural network and a long short‑term memory (LSTM) network. All three models did better than simply guessing the most common next action. The attention‑based model reached about 73 percent accuracy overall and generally provided the most balanced performance across different types of actions, especially in recalling less frequent but important behaviors. The LSTM model achieved slightly higher precision—meaning that when it predicted a particular action, it was somewhat more likely to be correct—but its performance varied more between action types.
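To make the comparison above concrete, the sketch below shows how a "guess the most common action" baseline and per‑action precision and recall can be computed. All labels and predictions are invented toy values, and `y_model` merely stands in for the output of any trained sequence model; none of this reproduces the study's models or results.

```python
from collections import Counter


def majority_baseline(train_targets, n):
    """Always predict the most frequent action seen in the training targets."""
    most_common = Counter(train_targets).most_common(1)[0][0]
    return [most_common] * n


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true next action."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision_recall(y_true, y_pred, label):
    """Precision: how often a predicted `label` was right.
    Recall: how many true `label` cases were found."""
    tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
    predicted = sum(p == label for p in y_pred)
    actual = sum(t == label for t in y_true)
    precision = tp / predicted if predicted else 0.0
    recall = tp / actual if actual else 0.0
    return precision, recall


# Toy data: true next actions, and hypothetical predictions from some model.
train_targets = ["vitals", "assess", "vitals", "talk", "vitals"]
y_true = ["vitals", "vitals", "assess", "talk", "vitals", "call", "assess", "vitals"]
y_model = ["vitals", "vitals", "assess", "vitals", "vitals", "call", "talk", "vitals"]
y_base = majority_baseline(train_targets, len(y_true))

print("baseline accuracy:", accuracy(y_true, y_base))
print("model accuracy:", accuracy(y_true, y_model))
print("model, 'assess':", precision_recall(y_true, y_model, "assess"))
print("baseline, 'call':", precision_recall(y_true, y_base, "call"))
```

The baseline never predicts a rare action such as calling a physician, so its recall for that action is zero even when its overall accuracy looks respectable. That is why balanced performance across action types matters as much as the headline accuracy figure.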

What this could mean for training and real‑world care

For a lay reader, the key result is that a computer system can learn meaningful patterns from how nurses actually work through emergencies and can make reasonably accurate predictions about what an expert nurse is likely to do next. In the near term, such a system could be embedded into simulation training: as students work through a stroke scenario, for example, the model could follow their sequence of actions and gently suggest a helpful next step when they get stuck, preserving the human nurse’s holistic approach rather than replacing it. The authors stress that more data, additional conditions beyond stroke and Covid‑19, and careful attention to privacy will be needed before similar tools are used in real hospitals. Still, this study offers an early glimpse of how AI might one day support, rather than supplant, nurses’ rapid, life‑saving decisions.

Citation: Anton, N.E., Malusare, A.M., Aggarwal, V. et al. Modeling nursing care tasks in simulated emergency scenarios: insights for clinical training and practice. npj Health Syst. 3, 24 (2026). https://doi.org/10.1038/s44401-026-00079-y

Keywords: nursing decision-making, clinical simulation, machine learning, attention models, emergency care