Clear Sky Science

KneeXNet-2.5D: a clinically-oriented and explainable deep learning framework for MRI-based knee cartilage and meniscus segmentation

Why knee scans matter to everyday life

Millions of people live with knee pain from osteoarthritis, a slow and often invisible breakdown of the joint’s smooth cushioning tissue. Doctors can see this damage in magnetic resonance imaging (MRI) scans, but carefully tracing the thin layers of cartilage and the meniscus by hand is slow, tedious work. This study introduces KneeXNet‑2.5D, an artificial intelligence (AI) system designed to do that tracing automatically, quickly, and reliably—potentially helping clinicians catch problems earlier and monitor treatment more precisely.

Turning raw scans into ready-to-use images

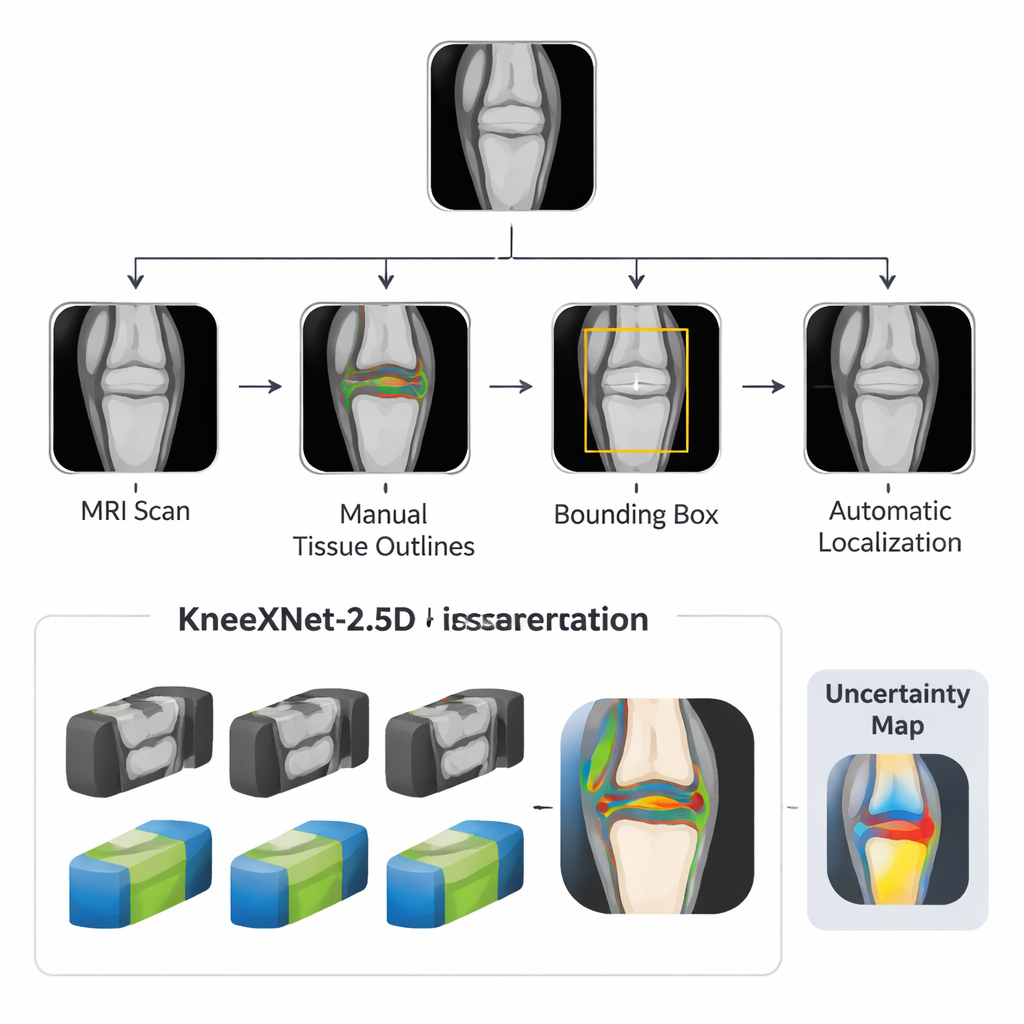

Before an AI model can understand a knee, the scan needs to be cleaned up and focused on the area that matters. The researchers built a pipeline that first gathers standard MRI images and then uses simple outlines and bounding boxes to mark the knee joint. A separate detection model spots and crops the joint area automatically, so the main AI system only sees the clinically relevant region instead of surrounding muscle and background. This targeted pre-processing makes the task easier for the computer and mirrors the way a radiologist mentally zooms in on the joint.
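The cropping step can be pictured with a minimal sketch. It assumes the detector returns a rectangular box as `(row_min, col_min, row_max, col_max)`; that format, the `margin` parameter, and the function name `crop_to_box` are illustrative choices, not details taken from the study.

```python
def crop_to_box(image, box, margin=0):
    """Crop a 2D image (a list of pixel rows) to a bounding box.

    box is (row_min, col_min, row_max, col_max) with exclusive maxima.
    margin adds a few pixels of context and is clamped to the image edges.
    Both conventions are assumptions made for this sketch.
    """
    height, width = len(image), len(image[0])
    r0, c0, r1, c1 = box
    r0, c0 = max(0, r0 - margin), max(0, c0 - margin)
    r1, c1 = min(height, r1 + margin), min(width, c1 + margin)
    return [row[c0:c1] for row in image[r0:r1]]


# Toy 5x6 "scan" whose pixel value encodes its position (row * 10 + column).
image = [[r * 10 + c for c in range(6)] for r in range(5)]
cropped = crop_to_box(image, box=(1, 2, 3, 4), margin=1)
```

Here the two-by-two box grows by one pixel on each side, so `cropped` is a four-by-four patch that starts at the first row of the toy image.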

A smart middle ground between 2D and 3D

Traditional medical imaging AI tools often choose between flat 2D slices, which are efficient but can miss context, and full 3D models, which are powerful but demand huge datasets and expensive hardware. KneeXNet‑2.5D takes a middle path. It looks at a slice of the knee together with its immediate neighbors, so it can see how structures continue from one image to the next without carrying the full burden of 3D processing. The core of the system is a U‑Net–style network that learns to label four key structures—three cartilage regions and the meniscus—plus background. Several versions of this network are trained in parallel, each seeing slightly blurred or resized images, and their predictions are blended into one final answer.
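As a rough illustration of the two ideas in this section, the sketch below gathers a slice with its neighbors and blends several models' outputs. The number of neighbors (`radius=1`), the choice to repeat edge slices, and plain averaging as the blending rule are all assumptions; the summary does not specify them.

```python
def neighbor_stack(volume, index, radius=1):
    """Return the slice at `index` together with `radius` neighbors on each side.

    At the first and last slice the edge slice is repeated so the stack
    always has the same depth (an assumption made for this sketch).
    """
    last = len(volume) - 1
    return [volume[min(max(i, 0), last)]
            for i in range(index - radius, index + radius + 1)]


def blend(per_model_probs):
    """Average the class probabilities that several models give one pixel."""
    n_models = len(per_model_probs)
    n_classes = len(per_model_probs[0])
    return [sum(probs[k] for probs in per_model_probs) / n_models
            for k in range(n_classes)]


# Slices are stand-in strings here; in practice each would be a 2D image.
volume = ["slice0", "slice1", "slice2", "slice3"]
first_stack = neighbor_stack(volume, 0)
middle_stack = neighbor_stack(volume, 2)

# Two hypothetical models scoring one pixel over two classes.
blended = blend([[0.75, 0.25], [0.25, 0.75]])
```

The stacked slices are what a 2.5D network would receive as input channels, which is how it sees context across slices without processing the whole volume at once.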

Built to handle messy, real-world scans

Clinical MRI scans are rarely perfect. They can be noisy, slightly blurry, or captured with different settings across hospitals and machines. To prepare for this, the team systematically added controlled blur and scale changes during training, which teaches the AI to recognize the same anatomy even when image quality varies. In formal tests, the full KneeXNet‑2.5D ensemble produced highly accurate segmentations, closely matching expert outlines across all cartilage regions and the meniscus. It also remained stable when images were altered, earning strong robustness scores. Compared with a pure 3D model trained on the same dataset, KneeXNet‑2.5D achieved better accuracy while using less memory and requiring shorter, more practical training and run times, a key point for hospitals without top-tier computing resources.
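One common way to quantify how closely an automatic outline matches an expert's is the Dice coefficient, which runs from 0 (no overlap) to 1 (identical). The summary does not state which metric the authors used, so the sketch below is an illustration of the general idea rather than their evaluation code; the label values are hypothetical.

```python
def dice_score(predicted, expert, label):
    """Dice overlap for one structure between two label maps of equal size.

    Each map is a list of rows of integer labels. Returns 1.0 when the
    structure is absent from both maps (a convention chosen for this sketch).
    """
    pred_mask = [value == label for row in predicted for value in row]
    expert_mask = [value == label for row in expert for value in row]
    overlap = sum(p and e for p, e in zip(pred_mask, expert_mask))
    total = sum(pred_mask) + sum(expert_mask)
    return 1.0 if total == 0 else 2.0 * overlap / total


# Toy maps: 0 is background, 1 is a hypothetical cartilage label.
predicted = [[0, 1, 1],
             [0, 1, 0]]
expert = [[0, 1, 1],
          [0, 0, 0]]
score = dice_score(predicted, expert, label=1)
```

In this toy case the model marks three pixels, the expert marks two, and two overlap, giving a score of 2 × 2 / (3 + 2) = 0.8. Computing the same score on clean and on deliberately blurred or rescaled inputs is one simple way to express robustness.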

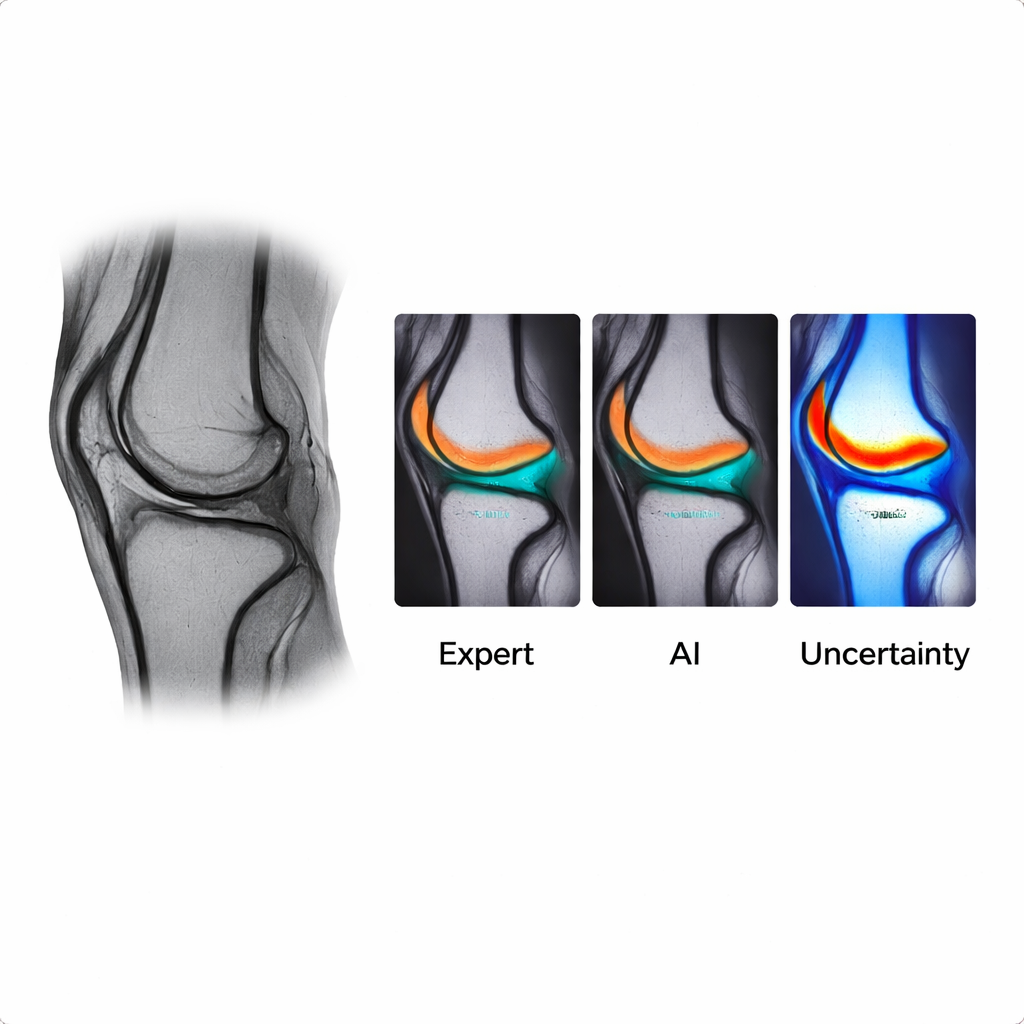

Making the AI’s “thinking” visible

Because clinicians must trust what an automated system is doing, the authors added an explainability layer. For every pixel of the AI’s output, they compute an uncertainty score, then display it as a color overlay: cooler colors mark confident decisions, and warmer colors highlight areas where the model is less sure, typically along thin edges or ambiguous regions of cartilage and meniscus. When the researchers deliberately disturbed only the high‑uncertainty areas, performance dropped sharply, showing that these regions truly matter for the model’s decisions. Two orthopedic surgeons reviewed segmentation results alongside these uncertainty maps and confirmed that the highlighted zones often corresponded to spots they themselves consider tricky or open to interpretation.
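A per-pixel uncertainty score can be derived in several ways, and the summary does not say which one the authors chose. The sketch below assumes one widely used option, the entropy of the model's class probabilities, scaled so that 0 means fully confident and 1 means a toss-up across the five classes (four structures plus background).

```python
import math


def normalized_entropy(probs):
    """Entropy of one pixel's class probabilities, scaled to the range 0..1."""
    entropy = sum(-p * math.log(p) for p in probs if p > 0)
    return entropy / math.log(len(probs))


def uncertainty_map(prob_image):
    """Apply normalized_entropy to every pixel of a 2D grid of probability lists."""
    return [[normalized_entropy(pixel) for pixel in row] for row in prob_image]


# One row of three hypothetical pixels, each with five class probabilities.
prob_image = [[
    [1.0, 0.0, 0.0, 0.0, 0.0],   # confident: would be drawn in a cool color
    [0.5, 0.5, 0.0, 0.0, 0.0],   # torn between two structures
    [0.2, 0.2, 0.2, 0.2, 0.2],   # maximally unsure: warmest color
]]
heat = uncertainty_map(prob_image)
```

Mapping these values to a cool-to-warm color scale would give an overlay of the kind described above, with the highest values expected along thin edges where two structures meet.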

From research code to practical clinic tool

To ease adoption, the team released a complete package: a carefully annotated MRI dataset, detailed labeling guidelines, the trained AI models, and a lightweight web-based viewer. In this viewer, users can upload a knee MRI, scroll through slices, see the AI’s color-coded cartilage and meniscus outlines, and examine the uncertainty overlay—all in an ordinary browser. This design aims to make advanced image analysis accessible not only to major academic centers but also to smaller hospitals and clinics, including those in rural settings with limited computing power.

What this means for patients and clinicians

For patients, an accurate and explainable tool like KneeXNet‑2.5D could translate into faster, more consistent readings of knee MRIs, better tracking of how cartilage changes over time, and earlier detection of joint damage before pain and disability become severe. For clinicians and health systems, it offers a way to cut down on repetitive manual outlining, reduce variation between readers, and scale quantitative knee imaging to larger populations. While the model still needs testing on more diverse datasets and scanners, this work shows that carefully engineered AI can be both powerful and transparent, bringing advanced knee imaging analysis closer to everyday clinical use.

Citation: Sanogo, M., Gao, F., Littlefield, N. et al. KneeXNet-2.5D: a clinically-oriented and explainable deep learning framework for MRI-based knee cartilage and meniscus segmentation. npj Health Syst. 3, 18 (2026). https://doi.org/10.1038/s44401-026-00072-5

Keywords: knee MRI, osteoarthritis, cartilage segmentation, medical AI, meniscus