Clear Sky Science

Detecting stigmatizing language in clinical notes with large language models for addiction care

Why the Words in Your Medical Record Matter

As more patients gain online access to their medical records, the language clinicians use is no longer hidden in hospital computers—it is visible to the very people it describes. For patients living with addiction, a single phrase like “drug abuser” can quietly reinforce shame, damage trust, and even influence the care they receive. This study asks a timely question: can modern artificial intelligence help hospitals spot and reduce stigmatizing language in clinical notes before it harms patients?

Harmful Labels Hidden in Everyday Notes

Stigma in health care does not just show up in eye contact or tone of voice; it is also baked into the written record. Electronic health records contain millions of notes that follow patients across clinics and hospitals. Terms such as “alcohol abuse” or “drug-seeking behavior” can shape how future clinicians view a person long after an emergency visit or hospital stay. The researchers focused on intensive care unit notes about patients with substance use problems, where the stakes are high and documentation is dense. They started from national guidelines that encourage respectful, person-first language, like “person with substance use disorder” instead of “addict,” and used these ideas to create a large dataset of notes labeled as either stigmatizing or not.

Teaching an AI to Read Between the Lines

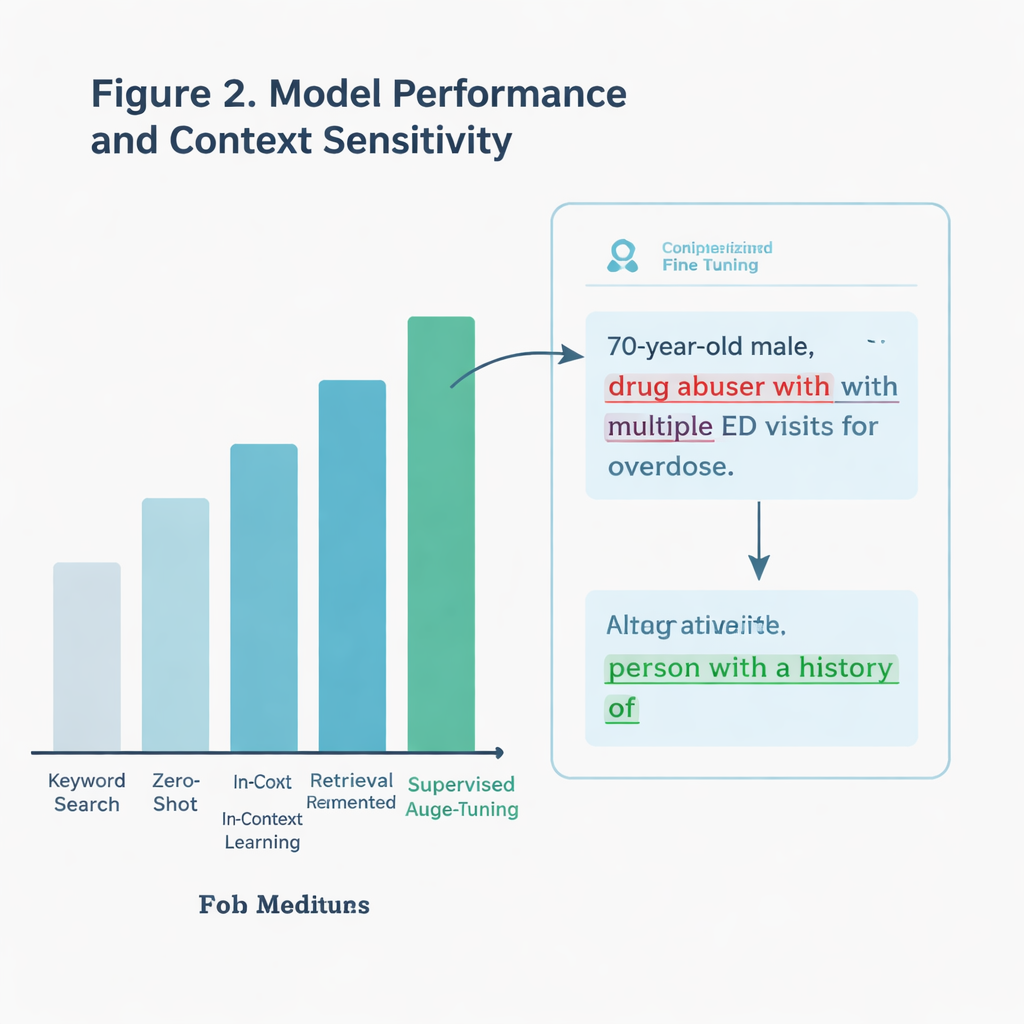

Rather than simply scanning for bad words, the team wanted an AI system that could understand context. For example, a note might quote a patient describing themselves as “drunk,” which is not the same as a clinician applying that label. The authors compared a basic keyword search with several approaches built on a large language model (a type of AI that processes and generates text). The keyword method looked only for specific terms drawn from guidelines. The language-model methods asked the AI to judge each note directly in one of three ways: with no extra examples, with added guidance from communication guidelines, or after being specially trained (“fine-tuned”) on thousands of labeled ICU notes.
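To see why context matters, here is a minimal sketch of a keyword baseline of the kind described above. The term list is hypothetical and purely illustrative. It is not the guideline lexicon the study used, and the function names are made up for this example. The sketch flags a note whenever any listed term appears, regardless of who is speaking.

```python
import re

# Hypothetical, illustrative term list; NOT the lexicon used in the study.
STIGMA_TERMS = ["drug abuser", "addict", "alcohol abuse", "drunk", "drug-seeking"]

# One case-insensitive pattern with word boundaries; longer terms are tried first
# so that a multi-word phrase wins over any shorter term it might contain.
_PATTERN = re.compile(
    r"\b("
    + "|".join(re.escape(t) for t in sorted(STIGMA_TERMS, key=len, reverse=True))
    + r")\b",
    re.IGNORECASE,
)


def keyword_flags(note: str) -> list:
    """Return every listed term found in the note, lowercased, in order of appearance."""
    return [m.group(1).lower() for m in _PATTERN.finditer(note)]


def is_flagged(note: str) -> bool:
    """Keyword baseline: a note counts as stigmatizing if any listed term appears."""
    return bool(keyword_flags(note))


if __name__ == "__main__":
    clinician_label = "Pt is a known drug abuser, drunk on arrival."
    quoted_patient = 'Patient states, "I was drunk last night."'
    person_first = "Patient with alcohol use disorder, in remission."
    for note in (clinician_label, quoted_patient, person_first):
        print(is_flagged(note), keyword_flags(note), "|", note)
```

The quoted patient statement gets flagged exactly like the clinician's own label, because a keyword list cannot tell the two apart. This is the gap that the context-aware language-model approaches were meant to close.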

What Worked Best in the Real World

The fine-tuned model was the clear winner. On a held-out test set of more than 11,000 notes, it correctly identified stigmatizing language about 97 percent of the time, far better than simple keyword search. It also held up better than keyword search on a particularly tricky subset of notes that contained potentially charged terms that were not always used in a harmful way. The model could tell genuinely judgmental phrases apart from neutral or quoted uses, a distinction that a cruder search misses. When the team tested the system on notes from a different health system (nearly 300,000 ICU notes written in another state), it still outperformed the keyword approach, even though stigmatizing language was rare in that real-world sample.

Finding New Problem Phrases Clinicians Missed

The researchers went a step further and asked the AI to explain why it had flagged certain notes. An addiction specialist then reviewed these explanations. In dozens of cases, the models highlighted genuinely stigmatizing language that human annotators had originally overlooked, including phrases not listed in existing guidelines. Examples included descriptions like “drug-seeking behavior” or casual mentions of “alcoholic cirrhosis” that subtly blame the person rather than the disease. This suggests that well-designed AI tools might not only enforce current best practices but also help expand our understanding of what harmful language looks like as clinical writing evolves.

From Research Tool to Bedside Helper

The study also weighed practical issues. Keyword search is lightning fast but shallow. The most accurate AI model required several hours of training on powerful graphics processors, yet once trained it could screen notes in a few seconds each—slow for a search engine, but acceptable for a background assistant in a hospital system. Another, less customized approach that relied only on carefully crafted prompts performed reasonably well without extra training, hinting at lighter-weight options for clinics with fewer technical resources. Together, these findings point toward systems that can flag risky wording in real time and suggest more respectful alternatives as clinicians type.
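The lighter-weight, prompt-only option might be organized roughly as follows. This is a minimal sketch under stated assumptions. The instruction wording, the `build_prompt` and `parse_label` helpers, and the answer format are all hypothetical, and they do not reproduce the study's actual prompts. Leaving out the guideline text gives a zero-shot prompt, while including it adds guidance in the spirit of the guideline-augmented approach. The call to the model itself is omitted because it depends on which language model and interface a hospital uses.

```python
from typing import Optional

# Hypothetical instruction wording; the study's actual prompts are not reproduced here.
TASK = (
    "You are reviewing an intensive care unit note. Decide whether the clinician's "
    "own wording stigmatizes a patient with a substance use disorder. Words the note "
    "quotes from the patient do not count as the clinician's label. "
    "Answer STIGMATIZING or NOT_STIGMATIZING, then give a one-sentence reason."
)


def build_prompt(note: str, guidelines: Optional[str] = None) -> str:
    """Assemble a prompt: pass guideline text to add guidance, omit it for zero-shot."""
    parts = [TASK]
    if guidelines:
        parts.append("Language guidance:\n" + guidelines.strip())
    parts.append("Note:\n" + note.strip())
    parts.append("Answer:")
    return "\n\n".join(parts)


def parse_label(reply: str) -> Optional[bool]:
    """Map the model's first word to True/False; return None if the reply is off-format."""
    words = reply.strip().split()
    first = words[0].strip(".,:").upper() if words else ""
    if first == "STIGMATIZING":
        return True
    if first == "NOT_STIGMATIZING":
        return False
    return None


if __name__ == "__main__":
    guidance = "Prefer 'person with substance use disorder' over 'addict'."
    print(build_prompt("Pt is a known drug abuser.", guidance))
    print(parse_label("STIGMATIZING. The term labels the person, not the condition."))
```

Asking for a short reason alongside the label mirrors how the researchers had the models explain their flags. Returning `None` for off-format replies lets a background assistant skip or retry a note rather than guess.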

A Step Toward More Respectful Care

For a layperson, the main takeaway is simple: the words in your chart are not just technical jargon; they help shape how you are treated. This work shows that large language models can reliably spot many forms of stigmatizing language related to addiction in intensive care notes, even when the problem is subtle. While no system is perfect, such tools could serve as always-on editors, nudging clinicians toward language that recognizes people as more than their diagnoses. In the long run, that shift—from blame to respect—may be as important to healing as any drug or device.

Citation: Sethi, R., Caskey, J., Gao, Y. et al. Detecting stigmatizing language in clinical notes with large language models for addiction care. npj Health Syst. 3, 15 (2026). https://doi.org/10.1038/s44401-026-00069-0

Keywords: addiction stigma, clinical notes, large language models, electronic health records, person-first language