Clear Sky Science

Toward autonomous weed management systems in sugarcane crops and an assessment of technological readiness

Fighting Weeds Without Drowning Fields in Chemicals

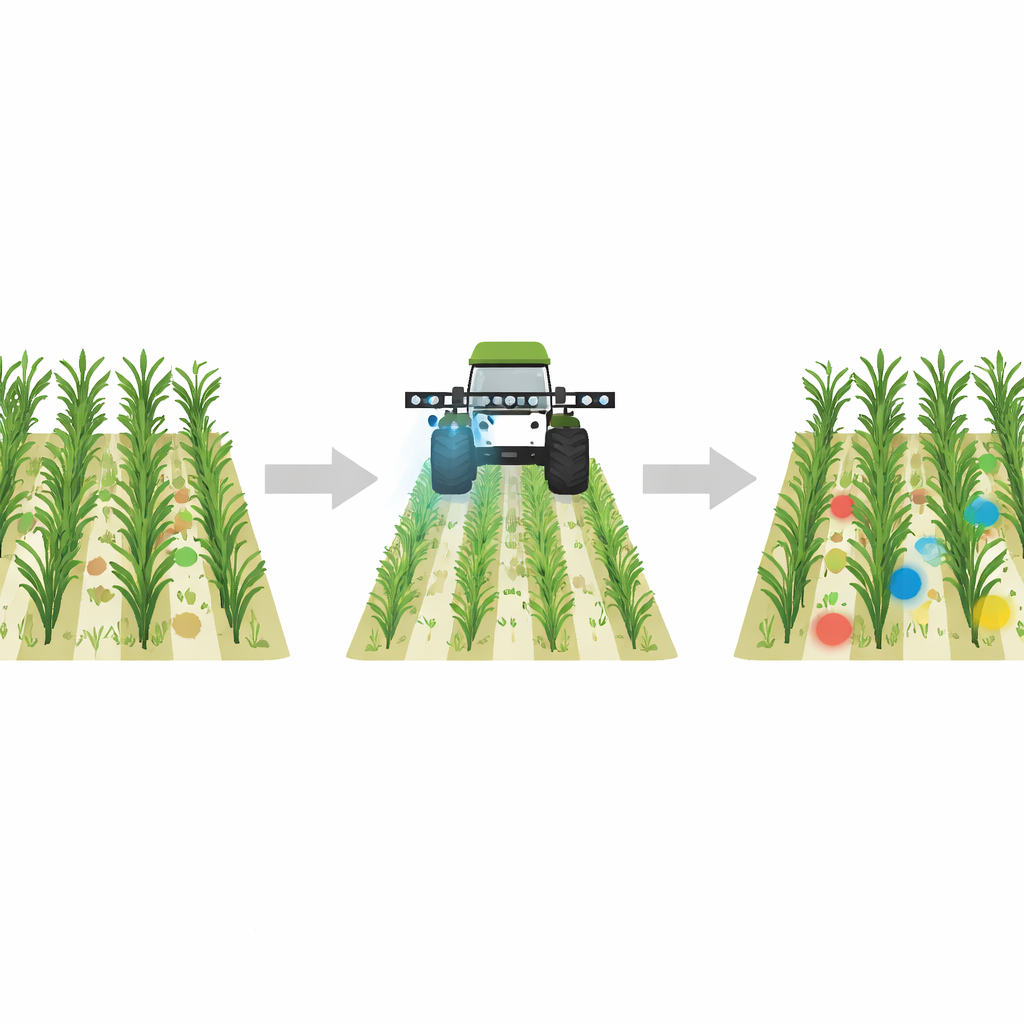

Weeds are the unwanted guests of agriculture, stealing water, light, and nutrients from crops. In sugarcane, a key crop for sugar and bioenergy, these freeloaders can slash yields by a third and push farmers to spray large amounts of herbicides across whole fields. This paper explores whether modern artificial intelligence can give tractors "eyes"—smart cameras that spot weeds growing among sugarcane in real time—so chemicals are sprayed only where they are truly needed.

Why Sugarcane Fields Are Especially Tricky

Many recent AI systems can already tell crops from weeds when plants stand out clearly against bare soil or when images are taken from above. But sugarcane fields present a tougher puzzle. Sugarcane is a tall, perennial grass; its leaves and stems look a lot like many grassy weeds, and both grow as a dense, tangled mat of green. Instead of simple green-on-brown scenes, the camera sees green-on-green, with overlapping leaves, shifting light, dust, mud, and rain. Earlier studies mostly used drone images or neat experimental plots where weeds were visually separate from the crop. The authors argue that this does not reflect the messy reality farmers face and that a more realistic benchmark is needed.

A New Real-World Picture of Weeds in Cane

To tackle this gap, the team built a new dataset from sugarcane fields in Louisiana using a ground-level camera held at about chest height, mimicking a sensor mounted on a tractor or sprayer. They collected over two thousand high-resolution images and grouped them into three scene types: sugarcane only, weeds only, and mixed scenes where both appear. For a subset of the most challenging mixed images, weed experts drew rectangles around weed patches so that computer models could learn where, not just whether, weeds are present. Crucially, the images capture realistic conditions: many small shoots, weeds intertwined with cane, and wide patches of weed growth, often with unclear visual boundaries even for human annotators.

What Today’s AI Can and Cannot Do

The researchers then tested state-of-the-art deep learning models on three tasks. First, in simple scene-level classification (deciding whether an image shows sugarcane, weeds, or both), modern networks performed extremely well, with the best transformer-based models reaching about 99% accuracy. In broad strokes, then, AI can reliably tell when weeds are present in a sugarcane field image. Second, they examined object detection, where models must draw boxes around individual weed clumps. Here, performance dropped sharply: their best detector, a modern convolutional network called RTMDet with a ConvNeXt backbone and a geometry-aware loss function, reached an AP50 score of 44.2, far from what would be needed for confident automated spraying. (AP50 is average precision when a predicted box counts as correct only if its overlap with an expert-drawn box, measured as intersection over union, is at least 50%.) They also found that simply increasing image resolution or mixing transformer and convolution features did not help and sometimes made detection worse.
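For readers curious how a detection score like AP50 is computed, here is a minimal sketch for a single image and a single "weed" class. It is not the authors' evaluation code: the function names `iou` and `average_precision_50`, the `(x1, y1, x2, y2)` box format, greedy matching of predictions to expert boxes, and all-point interpolation of the precision-recall curve are all assumptions for illustration. Standard benchmark tools (for example COCO-style evaluators, which use 101 recall points) differ in details, and scores such as 44.2 are conventionally reported as percentages, whereas this sketch returns a value between 0 and 1.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def average_precision_50(predictions, ground_truth, threshold=0.5):
    """Simplified AP at IoU >= 0.5 (hypothetical helper, one image, one class).

    predictions: list of (confidence, box); ground_truth: list of boxes.
    """
    if not ground_truth:
        return 0.0
    # Visit predictions from most to least confident.
    preds = sorted(predictions, key=lambda p: p[0], reverse=True)
    matched = [False] * len(ground_truth)
    tp_flags = []
    for _, box in preds:
        # Greedily match to the best still-unmatched expert box.
        best_iou, best_idx = 0.0, -1
        for idx, gt in enumerate(ground_truth):
            if not matched[idx]:
                overlap = iou(box, gt)
                if overlap > best_iou:
                    best_iou, best_idx = overlap, idx
        if best_idx >= 0 and best_iou >= threshold:
            matched[best_idx] = True
            tp_flags.append(1)  # true positive
        else:
            tp_flags.append(0)  # false positive (poor overlap or duplicate)

    # Precision and recall after each prediction in confidence order.
    precisions, recalls, tp = [], [], 0
    for rank, flag in enumerate(tp_flags, start=1):
        tp += flag
        precisions.append(tp / rank)
        recalls.append(tp / len(ground_truth))

    # All-point interpolation: precision becomes the best precision
    # achievable at this recall level or any higher one.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])

    # Area under the stepwise precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


# Toy example: two expert-drawn weed boxes and three predictions.
weeds = [(0, 0, 10, 10), (20, 20, 30, 30)]
detections = [
    (0.9, (0, 0, 10, 10)),    # exact hit
    (0.8, (50, 50, 60, 60)),  # false alarm on bare ground
    (0.7, (21, 21, 31, 31)),  # slightly offset box, IoU about 0.68
]
print(round(average_precision_50(detections, weeds), 3))  # 0.833
```

The offset box still counts because its IoU clears 0.5, while a box covering only a third of a weed patch would not. This is why loosely bounded, intertwined weed clumps, where even experts disagree on box edges, drag AP50 down.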

Zooming In on Weed Shapes, Not Just Green Pixels

The third task was segmentation: outlining the exact weed pixels within each detected region. The team compared three strategies without training any model specifically for this job: a simple color-based index that emphasizes greenness, a general-purpose "segment anything" model, and a weakly supervised method that learns from coarse cues. Each had strengths and flaws. Color-based methods gave sharp outlines when weeds stood out but failed when background plants had similar shades. The general segmentation model captured structure well but sometimes missed fine leaves or grabbed large chunks of background. The weakly supervised method often found more of the weed in difficult green-on-green scenes but tended to over-mark soil and other non-weed areas. Together with the modest detection scores, these results highlight how hard it remains for AI to separate cane from look-alike weeds under real field conditions.
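The summary does not name the color index the authors used. The Excess Green index (ExG = 2g − r − b on brightness-normalized color channels) is one widely used choice and is assumed here purely to illustrate why color alone struggles. The functions `excess_green` and `green_mask`, the fixed threshold of 0.1, and the pixel values are hypothetical; many pipelines pick the threshold automatically (for example with Otsu's method) instead.

```python
def excess_green(rgb):
    """Excess Green index of one (R, G, B) pixel, on normalized channels."""
    r, g, b = rgb
    total = r + g + b
    if total == 0:
        return 0.0  # pure black: no color information
    # Equivalent to 2*g_n - r_n - b_n with x_n = x / (R + G + B).
    return (2 * g - r - b) / total


def green_mask(image, threshold=0.1):
    """Mark pixels whose ExG exceeds a fixed (assumed) threshold.

    image: list of rows of (R, G, B) tuples; returns rows of booleans.
    """
    return [[excess_green(px) > threshold for px in row] for row in image]


# Hypothetical pixels: soil, weed leaf, sugarcane leaf, shadow.
image = [
    [(120, 90, 60), (60, 160, 50)],
    [(70, 150, 60), (0, 0, 0)],
]
print(green_mask(image))  # [[False, True], [True, False]]
```

The soil and shadow pixels are rejected, but the sugarcane leaf is marked just like the weed leaf: in a green-on-green scene, a greenness index separates plants from soil, not weeds from cane.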

How Close Are We to Smarter Sprayers?

From a farmer’s point of view, the message is mixed. The good news is that AI can already decide, with near-perfect accuracy, whether a sugarcane scene contains weeds at all, and some detectors are fast enough to run on machines in the field. The bad news is that current systems still struggle to pinpoint exactly where each weed is when the plants are tangled and visually similar, which is precisely when targeted spraying matters most. The authors conclude that while their new dataset and analysis are important steps toward autonomous weed control in sugarcane, reliable, field-ready systems will require better training data, smarter ways to handle ambiguous plant boundaries, and models that balance accuracy and speed on limited onboard hardware. In short, we are closer than before—but not yet at the point where a tractor can safely take over weed control on its own.

Citation: Papa, J.P., Manesco, J.R.R., Schoder, M. et al. Toward autonomous weed management systems in sugarcane crops and an assessment of technological readiness. npj Artif. Intell. 2, 40 (2026). https://doi.org/10.1038/s44387-026-00096-0

Keywords: precision agriculture, weed detection, sugarcane, computer vision, autonomous spraying