Clear Sky Science

Explainable multi-granularity attribution reasoning framework for fake news detection

Why spotting fake news is getting harder

Every day, millions of posts combining words and pictures race through social media. Some are harmless, some are true, and some are carefully crafted fakes meant to grab attention, stir emotions, or sway opinions. As image-editing tools and AI generators become cheaper and easier to use, fake news has grown more polished and more dangerous. This paper introduces a new way to look inside fake‑news detection systems so we can see not just whether a post is likely false, but also why.

How fake news tricks our eyes and minds

Fake news creators exploit how people quickly skim headlines and images. They may forge or tweak photos, weave partly true details into an impossible story, stitch together pieces from different events, or mix locations and timelines that do not belong together. A single post about a breaking event might show a dramatic image from a different incident years earlier, or a convincing photo may be generated entirely by AI. Traditional detection systems usually treat all fake posts alike and squeeze text and image into one blended “feature soup.” That approach can work reasonably well, but it behaves like a black box: it is hard for journalists, platforms, or everyday users to understand the specific clues that triggered the alarm.

A new way to ask: “Why is this fake?”

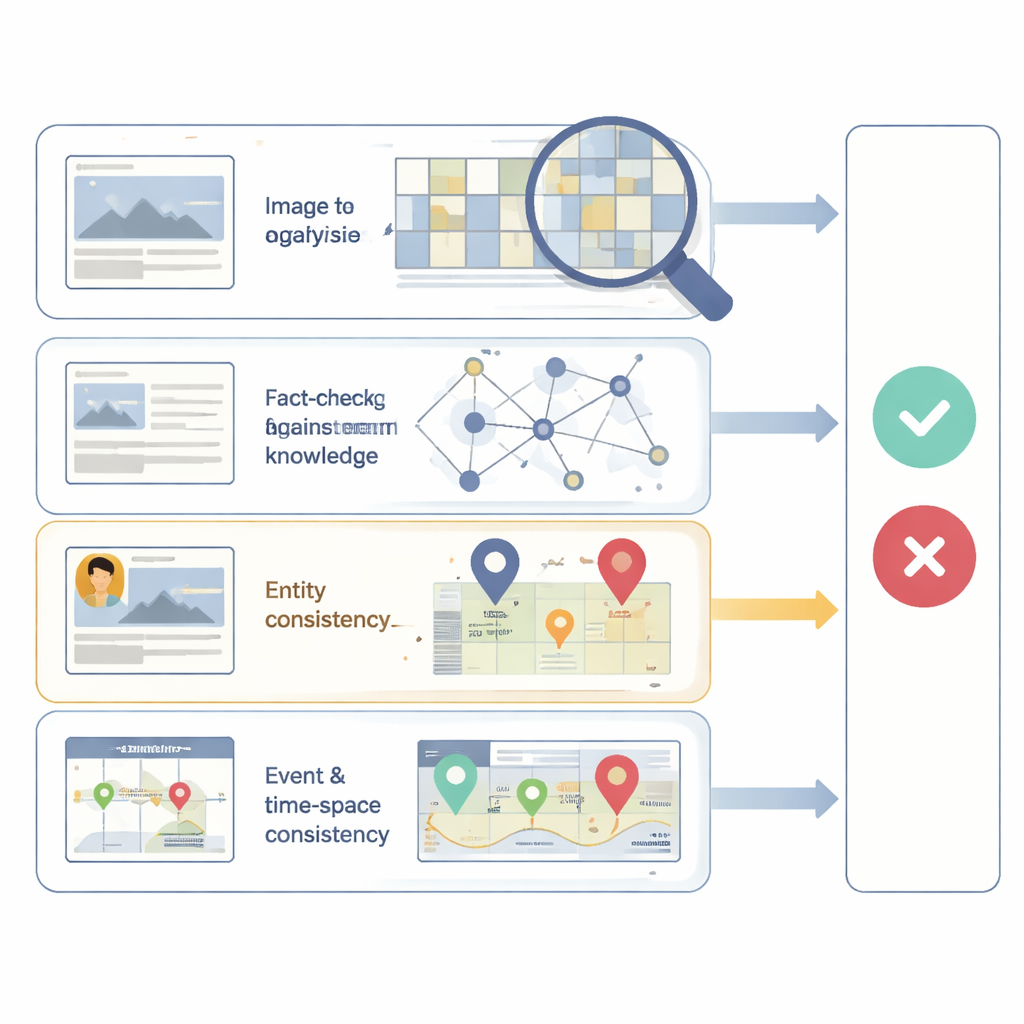

The authors propose an explainable framework called EMAR‑FND that looks at news posts from four distinct angles, each tied to a common way fakes are made. First, it examines whether the image itself shows signs of tampering or synthetic generation, by focusing on subtle camera‑level noise patterns that change when a picture is altered. Second, it checks whether the story’s facts line up with trusted outside knowledge, such as known relationships between people, places, and events. Third, it inspects whether the key entities mentioned in the text—like a public figure or a city—actually match what appears in the accompanying image. Fourth, it evaluates whether the described event fits together in time and space, for example by spotting a mismatch between a claimed location and visual hints in the picture, or between a reported timeline and other evidence.

Piecing together clues from many angles

Each of these four checks is handled by its own reasoning module, which makes a partial judgment about whether that specific aspect looks trustworthy. One module focuses on visual tampering; another reasons over external knowledge graphs; a third builds a network linking words, objects in the image, and extracted events; and a fourth compares the post with related evidence across time and space. Instead of hiding these signals inside a single fused representation, EMAR‑FND keeps their contributions separate and then combines them in a final decision step that weighs how important each view is for a particular case. The result is not just a final real‑or‑fake score, but also an attribution showing, for instance, that a post is flagged mainly because the image appears forged, or because the described event does not fit known facts.
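To make the idea of separate contributions concrete, here is a minimal Python sketch of one way such a decision step could work. It is not the authors' implementation: the article does not say how EMAR‑FND weighs its modules, so the softmax weighting, the view names, and the `fuse` function are all assumptions chosen for illustration.

```python
import math

# Hypothetical names for the four views described in the article.
VIEWS = ("image_forgery", "fact_consistency", "entity_match", "event_coherence")


def softmax(values):
    """Turn arbitrary importance scores into positive weights that sum to 1."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(scores, relevance):
    """Combine per-view suspicion scores while keeping each view's share visible.

    scores:    view name -> suspicion in [0, 1] (1 means "looks fake").
    relevance: view name -> unnormalized importance for this particular post.
    Both inputs are assumed to come from upstream modules not shown here.
    """
    weights = softmax([relevance[v] for v in VIEWS])
    contributions = {v: w * scores[v] for v, w in zip(VIEWS, weights)}
    fake_score = sum(contributions.values())
    top_view = max(contributions, key=contributions.get)
    return fake_score, contributions, top_view


# Example: the image module is both confident and judged most relevant.
example_scores = {
    "image_forgery": 0.9,
    "fact_consistency": 0.2,
    "entity_match": 0.1,
    "event_coherence": 0.3,
}
example_relevance = {
    "image_forgery": 2.0,
    "fact_consistency": 0.0,
    "entity_match": 0.0,
    "event_coherence": 0.0,
}
fake_score, contributions, top_view = fuse(example_scores, example_relevance)
print(f"fake score: {fake_score:.3f}, main reason: {top_view}")
```

Because the final score is simply the sum of the per-view contributions, the largest contribution can be reported directly as the main reason a post was flagged, which is the kind of attribution the article describes.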

Testing the system in the wild

To see how well this approach works, the researchers applied EMAR‑FND to two public collections of real and fake news posts that include both text and images. Across these datasets, their method outperformed several strong existing systems, achieving higher accuracy and better balance between catching fake posts and avoiding false alarms. When they looked at how posts clustered inside the model, real news tended to form tight, consistent groups, while fake news was more scattered—reflecting the many different tricks forgers use. The attribution outputs also proved useful in tough, real‑world examples: posts where the text and image seemed consistent at first glance were exposed as fake either because the image showed hidden manipulation traces or because outside knowledge contradicted the claimed facts.
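The "balance between catching fake posts and avoiding false alarms" is commonly summarized with precision, recall, and their harmonic mean, the F1 score. The article does not say which metrics the authors reported beyond accuracy, so the sketch below is only a generic illustration; the `binary_metrics` helper and the toy labels are made up for this example.

```python
def binary_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 with label 1 meaning "fake"."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # fakes correctly caught
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed fakes
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # real posts left alone

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Toy data: 4 fake posts (1) and 4 real posts (0).
truth = [1, 1, 1, 0, 0, 0, 0, 1]
guess = [1, 1, 0, 0, 0, 1, 0, 1]
print(binary_metrics(truth, guess))
```

High recall means few fakes slip through, high precision means few real posts are wrongly flagged, and F1 rewards a detector only when both are high at once.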

What this means for everyday readers

In simple terms, the study shows that it is possible to build fake‑news detectors that act less like oracles and more like careful investigators. Instead of giving a bare yes‑or‑no answer, EMAR‑FND highlights which part of a post is suspicious: the picture, the facts, the people mentioned, or the event itself. This kind of explanation can help fact‑checkers, platforms, and readers trust the system’s decisions and learn to recognize common patterns of deception. As fake news continues to evolve, tools that can both spot and explain manipulation will be crucial for keeping online information ecosystems healthier and more transparent.

Citation: Ji, W., Lv, H., Zhao, H. et al. Explainable multi-granularity attribution reasoning framework for fake news detection. npj Artif. Intell. 2, 38 (2026). https://doi.org/10.1038/s44387-026-00093-3

Keywords: fake news detection, multimodal misinformation, explainable AI, social media integrity, image forgery analysis