Clear Sky Science

Unraveling the emergence of collective behavior in networks of cognitive agents

Why thinking swarms matter

From robot collectives to online communities, groups of simple units can display surprisingly rich behavior. But what happens when each unit is not simple at all, and instead has powerful language-based reasoning like today’s large AI models? This study explores how swarms of such “cognitive agents” behave compared with classic rule-following particles, and what that means for tasks like problem solving and simulating societies.

From simple particles to talking agents

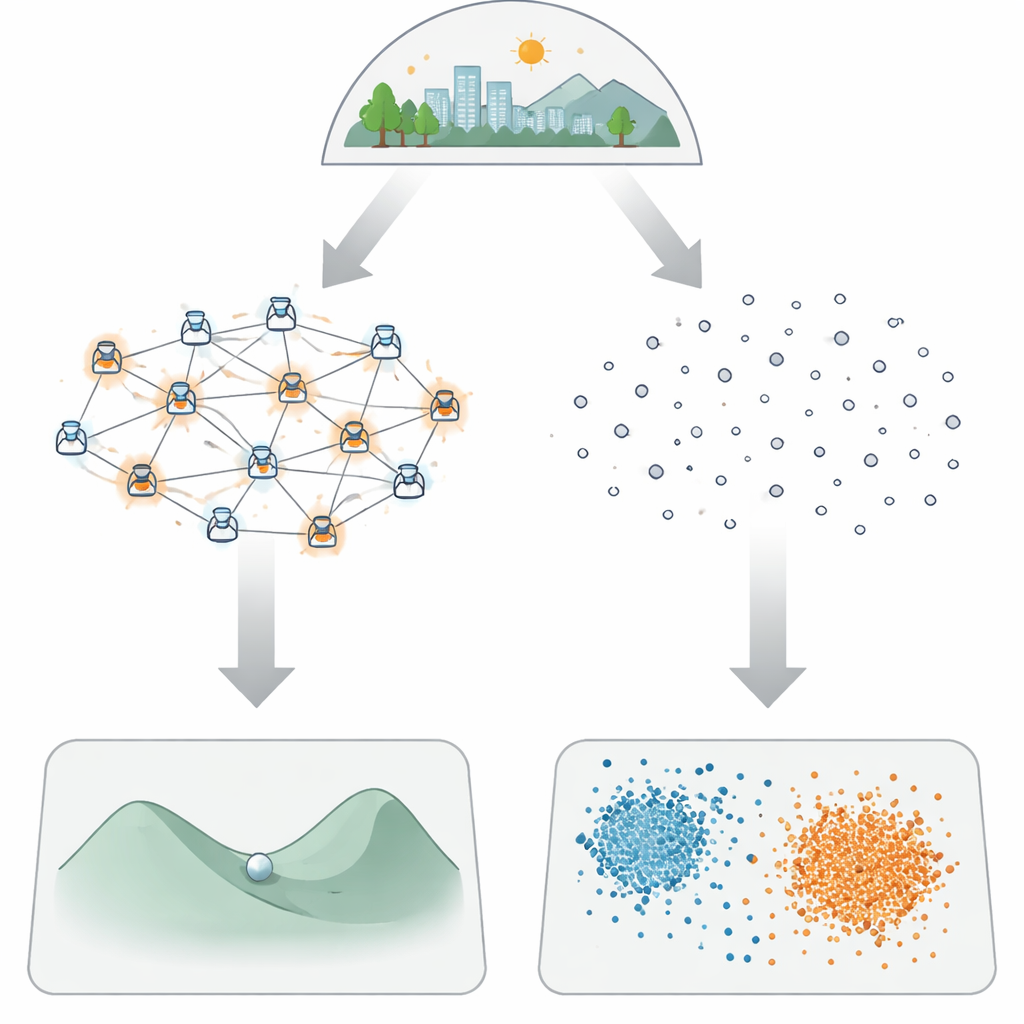

Traditional models of crowds, flocks, or swarms treat individuals as basic particles that follow fixed rules: move toward a neighbor, avoid collisions, prefer similar neighbors, and so on. In contrast, the agents studied here are powered by large language models (LLMs). They perceive their surroundings in words, reason about what to do next, remember past experiences, and even talk to one another. The authors ask a central question: when each unit has this built-in “smarts,” do the overall patterns that emerge at the group level change, and if so, how?

Testing swarms on hard problems

To probe this question, the researchers compare cognitive agents with classic particles on two very different challenges. The first is function optimization, a stand-in for hard search problems where the goal is to find the best solution in a bumpy landscape filled with many local traps. They introduce LLM Agent Swarm Optimization (llmASO), where a network of LLM agents proposes and shares candidate solutions in natural language. This is compared against a well-known particle-based method called Particle Swarm Optimization, as well as a single LLM optimizer that works alone. In simpler landscapes, single LLM agents rapidly find good answers by spotting patterns in past attempts. But in rougher terrains with many local pits, lone agents tend to settle too quickly on nearby “good enough” spots. Swarms of talking agents, by contrast, reliably discover the true best region—though they do so more slowly and are sensitive to how information flows through their communication network.
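For readers who want to see the particle-based baseline in concrete terms, here is a minimal sketch of textbook global-best Particle Swarm Optimization. It is not the authors' setup: the Rastrigin test function, the swarm size, and the coefficients `inertia`, `c_self`, and `c_swarm` are all illustrative assumptions chosen to show a bumpy landscape with many local traps.

```python
import math
import random


def rastrigin(x):
    """Bumpy test landscape (assumed example): many local minima, global minimum 0 at the origin."""
    return 10 * len(x) + sum(xi * xi - 10 * math.cos(2 * math.pi * xi) for xi in x)


def pso(f, dim=2, n_particles=30, iters=200, bound=5.12,
        inertia=0.7, c_self=1.5, c_swarm=1.5, seed=0):
    """Textbook global-best PSO; all parameter values are illustrative assumptions."""
    rng = random.Random(seed)
    pos = [[rng.uniform(-bound, bound) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]          # each particle's own best position so far
    best_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: best_val[i])
    g_pos, g_val = best_pos[g][:], best_val[g]  # best position seen by the whole swarm

    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Blend momentum, pull toward own best, and pull toward the swarm's best.
                vel[i][d] = (inertia * vel[i][d]
                             + c_self * r1 * (best_pos[i][d] - pos[i][d])
                             + c_swarm * r2 * (g_pos[d] - pos[i][d]))
                # Move and clamp to the search box.
                pos[i][d] = max(-bound, min(bound, pos[i][d] + vel[i][d]))
            val = f(pos[i])
            if val < best_val[i]:
                best_pos[i], best_val[i] = pos[i][:], val
                if val < g_val:
                    g_pos, g_val = pos[i][:], val
    return g_pos, g_val


if __name__ == "__main__":
    position, value = pso(rastrigin)
    print("best position:", position, "best value:", value)
```

In this variant every particle sees the swarm-wide best immediately, which corresponds to a fully connected information network. Restricting each particle to the best found among a few neighbors would be one way to mimic the limited information flow discussed in the article.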

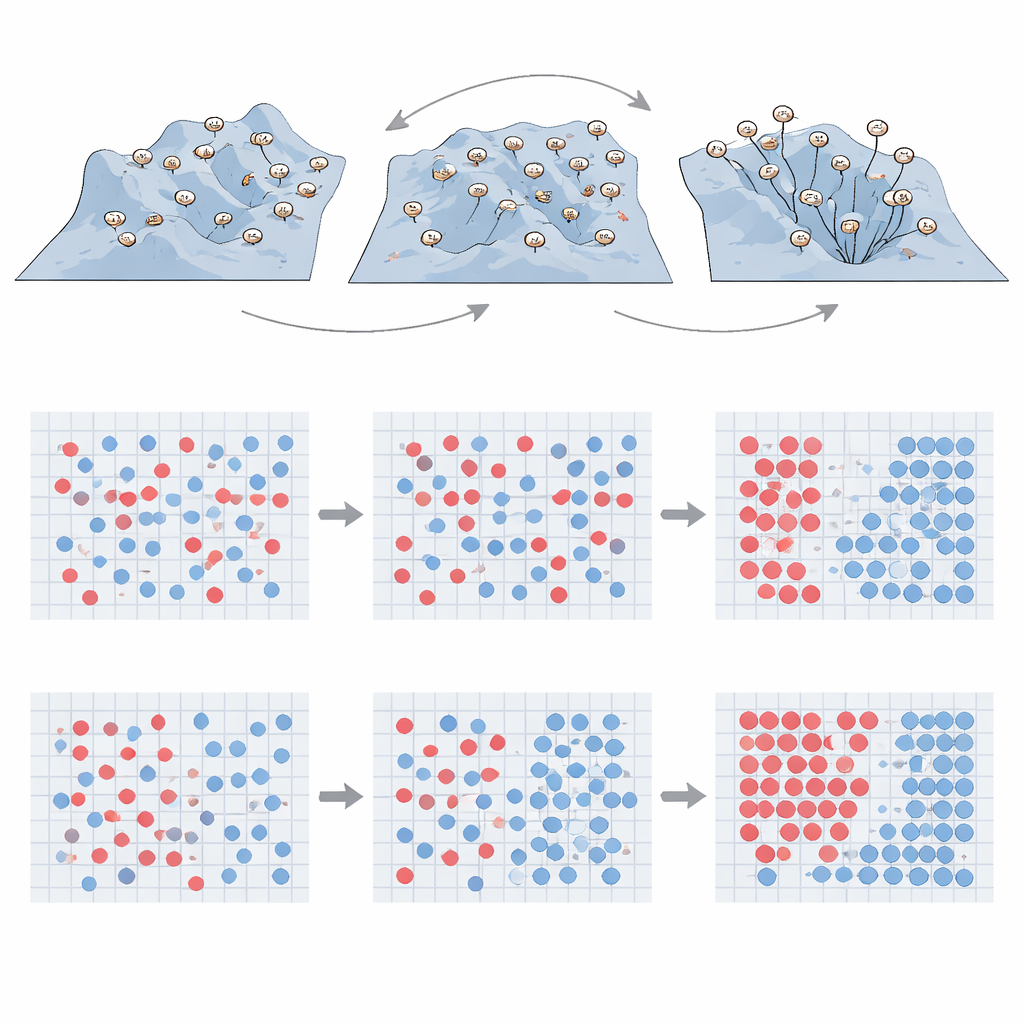

How talking changes social patterns

The second test revisits the classic Schelling model of segregation, which shows how mild preferences for similar neighbors can lead to stark separation between groups. Here, the agents move on a grid and belong to one of two types; an agent is “happy” when the share of neighbors that differ from it stays within its tolerance. For standard particles that follow simple relocation rules, three familiar phases appear as tolerance changes: a mixed state with constant shuffling, a segregated state with distinct clusters, and a frozen state where movement largely stops. Cognitive agents obey the same basic satisfaction rule but decide where to move after exchanging messages with other agents. When every agent can talk to every other, the big-picture outcome looks surprisingly similar to the particle case, suggesting that merely adding language and reasoning does not automatically overturn well-known segregation patterns.
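The satisfaction rule for the particle baseline can be sketched in a few lines. This is an illustrative version, not the study's code: the eight-cell neighborhood, the wrap-around edges, the `tolerance` threshold convention, and the random relocation in `step` are assumptions, and the grid is assumed to be at least 3 by 3.

```python
import random


def is_happy(grid, r, c, tolerance):
    """Happy if the fraction of occupied neighbors of the other type is at most `tolerance`."""
    n = len(grid)
    me = grid[r][c]
    like = unlike = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if dr == 0 and dc == 0:
                continue
            other = grid[(r + dr) % n][(c + dc) % n]  # wrap around the grid edges
            if other is None:                          # empty cells are ignored
                continue
            if other == me:
                like += 1
            else:
                unlike += 1
    total = like + unlike
    return total == 0 or unlike / total <= tolerance


def step(grid, tolerance, rng):
    """Move one random unhappy agent to a random empty cell; return False if no move is possible."""
    n = len(grid)
    unhappy = [(r, c) for r in range(n) for c in range(n)
               if grid[r][c] is not None and not is_happy(grid, r, c, tolerance)]
    empty = [(r, c) for r in range(n) for c in range(n) if grid[r][c] is None]
    if not unhappy or not empty:
        return False
    r, c = rng.choice(unhappy)
    er, ec = rng.choice(empty)
    grid[er][ec], grid[r][c] = grid[r][c], None
    return True


if __name__ == "__main__":
    rng = random.Random(0)
    size = 10
    cells = ["A"] * 45 + ["B"] * 45 + [None] * 10
    rng.shuffle(cells)
    grid = [cells[i * size:(i + 1) * size] for i in range(size)]
    moves = 0
    while moves < 5000 and step(grid, tolerance=0.5, rng=rng):
        moves += 1
    print("moves made:", moves)
```

A cognitive agent in the study keeps the same happiness test but replaces the random choice of destination with a decision reached after exchanging messages, which this sketch does not attempt to model.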

Networks and “birds of a feather” effects

The story changes once the structure of conversations is made more realistic. The authors rewire the communication network so that agents mostly talk to nearby peers, or so that connections follow patterns seen in many real-world social systems with a few highly connected hubs. They also experiment with homophily (agents preferentially talking to the same type) and heterophily (preferring the opposite type). These tweaks have strong consequences: when agents mostly converse with similar peers, they coordinate quickly, form clusters efficiently, and can even avoid the endlessly mixed phase. When they talk mainly across types, the path to happiness is slower and more complex, yet strong segregation can still arise—even though every conversation crosses group lines. Overall, local conversations and “birds of a feather” tendencies reshape how segregation emerges, in ways not accessible to non-thinking particles.
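One hedged way to picture homophily and heterophily is as a single dial on how each agent picks its conversation partners. In the sketch below, the function `build_contacts`, the number of contacts `k`, and the `homophily` probability are hypothetical names and parameters introduced for illustration; the study's actual network construction may differ.

```python
import random


def build_contacts(types, k, homophily, seed=0):
    """Give each agent up to k contacts; each pick is same-type with probability `homophily`.

    homophily near 1.0 means agents talk mostly to their own type;
    homophily near 0.0 corresponds to heterophily (mostly the other type).
    Contacts are directed in this sketch, which is a simplifying assumption.
    """
    rng = random.Random(seed)
    contacts = {}
    for i, t in enumerate(types):
        same = [j for j, u in enumerate(types) if u == t and j != i]
        other = [j for j, u in enumerate(types) if u != t]
        chosen = set()
        while len(chosen) < k:
            preferred, fallback = (same, other) if rng.random() < homophily else (other, same)
            candidates = [j for j in preferred if j not in chosen]
            if not candidates:
                # Preferred group exhausted: fall back to the remaining agents.
                candidates = [j for j in fallback if j not in chosen]
            if not candidates:
                break  # fewer than k other agents exist
            chosen.add(rng.choice(candidates))
        contacts[i] = chosen
    return contacts


def same_type_fraction(types, contacts):
    """Share of all contacts that link agents of the same type."""
    links = [(i, j) for i, group in contacts.items() for j in group]
    if not links:
        return 0.0
    return sum(types[i] == types[j] for i, j in links) / len(links)


if __name__ == "__main__":
    agent_types = ["A"] * 10 + ["B"] * 10
    for h in (0.0, 0.5, 1.0):
        net = build_contacts(agent_types, k=3, homophily=h)
        print("homophily", h, "same-type share", same_type_fraction(agent_types, net))
```

Sweeping the dial between the two extremes gives a simple way to compare how the mix of within-type and cross-type conversations changes, independent of whatever movement rule the agents follow.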

What this means for future AI swarms and social simulations

The authors conclude that giving each agent advanced language-based abilities does not simply make groups universally better. Instead, these capabilities introduce new forces—such as rapid consensus and pattern exploitation—that can be helpful or harmful depending on how agents are wired together. In optimization tasks, poorly designed networks can push smart agents to agree too fast on mediocre solutions; carefully limiting information flow helps them explore more broadly, at the cost of speed. In social simulations, realistic communication patterns and homophily can generate behaviors different from classic models and may better mirror human societies. As swarms of AI-driven robots and networks of virtual agents become more common, understanding and tuning these collective effects will be crucial for designing safe, effective systems.

Citation: Zomer, N., De Domenico, M. Unraveling the emergence of collective behavior in networks of cognitive agents. npj Artif. Intell. 2, 36 (2026). https://doi.org/10.1038/s44387-026-00091-5

Keywords: cognitive agents, large language models, swarm optimization, segregation dynamics, network topology