Clear Sky Science · en

POLYT5: an encoder-decoder foundation chemical language model for generative polymer design

Teaching Computers the Language of Plastics

Plastics and other polymers are everywhere—from phone cases and power cables to electric vehicle batteries. Yet discovering new polymers with just the right mix of strength, flexibility, and electrical behavior is slow and expensive. This article introduces POLYT5, an artificial intelligence system that learns the “language” of polymers so it can both predict their properties and dream up promising new ones, helping scientists rapidly design materials for advanced electronics and energy storage.

Why New Polymers Are Hard to Find

Designing a new polymer is like searching for a single useful sentence in a library of all possible letter combinations. Chemists can tweak building blocks and test the results, but the number of possibilities is astronomical. Traditional machine learning has helped by predicting properties of known polymers, yet these tools usually depend on hand-crafted numerical descriptors and still require people to guess which candidate structures to test. General-purpose large language models can generate molecules, but they often lack the chemical “common sense” needed for reliable materials design, producing formulas that look legal on paper but are unrealistic or unsynthesizable in the lab.

Giving AI a Polymer-Focused Vocabulary

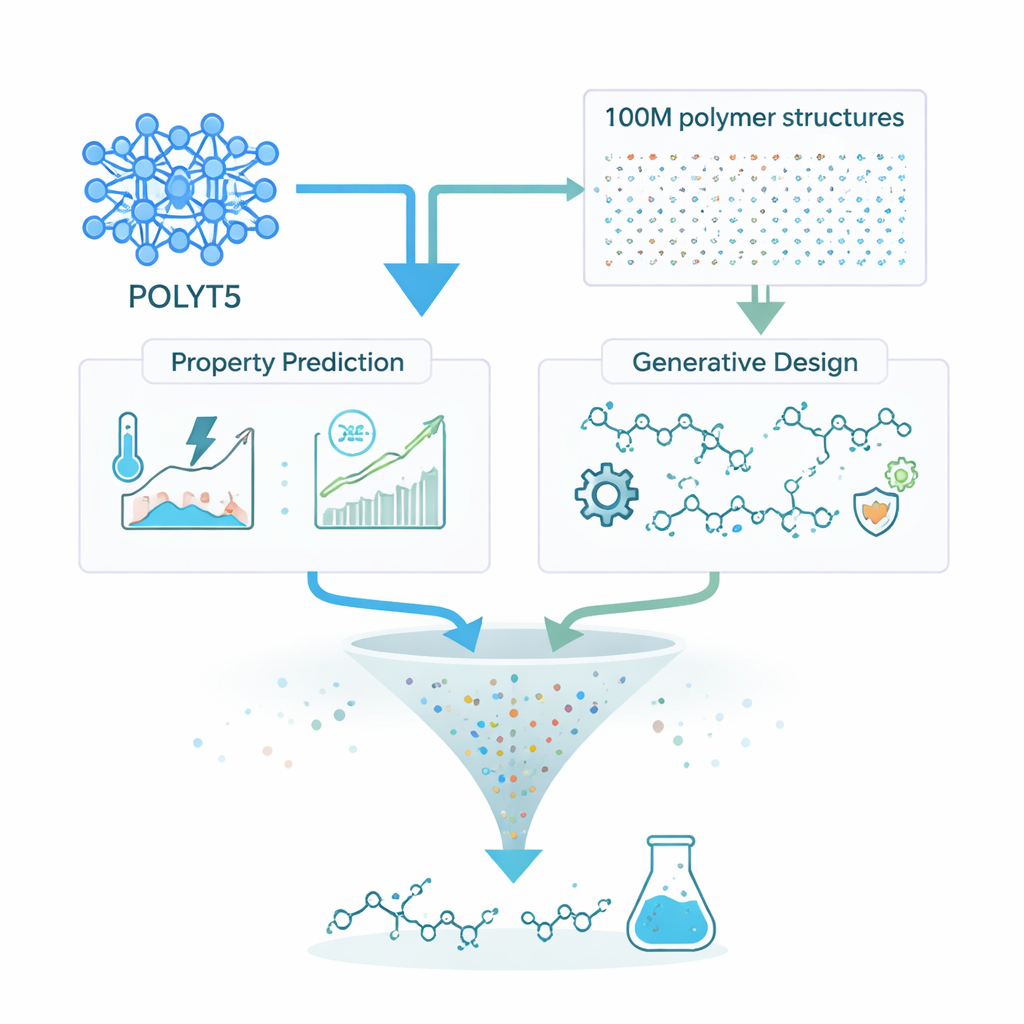

POLYT5 tackles this challenge by training a language model specifically on polymer structures, rather than on generic text. The authors assembled a vast training set: over 12,000 real polymers from the literature plus more than 100 million hypothetical polymers created with well-established reactions used by chemists. To feed these structures into a language model, they converted each polymer into a robust string representation that guarantees chemically valid molecules. Special tokens mark the ends of the repeating unit and encode simple property information. Using the T5 encoder–decoder architecture, POLYT5 learns to reconstruct masked pieces of these strings, gradually internalizing recurring patterns—such as common backbones and functional groups—and how they relate to material behavior.
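To make "reconstructing masked pieces" concrete, here is a minimal sketch of span masking in the style of the original T5 training objective. It is an illustration under stated assumptions, not the authors' code: the bracketed tokens are a made-up repeat unit, the `<extra_id_N>` placeholder names follow common T5 tooling, and whether POLYT5 masks spans exactly this way is not stated in this summary.

```python
def span_corrupt(tokens, spans):
    """Mask the given (start, end) spans T5-style.

    Returns (model_input, target). Each masked span is replaced in the input
    by one sentinel token; the target lists each sentinel followed by the
    tokens it hides, and ends with one final sentinel.
    """
    model_input, target = [], []
    cursor = 0
    for i, (start, end) in enumerate(sorted(spans)):
        sentinel = f"<extra_id_{i}>"
        model_input.extend(tokens[cursor:start])  # keep the visible tokens
        model_input.append(sentinel)              # hide the span behind a sentinel
        target.append(sentinel)
        target.extend(tokens[start:end])          # the model must recover these
        cursor = end
    model_input.extend(tokens[cursor:])
    target.append(f"<extra_id_{len(spans)}>")     # closing sentinel
    return model_input, target


# Hypothetical tokenized repeat unit; "[*]" stands in for the end-of-unit markers.
tokens = ["[*]", "[C]", "[C]", "[O]", "[C]", "[=O]", "[*]"]
model_input, target = span_corrupt(tokens, [(1, 3), (5, 6)])
print(model_input)
print(target)
```

The encoder sees the string with gaps, and the decoder is trained to write out what belongs in each gap. Repeating this over many structures is how a model of this kind can pick up which fragments tend to appear together.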

From Reading Polymers to Predicting Their Behavior

After this large-scale training, POLYT5 is fine-tuned for practical tasks. One set of models predicts key polymer properties: glass transition temperature (where a plastic softens), melting and decomposition temperatures, electronic band gap, dielectric constant (how well it stores electrical energy), and whether a polymer dissolves in various liquids. Across thousands of examples, the model’s predictions closely match known values, with errors comparable to or better than previous machine-learning approaches. Importantly, POLYT5 can handle many different properties with the same underlying representation, reducing the need for custom features or separate tools for each task.

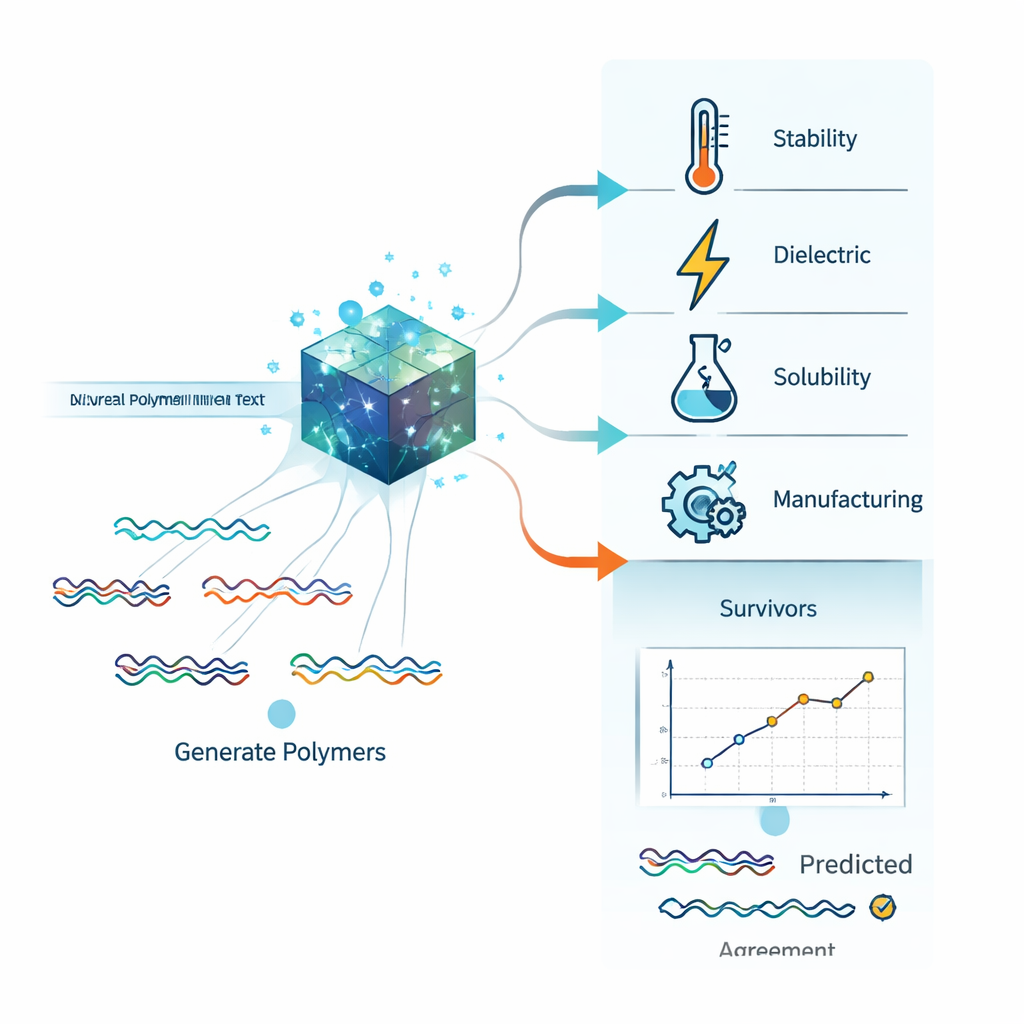

Asking the Model to Invent New Materials

The same framework can also run in reverse: instead of predicting properties for a given polymer, POLYT5 can generate polymer structures that match a desired target. The authors focus on the glass transition temperature because it is crucial for mechanical and thermal stability in devices. By giving the model a target value, such as 500 kelvin, they ask it to produce string representations of hypothetical polymers that should soften around that temperature. The team explored how sampling settings affect the balance between variety and validity, and ultimately generated over six million unique, chemically sensible candidates. These candidates cluster around the chosen temperature yet remain structurally distinct from known polymers.
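One common sampling setting in language models is the temperature, and the sketch below shows how it trades caution for variety. This is only an assumption about the kind of knob involved: the summary does not say which settings the authors tuned, and the scores here are invented for illustration.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw token scores into sampling probabilities at a given temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical scores for three candidate next tokens.
logits = [2.0, 1.0, 0.1]
cautious = softmax_with_temperature(logits, 0.5)     # low temperature
adventurous = softmax_with_temperature(logits, 1.5)  # high temperature
print(cautious)
print(adventurous)
```

A low temperature concentrates probability on the top-scoring token, which tends to favor safe, repetitive output. A high temperature spreads probability toward less likely tokens, which increases variety but also the chance of implausible structures.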

Finding a Few Gems Among Millions

To demonstrate real-world impact, the researchers turn POLYT5 toward a specific goal: polymers for high-performance electrical insulators and energy-storage devices. Starting from the millions of generated candidates, they apply a multi-step digital filter using POLYT5’s own property predictors. Polymers must have a relatively high dielectric constant, a wide electronic band gap to avoid breakdown, good thermal stability, and practical processing windows. They must also dissolve in common, environmentally friendly solvents like water or ethanol and appear synthetically accessible by standard chemistry rules. This funnel narrows the field to about 18,000 promising options. From these, the team selects one candidate that is straightforward to synthesize. When they make it in the lab and measure its properties, the experimental results line up well with POLYT5’s predictions, falling within expected error ranges.
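The screening funnel described above can be pictured as a chain of pass/fail checks applied to predicted properties. The sketch below is a toy version under loud assumptions: the `Candidate` fields, the three example polymers, and every threshold are hypothetical and are not the values used in the paper. It also leaves out the processing-window check for brevity.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    dielectric_constant: float
    band_gap_ev: float
    decomposition_temp_k: float
    soluble_in: frozenset
    synthetically_accessible: bool


GREEN_SOLVENTS = frozenset({"water", "ethanol"})

# Illustrative thresholds only; the paper's actual cutoffs are not given here.
FILTERS = [
    ("dielectric constant", lambda c: c.dielectric_constant >= 4.0),
    ("band gap", lambda c: c.band_gap_ev >= 5.0),
    ("thermal stability", lambda c: c.decomposition_temp_k >= 600.0),
    ("green solubility", lambda c: not c.soluble_in.isdisjoint(GREEN_SOLVENTS)),
    ("synthesizability", lambda c: c.synthetically_accessible),
]


def run_funnel(candidates, filters=FILTERS):
    """Apply each filter in order and record how many candidates survive it."""
    survivors = list(candidates)
    counts = []
    for label, keep in filters:
        survivors = [c for c in survivors if keep(c)]
        counts.append((label, len(survivors)))
    return survivors, counts


# Made-up candidates with made-up predicted properties.
pool = [
    Candidate("A", 5.0, 5.5, 650.0, frozenset({"ethanol"}), True),
    Candidate("B", 3.0, 6.0, 700.0, frozenset({"water"}), True),
    Candidate("C", 4.5, 5.2, 620.0, frozenset({"chloroform"}), True),
]
survivors, counts = run_funnel(pool)
print([c.name for c in survivors])
print(counts)
```

Recording the survivor count after each step shows which requirement removes the most candidates, which is useful when deciding whether a cutoff is too strict.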

Making Advanced Polymer Design Accessible

Beyond the core model, the authors build an “agentic” AI interface that lets users work with POLYT5 through natural-language conversation. A general-purpose language model interprets questions such as “Predict the dielectric constant of this polymer” or “Suggest polymers with a high melting point that dissolve in ethanol,” then routes them to the appropriate POLYT5 tools under the hood. This setup hides the complexity of chemical string formats and model selection, making powerful polymer design capabilities available to both specialists and non-experts. In plain terms, POLYT5 shows that teaching an AI to read and write the language of plastics can greatly speed up the hunt for new, high-performance materials, potentially shortening the path from computer screen to working devices.
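The routing step can be sketched as a small dispatch table. This sketch assumes the general-purpose language model has already turned the user's question into a structured request; the tool names, the request shape, and the placeholder functions are all hypothetical stand-ins rather than the authors' interface.

```python
def predict_property(polymer, prop):
    # Placeholder standing in for a fine-tuned property predictor.
    return f"predict {prop} for {polymer}"


def generate_polymers(constraints):
    # Placeholder standing in for the generative model.
    return f"generate polymers with {constraints}"


# Hypothetical registry mapping a tool name to the function that handles it.
TOOLS = {
    "predict": predict_property,
    "generate": generate_polymers,
}


def route(request):
    """Dispatch a structured request of the form {'tool': ..., 'args': {...}}."""
    tool = TOOLS.get(request["tool"])
    if tool is None:
        raise ValueError(f"unknown tool: {request['tool']}")
    return tool(**request["args"])


reply = route({
    "tool": "predict",
    "args": {"polymer": "[*]CC[*]", "prop": "dielectric constant"},
})
print(reply)
```

Keeping the tools behind a single `route` function means the conversational layer never needs to know about string formats or which model serves which property.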

Citation: Sahu, H., Xiong, W., Savit, A. et al. POLYT5: an encoder-decoder foundation chemical language model for generative polymer design. npj Artif. Intell. 2, 30 (2026). https://doi.org/10.1038/s44387-026-00087-1

Keywords: polymer design, chemical language model, materials discovery, dielectric polymers, generative AI