Clear Sky Science · en

DeepKoopFormer: a Koopman enhanced transformer based architecture for time series forecasting

Why smarter forecasts matter

From weather and energy planning to financial markets, many of our biggest decisions depend on predicting how things will change over time. These "time series" (streams of measurements like wind speed, electricity output, or cryptocurrency prices) are becoming longer, noisier, and more complex. Modern AI tools called Transformers can crunch this data, but they often behave like black boxes and can become unstable when pushed to predict far into the future. This paper introduces DeepKoopFormer, a forecasting method that keeps the predictive power of Transformers while adding mathematical structure to make their behavior more stable, interpretable, and reliable over long horizons.

A new way to steady powerful models

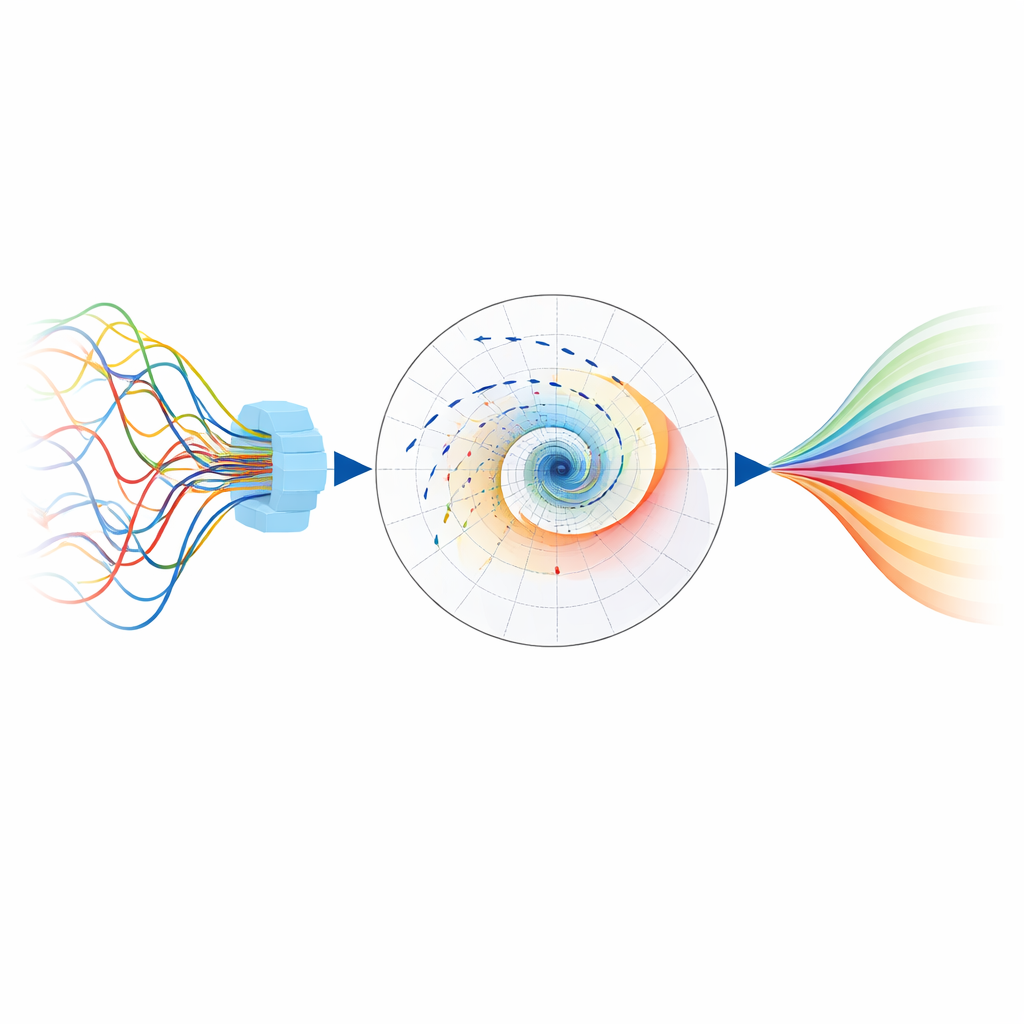

The authors start from a familiar idea in today’s AI: use a Transformer to digest raw time series into a rich internal representation. DeepKoopFormer then inserts a carefully designed middle layer inspired by a concept from dynamical systems known as the Koopman operator. Instead of letting the model evolve in a complicated, wholly nonlinear way, this middle layer advances the internal state using a simple linear transformation in a hidden space. Crucially, this transformation is built so that its influence gradually shrinks over time rather than amplifying wildly, which mathematically guarantees that long-range forecasts will not blow up or oscillate uncontrollably.
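A short worked bound shows why a shrinking linear step rules out blow-up. The notation here is assumed for illustration and is not taken from the paper: $z_t$ is the hidden state at step $t$, $K$ is the linear map, and $\rho$ is an upper bound on how much $K$ can stretch any vector (its spectral norm).

$$
z_{t+1} = K z_t, \qquad \|K\|_2 \le \rho < 1 \;\;\Longrightarrow\;\; \|z_{t+h}\|_2 = \|K^h z_t\|_2 \le \rho^h \, \|z_t\|_2 \longrightarrow 0 \quad \text{as } h \to \infty .
$$

For example, with $\rho = 0.9$ the hidden state after 50 steps is at most $0.9^{50} \approx 0.005$ times its starting size, so repeated updates can only damp it, never amplify it.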

How the model keeps its balance

To enforce this stability, DeepKoopFormer constrains the linear step in two ways. First, the transformation is factored into three pieces: two orthogonal matrices (which preserve lengths and angles) wrapped around a diagonal matrix of scale factors that are all forced to be less than one in magnitude. Because the orthogonal pieces cannot stretch anything, the hidden state is gently contracted rather than amplified each time it is updated. Second, a technique called Lyapunov regularization adds a training penalty whenever the energy of the hidden state grows from one step to the next. Together, these mechanisms ensure the internal dynamics are calm and well-behaved, while the Transformer before and the linear decoder after this step remain free to be expressive. The model’s capacity to learn rich patterns and its stability are controlled by separate settings, so users can tune one without breaking the other.
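The two ingredients described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the sigmoid squashing of the scale factors, the cap `rho_max`, the QR-based orthogonal matrices, the ReLU form of the penalty, and the latent dimension of 8 are all choices made here for the example.

```python
import numpy as np

def random_orthogonal(d, rng):
    """Return a d x d orthogonal matrix from the QR factorization of a Gaussian matrix."""
    q, _ = np.linalg.qr(rng.standard_normal((d, d)))
    return q

def build_stable_operator(raw_scales, U, V, rho_max=0.99):
    """Compose K = U diag(s) V^T with every scale factor s_i in (0, rho_max).

    The sigmoid squashing and rho_max are assumptions made for this sketch.
    """
    scales = rho_max / (1.0 + np.exp(-raw_scales))
    return U @ np.diag(scales) @ V.T

def lyapunov_penalty(z_now, z_next):
    """Penalize only increases in squared norm ("energy") between consecutive states."""
    growth = np.sum(z_next**2, axis=-1) - np.sum(z_now**2, axis=-1)
    return np.mean(np.maximum(growth, 0.0))

rng = np.random.default_rng(0)
d = 8  # hypothetical latent dimension
U, V = random_orthogonal(d, rng), random_orthogonal(d, rng)
K = build_stable_operator(rng.standard_normal(d), U, V)

# Roll the hidden state forward and record its size at every step.
z = rng.standard_normal(d)
norms = [np.linalg.norm(z)]
for _ in range(20):
    z = K @ z
    norms.append(np.linalg.norm(z))

# The largest stretch K can apply equals its largest scale factor.
spectral_norm = np.linalg.norm(K, 2)
print(f"spectral norm of K: {spectral_norm:.3f}")
print(f"state norm after 20 steps: {norms[-1]:.4f} (started at {norms[0]:.4f})")
```

Because the orthogonal factors leave lengths unchanged, the diagonal scale factors alone decide how much the state can grow, which is why capping them below one is enough to force contraction. In this sketch the penalty is exactly zero for any state advanced by `K`; in a trained model it would act as a soft check on the learned latent trajectories.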

Putting the method to the test

The researchers evaluate DeepKoopFormer on both controlled and real-world problems. They first test it on classic chaotic systems such as the Lorenz attractor, where small changes can lead to vastly different futures, and add random noise to mimic real measurements. Across different Transformer backbones, the Koopman-enhanced versions closely track the true trajectories while maintaining stable internal behavior over many short-range forecasts. The authors then move to demanding real datasets: climate projections and reanalysis over Germany (wind speed and surface pressure), cryptocurrency prices, and electricity generation from multiple sources in Spain. In these cases, DeepKoopFormer variants are compared against a standard long short-term memory (LSTM) network and simpler linear baselines, across many choices of input window length, forecasting horizon, and model size.
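To make the synthetic benchmark concrete, the sketch below generates a noisy Lorenz trajectory and slices it into input windows and forecast targets. It uses the classic Lorenz parameters (sigma = 10, rho = 28, beta = 8/3); the step size, noise level, window length, and horizon are illustrative assumptions, not the settings used in the paper.

```python
import numpy as np

def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz equations with the classic parameters."""
    x, y, z = state
    return np.array([sigma * (y - x), x * (rho - z) - y, x * y - beta * z])

def simulate_lorenz(n_steps, dt=0.01, x0=(1.0, 1.0, 1.0)):
    """Integrate the Lorenz system with the classical fourth-order Runge-Kutta scheme."""
    traj = np.empty((n_steps, 3))
    state = np.asarray(x0, dtype=float)
    for i in range(n_steps):
        traj[i] = state
        k1 = lorenz_rhs(state)
        k2 = lorenz_rhs(state + 0.5 * dt * k1)
        k3 = lorenz_rhs(state + 0.5 * dt * k2)
        k4 = lorenz_rhs(state + dt * k3)
        state = state + (dt / 6.0) * (k1 + 2 * k2 + 2 * k3 + k4)
    return traj

def make_windows(series, input_len, horizon):
    """Slice a (T, d) series into overlapping (input window, forecast target) pairs."""
    n = len(series) - input_len - horizon + 1
    inputs = np.stack([series[i:i + input_len] for i in range(n)])
    targets = np.stack([series[i + input_len:i + input_len + horizon] for i in range(n)])
    return inputs, targets

rng = np.random.default_rng(0)
clean = simulate_lorenz(2000)
# Additive Gaussian noise mimics imperfect measurements (noise level is an assumption).
noisy = clean + 0.1 * rng.standard_normal(clean.shape)

X, Y = make_windows(noisy, input_len=64, horizon=16)
print(X.shape, Y.shape)
```

Varying `input_len` and `horizon` in `make_windows` corresponds to the sweep over input window length and forecasting horizon mentioned above; any forecasting model can then be trained to map each row of `X` to the matching row of `Y`.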

What the experiments reveal

Across climate, financial, and energy tasks, the Koopman-augmented Transformer models generally achieve lower prediction errors and more stable behavior than the LSTM baseline, especially when forecasting many steps ahead or working with high-dimensional data. For wind and pressure over Germany, and for electricity generation, the PatchTST and Informer versions of DeepKoopFormer tend to perform best, reliably capturing both smooth trends and rapid fluctuations. In some special cases where the underlying patterns are almost entirely linear, a very simple linear method still wins on test accuracy, highlighting that no single model is universally optimal. However, the Koopman-based designs consistently show smoother error patterns as forecasting horizons grow, indicating better control of long-term uncertainty and less tendency to overfit quirky details in the training data.

Why this approach is promising

In the end, DeepKoopFormer shows that it is possible to combine the flexibility of deep learning with the guarantees of classical dynamical systems theory. By inserting a structured, stable linear step into an otherwise standard Transformer pipeline, the authors obtain forecasts that are accurate, robust to noise, and mathematically easier to reason about. For practitioners who rely on long-range forecasts in climate science, energy systems, or finance—where stability and interpretability are as important as raw accuracy—this framework offers a way to trust powerful neural models a bit more, and to understand how and why their predictions behave over time.

Citation: Forootani, A., Khosravi, M. & Barati, M. DeepKoopFormer: a Koopman enhanced transformer based architecture for time series forecasting. npj Artif. Intell. 2, 35 (2026). https://doi.org/10.1038/s44387-026-00085-3

Keywords: time series forecasting, transformer models, Koopman operator, stable dynamics, climate and energy data