Clear Sky Science

A variational framework for residual-based adaptivity in neural PDE solvers and operator learning

Smarter AI for Tough Equations

Many of today’s scientific breakthroughs, from climate modeling to the design of new materials, depend on solving complex equations that describe how fluids flow, waves travel, or chemical fronts move. Neural networks have recently become powerful tools for tackling these equations, but they often struggle when the physics gets difficult: sharp shocks, fine-scale structures, and long-time predictions can all cause them to fail. This paper introduces a systematic way to make these AI solvers concentrate their effort where they struggle most, so that they train faster and reach more accurate solutions.

Why Neural Networks Need Guidance

In scientific machine learning, neural networks are trained either to approximate the solution of a single equation (as in physics-informed neural networks, or PINNs) or to learn a whole mapping from inputs to solutions (known as operator learning). In both cases, the network is judged by a “residual,” a measure of how badly it violates the underlying equation at each point in space and time. Standard training treats all points equally and minimizes the average residual. That works for simple problems, but for equations with steep gradients, moving fronts, or localized structures, a low average can hide large errors confined to small but critical regions. Researchers have responded with ad‑hoc rules that place more training points where the residual is large, but until now these rules have remained heuristic and only loosely justified.
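To make the idea of a residual, and of an average hiding a localized error, concrete, here is a small sketch that is not taken from the paper. It uses the viscous Burgers’ equation u_t + u·u_x = ν·u_xx, whose travelling-wave solution is known exactly. The viscosity, the grid, and the deliberately "too smooth" candidate are illustrative assumptions, and derivatives are taken by central finite differences rather than the automatic differentiation a real PINN would use.

```python
import math

NU = 0.01  # viscosity in u_t + u*u_x = NU*u_xx (illustrative value)


def travelling_wave(t, x, nu):
    """Exact viscous Burgers' solution joining u=1 (left) to u=0 (right).

    The front moves at speed 1/2 and its width is proportional to nu.
    """
    return 0.5 - 0.5 * math.tanh((x - 0.5 * t) / (4.0 * nu))


def exact(t, x):
    return travelling_wave(t, x, NU)


def smeared(t, x):
    # Hypothetical wrong candidate: correct shape, but the front is twice as wide.
    return travelling_wave(t, x, 2.0 * NU)


def burgers_residual(u, t, x, nu=NU, h=1e-4):
    """Pointwise residual u_t + u*u_x - nu*u_xx, using central differences."""
    u0 = u(t, x)
    u_t = (u(t + h, x) - u(t - h, x)) / (2.0 * h)
    u_x = (u(t, x + h) - u(t, x - h)) / (2.0 * h)
    u_xx = (u(t, x + h) - 2.0 * u0 + u(t, x - h)) / h**2
    return u_t + u0 * u_x - nu * u_xx


def residual_summary(u, t=0.2, n=2001):
    """Mean and max of |residual| on a uniform grid over x in [-1, 1]."""
    xs = [-1.0 + 2.0 * i / (n - 1) for i in range(n)]
    res = [abs(burgers_residual(u, t, x)) for x in xs]
    return sum(res) / n, max(res)


mean_s, max_s = residual_summary(smeared)
mean_e, max_e = residual_summary(exact)
print(f"smeared: mean |r| = {mean_s:.3f}, max |r| = {max_s:.3f}")
print(f"exact:   max |r| = {max_e:.1e}")
```

Because the smeared candidate solves Burgers’ equation with twice the viscosity, its residual is exactly ν·u_xx, which is nonzero only near the front. Its peak residual is roughly ten times its grid average, so a loss built on the average understates how wrong the front is.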

A Unified Recipe for Adaptive Attention

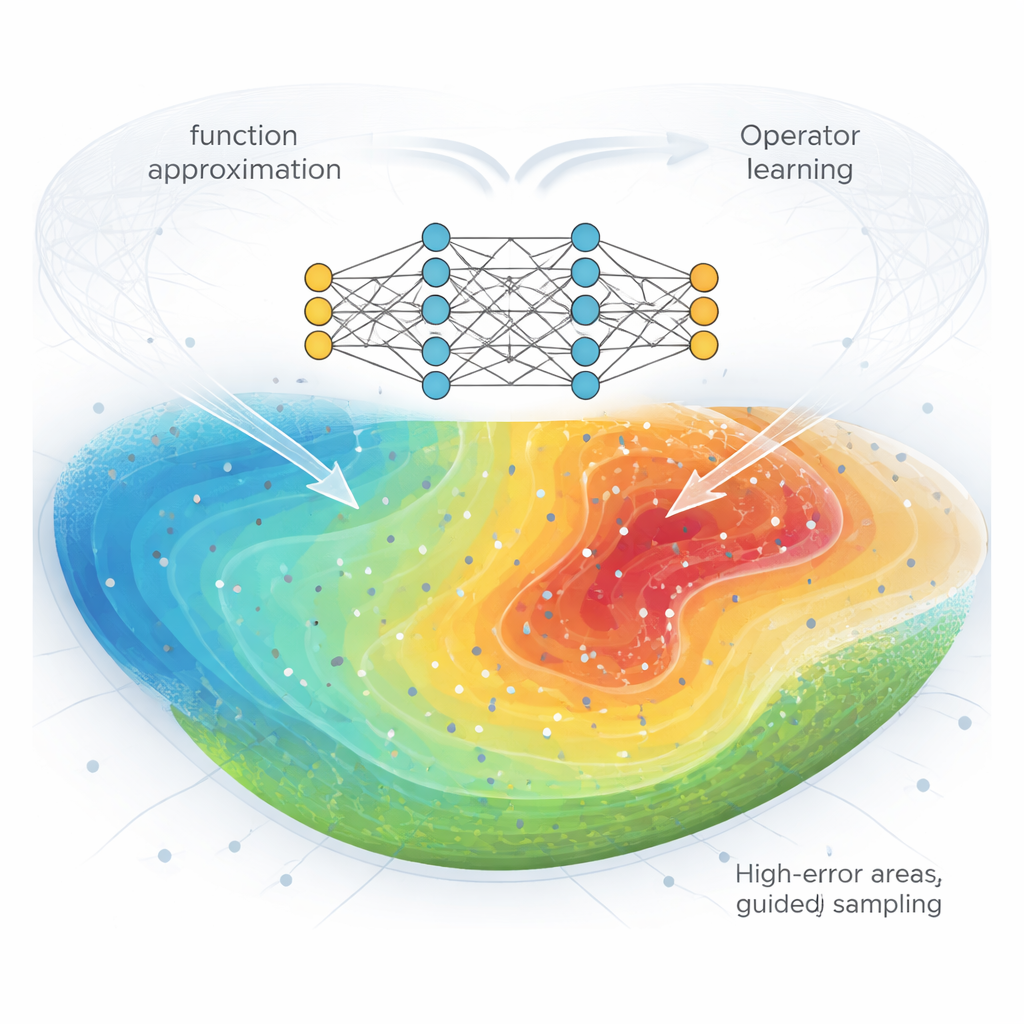

The authors develop a variational framework that turns these heuristics into a principled recipe. The key idea is to see sampling and weighting as choices about which probability distribution over space (and over training examples) the network should care about most. They introduce a family of “potential” functions that transform the residual into a new objective. Different choices of potential correspond to different priorities: an exponential potential drives the network to reduce its single worst error, while a quadratic potential emphasizes reducing the spread, or variance, of the error across the domain. Mathematically, optimizing these transformed objectives naturally leads to sampling more often in regions where the current residual is large. The resulting method, called variational residual-based attention (vRBA), subsumes many existing adaptive schemes and provides a clear way to invent new ones.
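The link between a potential and "where to sample" can be sketched in a few lines. The specific forms below are assumptions chosen for illustration, not the paper’s exact definitions: an exponential potential whose objective is the log-mean-exp of the residuals (which lies between their mean and their maximum and approaches the maximum as the temperature `eps` shrinks), and a quadratic potential whose objective, the mean squared residual, equals the squared mean plus the variance. In both cases the attention weight of each point is taken proportional to the derivative of the objective with respect to that point’s residual, which is a softmax for the exponential case and the residual itself for the quadratic case.

```python
import math
import random


def attention_weights(residuals, potential="exponential", eps=0.05):
    """Map nonnegative residual magnitudes to a sampling distribution.

    Assumption for illustration: weights are proportional to Phi'(r).
    With Phi(r) = eps*exp(r/eps) this is a softmax of r/eps;
    with Phi(r) = r**2/2 it is simply r.
    """
    if potential == "exponential":
        r_max = max(residuals)  # shift by the max so exp() cannot overflow
        raw = [math.exp((r - r_max) / eps) for r in residuals]
    elif potential == "quadratic":
        raw = list(residuals)
    else:
        raise ValueError(f"unknown potential: {potential!r}")
    total = sum(raw)
    return [w / total for w in raw]


def exponential_objective(residuals, eps=0.05):
    """eps*log(mean(exp(r/eps))): between mean(r) and max(r), tends to max(r) as eps -> 0."""
    r_max = max(residuals)
    mean_exp = sum(math.exp((r - r_max) / eps) for r in residuals) / len(residuals)
    return r_max + eps * math.log(mean_exp)


def quadratic_objective(residuals):
    """mean(r**2), written as mean(r)**2 + var(r) to expose the variance penalty."""
    n = len(residuals)
    mean = sum(residuals) / n
    var = sum((r - mean) ** 2 for r in residuals) / n
    return mean**2 + var


residuals = [0.01, 0.02, 0.50, 0.03]  # one point with a large residual
w_exp = attention_weights(residuals, "exponential")
w_quad = attention_weights(residuals, "quadratic")

# Draw a small batch of point indices, favouring large residuals.
batch = random.Random(0).choices(range(len(residuals)), weights=w_quad, k=8)
print("exponential:", [round(w, 4) for w in w_exp])
print("quadratic:  ", [round(w, 4) for w in w_quad])
print("batch:", batch)
```

The exponential potential concentrates almost all attention on the single worst point, matching the "reduce the worst error" behaviour described above, while the quadratic potential spreads attention in proportion to the residual.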

Extending to Learning Whole Physics Maps

Modern AI solvers increasingly aim to learn not just one solution but an entire operator: a mapping from inputs such as initial conditions or forcing to full space–time fields. This is the goal of neural operator architectures like DeepONet, Fourier Neural Operators (FNO), and time‑conditioned U‑Nets. Here the difficulty comes from two directions: errors vary across different input functions, and they vary across space and time within each example. The authors adapt their framework to this two-level setting by combining two levels of adaptivity. First, they reweight spatial points within each example so that areas with high residuals matter more. Second, they use the accumulated residuals to preferentially resample the entire training examples that are hardest to learn. This hybrid scheme can be plugged directly into popular operator-learning models without redesigning their architecture.
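The two levels of adaptivity can be sketched as two separate bookkeeping steps. Everything below is a hypothetical illustration rather than the authors’ code: the within-example rule is an RBA-style moving average of residuals normalized by the example’s largest residual, the per-example score is an exponential moving average of mean residuals, and a small `floor` keeps easy examples from disappearing entirely. The toy residuals are held fixed, whereas in real training they would be recomputed from the model at each step.

```python
import random


def update_point_weights(weights, residuals, gamma=0.9, eta=0.1):
    """Level 1: reweight space-time points inside one training example.

    Hypothetical RBA-style rule: moving average of residuals normalized
    by the example's largest residual.
    """
    r_max = max(residuals) or 1.0  # avoid dividing by zero if all residuals vanish
    return [gamma * w + eta * r / r_max for w, r in zip(weights, residuals)]


def weighted_loss(weights, residuals):
    """Weighted mean squared residual for one example."""
    return sum(w * r**2 for w, r in zip(weights, residuals)) / len(residuals)


def update_example_scores(scores, example_ids, mean_residuals, decay=0.9):
    """Level 2: accumulate a running residual score for each training example."""
    for k, r in zip(example_ids, mean_residuals):
        scores[k] = decay * scores[k] + (1.0 - decay) * r
    return scores


def sample_examples(scores, batch_size, rng, floor=1e-3):
    """Draw example indices with probability proportional to score + floor."""
    weights = [s + floor for s in scores]
    return rng.choices(range(len(scores)), weights=weights, k=batch_size)


# Toy data: 3 training examples, 5 space-time points each; example 1 is "hard".
residuals = [
    [0.01, 0.02, 0.01, 0.02, 0.01],
    [0.05, 0.40, 0.90, 0.30, 0.05],
    [0.02, 0.03, 0.02, 0.05, 0.02],
]
point_weights = [[0.0] * 5 for _ in residuals]
scores = [0.0] * len(residuals)

for _ in range(20):  # stand-in for training iterations
    for k, r in enumerate(residuals):
        point_weights[k] = update_point_weights(point_weights[k], r)
    mean_res = [sum(r) / len(r) for r in residuals]
    scores = update_example_scores(scores, range(len(residuals)), mean_res)

losses = [weighted_loss(w, r) for w, r in zip(point_weights, residuals)]
batch = sample_examples(scores, batch_size=1000, rng=random.Random(0))
counts = [batch.count(k) for k in range(len(residuals))]
print("per-example weighted loss:", [f"{l:.2e}" for l in losses])
print("draw counts per example:", counts)  # the hard example 1 should dominate
```

Keeping the two levels as separate functions mirrors the point made above: neither step touches the network architecture, so both could in principle wrap any operator-learning model’s training loop.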

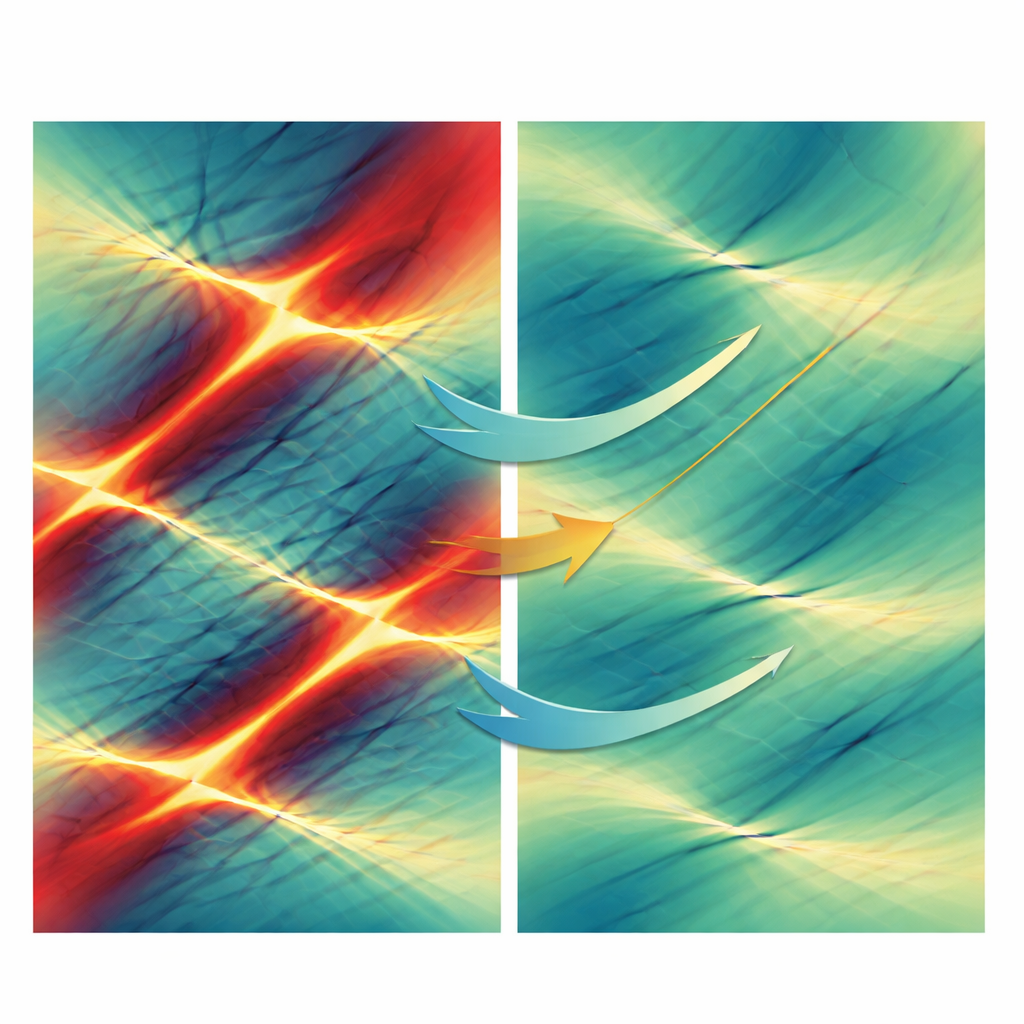

Sharper Details, Slower Error Growth

Across a wide suite of benchmarks, the vRBA approach consistently outperforms traditional training. For PINNs, the team tests classic nonlinear equations such as the Allen–Cahn, Burgers’, and Korteweg–de Vries equations. Some of these problems are known to defeat standard PINNs, either because of sharp internal layers or because of interacting wave pulses. With vRBA, the same networks converge faster and reach significantly lower error, and in difficult cases where the baseline essentially fails, the adaptive methods recover accurate solutions. For operator learning, the authors apply vRBA to bubble growth in liquids, high‑pressure shock tube flows, two‑dimensional turbulence, and wave propagation. Here, the main benefit is not only a lower final error but a much slower accumulation of error over time, which is crucial when a model’s output is repeatedly fed back in as its next input.

Cleaning Up Noise in the Learning Signal

The authors trace these gains to two main effects. First, by changing how training points are sampled or weighted, vRBA reduces the statistical noise in the estimated loss, so that random batches of points give a more reliable picture of how well the network is doing overall. This directly reduces the discretization error: the gap between the ideal continuous objective and its estimate from the finite set of points used in practice. Second, the method improves the signal‑to‑noise ratio of the gradients that drive learning, so that different regions of the domain “agree” more on which direction the parameters should move. As a result, networks escape slow, indecisive phases of training much sooner and enter a regime where the error drops quickly. The framework also clarifies when aggressive strategies (those that strongly penalize the largest residuals) can help and when they may destabilize training.
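Why residual-based sampling makes the loss estimate less noisy can be seen with textbook importance sampling, offered here as an analogy rather than as the paper’s analysis. If a batch of points is drawn with probabilities `probs` and each sampled value is divided by M·probs[j], the batch average is an unbiased estimate of the full-grid mean, and its variance depends on how closely `probs` tracks the values. The residual profile, grid size, batch size, and the 50/50 mixture with uniform sampling are all assumptions made for this sketch.

```python
import math


def estimator_variance(values, probs, batch_size):
    """Exact variance of the importance-sampling estimate of mean(values).

    Each of batch_size points j is drawn with probability probs[j]; the
    estimate averages values[j] / (M * probs[j]), which is unbiased for the
    full-grid mean whenever probs is positive wherever values is nonzero.
    """
    m = len(values)
    ratios = [v / (m * p) for v, p in zip(values, probs)]
    first = sum(p * f for p, f in zip(probs, ratios))  # equals mean(values)
    second = sum(p * f * f for p, f in zip(probs, ratios))
    return (second - first**2) / batch_size


# Hypothetical squared-residual profile: small everywhere except a sharp front at x = 0.5.
M = 1000
xs = [i / (M - 1) for i in range(M)]
res = [0.01 + math.exp(-(((x - 0.5) / 0.02) ** 2)) for x in xs]

uniform = [1.0 / M] * M
total = sum(res)
proportional = [r / total for r in res]  # ideal, but needs up-to-date residuals
mixed = [0.5 * u + 0.5 * p for u, p in zip(uniform, proportional)]

for name, probs in [("uniform", uniform), ("mixed", mixed), ("proportional", proportional)]:
    var = estimator_variance(res, probs, batch_size=64)
    print(f"{name:>12}: variance of batch-64 loss estimate = {var:.2e}")
```

Sampling exactly in proportion to the residual would drive the variance to zero, but in practice the residuals used for sampling lag behind the current network, so mixing with uniform sampling is a common safeguard that still removes most of the noise.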

What This Means for Future Scientific AI

For non-experts, the message is that smarter attention to where an AI solver is wrong can make it a far more trustworthy tool for science and engineering. Rather than relying on trial‑and‑error rules, this work offers a mathematical blueprint for steering neural networks toward the most informative parts of a problem, whether they are shock fronts, fine oscillations, or long-time behaviors. As scientific models grow larger and are used in safety‑critical settings, such principled strategies for reducing error and stabilizing learning will be essential for turning powerful neural networks into reliable scientific instruments.

Citation: Toscano, J.D., Chen, D.T., Oommen, V. et al. A variational framework for residual-based adaptivity in neural PDE solvers and operator learning. npj Artif. Intell. 2, 32 (2026). https://doi.org/10.1038/s44387-026-00084-4

Keywords: physics-informed neural networks, operator learning, adaptive sampling, scientific machine learning, partial differential equations