Clear Sky Science

Recent advances in intelligent wearable systems: from multiscale biomechanical features towards human motion intent prediction

Reading Bodies Before They Move

Imagine if a smartwatch, shoe insole, or lightweight exoskeleton could sense what you are about to do and quietly help you do it—steadying a step before you stumble, boosting a tired muscle, or letting a prosthetic hand move almost as naturally as a real one. This review article explains how scientists are building “intent-aware” wearable systems that read the body’s own mechanical and electrical signals to predict our next movements, opening new possibilities for rehabilitation, safer work, sports performance, virtual reality, and driving.

How the Body Hints at the Next Move

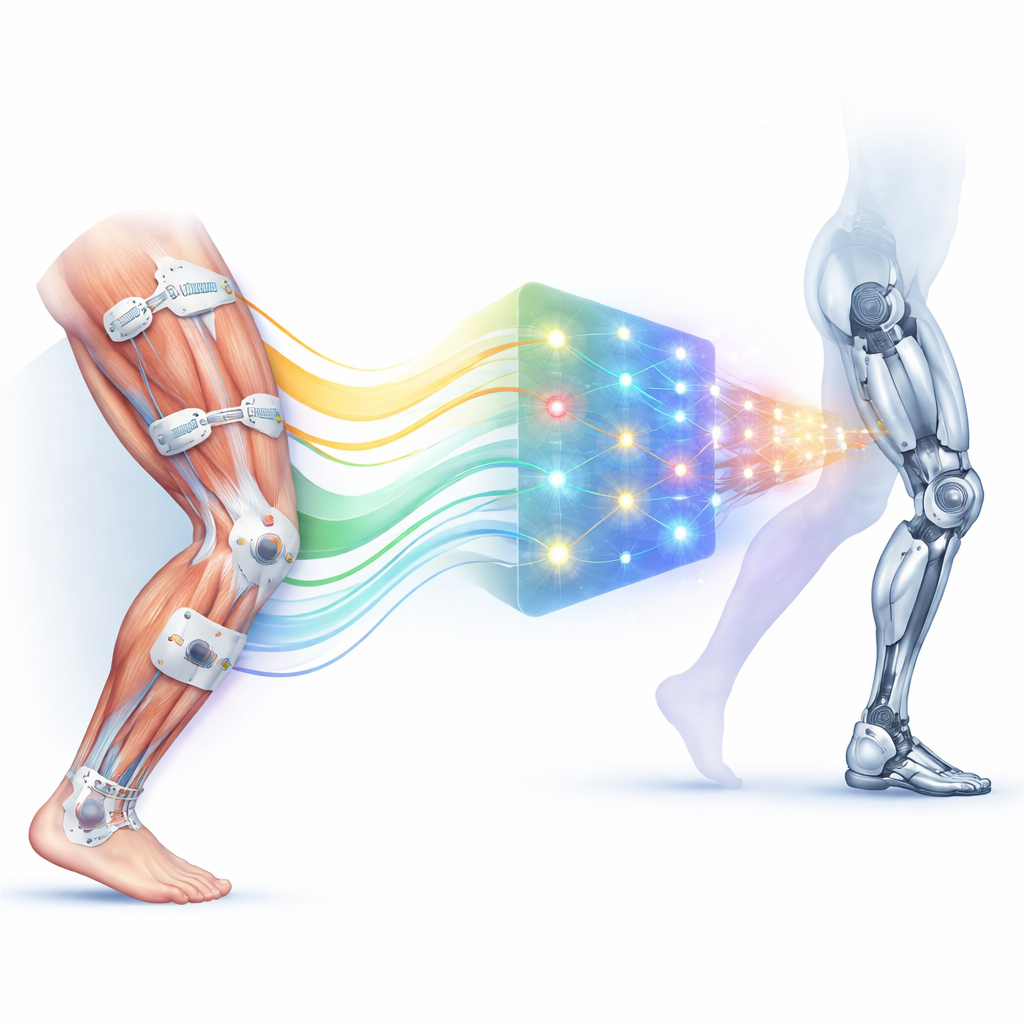

Our bodies leak clues about upcoming actions across several physical layers. At the whole-body level, subtle shifts in acceleration—often measured near the waist—reveal how stable our center of gravity is and when we are about to speed up, slow down, or change direction. Sudden changes in these patterns can precede a slip or a sharp turn by a fraction of a second, giving algorithms a window to predict a fall or a fast maneuver. Zooming in to individual joints, changes in angles and angular speeds at the hip, knee, ankle, shoulder, elbow, and fingers form rich motion “signatures” for walking, lifting, or grasping. At the deepest level, tiny electrical bursts in muscles, captured by surface electrodes on the skin, appear tens to hundreds of milliseconds before visible movement, providing an early warning of intent that is especially valuable for controlling prosthetic limbs and exoskeletons.
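To make the "early warning" idea concrete, here is a minimal sketch of threshold-based onset detection on a muscle signal. It is an illustration under stated assumptions, not the method of any system covered in the review: the signal is synthetic (a quiet resting phase followed by a stronger burst), and the function names, the 1000 Hz sampling rate, the 20 ms smoothing window, and the "baseline mean plus three standard deviations" threshold are all choices made for this example.

```python
import math
from statistics import mean, stdev

def synthetic_emg(fs_hz=1000, duration_s=1.0, burst_start_s=0.5):
    """Hypothetical stand-in for surface EMG: a weak oscillation at rest,
    then a much stronger one once the muscle starts to activate."""
    samples = []
    for i in range(int(duration_s * fs_hz)):
        t = i / fs_hz
        amplitude = 0.5 if t >= burst_start_s else 0.01
        samples.append(amplitude * math.sin(2 * math.pi * 50 * t))
    return samples

def detect_onset(emg, fs_hz, baseline_s=0.2, window_s=0.02, k=3.0):
    """Return the time in seconds at which the smoothed, rectified signal
    first exceeds baseline mean + k * baseline std, or None if it never does."""
    rectified = [abs(x) for x in emg]
    win = max(1, int(window_s * fs_hz))

    # Trailing moving average: uses only past samples, so it could run live.
    smoothed = []
    running = 0.0
    for i, x in enumerate(rectified):
        running += x
        if i >= win:
            running -= rectified[i - win]
        smoothed.append(running / min(i + 1, win))

    # The first baseline_s seconds are assumed to be rest.
    n_base = int(baseline_s * fs_hz)
    threshold = mean(smoothed[:n_base]) + k * stdev(smoothed[:n_base])

    for i in range(n_base, len(smoothed)):
        if smoothed[i] > threshold:
            return i / fs_hz
    return None

emg = synthetic_emg()
onset = detect_onset(emg, fs_hz=1000)
print(f"EMG onset detected at {onset:.3f} s")
```

If visible movement began a fraction of a second after the detected onset, that gap would be the window a prosthetic hand or exoskeleton controller has to prepare its response. Real recordings are far noisier than this toy signal, so practical systems usually add filtering and more careful thresholds.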

Smart Sensors Woven Into Daily Life

To capture these signals, engineers are spreading a network of small sensors across the body. Inertial units track acceleration and rotation of body segments; flexible strain and pressure sensors measure joint bending and foot forces; bioelectric sensors monitor muscle activity, brain signals, and heart rhythms; and even optical, acoustic, and chemical sensors watch blood flow, tissue changes, and sweat chemistry. These components are packaged into familiar objects—watches, armbands, smart shoes and gloves—as well as into electronic textiles and skin-like patches that conform to joints and muscles. By layering sensors at the body, joint, and muscle levels, designers can follow motion from the first neural spark in muscle fibers, through the torque at a joint, all the way to changes in whole-body balance.

Teaching Machines to Read Human Motion

Collecting data is only half the task; the other half is interpreting it fast enough to be useful. Earlier systems relied on hand-crafted rules and classical machine-learning methods that looked at carefully chosen features, such as average muscle activity or peak joint angle, and then assigned each pattern to a known action. These methods are efficient and run well on small, battery-powered devices, but they struggle when motions become more varied or noisy. More recently, deep-learning approaches (convolutional, recurrent, and transformer-style neural networks) have been trained to spot complex patterns across time and across multiple sensors at once. They can fuse acceleration, pressure, and muscle signals to recognize gait phases, predict joint angles ahead of time, or estimate the torque a person will soon generate, often with timing errors of only a few tens of milliseconds.
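The classical "hand-crafted features" approach can be sketched in a few lines. The example below is a toy illustration, not a pipeline from the review: it computes the two features named above (average muscle activity and peak joint angle) for short sensor windows and assigns a new window to the nearest class average. The action labels, the sample values, and the choice of a nearest-centroid classifier are all assumptions made for this sketch.

```python
from math import dist

def extract_features(emg_window, knee_angle_window):
    """Two hand-crafted features: mean absolute EMG and peak knee angle."""
    mean_abs_emg = sum(abs(x) for x in emg_window) / len(emg_window)
    peak_angle = max(knee_angle_window)
    return (mean_abs_emg, peak_angle)

def fit_centroids(labeled_features):
    """Average the feature vectors of each action label."""
    totals = {}
    for label, feats in labeled_features:
        running, count = totals.get(label, ([0.0] * len(feats), 0))
        totals[label] = ([r + f for r, f in zip(running, feats)], count + 1)
    return {
        label: tuple(r / count for r in running)
        for label, (running, count) in totals.items()
    }

def predict(centroids, feats):
    """Pick the label whose centroid is closest in feature space."""
    return min(centroids, key=lambda label: dist(centroids[label], feats))

# Made-up training windows: (label, EMG samples, knee angles in degrees).
training = [
    ("walk", [0.10, -0.20, 0.15, -0.10], [5, 20, 60, 30]),
    ("walk", [0.12, -0.18, 0.10, -0.12], [8, 25, 65, 28]),
    ("stand_up", [0.40, -0.50, 0.45, -0.35], [90, 70, 40, 10]),
    ("stand_up", [0.38, -0.42, 0.50, -0.40], [95, 75, 35, 5]),
]
centroids = fit_centroids(
    [(label, extract_features(emg, angle)) for label, emg, angle in training]
)

new_window = extract_features([0.11, -0.15, 0.13, -0.10], [6, 22, 58, 27])
print(predict(centroids, new_window))
```

Because so little arithmetic is involved, a method like this can run on a small battery-powered device. It also shows the weakness described above: two numbers per window cannot capture varied or noisy motions, and here the joint angle dominates the distance simply because its values are larger, so a practical version would rescale the features first.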

From Clinics and Factories to Stadiums and Simulators

These intent-predicting wearables are moving from lab prototypes into many real-world settings. In rehabilitation, clothing-like exoskeletons and passive knee braces use joint angles, forces, and muscle activity to give just enough assistance for walking or therapy exercises, adapting to each patient’s progress. In workplaces where people and industrial robots share tasks, body acceleration and muscle sensors can flag fatigue, foresee unsafe motions, and let robots anticipate and coordinate with their human partners. Athletes benefit from electronic skins (e-skins) and lightweight motion suits that track joint loading and muscle use to fine-tune technique and reduce injury risk. In virtual reality, smart rings and gloves use finger motion and muscle cues to deliver more natural grasping and touch, while in cars, head and limb sensors help anticipate braking, lane changes, or drowsiness to support driver-assistance systems.

Obstacles on the Road to Everyday Use

Despite impressive accuracy in controlled tests, bringing these systems into daily life is challenging. Real environments are messy: sweat, sliding electrodes, clothing shifts, and electrical noise can distort signals, while people vary widely in body shape, strength, and movement style. That means models trained on one group often perform poorly on another or in new tasks. Flexible sensor materials must also survive continuous bending and stretching without losing sensitivity, and compact power supplies must keep multi-sensor systems running for long periods. On top of this, the rich streams of physiological and motion data raise serious privacy questions, since they can reveal health status, habits, and even emotional states if misused or leaked.

What This Means for the Future

The authors conclude that predicting human motion intent is no longer science fiction, but turning it into a safe, trusted everyday technology will require progress on several fronts at once. Smarter learning methods must adapt to each user and stay robust when signals degrade; sensor materials need to be durable, comfortable, and energy-efficient; and strong safeguards are needed to secure personal movement and health data. If these pieces come together, future wearables may form a seamless “perception–decision–action” loop around the body, quietly understanding what we aim to do next and offering help—whether that means stabilizing a step, amplifying muscle power, guiding recovery, or deepening our connection with machines and virtual worlds.

Citation: Chen, S., Peng, C., Yang, B. et al. Recent advances in intelligent wearable systems: from multiscale biomechanical features towards human motion intent prediction. npj Artif. Intell. 2, 33 (2026). https://doi.org/10.1038/s44387-026-00083-5

Keywords: wearable sensors, human motion prediction, biomechanics, exoskeletons, prosthetics