Clear Sky Science

AIM review tool: artificial intelligence for smarter systematic review screening

Why Sorting Scientific Studies Needs a Rethink

Every day, scientists publish thousands of new studies—far more than any team of researchers can comfortably read. When health guidelines or big scientific decisions depend on carefully summarizing this evidence in systematic reviews, experts can spend months just sorting which papers matter. This article introduces the AIM Review Tool, a web-based system that uses artificial intelligence in your browser to help researchers find the important studies faster, with less repetitive work and more transparency.

Turning a Paper Flood into a Manageable Stream

Systematic reviews aim to answer focused questions, such as whether a treatment works, by searching multiple databases and then screening every potentially relevant paper. That screening step is slow and exhausting: teams may begin with tens of thousands of titles and abstracts and must manually decide which ones to read in full. Existing AI tools can help prioritize which records to look at first, but they often rely on closed, opaque algorithms or require complex software setups. AIM Review was designed to be open, configurable, and easy to run directly in a web browser, so that researchers can better understand and control how the AI makes its decisions.

How the Tool Learns from Human Decisions

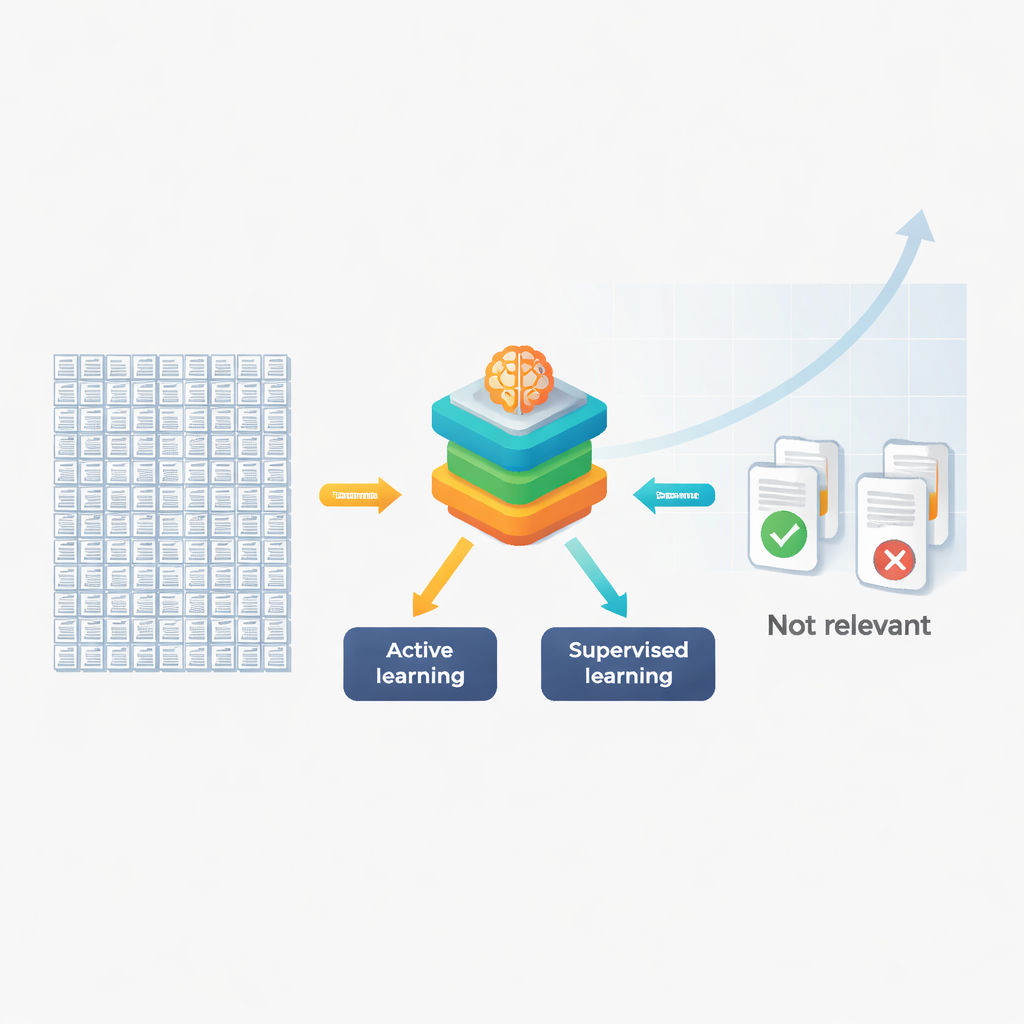

AIM Review combines two main kinds of machine learning. The first, active learning, supports real-time prioritization. As reviewers label papers as “relevant” or “not relevant,” the system learns patterns in the wording of titles and abstracts and reorders the remaining papers so that those most likely to be relevant appear earlier in the screening queue. Under the hood, the software converts text into numeric fingerprints using several methods, from simple word counts to advanced language models, and feeds these into classifiers such as logistic regression or support vector machines. By stacking or fusing these different text representations, AIM Review can capture both basic keywords and deeper meaning in the language.
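To make the active-learning loop described above more concrete, here is a minimal, dependency-free sketch. It assumes plain word counts as the text "fingerprint", an L2-regularized logistic regression trained by gradient descent, and a "show the most-likely-relevant record next" ordering rule. All function names and the toy records are hypothetical; AIM Review runs in the browser, and its actual feature extractors, classifiers, and query strategy may differ.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase a title/abstract and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def featurize(text, vocab):
    """Word-count vector over a fixed vocabulary: a very simple text fingerprint."""
    counts = Counter(tokenize(text))
    return [counts[w] for w in vocab]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def sigmoid(z):
    """Numerically safe logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logreg(X, y, epochs=200, lr=0.1, l2=0.01):
    """Fit an L2-regularized logistic regression with batch gradient descent."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(dot(w, xi) + b) - yi
            for j, v in enumerate(xi):
                grad_w[j] += err * v
            grad_b += err
        w = [wj - lr * (gj / n + l2 * wj) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def simulate_screening(records, true_labels, seed):
    """Replay a screening session: retrain after every decision, then show the
    unlabeled record the model currently scores as most likely relevant."""
    vocab = sorted({w for r in records for w in tokenize(r)})
    X = [featurize(r, vocab) for r in records]
    labeled = {i: true_labels[i] for i in seed}
    order = list(seed)
    while len(labeled) < len(records):
        idx = list(labeled)
        w, b = train_logreg([X[i] for i in idx], [labeled[i] for i in idx])
        unlabeled = [i for i in range(len(records)) if i not in labeled]
        best = max(unlabeled, key=lambda i: sigmoid(dot(w, X[i]) + b))
        labeled[best] = true_labels[best]  # the simulated reviewer reveals the label
        order.append(best)
    return order


# Toy example: 1 = relevant, 0 = not relevant.
records = [
    "randomized trial of drug for depression",
    "survey of software engineering practices",
    "drug trial reduces depression symptoms",
    "compiler optimization for software",
    "placebo controlled trial depression drug",
    "soil pollution and crop yields",
]
labels = [1, 0, 1, 0, 1, 0]
order = simulate_screening(records, labels, seed=[0, 1])
print(order)
```

Retraining after every single decision keeps the sketch short; a real tool would more likely retrain in small batches and use richer text representations.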

Cutting Workload in Real Systematic Reviews

The authors tested AIM Review on six completed systematic reviews across psychology, psychiatry, computer science, endocrinology, and environmental health. In simulated screening runs, active learning greatly reduced how many papers needed to be checked by hand while still finding at least 95% of the truly relevant studies. Depending on how rare relevant studies were, the “work saved” ranged from about 20% to as high as 95%. For example, in a review with more than 16,000 papers but very few relevant ones, the system could have reduced manual screening from every record to roughly 2,400 while still capturing almost every important study. In fields where many studies turn out to be relevant, the savings were smaller but still meaningful.
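One common way to express such savings is "work saved over sampling" at 95% recall (WSS@95): the share of records a reviewer never has to open, minus the 5% that simply screening a random 95% of records would also save. The sketch below assumes that definition; the article does not spell out the exact formula behind its "work saved" figures, and the function names and toy ranking are hypothetical.

```python
import math


def records_needed(ranked_labels, target_recall=0.95):
    """How many records must be screened, in ranked order, to reach the target recall.

    ranked_labels: 1 for relevant, 0 for irrelevant, in the order the tool showed them.
    """
    total_relevant = sum(ranked_labels)
    # Small tolerance so that e.g. 0.95 * 20 counts as exactly 19 despite float rounding.
    needed = math.ceil(target_recall * total_relevant - 1e-9)
    if needed <= 0:
        return 0
    found = 0
    for n_screened, label in enumerate(ranked_labels, start=1):
        found += label
        if found >= needed:
            return n_screened
    return len(ranked_labels)


def work_saved_over_sampling(ranked_labels, target_recall=0.95):
    """WSS@r: share of records left unscreened, minus the (1 - r) share that
    screening a random sample would already save when stopping at recall r."""
    n = len(ranked_labels)
    screened = records_needed(ranked_labels, target_recall)
    return (n - screened) / n - (1 - target_recall)


# Toy ranking: 1,000 records, 20 relevant; 19 of them appear at the very top.
ranking = [1] * 19 + [0] * 80 + [1] + [0] * 900
print(records_needed(ranking), round(work_saved_over_sampling(ranking), 3))
```

As a rough check with the article's rounded figures for the large review (about 16,000 records, roughly 2,400 screened), the unscreened share is (16,000 − 2,400) / 16,000 = 0.85. Because the study's own metric may be defined differently, this is only an order-of-magnitude comparison.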

Predicting Relevance to Semi-Automate Screening

Active learning still assumes that humans will eventually look at most of the high-priority records. To go further, AIM Review adds a supervised learning mode based on nested cross-validation, a rigorous way of building and testing models. After reviewers manually label a subset of the papers (for example, 20%), the tool trains and tunes models to predict which of the remaining 80% are likely to be relevant. Across case studies, these models reached balanced accuracies between about 75% and 87%. Balanced accuracy averages the share of relevant papers correctly caught and the share of irrelevant papers correctly rejected, so these scores mean the models were reasonably good at both tasks. Different strategies offered trade-offs: stacking multiple models often delivered slightly higher accuracy but risked overfitting, while simply fusing all text features tended to generalize better to new material.
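The idea behind nested cross-validation can be sketched in a few dozen lines: an inner loop picks a model setting using only training data, and an outer loop scores that tuned model on records it never saw, here using balanced accuracy. As a loud assumption, a k-nearest-neighbour classifier (with the neighbour count k as the tuned setting) stands in for the logistic regression or support vector machine models the tool uses, and the toy data are two well-separated groups rather than real text features. All names are hypothetical.

```python
import math


def balanced_accuracy(y_true, y_pred):
    """Average of sensitivity (relevant caught) and specificity (irrelevant rejected)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    return (sensitivity + specificity) / 2


def knn_predict(train_X, train_y, x, k):
    """Stand-in classifier: majority vote among the k nearest training points."""
    nearest = sorted(range(len(train_X)), key=lambda i: math.dist(train_X[i], x))[:k]
    return 1 if 2 * sum(train_y[i] for i in nearest) > k else 0


def make_folds(indices, n_folds):
    """Deterministic interleaved split of a list of indices into n_folds folds."""
    return [indices[f::n_folds] for f in range(n_folds)]


def score(X, y, fit_idx, test_idx, k):
    """Train on fit_idx, predict test_idx, return balanced accuracy."""
    train_X = [X[i] for i in fit_idx]
    train_y = [y[i] for i in fit_idx]
    preds = [knn_predict(train_X, train_y, X[j], k) for j in test_idx]
    return balanced_accuracy([y[j] for j in test_idx], preds)


def inner_cv(X, y, train_idx, k, n_inner):
    """Mean validation score for setting k, using only the outer-training records."""
    scores = []
    for val_idx in make_folds(train_idx, n_inner):
        fit_idx = [i for i in train_idx if i not in val_idx]
        scores.append(score(X, y, fit_idx, val_idx, k))
    return sum(scores) / len(scores)


def nested_cv(X, y, k_grid=(1, 3, 5), n_outer=5, n_inner=3):
    """Outer loop estimates performance; inner loop tunes k without seeing the outer test fold."""
    all_idx = list(range(len(X)))
    outer_scores = []
    for test_idx in make_folds(all_idx, n_outer):
        train_idx = [i for i in all_idx if i not in test_idx]
        best_k = max(k_grid, key=lambda k: inner_cv(X, y, train_idx, k, n_inner))
        outer_scores.append(score(X, y, train_idx, test_idx, best_k))
    return sum(outer_scores) / len(outer_scores)


# Toy data: two well-separated groups standing in for relevant (1) and irrelevant (0) records.
X = [(5 + 0.1 * i, 5.0) if i % 2 else (0.1 * i, 0.0) for i in range(20)]
y = [i % 2 for i in range(20)]
print(nested_cv(X, y))
```

The key design point is that the outer test fold never influences which setting is chosen, so the outer score is a less optimistic estimate of how the tuned model will behave on unscreened records.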

From Manual Drudgery to Guided, Transparent AI Help

AIM Review is organized into three connected modules: a labeling app to screen papers with active learning, an agreement app to compare decisions between different reviewers, and a prediction app to train supervised models and label unscreened records. Everything runs locally in the browser, which protects data privacy and avoids complicated installations. The authors stress that the tool does not replace expert judgment. Instead, it helps teams spend less time on repetitive sorting and more time evaluating the quality and meaning of the best candidate studies. Their results suggest that, when used carefully, browser-based AI can make large, trustworthy evidence summaries more feasible—especially in areas where the volume of research would otherwise overwhelm human reviewers.
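The article does not say which statistic the agreement app reports when comparing two reviewers. A widely used choice is Cohen's kappa, which corrects raw agreement for the agreement expected by chance; the sketch below assumes that statistic and also lists the records two reviewers labeled differently. The record IDs, label strings, and function names are illustrative only.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Agreement between two reviewers, corrected for the agreement expected by chance."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    categories = set(labels_a) | set(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    if expected == 1:
        # Both reviewers used one identical label throughout; kappa is undefined,
        # and reporting perfect agreement here is only a convention.
        return 1.0
    return (observed - expected) / (1 - expected)


def conflicts(record_ids, labels_a, labels_b):
    """Records the two reviewers labeled differently, e.g. to discuss and resolve."""
    return [rid for rid, a, b in zip(record_ids, labels_a, labels_b) if a != b]


ids = ["rec1", "rec2", "rec3", "rec4"]
reviewer_1 = ["include", "include", "exclude", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "exclude"]
print(cohens_kappa(reviewer_1, reviewer_2), conflicts(ids, reviewer_1, reviewer_2))
```

Here the reviewers agree on three of four records (75%), but because half that agreement would be expected by chance, kappa comes out noticeably lower than the raw agreement.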

What This Means for Future Evidence Gathering

For a layperson, the key message is that smarter software can reduce the hidden, labor-intensive steps behind evidence-based medicine and policy. By learning from reviewers’ decisions and rigorously testing its own predictions, AIM Review offers a practical way to speed up systematic reviews without turning them into a black box. If widely adopted, such tools could help ensure that guidelines, health advice, and scientific syntheses keep pace with the rapidly expanding research landscape.

Citation: Mena, S., Rituerto-González, E., Coutts, F. et al. AIM review tool: artificial intelligence for smarter systematic review screening. npj Artif. Intell. 2, 25 (2026). https://doi.org/10.1038/s44387-026-00080-8

Keywords: systematic reviews, machine learning, literature screening, artificial intelligence tools, evidence synthesis