Clear Sky Science

Machine learning discovers new champion codes

Why this matters for your digital life

Every photo you send, movie you stream, or signal beamed across space is quietly protected by error-correcting codes—mathematical tricks that spot and fix glitches in data. Making these codes even better means fewer dropped calls, faster internet, more reliable deep-space messages and denser data storage. This paper shows how modern artificial intelligence, the same kind of technology behind large language models, can help discover record‑breaking “champion” codes that outperform what human experts had previously found.

Keeping messages safe from noise

When information travels, whether over Wi‑Fi, undersea cables, or between Earth and distant spacecraft, it can be distorted by noise. Error-correcting codes guard against this by adding carefully designed extra bits so that mistakes can be detected and often repaired. A key measure of a code’s strength is its minimum Hamming distance: loosely speaking, the smallest number of symbols that an adversary or a noisy channel would have to change to turn one valid codeword into another. Codes that achieve the largest known distance for their size are dubbed champion codes. Finding such champions is fiendishly difficult: checking a single candidate code exactly can require an enormous brute‑force search that grows explosively with problem size.
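
To make the idea of minimum distance concrete, here is a minimal Python sketch that computes it by brute force for a tiny binary code. The function names and the choice of the classic [7,4] Hamming code as the example are illustrative assumptions, not taken from the paper.

```python
from itertools import product


def hamming_distance(u, v):
    """Number of positions in which two equal-length words differ."""
    if len(u) != len(v):
        raise ValueError("words must have the same length")
    return sum(a != b for a, b in zip(u, v))


def encode(message, generator, q=2):
    """Multiply a message vector by a generator matrix, modulo a prime q."""
    n = len(generator[0])
    return tuple(
        sum(m * row[j] for m, row in zip(message, generator)) % q
        for j in range(n)
    )


def minimum_distance(generator, q=2):
    """Smallest Hamming distance between any two distinct codewords.

    Enumerates all q**k codewords, so it is only usable for tiny codes.
    Assumes the rows of `generator` are linearly independent.
    """
    k = len(generator)
    codewords = [encode(m, generator, q) for m in product(range(q), repeat=k)]
    return min(
        hamming_distance(u, v)
        for i, u in enumerate(codewords)
        for v in codewords[i + 1:]
    )


# Illustrative example: a generator matrix of the [7,4] binary Hamming code.
HAMMING_7_4 = [
    [1, 0, 0, 0, 1, 1, 0],
    [0, 1, 0, 0, 1, 0, 1],
    [0, 0, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

print(minimum_distance(HAMMING_7_4))  # prints 3
```

The loop visits every one of the q^k codewords, which is why this direct approach becomes impractical as codes grow.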

Letting a smart model guess what is hard to compute

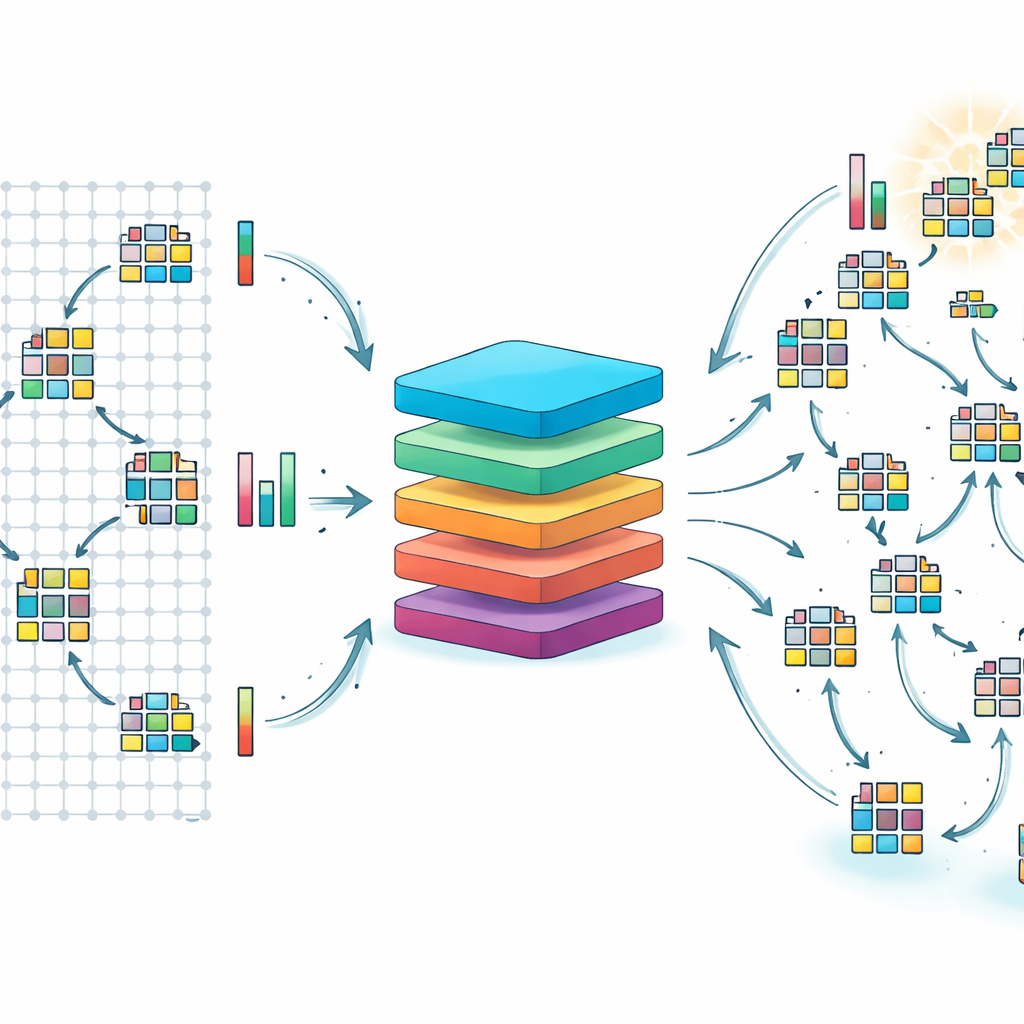

The authors focus on a mathematically rich family called generalised toric codes, which are built from patterns of points on a grid. Instead of exhaustively testing every possibility, they train a transformer, a neural network architecture widely used in language models, to estimate the strength (minimum distance) of a code directly from its defining matrices. Using millions of examples over two finite fields, F7 and F8 (with seven and eight elements respectively), the model learns to predict distances that typically fall within three units of the true value, with mean absolute errors close to one. That is accurate enough to tell promising candidates from weak ones without running the slow exact algorithm each time.
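
As a rough illustration of how a set of grid points can define a code, the sketch below uses the standard textbook construction of a generalised toric code over F7: each grid point (a, b) contributes the monomial x^a y^b, evaluated at every pair (x, y) of nonzero field elements. This is an assumption about the construction, the function names are hypothetical, and the exact conventions used in the paper may differ. F8 is omitted because it is not a prime field and needs polynomial arithmetic rather than integers modulo 8.

```python
from itertools import product

Q = 7  # prime field size, so arithmetic is simply "mod 7"


def toric_generator(points, q=Q):
    """One row per grid point (a, b): the monomial x^a * y^b evaluated
    at every (x, y) with x and y nonzero in F_q."""
    torus = [(x, y) for x in range(1, q) for y in range(1, q)]
    return [
        [pow(x, a, q) * pow(y, b, q) % q for x, y in torus]
        for a, b in points
    ]


def minimum_distance(generator, q=Q):
    """Exact minimum distance of a linear code by brute force.

    For a linear code this equals the smallest number of nonzero
    entries in any nonzero codeword. The cost grows as q**k.
    """
    k, n = len(generator), len(generator[0])
    best = n
    for coeffs in product(range(q), repeat=k):
        if not any(coeffs):
            continue  # skip the all-zero message
        weight = sum(
            1
            for j in range(n)
            if sum(c * row[j] for c, row in zip(coeffs, generator)) % q != 0
        )
        best = min(best, weight)
    return best


# Illustrative point set: the corners of a small triangle on the grid.
points = [(0, 0), (1, 0), (0, 1)]
G = toric_generator(points)
print(len(G), len(G[0]))    # prints 3 36
print(minimum_distance(G))  # prints 30
```

With only three points the exact check visits 7^3 = 343 messages; each extra point multiplies that by seven, which is the cost a fast learned predictor is meant to avoid.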

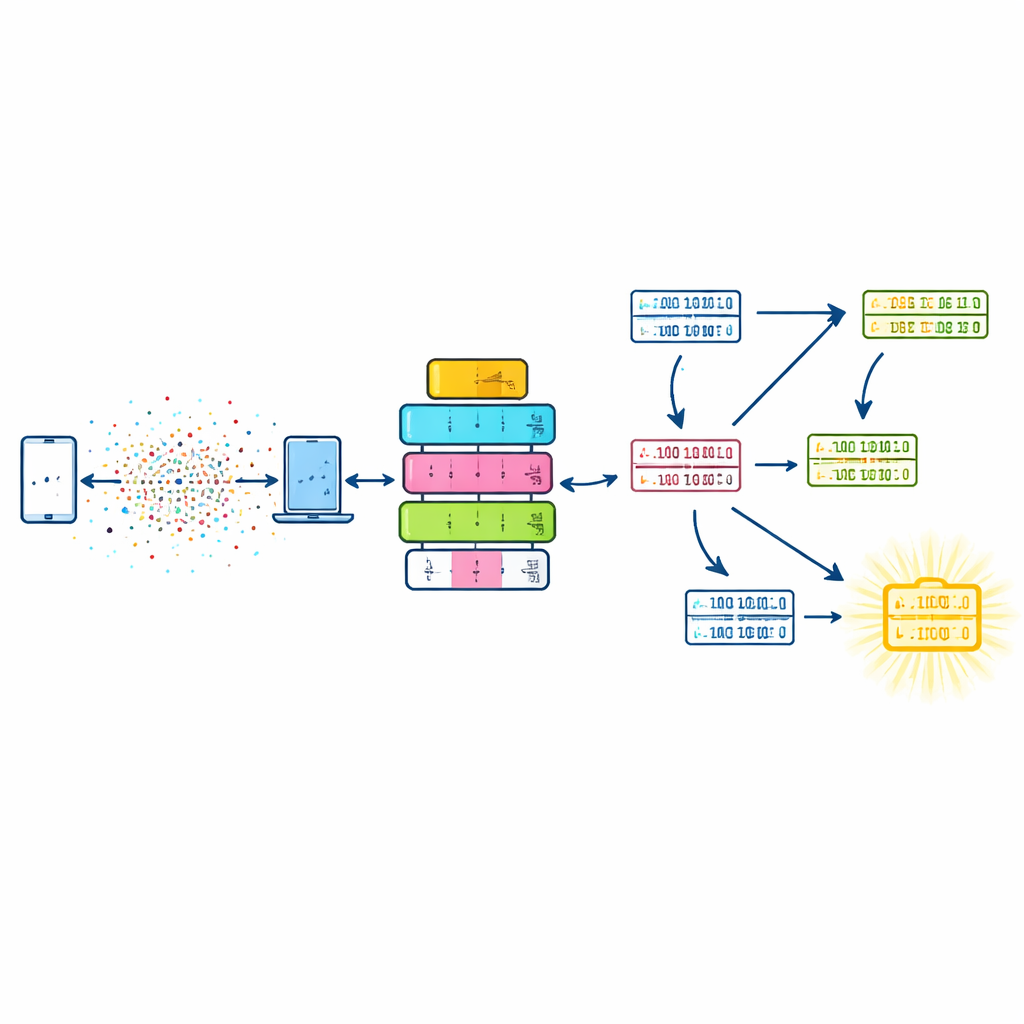

Evolution in the space of codes

To turn these fast predictions into new discoveries, the team couples the transformer with a genetic algorithm—an optimisation method inspired by evolution. Here, each individual in the population is a set of grid points that defines a code. Generations proceed by selecting better individuals, recombining their point sets, and occasionally mutating them to explore new regions. The fitness of a candidate is based on the model’s predicted distance, nudged to prefer codes of a target size and to avoid rediscovering the same solutions. Only when the prediction suggests a code might be outstanding do the researchers expend the heavy computation needed to verify its true distance exactly.
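
The evolutionary loop described above can be sketched in a few lines of Python. Everything here is a simplified assumption for illustration: `surrogate_distance` is a toy placeholder standing in for the trained transformer, and the penalty weights, population size, and selection scheme are hypothetical rather than the authors' settings.

```python
import random

# Grid of candidate exponent pairs for q = 7 (exponents 0..5), an assumption.
GRID = [(a, b) for a in range(6) for b in range(6)]


def surrogate_distance(points):
    """Toy stand-in for the learned predictor. NOT the paper's model."""
    worst_degree = max(a + b for a, b in points)
    return max(1, 36 - 6 * worst_degree)


def fitness(points, target_size, seen, size_weight=2.0, repeat_penalty=5.0):
    """Predicted distance, nudged toward a target size and away from
    candidates that have already been recorded."""
    score = surrogate_distance(points)
    score -= size_weight * abs(len(points) - target_size)
    if points in seen:
        score -= repeat_penalty
    return score


def crossover(parent_a, parent_b, rng):
    """Child draws its points from the union of both parents."""
    pool = sorted(parent_a | parent_b)
    size = rng.choice([len(parent_a), len(parent_b)])
    return frozenset(rng.sample(pool, min(size, len(pool))))


def mutate(points, rng, rate=0.2):
    """Occasionally swap one point for a random grid point."""
    points = set(points)
    if rng.random() < rate:
        points.remove(rng.choice(sorted(points)))
        points.add(rng.choice(GRID))
    return frozenset(points)


def evolve(target_size=5, pop_size=20, generations=30, seed=0):
    rng = random.Random(seed)
    population = [
        frozenset(rng.sample(GRID, target_size)) for _ in range(pop_size)
    ]
    seen = set()
    for _ in range(generations):
        ranked = sorted(
            population,
            key=lambda p: fitness(p, target_size, seen),
            reverse=True,
        )
        seen.update(ranked[: pop_size // 4])  # remember the current best
        parents = ranked[: pop_size // 2]     # keep the fitter half
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = parents + children
    return sorted(population, key=surrogate_distance, reverse=True)


best = evolve()[0]
print(sorted(best), surrogate_distance(best))
```

In a full pipeline, only the top-ranked candidates from such a loop would be passed to an exact minimum-distance computation, so the expensive check runs rarely.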

Beating random search and finding new record holders

Applied to codes over F7, this combined approach reliably rediscovers champion toric codes that had previously required painstaking mathematical and computational work. More impressively, for the more complex F8 setting, where earlier methods stalled because the search space is astronomically large, the method uncovers over 500 champion candidates and confirms at least six that were previously unknown. By comparing against a random search, the authors show that their strategy can roughly halve the number of expensive exact evaluations in the hardest regimes, a meaningful saving when each check may be very costly.

What this means going forward

To a non‑specialist, the takeaway is that AI can guide us through immense mathematical landscapes that would otherwise be out of reach. By learning the rough terrain—where good codes are likely to be—and steering an evolutionary search toward the most promising regions, the transformer–genetic algorithm combo turns a brute‑force needle‑in‑a‑haystack problem into a more focused treasure hunt. The authors expect that with larger datasets, better models, and further tuning, similar techniques could accelerate the design of many kinds of error‑correcting codes, including those for future communication networks and even quantum computers.

Citation: He, YH., Kasprzyk, A.M., Le, Q. et al. Machine learning discovers new champion codes. npj Artif. Intell. 2, 37 (2026). https://doi.org/10.1038/s44387-026-00077-3

Keywords: error-correcting codes, machine learning, genetic algorithms, digital communication, coding theory