Clear Sky Science

AI agent in healthcare: applications, evaluations, and future directions

Why smart digital helpers in medicine matter

Hospitals are drowning in data, doctors are overloaded, and patients want clearer answers about their health. A new kind of artificial intelligence, built on large language models that can read and write like humans, is now being turned into “AI agents” that can reason through multi-step tasks. This review article explains how these digital helpers are starting to assist with diagnosis, treatment decisions, paperwork, patient conversations, and even medical education, and it spells out what must be done to keep them accurate, fair, and safe.

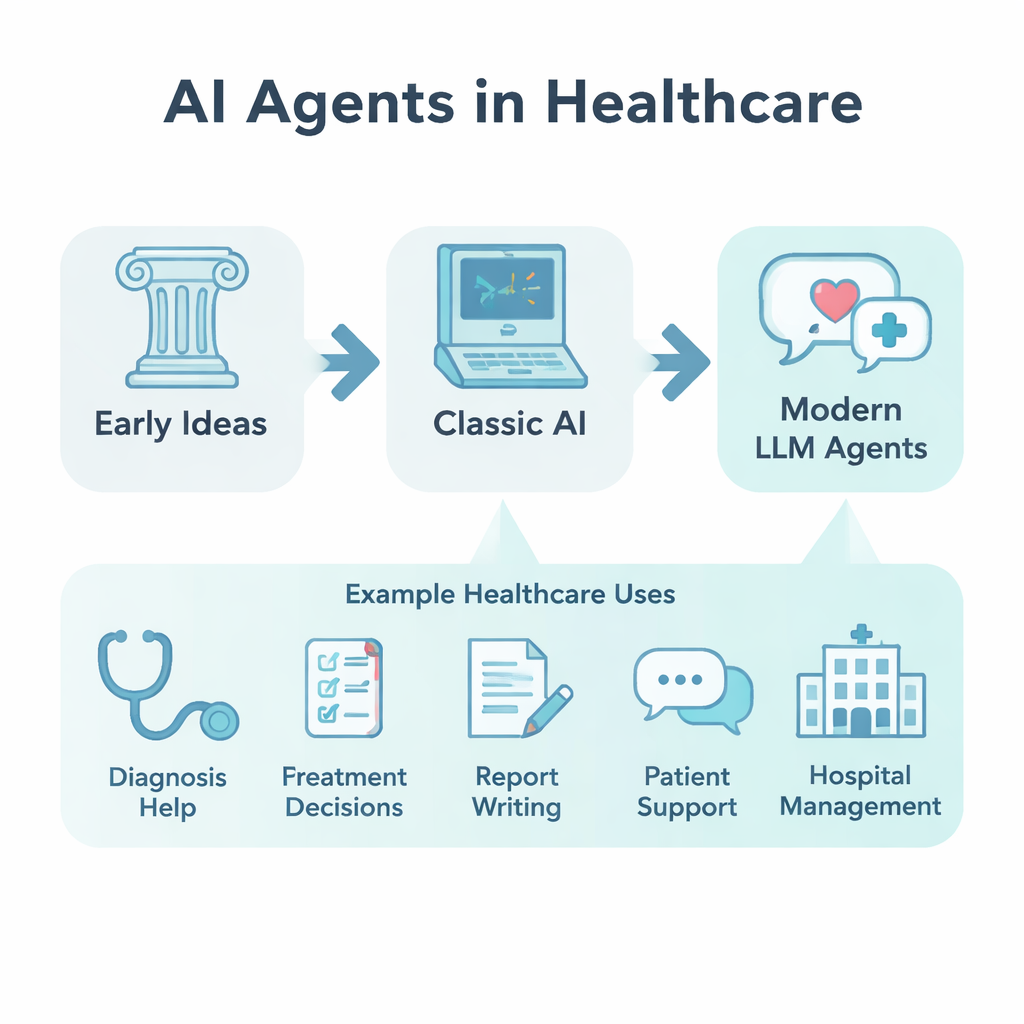

From thought experiments to hands-on digital coworkers

The idea of an “agent” that can act with goals and intentions goes back to ancient philosophy. Modern versions arrived with early artificial intelligence, expert systems, and later machine learning and deep learning, which allowed computers to learn patterns from data. The breakthrough of large language models (LLMs) after 2022 pushed things further: instead of just answering questions, these models can now plan, remember previous steps, and call other software tools. In healthcare, that means an AI agent can read medical records, look up guidelines, draft notes, and suggest next actions, behaving less like a search engine and more like a junior digital coworker.

What makes these agents different from ordinary AI

According to the definition adopted in the article, an AI agent in healthcare is more than a single model. It has an LLM at its core, surrounded by four key abilities: planning, memory, tool use, and self-reflection. Planning lets it break a complex medical task into smaller steps. Memory allows it to keep track of a patient’s story or a long decision process. Tool use means it can, for example, pull lab results from an electronic record or search a medical database. Self-reflection lets it check and revise its own answers. Together with strong language skills and a growing capacity for logical reasoning, these abilities make agents flexible enough to switch across tasks and medical specialties.
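To make the four abilities concrete, here is a minimal Python sketch of how they might fit together around a language model. It is an illustration only, not the design described in the review: the `Agent` class, the `PLAN:` and `REVIEW:` prompt prefixes, the `fake_llm` stub, and the `get_labs` tool are all hypothetical names invented for this example, and the lab value is placeholder text rather than real data.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    # Hypothetical stand-in for an LLM call: maps a prompt to a text reply.
    llm: Callable[[str], str]
    # Hypothetical tools, keyed by name (for example, a lab-result lookup).
    tools: Dict[str, Callable[[str], str]]
    # Memory: a running log of the steps taken and what they returned.
    memory: List[str] = field(default_factory=list)

    def plan(self, task: str) -> List[str]:
        # Planning: ask the model to break the task into one step per line.
        reply = self.llm(f"PLAN: {task}")
        return [line.strip() for line in reply.splitlines() if line.strip()]

    def act(self, step: str) -> str:
        # Tool use: a step written as "tool_name: argument" calls that tool;
        # any other step is passed to the model directly.
        name, _, arg = step.partition(":")
        tool = self.tools.get(name.strip())
        if tool is not None:
            return tool(arg.strip())
        return self.llm(step)

    def reflect(self, task: str, draft: str) -> str:
        # Self-reflection: ask the model to check and revise the draft.
        return self.llm(f"REVIEW: {task}\n{draft}")

    def run(self, task: str) -> str:
        for step in self.plan(task):
            result = self.act(step)
            self.memory.append(f"{step} -> {result}")
        return self.reflect(task, "\n".join(self.memory))


def fake_llm(prompt: str) -> str:
    # Canned replies so the sketch runs without any real model (assumption).
    if prompt.startswith("PLAN:"):
        return "get_labs: patient-001\nsummarize findings"
    if prompt.startswith("REVIEW:"):
        return "Reviewed: " + prompt.splitlines()[-1]
    return "no acute findings"


def get_labs(patient_id: str) -> str:
    # Placeholder tool; a real agent would query an electronic record.
    return f"labs for {patient_id}: potassium 4.1 mmol/L"


agent = Agent(llm=fake_llm, tools={"get_labs": get_labs})
summary = agent.run("check recent labs")
```

In this toy version the model is consulted three times (to plan, to carry out the non-tool step, and to review), while the tool call and the memory log are ordinary code. Real systems differ widely in how they divide that work.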

How AI agents are being tested in real healthcare work

Researchers are now building and simulating many kinds of agents to see where they help most. Some are designed to support diagnosis by acting out conversations between virtual doctors and patients, or by having multiple specialist agents debate a difficult case. Others focus on treatment decisions, combining input from virtual generalists, specialists, and pharmacists to reach a shared plan. There are agents that draft radiology reports from chest X-rays, or translate technical findings into plain, patient-friendly language. Chatbot-style systems are being piloted for mental health support, weight loss coaching, and medication reminders. Additional agents help manage prescriptions, spot drug side effects, streamline electronic records, or train medical students with realistic simulated patients.

Judging whether these systems are ready for patients

Because mistakes in medicine can be life-threatening, the review argues that AI agents must be evaluated on more than just cleverness. The authors group tests into two layers. Basic checks ask: Are the answers factually correct? Does the wording match expert reports? Does the agent reliably finish the task, including calling the right tools? Development-focused checks look at speed, clarity, and how well the system communicates with people, including respect, empathy, and fairness across different patient groups. Studies compare agents both to other top language models and to human doctors, and regulators in Europe, the UK, China, and elsewhere are beginning to design official “sandbox” programs to test safety, equity, and clinical benefit before deployment.
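The two layers of testing can be pictured as two small scoring functions run over the same set of test cases. The Python sketch below is one possible way to organize such scores, not the review's own method: the `CaseResult` fields, the 1-to-5 empathy scale, and the "fairness gap" (the difference in accuracy between the best- and worst-served patient groups) are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CaseResult:
    group: str            # patient group label, used for the fairness check
    correct: bool         # basic: answer judged factually correct
    task_completed: bool  # basic: task finished, including the right tool calls
    latency_s: float      # development-focused: response time in seconds
    empathy: int          # development-focused: reviewer rating, 1-5 (assumed scale)


def basic_checks(results: List[CaseResult]) -> Dict[str, float]:
    # First layer: is the agent right, and does it finish the job?
    n = len(results)
    return {
        "accuracy": sum(r.correct for r in results) / n,
        "completion_rate": sum(r.task_completed for r in results) / n,
    }


def development_checks(results: List[CaseResult]) -> Dict[str, float]:
    # Second layer: speed, communication quality, and fairness across groups.
    n = len(results)
    by_group: Dict[str, List[bool]] = {}
    for r in results:
        by_group.setdefault(r.group, []).append(r.correct)
    group_accuracy = {g: sum(v) / len(v) for g, v in by_group.items()}
    return {
        "mean_latency_s": sum(r.latency_s for r in results) / n,
        "mean_empathy": sum(r.empathy for r in results) / n,
        "fairness_gap": max(group_accuracy.values()) - min(group_accuracy.values()),
    }
```

Both functions expect a non-empty list of results. Keeping the layers separate makes it harder for a fast, friendly agent to hide poor accuracy, or for an accurate one to hide uneven performance across patient groups.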

Next steps: robots, rules, and human trust

Looking ahead, the article highlights seven priorities: connecting agents to physical robots that can act in the real world; blending general-purpose models with smaller expert models; expanding evaluation to include costs, safety events, and patient satisfaction; building stronger safeguards and oversight; embedding ethics and privacy protections; designing for user trust and continuous feedback; and helping medical staff adapt their careers to working alongside AI instead of being replaced by it. The authors conclude that AI agents could become powerful partners in healthcare, but only if they are developed, tested, and governed with the same care that society demands of any new medical technology.

Citation: Zhao, L., Liu, S., Xin, T. et al. AI agent in healthcare: applications, evaluations, and future directions. npj Artif. Intell. 2, 31 (2026). https://doi.org/10.1038/s44387-026-00076-4

Keywords: AI agents in healthcare, large language models, clinical decision support, medical chatbots, digital health