Clear Sky Science

AIFS-CRPS: ensemble forecasting using a model trained with a loss function based on the continuous ranked probability score

Why smarter weather odds matter to you

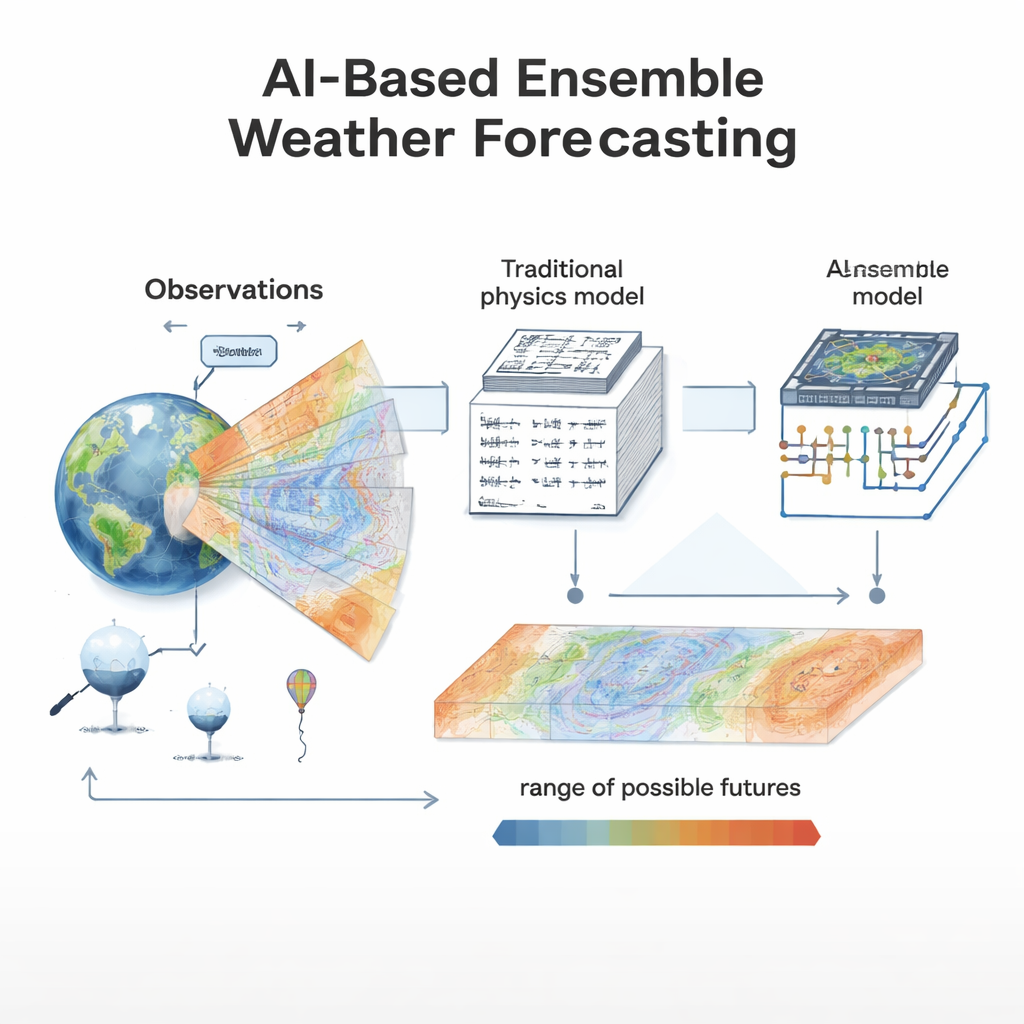

When you check the forecast, you usually see a single prediction: rain or shine, hot or cold. But the atmosphere is chaotic, and what really matters is the range of possibilities—especially for storms, heatwaves, or weeks-long patterns that affect crops, travel, and energy use. This paper presents a new artificial-intelligence-based forecasting system, AIFS‑CRPS, that doesn’t just guess tomorrow’s weather; it estimates the odds of many different futures, often more accurately and efficiently than today’s best physics-based supercomputer models.

From single answers to ranges of possibilities

Traditional weather models use the laws of physics to simulate the atmosphere many times with slightly different starting points. Together these “ensemble” forecasts give a probability distribution: how likely is heavy rain, or a cold snap? Early machine-learning weather models, in contrast, were trained to minimize average error in a single forecast, which encouraged them to smooth away small, sharp features like intense storms. They could be remarkably accurate on typical days, but they struggled to represent uncertainty and often muted extremes. AIFS‑CRPS is designed to close this gap by making probabilistic forecasts directly, so that uncertainty is built into the model rather than bolted on afterward.
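As a rough illustration of how an ensemble turns into odds, the sketch below counts the fraction of members that exceed a threshold. The function name `exceedance_probability`, the rainfall values, and the 10 mm threshold are all made up for illustration and do not come from the paper.

```python
def exceedance_probability(members, threshold):
    """Fraction of ensemble members whose value is above the threshold."""
    return sum(1 for value in members if value > threshold) / len(members)


# Hypothetical 24-hour rainfall totals (mm) from an 8-member ensemble.
rain_mm = [2.0, 12.5, 0.0, 30.1, 9.9, 15.0, 4.2, 11.0]

# Estimated chance of more than 10 mm of rain: 4 of the 8 members exceed it.
p_heavy = exceedance_probability(rain_mm, 10.0)
print(p_heavy)  # 0.5
```

Operational systems use more members and often calibrate these raw frequencies, but the basic idea of reading probabilities off the spread of members is the same.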

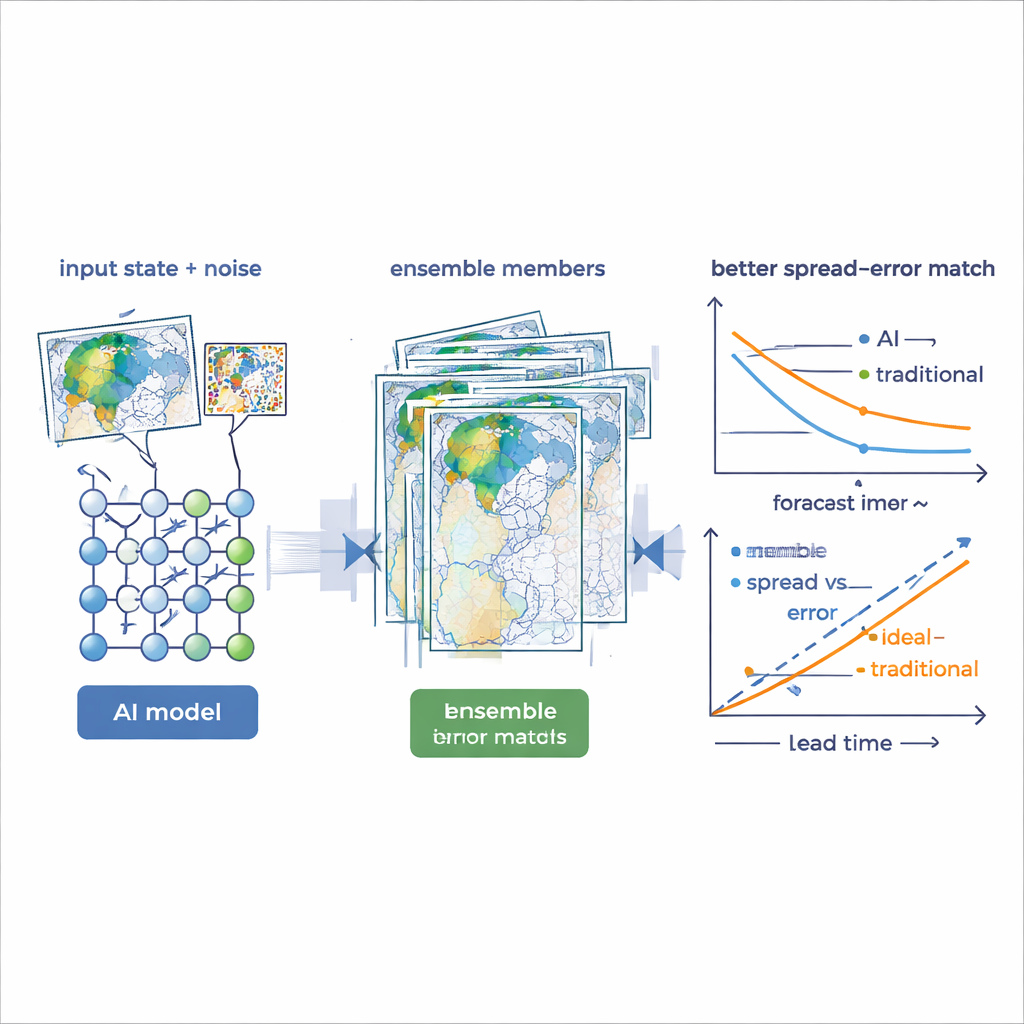

An AI that learns to be honestly uncertain

AIFS‑CRPS is an ensemble version of ECMWF’s Artificial Intelligence Forecasting System. Instead of learning to match one best-guess future, it learns to generate many plausible futures from a single AI model by adding carefully shaped random noise to its internal representation of the atmosphere. The key innovation is the way it is trained: the model is optimized using a statistical measure called the Continuous Ranked Probability Score (CRPS), which rewards forecast distributions that assign high probability to what actually happens and penalizes both missed events and overconfidence. The authors introduce an “almost fair” variant of this score that corrects for biases due to finite ensemble size while avoiding numerical pathologies that would otherwise trip up training on modern hardware.
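To make the score more concrete, here is a minimal sketch of the standard ensemble CRPS estimator, its "fair" finite-ensemble correction, and one possible "almost fair" blend of the two, all for a single scalar value. The summary above does not give the formula, so the blending weight `alpha` and its default value are assumptions for illustration, not the authors' training configuration.

```python
from itertools import combinations


def _mean_abs_error(members, obs):
    # Average distance between each member and the observed value.
    return sum(abs(x - obs) for x in members) / len(members)


def _sum_pairwise_abs(members):
    # Sum of |x_j - x_k| over unordered pairs j < k.
    return sum(abs(a - b) for a, b in combinations(members, 2))


def crps_ensemble(members, obs):
    # Standard estimator: the spread term is divided by M^2.
    m = len(members)
    return _mean_abs_error(members, obs) - _sum_pairwise_abs(members) / (m * m)


def crps_fair(members, obs):
    # Fair estimator: the spread term is divided by M * (M - 1) instead.
    m = len(members)
    return _mean_abs_error(members, obs) - _sum_pairwise_abs(members) / (m * (m - 1))


def crps_almost_fair(members, obs, alpha=0.95):
    # Assumed form: a weighted blend of the fair and standard scores.
    return alpha * crps_fair(members, obs) + (1 - alpha) * crps_ensemble(members, obs)


members, obs = [1.0, 2.0, 4.0], 2.0
print(crps_ensemble(members, obs), crps_fair(members, obs),
      crps_almost_fair(members, obs))
```

Lower values are better for all three scores. With `alpha=1.0` the blend reduces to the fair score and with `alpha=0.0` to the standard one, so `alpha` controls how much of the finite-ensemble correction is applied.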

Sharper detail that doesn’t blur away

One of the main tests of any ensemble system is whether it maintains realistic variability as the forecast stretches from hours to days. In side-by-side comparisons, an earlier AI system trained with a standard mean-squared-error loss gradually lost small-scale structure, so its maps looked increasingly blurred with lead time. By contrast, AIFS‑CRPS preserves detail and energy across scales, closer to what is seen in reference analyses and advanced physics-based models. Early versions of the model tended to grow too much fine-scale noise. The authors address this by “truncating” the reference field used during training: the tiniest wiggles are removed from the previous step so that the AI does not simply amplify them, while genuine small-scale weather features are left undamped. This balance is crucial for representing intense storms and other high-impact events.
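The idea of truncating a field can be illustrated with a toy low-pass filter on a one-dimensional periodic signal. This is only a sketch: the function `truncate_wavenumbers`, the plain discrete Fourier transform, and the cutoff value are assumptions chosen for simplicity, whereas a global weather model works with fields on the sphere.

```python
import cmath
import math


def truncate_wavenumbers(signal, max_wavenumber):
    """Remove all wavenumbers above max_wavenumber from a periodic signal."""
    n = len(signal)
    # Forward discrete Fourier transform (O(n^2), fine for a toy example).
    coeffs = [
        sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]
    # Indices k and n - k describe the same wavenumber, so filter on min(k, n - k).
    kept = [c if min(k, n - k) <= max_wavenumber else 0 for k, c in enumerate(coeffs)]
    # Inverse transform; the result is real up to rounding error.
    return [
        (sum(kept[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n).real
        for t in range(n)
    ]


n = 16
large_scale = [math.cos(2 * math.pi * t / n) for t in range(n)]            # wavenumber 1
wiggles = [0.5 * math.cos(2 * math.pi * 6 * t / n) for t in range(n)]      # wavenumber 6
field = [a + b for a, b in zip(large_scale, wiggles)]

smooth = truncate_wavenumbers(field, max_wavenumber=2)
max_diff = max(abs(s - l) for s, l in zip(smooth, large_scale))
```

Here the filter strips the wavenumber-6 wiggles and leaves the large-scale wave untouched, so `max_diff` is at the level of floating-point rounding.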

Outperforming state‑of‑the‑art for days to weeks

The team evaluates AIFS‑CRPS against ECMWF’s high-resolution Integrated Forecasting System (IFS) ensemble. For forecasts up to 15 days, the AI ensemble scores better for many key variables, such as temperatures near the surface and at 850 hPa, winds at jet-stream level, and mid‑tropospheric pressure patterns. Depending on the variable, improvements in standard probabilistic and error scores often reach 5–20 percent. The AI ensemble sometimes shows “over‑dispersion”, meaning its members are more spread out than their average error warrants. This is largely a side effect of using initial condition perturbations tuned for the physics model rather than for the much lower error of the AI system. At longer, subseasonal lead times of two to six weeks, the AI system, despite being trained only on forecasts out to 72 hours, matches or beats the IFS for many surface and tropospheric fields when raw forecasts are considered, and remains competitive when biases are removed and only anomaly skill is counted.
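Over-dispersion is usually diagnosed by comparing the ensemble spread with the error of the ensemble mean, averaged over many forecast cases. The sketch below shows that comparison for scalar forecasts. The function name `spread_and_rmse` and the toy numbers are illustrative assumptions, and the small finite-ensemble correction factor that careful verification applies is left out.

```python
import math
import statistics


def spread_and_rmse(cases):
    """cases: list of (members, observation) pairs for one variable and lead time."""
    variances = []
    squared_errors = []
    for members, obs in cases:
        # Sample variance of the members (divides by M - 1).
        variances.append(statistics.variance(members))
        # Squared error of the ensemble mean.
        squared_errors.append((statistics.fmean(members) - obs) ** 2)
    spread = math.sqrt(statistics.fmean(variances))
    rmse = math.sqrt(statistics.fmean(squared_errors))
    return spread, rmse


# Two hypothetical forecast cases with two members each.
cases = [([0.0, 4.0], 3.0), ([1.0, 5.0], 2.0)]
spread, rmse = spread_and_rmse(cases)
ratio = spread / rmse  # > 1 suggests over-dispersion, < 1 under-dispersion
```

In this toy case the ratio is well above 1, which is the over-dispersive situation: the members disagree with each other more than the ensemble mean disagrees with the observations. A ratio close to 1 over many cases is what a well-calibrated ensemble should show.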

Following the tropics’ slow heartbeat

A critical test for subseasonal prediction is the Madden–Julian Oscillation (MJO), a slow-moving pattern of tropical disturbances that can influence monsoons, storms, and even mid‑latitude weather. Using a standard index based on wind anomalies, the authors show that AIFS‑CRPS produces MJO forecasts with higher correlations and lower errors than the IFS ensemble over a multi‑year test period. Importantly, the spread of the AI ensemble matches the typical forecast error very closely, a hallmark of a well‑calibrated probabilistic system. In a case study, the AI more faithfully reproduces the growth and eastward march of a major MJO event than the physics model, which tends to underestimate its strength and revert too quickly toward neutral conditions.

What this means for everyday weather and beyond

For non‑specialists, the takeaway is that AI can now do more than just offer fast, good‑looking weather maps. Systems like AIFS‑CRPS can quantify the odds of different outcomes—how likely a heatwave is to persist, whether a storm track could shift, or how stable a multi‑week pattern might be—often as well as, or better than, today’s most advanced physics‑based models, and at a fraction of the computing cost. Challenges remain, such as improving performance in the stratosphere and fine‑tuning how the model handles extremes, but this work shows that probabilistic training can turn AI into a genuinely useful tool for risk‑aware weather and climate services. In practice, that means more informative forecasts for governments, businesses, and the public when it matters most.

Citation: Lang, S., Alexe, M., Clare, M.C.A. et al. AIFS-CRPS: ensemble forecasting using a model trained with a loss function based on the continuous ranked probability score. npj Artif. Intell. 2, 18 (2026). https://doi.org/10.1038/s44387-026-00073-7

Keywords: AI weather forecasting, ensemble prediction, probabilistic forecasts, subseasonal prediction, Madden–Julian Oscillation