Clear Sky Science

PsychAdapter: adapting LLMs to reflect traits, personality, and mental health

Why shaping AI personalities matters

Most of the chatbots and writing tools people use today sound oddly similar: friendly, wordy, and a bit generic. But real people are not generic—we differ in personality, mood, age, and life circumstances, and those differences show up clearly in how we write and speak. This paper introduces PsychAdapter, a new way to give large language models (LLMs) adjustable “personalities” and mental health profiles, so they can generate text that better reflects the huge variety of real human voices.

Teaching machines to sound like different people

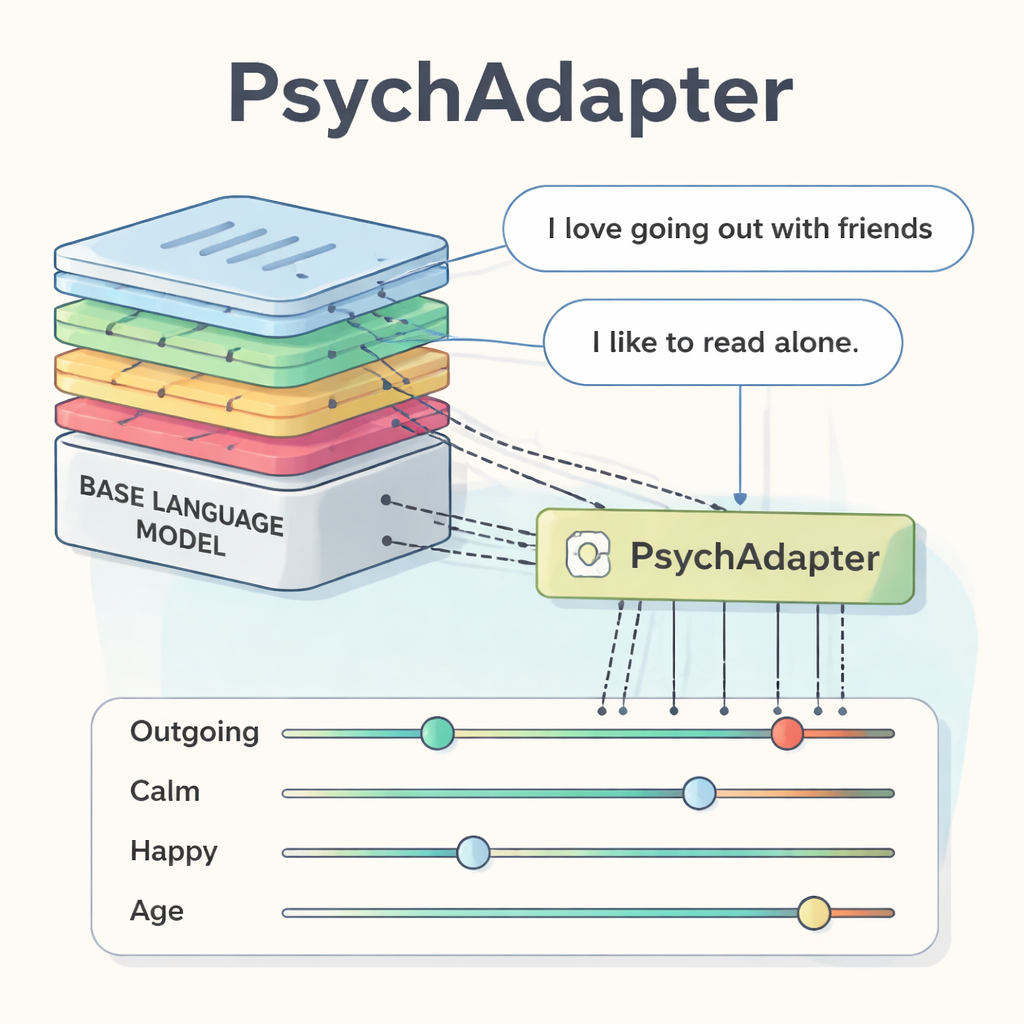

PsychAdapter is a small add-on that plugs into existing language models such as GPT‑2, Gemma, or LLaMA. Instead of just feeding the model words and asking it to continue a sentence, the researchers also feed in a compact profile of the writer: scores for the Big Five personality traits (like Extraversion and Agreeableness), levels of depression or life satisfaction, and basic demographic information such as age. These scores are continuous, like a slider that can be set anywhere from very low to very high, rather than a handful of fixed labels. PsychAdapter expands this small vector and connects it to every layer of the model so that the entire writing process is gently steered by the chosen psychological profile, without relying on complex prompts.
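The idea of expanding a small profile vector into something every layer can use may be easier to picture with a toy sketch. The code below is only an illustration under stated assumptions: the function names, the use of one linear map per layer, and the tiny dimensions are all hypothetical and are not taken from the paper, and the random weights stand in for values that would normally be learned.

```python
import random

def make_projection(n_traits, n_layers, hidden_dim, seed=0):
    """Random weights standing in for a learned projection (hypothetical)."""
    rng = random.Random(seed)
    return [
        [[rng.gauss(0.0, 0.02) for _ in range(n_traits)] for _ in range(hidden_dim)]
        for _ in range(n_layers)
    ]

def expand_profile(profile, projection):
    """Map a small trait vector to one conditioning vector per layer."""
    return [
        [sum(w * x for w, x in zip(row, profile)) for row in layer]
        for layer in projection
    ]

# Five continuous "slider" scores, one per Big Five trait.
# The standardized scale and the trait ordering are assumptions.
profile = [2.0, 0.0, 0.0, -1.5, 0.0]

# Toy sizes; real models have far more layers and hidden units.
projection = make_projection(n_traits=5, n_layers=4, hidden_dim=8)
per_layer = expand_profile(profile, projection)

print(len(per_layer), "layers,", len(per_layer[0]), "values per layer")
```

Because the scores are continuous, moving a slider slightly changes every per-layer vector slightly, which is one way to read the "gently steered" description above.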

From trait sliders to lifelike sentences

To train PsychAdapter, the team used large collections of public social media posts and blogs. Separate psychological models first estimated personality, depression, life satisfaction, and age for each message based on the language people used. Those estimated scores became teaching signals: the language model was trained to reconstruct each message while seeing the corresponding psychological profile. Once trained, PsychAdapter can take any desired combination of scores—say, “very high extraversion, low agreeableness” or “older adult with low life satisfaction”—and generate fresh text that matches that profile, sometimes starting from a short prompt like “I like to…”. The added adapter is tiny compared with the base model (often less than one‑tenth of one percent of the original parameters), so it can be shared and plugged in easily.
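To get a feel for how small "less than one-tenth of one percent" is, here is a back-of-the-envelope calculation. It assumes, purely for illustration, that the adapter holds one weight per score, per layer, per hidden unit; that layout is a guess and not the paper's stated design. The base-model figures are approximate values for the smallest GPT-2 model.

```python
def adapter_fraction(n_scores, n_layers, hidden_dim, base_params):
    """Return (adapter weight count, share of base model) for a toy layout.

    Assumes one linear map from the score vector to each layer's hidden
    size. This layout is hypothetical, chosen only to make the arithmetic
    concrete.
    """
    adapter_params = n_scores * n_layers * hidden_dim
    return adapter_params, adapter_params / base_params

# Approximate GPT-2 small figures: 12 layers, hidden size 768, ~124M parameters.
adapter_params, fraction = adapter_fraction(
    n_scores=5, n_layers=12, hidden_dim=768, base_params=124_000_000
)

print(f"{adapter_params} adapter weights, {fraction:.4%} of the base model")
print("under 0.1% of the base model:", fraction < 0.001)
```

Under these assumptions the add-on is well below the one-tenth-of-one-percent mark, which is consistent with the claim that it can be shared separately from the base model.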

Checking whether the AI really changes its tone

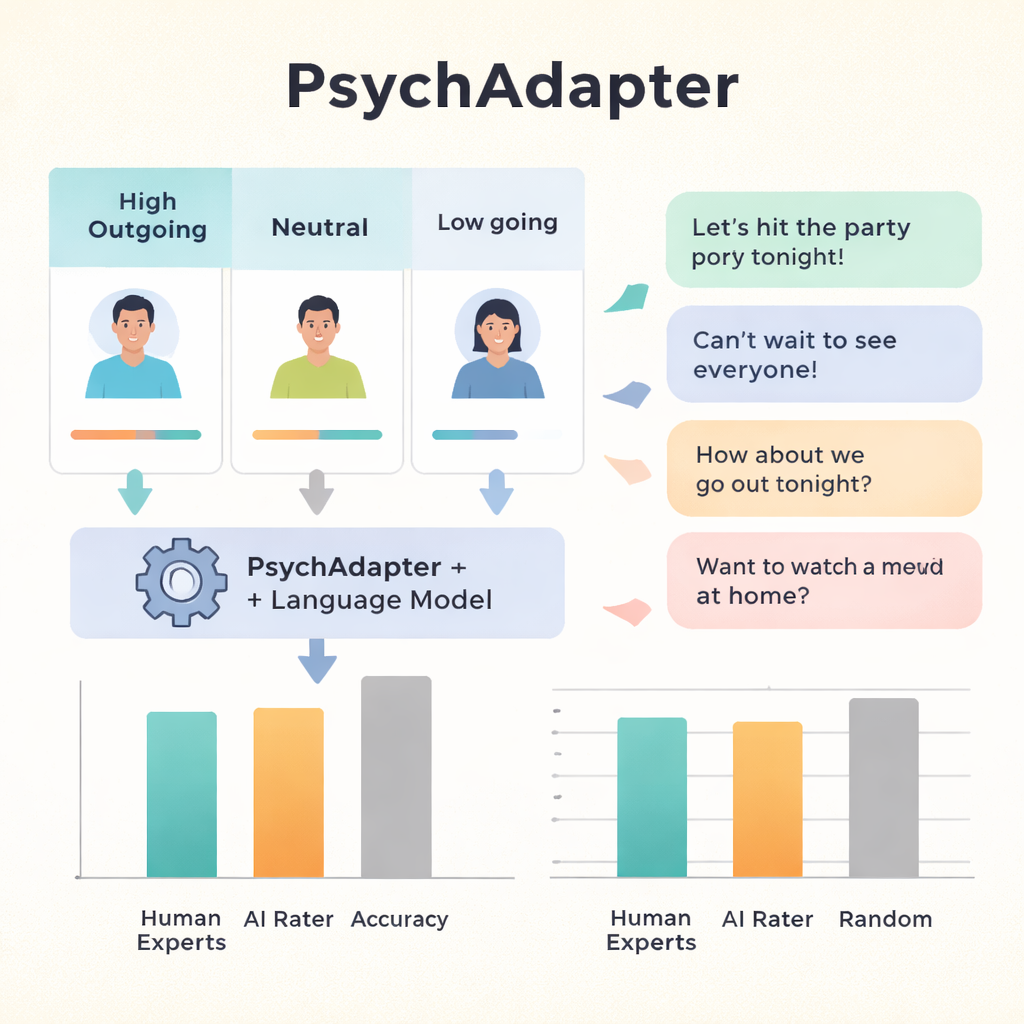

To see if PsychAdapter was truly capturing traits rather than just producing random variations, the researchers asked expert psychologists to act as judges. For each trait, the system generated sets of messages meant to reflect low, average, or high levels (for example, low versus high extraversion). The experts, who were not told which was which, had to match each group of texts to its intended level. They were correct about 87% of the time for the personality traits and almost 97% of the time for depression and life satisfaction, far above random guessing. When the system was nudged with simple prompts such as “I like to…”, accuracy rose even further. A separate test used an advanced AI model as a rater; it agreed with the human experts about as closely as the experts agreed with one another, and it sometimes detected traits even more consistently.
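To put the reported accuracies next to a guessing baseline, the sketch below enumerates every way a judge could blindly assign groups of texts to levels. It assumes three groups (low, average, high) each matched to exactly one level; the exact task design is not spelled out above, so treat the baseline as illustrative. The observed figures are the rounded values quoted in the text.

```python
from fractions import Fraction
from itertools import permutations

def chance_matching_accuracy(n_groups):
    """Expected share of correct matches when groups are assigned at random.

    Enumerates every one-to-one assignment of groups to levels and counts
    how many positions land on the right level.
    """
    total_correct = 0
    total_guesses = 0
    for guess in permutations(range(n_groups)):
        total_correct += sum(1 for true, g in enumerate(guess) if true == g)
        total_guesses += n_groups
    return Fraction(total_correct, total_guesses)

# Assumption: three groups per trait (low, average, high).
baseline = chance_matching_accuracy(3)

# Approximate accuracies as quoted in the article.
reported = [("personality", 0.87), ("depression / life satisfaction", 0.97)]
for label, observed in reported:
    print(f"{label}: observed {observed:.0%} vs chance {float(baseline):.0%}")
```

With two groups instead of three, the same function gives a baseline of one half, so the reported accuracies sit well above chance under either reading.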

Mixing traits, ages, and life domains

PsychAdapter is not limited to one trait at a time. The system can combine personality dimensions, mental health levels, and demographic factors in a single profile. The authors showed that changing the “age” score while holding depression or life satisfaction constant led to different styles of messages: younger voices talked about parents, school, and first days of class, while older voices mentioned spouses, children, and long‑term worries. By mathematically rotating two personality traits (extraversion and agreeableness) into “warmth” and “dominance,” they also mapped the outputs onto a classic psychological model of interpersonal styles. Texts generated for regions with labels such as “Assured‑Dominant” or “Cold‑Hearted” matched what the theory would predict. The approach worked across short tweets and longer blog posts, and across several different underlying language models.
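The rotation step can be illustrated with a few lines of arithmetic. This is a minimal sketch, not the authors' procedure: the 45-degree angle, the sign convention, and the function name are assumptions chosen so that warmth grows with both traits while dominance grows with extraversion and shrinks with agreeableness.

```python
import math

def rotate_traits(extraversion, agreeableness, angle_deg=45.0):
    """Rotate (agreeableness, extraversion) axes into (warmth, dominance).

    The 45-degree default and the axis orientation are illustrative
    assumptions, not values taken from the paper.
    """
    theta = math.radians(angle_deg)
    c, s = math.cos(theta), math.sin(theta)
    warmth = c * agreeableness + s * extraversion
    dominance = -s * agreeableness + c * extraversion
    return warmth, dominance

# High extraversion with low agreeableness lands on the dominance axis.
print(rotate_traits(extraversion=1.0, agreeableness=-1.0))

# High on both traits lands on the warmth axis.
print(rotate_traits(extraversion=1.0, agreeableness=1.0))
```

Because this is a pure rotation, the distance of a profile from the origin is unchanged; only the axes used to describe it are different.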

Opportunities and risks for human‑AI interaction

Because PsychAdapter can finely tune an AI’s style and emotional tone, it opens the door to more human‑like applications. Training simulations for therapists or crisis‑line workers could expose them to safe but realistic conversational partners showing various personalities and levels of distress. Customer‑service bots or educational tools could adjust language to match a user’s age, reading level, or preferred style. Researchers can also use the system as a laboratory: by dialing traits up or down and prompting for specific topics, they can explore how personality and mental health might shape language in many contexts without waiting for rare real‑world data.

What this means for everyday users

For a layperson, the takeaway is that future AI systems may not just answer questions—they may adopt a wide range of recognizable human‑like voices. With something like PsychAdapter, a single base model can be gently reshaped to sound more introverted or extroverted, upbeat or down, young or old, simply by moving a few sliders. This flexibility could make AI tools feel more relatable and useful, but it also raises new ethical concerns, such as the risk of targeted persuasion or deceptive “personas.” The authors argue that, used responsibly, PsychAdapter offers a powerful new way to study how our inner traits show up in words, and to build AI that better reflects the diversity of real human communication.

Citation: Vu, H., Nguyen, H.A., Ganesan, A.V. et al. PsychAdapter: adapting LLMs to reflect traits, personality, and mental health. npj Artif. Intell. 2, 26 (2026). https://doi.org/10.1038/s44387-026-00071-9

Keywords: psychadapter, personality-aware AI, mental health language, large language models, personalized text generation