Clear Sky Science · en

Improving few-shot named entity recognition for large language models using structured dynamic prompting with retrieval augmented generation

Why smarter reading of medical text matters

Modern medicine generates oceans of text—from intensive care notes to online conversations about drug use. Hidden in those words are vital clues about diseases, treatments, and side effects. Automatically finding and labeling these snippets of information, a task called “named entity recognition,” can help researchers track outbreaks, spot drug problems earlier, and support doctors in real time. But traditional systems need large hand-labeled datasets, which are costly to build and often missing for rare or emerging health issues. This study explores how large language models, like those behind today’s chatbots, can be guided with carefully designed prompts and smart retrieval of examples so they perform this labeling task well even when only a few annotated samples are available.

Teaching machines to spot important words

The authors focus on biomedical named entity recognition—finding mentions of diseases, drugs, symptoms, and social impacts in text. This is difficult because medical language is highly specialized, varies by hospital or subfield, and often involves rare conditions that appear only a few times in any dataset. Existing machine-learning models can achieve human-like performance but typically require large, well-annotated corpora that are expensive to create and share, especially under strict privacy rules. Few-shot learning, in which models learn from only a handful of labeled examples, offers a way around this bottleneck. Large language models are especially promising here because they can learn patterns directly from instructions and examples given in the prompt, without retraining their internal weights.

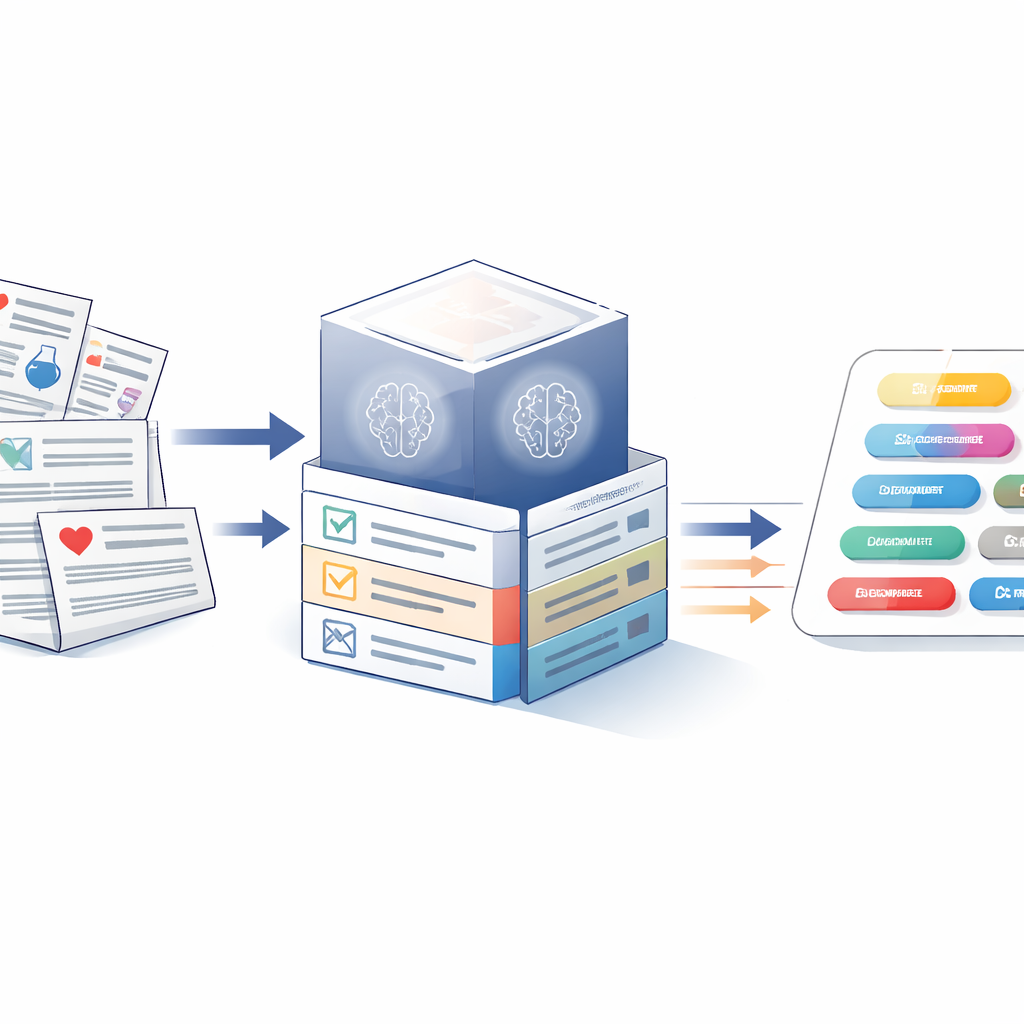

Building better instructions for language models

The first part of the work designs a highly structured “static” prompt, a reusable block of instructions and examples presented to the model for every sentence it must label. Rather than simply telling the model to tag entities, the prompt is broken into six elements: a clear task description and definitions of entity types; a short description of the dataset’s source and theme; high-frequency example words typical of each entity type; optional background medical knowledge; summarized feedback from earlier model errors; and a handful of fully annotated example sentences. The team tested this framework with three large language models (GPT-3.5, GPT-4, and LLaMA 3-70B) on five biomedical datasets spanning clinical records, scientific abstracts, and Reddit posts about opioid use. Carefully layering these components raised F1 scores (the harmonic mean of precision and recall) by about 11–12 percentage points over a basic prompt, with GPT-4 achieving the best overall performance.
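To make the six-part layout concrete, here is a minimal Python sketch of how such a static prompt might be assembled. It is not the authors' template: the class name `StaticPrompt`, its field names, the section titles, and the `Input:`/`Output:` layout are all illustrative assumptions. The only element taken from the description above is the list of six components, two of which (background knowledge and error feedback) are treated here as skippable when left empty.

```python
from dataclasses import dataclass


@dataclass
class StaticPrompt:
    """Hypothetical container for the six prompt components (names are assumptions)."""
    task_description: str                      # task statement plus entity type definitions
    dataset_description: str                   # source and theme of the dataset
    frequent_terms: dict[str, list[str]]       # entity type -> high-frequency example words
    annotated_examples: list[tuple[str, str]]  # (raw sentence, annotated sentence) pairs
    background_knowledge: str = ""             # optional medical background
    error_feedback: str = ""                   # summary of earlier model errors

    def render(self, sentence: str) -> str:
        """Return the full prompt text for one sentence to be labeled."""
        sections = [
            ("Task", self.task_description),
            ("Dataset", self.dataset_description),
            ("Frequent terms", "\n".join(
                f"{etype}: {', '.join(words)}"
                for etype, words in self.frequent_terms.items())),
            ("Background", self.background_knowledge),
            ("Feedback from earlier errors", self.error_feedback),
            ("Examples", "\n".join(
                f"Input: {raw}\nOutput: {tagged}"
                for raw, tagged in self.annotated_examples)),
        ]
        # Sections whose text is empty are left out of the prompt.
        body = "\n\n".join(f"## {title}\n{text}" for title, text in sections if text)
        return f"{body}\n\n## Sentence to label\nInput: {sentence}\nOutput:"
```

Because everything except the final sentence is fixed, the same rendered block is reused for every input, which is what makes the prompt "static".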

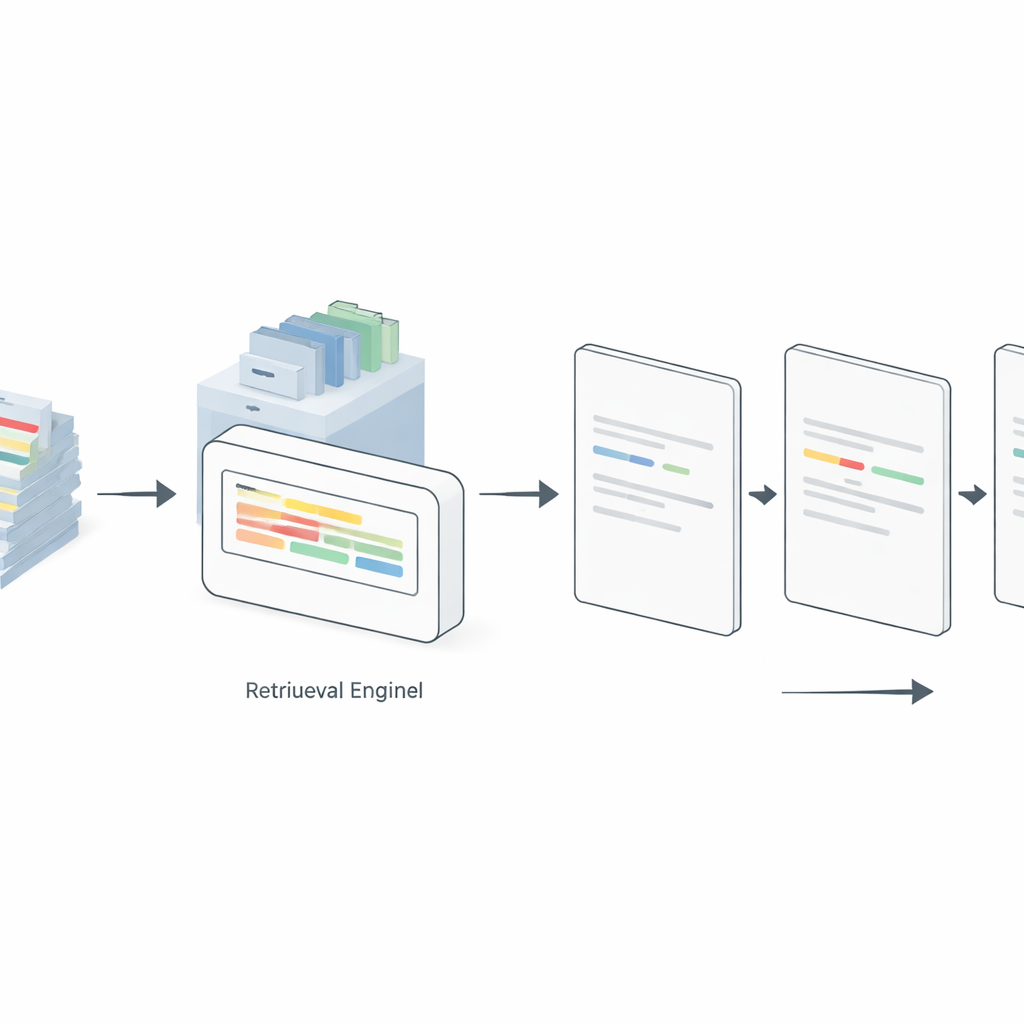

Letting the model look up better examples on the fly

Static prompts, however, always show the same examples, even when they are poorly matched to the new sentence being labeled. To address this, the authors introduce a “dynamic” prompting strategy powered by retrieval-augmented generation. Here, a separate retrieval engine indexes all available annotated examples. For each new input sentence, the system searches this pool to find the most similar labeled sentences and inserts only those into the prompt. The study compares several retrieval methods, from a simple term-weighting scheme (TF–IDF, term frequency–inverse document frequency) to neural embedding models such as Sentence-BERT (SBERT), ColBERT, and Dense Passage Retrieval. Across GPT-4, LLaMA 3, and an open-weight model called GPT-OSS-120B, dynamically selecting relevant examples consistently outperformed static prompting in 5-, 10-, and 20-shot settings. Surprisingly, the simple TF–IDF method often matched or beat more complex approaches, especially on cleaner, more standardized datasets, while SBERT shone on noisier social media text.
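The retrieval step can be illustrated with a small TF-IDF retriever written in plain Python. This is a sketch under stated assumptions rather than the study's pipeline: the `TfidfRetriever` class, the `tokenize` helper, the smoothed IDF formula, and the use of cosine similarity are choices made here for illustration, and the example sentences are invented.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split on non-alphanumeric characters (a simplifying assumption)."""
    return re.findall(r"[a-z0-9]+", text.lower())


class TfidfRetriever:
    """Hypothetical retriever: ranks annotated examples by TF-IDF cosine similarity."""

    def __init__(self, pool):
        # pool: list of (sentence, annotation) pairs available for retrieval
        self.pool = list(pool)
        docs = [Counter(tokenize(sentence)) for sentence, _ in self.pool]
        n_docs = len(docs)
        doc_freq = Counter(term for doc in docs for term in doc)
        # Smoothed inverse document frequency, so every weight stays positive.
        self.idf = {t: math.log((1 + n_docs) / (1 + df)) + 1.0
                    for t, df in doc_freq.items()}
        self.vectors = [self._vectorize(doc) for doc in docs]

    def _vectorize(self, counts):
        # Terms never seen in the pool are ignored; vectors are L2-normalized.
        vec = {t: tf * self.idf[t] for t, tf in counts.items() if t in self.idf}
        norm = math.sqrt(sum(w * w for w in vec.values()))
        return {t: w / norm for t, w in vec.items()} if norm else {}

    def top_k(self, query: str, k: int = 5):
        """Return the k pool entries most similar to the query sentence."""
        q = self._vectorize(Counter(tokenize(query)))
        scores = [sum(w * vec.get(t, 0.0) for t, w in q.items())
                  for vec in self.vectors]
        ranked = sorted(range(len(self.pool)), key=lambda i: scores[i], reverse=True)
        return [self.pool[i] for i in ranked[:k]]


if __name__ == "__main__":
    pool = [
        ("Patient was given naloxone after an opioid overdose.", "Drug: naloxone"),
        ("Chest x-ray showed no evidence of pneumonia.", "Disease: pneumonia"),
    ]
    retriever = TfidfRetriever(pool)
    for sentence, annotation in retriever.top_k("He took naloxone following the overdose", k=1):
        print(sentence, "|", annotation)
```

In a 5-shot setting, the pairs returned by `top_k(sentence, k=5)` would take the place of the fixed example sentences in the prompt, so each input sees demonstrations that share its vocabulary.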

Getting more from fewer labeled examples

Because annotating medical text is expensive, the authors also studied how many labeled examples the retrieval engine must index to be useful. Using LLaMA 3-70B, they varied the retrieval pool from 50 examples up to the entire training set. Performance generally improved as the pool grew, but gains flattened quickly: pools of about 100–200 examples scored nearly as well as indexing all available data, often within the statistical margin of error. In some cases, extremely large pools slightly hurt performance, likely because they introduced more irrelevant or confusing examples and lengthened the prompt. These findings suggest that, when paired with a strong language model and well-designed prompts, even modest annotation efforts can yield robust biomedical entity recognition, making the approach feasible for rare diseases, new clinical concepts, or institutions with limited resources.
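A pool-size experiment of this kind needs some way to draw smaller retrieval pools from the full set of annotated examples. The summary does not say how the subsets were chosen, so the sketch below simply assumes uniform random sampling with a fixed seed for reproducibility; the function name `sample_pool` and its parameters are hypothetical.

```python
import random


def sample_pool(examples, size, seed=0):
    """Return a reproducible random subset of `examples` with at most `size` items.

    Uniform sampling with a fixed seed is an assumption made for illustration.
    """
    examples = list(examples)
    if size >= len(examples):
        # Asking for more than is available means indexing everything.
        return examples
    return random.Random(seed).sample(examples, size)
```

One would then build a retrieval index over, say, `sample_pool(train, 50)`, `sample_pool(train, 100)`, and `sample_pool(train, 200)`, and compare each against an index over the full training set.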

What this means for real-world medicine

Overall, the study shows that large language models can reliably pick out important medical concepts from text using only a handful of annotated examples, provided they are guided by structured prompts and a retrieval system that surfaces the most relevant prior cases. GPT-4 offers the strongest general performance, while open and smaller models still benefit substantially from the same prompting and retrieval recipe. For practitioners, this means they do not need to build massive datasets every time a new entity type or health concern emerges; a compact, carefully curated set of examples plus smart prompting can be enough. As healthcare systems continue to digitize notes and patients discuss their experiences online, such efficient, adaptable tools could make it far easier to unlock clinically useful knowledge from the vast, messy world of medical text.

Citation: Ge, Y., Guo, Y., Das, S. et al. Improving few-shot named entity recognition for large language models using structured dynamic prompting with retrieval augmented generation. npj Artif. Intell. 2, 39 (2026). https://doi.org/10.1038/s44387-025-00062-2

Keywords: biomedical named entity recognition, few-shot learning, large language models, retrieval-augmented generation, clinical text mining