Clear Sky Science

A pilot study to assess the challenges and efficacy of two hearing loss simulations

Why simulating hearing loss matters

Many of us have a friend or relative who struggles to follow conversations, especially in noisy places. Yet it is hard for people with normal hearing to grasp what the world sounds like to them, and it is not always practical to recruit large numbers of people with hearing loss for every experiment. This study explores whether computer simulations of hearing loss can reliably “fake” that experience for normal-hearing listeners, so that researchers and audio engineers can test ideas, design more accessible media, and better understand what life sounds like with impaired hearing.

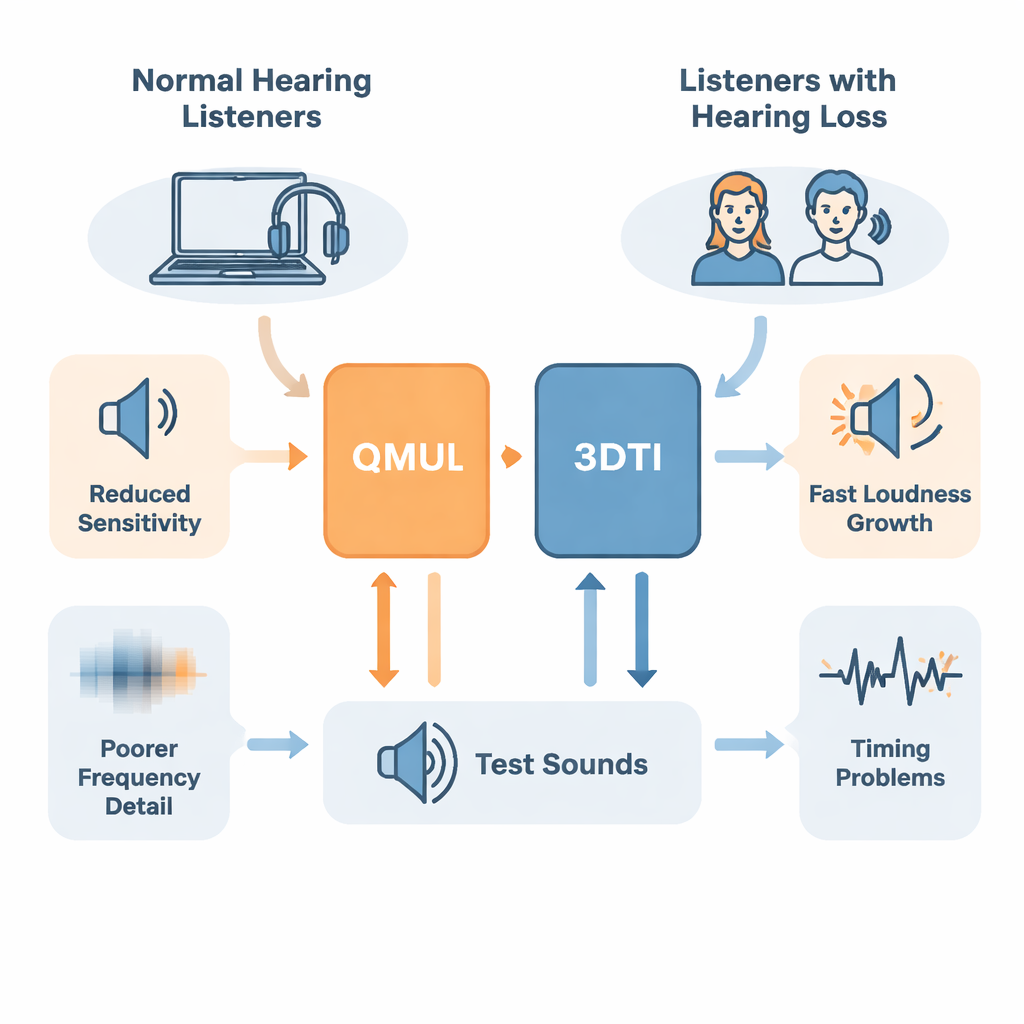

Two digital stand-ins for damaged ears

The researchers focused on two software tools that process audio in real time, much like studio effects plugins: the QMUL plugin and the 3D Tune-In (3DTI) Toolkit. Both aim to mimic four common effects of sensorineural hearing loss: quiet sounds becoming harder to detect, loudness rising too quickly once sounds become audible, fine details in pitch and tone blurring together, and timing information being smeared. The QMUL plugin is designed to be simple and intuitive for sound engineers, with a small set of presets. The 3DTI tool is more flexible: it accepts a person’s actual hearing test results and offers many more tuning options, including integration with 3D spatial audio.
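The second effect, loudness rising too quickly once sounds become audible (often called loudness recruitment), can be pictured as a level mapping. The sketch below is a hypothetical illustration, not the QMUL or 3DTI algorithm: it assumes a straight-line expansion in decibels between an elevated threshold and a shared upper limit, and the function name `recruitment_level` and the 0 dB and 100 dB defaults are chosen only for the example.

```python
def recruitment_level(input_db: float, threshold_db: float,
                      normal_threshold_db: float = 0.0,
                      ceiling_db: float = 100.0) -> float:
    """Map an input level (dB) to the level a normal-hearing listener should hear.

    Hypothetical straight-line expansion in decibels: the impaired listener's
    narrow range [threshold_db, ceiling_db] is stretched onto the wider normal
    range [normal_threshold_db, ceiling_db].
    """
    if input_db <= threshold_db:
        # Below the simulated threshold: the sound should be inaudible.
        return float("-inf")
    # Output dB gained per input dB; greater than 1 whenever threshold_db > normal_threshold_db.
    slope = (ceiling_db - normal_threshold_db) / (ceiling_db - threshold_db)
    out_db = normal_threshold_db + slope * (input_db - threshold_db)
    # Never exceed the shared upper limit.
    return min(out_db, ceiling_db)
```

With a simulated threshold of 50 dB, an input of 75 dB maps to 50 dB and an input of 100 dB maps to 100 dB, so each decibel of input above threshold yields two decibels of output. That steep growth is the kind of behavior the simulators try to reproduce, although real tools typically apply such processing separately in several frequency bands.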

Listening tests with real and simulated loss

To see how well these tools work, the team ran a pilot listening study. Two volunteers with mild-to-moderate, high-frequency hearing loss first completed standard hearing tests and a series of carefully chosen listening tasks. These tasks measured how short a silent gap in noise they could detect, how well they could pick out a tone hidden in “notched” noise (noise with a band of frequencies removed around the tone), how loud tones at different levels felt, and how much speech they could understand in background noise. The researchers then adjusted the QMUL and 3DTI simulations to imitate each of these two listeners. Finally, eleven people with normal hearing completed the same set of tasks over headphones, with the simulations applied in real time.
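Tone-in-notched-noise thresholds are commonly interpreted with the power-spectrum model of masking and a rounded-exponential, or roex(p), auditory filter. The summary does not say which filter shape the study fitted, so the sketch below assumes that standard textbook model. The slope parameter p sets how sharply tuned the filter is; a smaller p (a broader filter, as expected with cochlear hearing loss) means that widening the notch lowers the masked threshold less. The function names are hypothetical.

```python
import math


def roex_weight(g: float, p: float) -> float:
    """roex(p) filter weight at normalized deviation g = |f - fc| / fc."""
    return (1.0 + p * g) * math.exp(-p * g)


def notch_threshold_shift_db(g0: float, p: float) -> float:
    """Predicted change in masked threshold (dB) for a symmetric notch of half-width g0.

    Power-spectrum model: threshold is proportional to the noise power passing
    through the filter. Integrating the roex(p) weight from g0 to infinity gives
    (2 + p*g0) * exp(-p*g0) / p per side; dividing by the no-notch value (2/p)
    expresses the result relative to broadband noise (0 dB at g0 = 0).
    """
    relative_power = (2.0 + p * g0) * math.exp(-p * g0) / 2.0
    return 10.0 * math.log10(relative_power)


def erb_hz(fc: float, p: float) -> float:
    """Equivalent rectangular bandwidth of a symmetric roex(p) filter: 4 * fc / p."""
    return 4.0 * fc / p
```

For a notch half-width of 0.2 (20 percent of the tone frequency), a sharply tuned filter with p = 30 predicts a threshold drop of roughly 20 dB, while a broader filter with p = 15 predicts only about 9 dB. At 1 kHz, p = 30 corresponds to an equivalent rectangular bandwidth of about 133 Hz.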

Where the simulations get it right

The simulations performed best at reproducing frequency-related problems: the way hearing loss makes the ear less sharply tuned in frequency, so that nearby pitches blur together. In the tone-in-noise test, both tools produced masked thresholds and modeled “auditory filters” that roughly matched those of the real listeners, with the 3DTI simulation often slightly closer. For perceived loudness, the results were mixed but encouraging. The relationship between actual sound level and perceived loudness could be described with a standard psychophysical rule known as Stevens’ power law. For one of the two listeners with hearing loss, both simulations captured the unusually rapid growth of loudness fairly well, with the 3DTI model coming within about 10 percent of that listener’s measured curve.
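Stevens’ power law states that perceived loudness L grows as a power of sound intensity I: L = k·I^a. Taking logarithms turns this into a straight line, log L = log k + a·log I, so the exponent a can be estimated with an ordinary least-squares fit. The summary does not specify the rating scale or fitting procedure used in the study, so the sketch below assumes positive loudness ratings, intensities derived from levels in decibels, and hypothetical function names. Comparing exponents as a relative difference is one simple way to express a result such as “within about 10 percent”, not necessarily the authors’ metric.

```python
import math


def fit_stevens_exponent(levels_db: list[float], loudness: list[float]) -> tuple[float, float]:
    """Fit L = k * I**a, with I = 10**(level_db / 10), by least squares in log-log space.

    Returns (k, a). Taking log10 of both sides gives
    log10(L) = log10(k) + a * level_db / 10, a straight line.
    """
    xs = [lv / 10.0 for lv in levels_db]      # log10 of relative intensity
    ys = [math.log10(v) for v in loudness]    # log10 of loudness rating (must be > 0)
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx                             # slope = Stevens exponent
    k = 10 ** (mean_y - a * mean_x)           # intercept, back on a linear scale
    return k, a


def percent_difference(simulated: float, measured: float) -> float:
    """Relative difference between two fitted values, in percent of the measured one."""
    return 100.0 * abs(simulated - measured) / abs(measured)
```

A listener with recruitment would show a larger exponent a (steeper loudness growth) than a normal-hearing listener; fitting both the real and simulated ratings and comparing their exponents gives a single number for how closely a simulation tracks that growth.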

Where digital ears still fall short

Other aspects were much harder to emulate. In the gap-detection task, thresholds varied widely among participants listening through the simulations, and neither tool could match the very poor temporal resolution of one listener whose gap-detection ability was far worse than typical published values. The speech-in-noise test revealed an even bigger problem: almost all normal-hearing participants listening through the simulations performed worse than the real listeners with hearing loss. People who live with hearing loss seem to adapt over time, learning to use whatever cues remain and possibly drawing on cognitive strategies. By contrast, a sudden, artificial “filter” imposed on normal ears offers no time for that long-term adjustment.
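The gap-detection task asks whether a listener can hear a brief silence in otherwise continuous noise. The summary does not describe the exact stimuli, so the generator below is a hypothetical sketch: broadband noise from Python’s standard `random` module, a gap centred in the stimulus, and short linear ramps (an assumed 1 ms) so the gap edges do not produce audible clicks. The function name and default parameters are illustrative only.

```python
import random


def gap_in_noise(duration_s: float, gap_ms: float, sample_rate: int = 44100,
                 ramp_ms: float = 1.0, seed: int = 0) -> list[float]:
    """Return uniform white noise in [-1, 1] with a silent gap centred in the stimulus."""
    rng = random.Random(seed)                 # seeded so the stimulus is reproducible
    n = int(round(duration_s * sample_rate))
    signal = [rng.uniform(-1.0, 1.0) for _ in range(n)]

    gap_len = int(round(gap_ms * sample_rate / 1000.0))
    ramp_len = int(round(ramp_ms * sample_rate / 1000.0))
    start = (n - gap_len) // 2
    end = start + gap_len

    # Silence the gap itself.
    for i in range(start, end):
        signal[i] = 0.0

    # Linear ramps on both sides: gain is small next to the gap and near 1 further away.
    for k in range(ramp_len):
        gain = (k + 1) / (ramp_len + 1)
        before, after = start - 1 - k, end + k
        if before >= 0:
            signal[before] *= gain
        if after < n:
            signal[after] *= gain
    return signal
```

In a real experiment the gap duration would be varied, for example with an adaptive procedure, to find the shortest gap a listener reliably detects; a simulation of poor temporal resolution would need to push that value up for normal-hearing listeners.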

What this means for future tools

Overall, this small pilot study suggests that modern hearing-loss simulations can reasonably reproduce how loud sounds feel and how blurred in frequency they become, at least for some individuals. However, they still struggle to capture timing deficits and the real-world challenge of understanding speech in noise. The work also highlights practical obstacles: recruiting enough people with specific types of hearing loss, choosing test designs that suit their comfort limits, and balancing the complexity of a model against the need for fast, usable software. The authors argue that more customizable simulations, tested with larger and more diverse groups of listeners with real hearing loss, are needed before such tools can reliably stand in for human volunteers. Even so, the approach they demonstrate here offers a concrete path toward developing better digital “test ears” to guide future hearing aids, accessible media, and public awareness.

Citation: Mourgela, A., Picinali, L. & Vicente, T. A pilot study to assess the challenges and efficacy of two hearing loss simulations. npj Acoust. 2, 9 (2026). https://doi.org/10.1038/s44384-026-00042-z

Keywords: hearing loss simulation, psychoacoustics, speech in noise, audio plugins, hearing research