Clear Sky Science

Correcting processing-in-memory multiply-accumulate arithmetic errors with LDPC

Why fixing in-memory math errors matters

Modern artificial intelligence chips squeeze more speed and efficiency out of hardware by performing calculations directly inside memory, instead of constantly shuttling data back and forth to separate processors. This “processing-in-memory” approach saves energy but introduces a serious problem: tiny electrical imperfections can flip stored bits or distort analog signals, quietly degrading the accuracy of tasks like image recognition. The paper describes a new way to automatically detect and correct these errors on the fly, helping future AI hardware stay both fast and trustworthy.

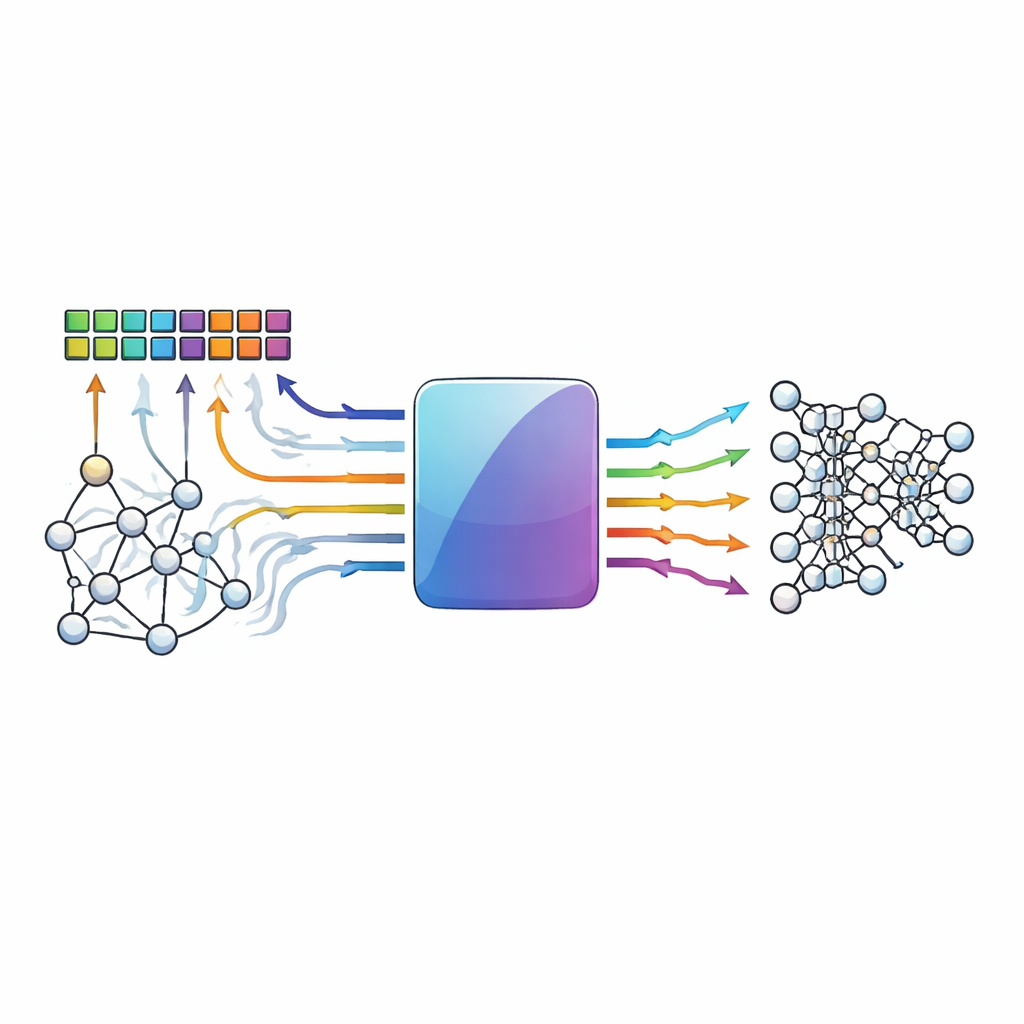

Computing where the data live

Conventional computers are slowed down by the need to move data between memory and the processor. Processing-in-memory designs avoid this bottleneck by carrying out multiply-and-accumulate operations—the backbone of neural networks—inside dense arrays of memory cells. Emerging devices such as resistive RAM and other memristive elements are especially attractive because they can store many values and perform analog-style arithmetic very efficiently. However, the same analog nature and device variability that make them powerful also make them noisy: thermal fluctuations, device mismatches, and voltage drops can all nudge stored values or computed results away from where they should be.

When tiny glitches pile up

In these in-memory arrays, many rows of cells are activated together and their contributions are summed along shared wires. As more rows participate, their individual imperfections add up, creating patterns of errors that are both frequent and complicated. Instead of a single wrong bit, designers often see multiple errors clustered in the same column of a matrix or spread across several columns in a way that defeats traditional error-correcting tricks. Standard codes usually assume simple error patterns and short word lengths; they may miss multi-bit glitches or lack entries in their lookup tables for rare but damaging combinations. As a result, model accuracy for deep neural networks can fall sharply once the underlying hardware becomes even modestly unreliable.
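
A back-of-the-envelope model shows why more active rows mean more errors. The sketch below rests on assumptions that are not from the paper: each cell's contribution deviates independently with a Gaussian spread `sigma` (in units of one output level), and a column result is misread once the summed deviation exceeds half a level. The function name and the chosen `sigma` are purely illustrative.

```python
import math

def misread_probability(rows, sigma):
    """Chance that summed noise pushes a column result past half a level.

    Assumes independent Gaussian deviations per cell (an illustrative
    model, not a measurement from the paper).
    """
    # Independent deviations add in variance, so the spread grows as sqrt(rows).
    total_sigma = sigma * math.sqrt(rows)
    # Two-sided Gaussian tail probability beyond +/- 0.5 of a level.
    return math.erfc(0.5 / (total_sigma * math.sqrt(2)))

if __name__ == "__main__":
    for rows in (4, 16, 64, 256):
        print(rows, misread_probability(rows, sigma=0.05))
```

Under these assumptions the misread probability rises steeply as the number of simultaneously active rows grows, which is the qualitative trend the paragraph above describes.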

A new kind of digital safety net

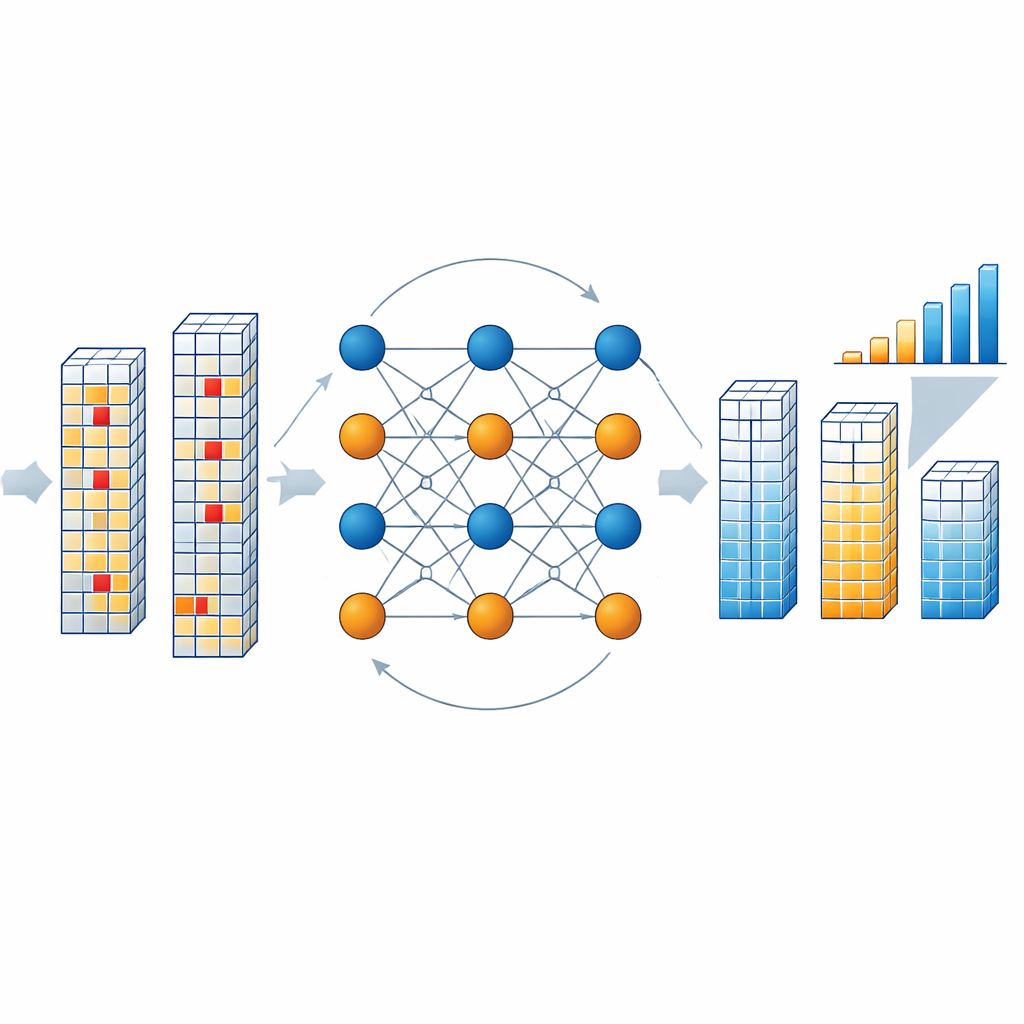

The authors introduce a non-binary low-density parity-check (NB-LDPC) code tailored specifically to processing-in-memory hardware. Rather than working only with zeros and ones, their scheme operates on small groups of bits treated as symbols in a mathematical structure called a finite field built from a prime number (here, three). This lets the same code protect both ordinary binary storage and multi-level or differential encodings commonly used in analog accelerators. The system appends a modest number of extra symbols—check symbols—to each block of data. During both normal memory reads and in-memory multiply-and-accumulate operations, the hardware computes results for the data and the check symbols together, so error detection is naturally woven into the computation.
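
The reason one code can cover both storage and computation is linearity: a weighted sum of valid codewords still satisfies the same parity relationships. The sketch below illustrates this with a tiny code over the integers modulo three. The matrix `P`, the function names, and the layout (check symbols stored as extra columns next to each row of weights) are assumptions made for illustration, not the code or mapping used in the paper.

```python
# Toy illustration only: a tiny systematic parity-check code modulo 3.
# P and every name below are hypothetical, not taken from the paper.
Q = 3  # number of symbol values

P = [
    [1, 2, 0, 1],
    [0, 1, 1, 2],
]  # 2 check symbols protecting 4 data symbols

def encode(data):
    """Append check symbols so that every parity sum is 0 modulo Q."""
    checks = [(-sum(p * d for p, d in zip(row, data))) % Q for row in P]
    return list(data) + checks

def syndrome(word):
    """Parity sums of a word; all zeros means the parity checks hold."""
    k = len(P[0])
    return [
        (sum(p * w for p, w in zip(row, word[:k])) + word[k + i]) % Q
        for i, row in enumerate(P)
    ]

def mac(inputs, rows):
    """Multiply-accumulate: weighted sum of the stored rows, per column."""
    width = len(rows[0])
    return [sum(a * r[j] for a, r in zip(inputs, rows)) for j in range(width)]

weights = [[1, 0, 2, 1], [2, 2, 0, 1], [0, 1, 1, 2]]
stored = [encode(w) for w in weights]   # data and check columns together
inputs = [3, 1, 2]

result = mac(inputs, stored)            # computed over all columns at once
clean = syndrome(result)                # parity still holds after the sum

corrupted = list(result)
corrupted[2] += 1                       # inject one arithmetic error
flagged = syndrome(corrupted)           # a nonzero entry reveals the error
```

Because the parity sums are linear, checking the accumulated output costs no more than checking a single stored word, which is why detection can ride along with the computation.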

How the correction engine works inside the chip

When the chip reads out a block of results, a dedicated decoder examines whether the combined data and check symbols obey the parity relationships defined by the code. If they do, the block is assumed to be clean. If not, the decoder launches an iterative process in which abstract “variable nodes” representing each symbol and “check nodes” representing parity conditions exchange probability messages. These messages estimate how likely each symbol is to take each of the allowed values, based on the observed outputs and the expected bit-flip error rate of the memory. The authors simplify this math-heavy reasoning using Manhattan distance approximations, which greatly cut hardware cost while keeping performance high. After a few rounds—typically three—the decoder converges on the most plausible corrected version of the result vector, without ever having to re-read memory or halt the computation stream.
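
The check-then-correct flow can be sketched in a few lines. Note that this toy does not implement the iterative message passing or the Manhattan distance approximation described above. It swaps in an exhaustive search for a single wrong symbol, which is only practical for very short words. The matrix `H` (a small code modulo three) and the function names are hypothetical.

```python
Q = 3  # number of symbol values (illustrative assumption)

# Hypothetical parity-check matrix of a tiny 4-symbol code modulo 3.
# Its columns are pairwise independent, so any single wrong symbol
# produces a syndrome that identifies it uniquely.
H = [
    [1, 1, 1, 0],
    [1, 2, 0, 1],
]

def syndrome(word):
    """Parity sums of a word; all zeros means every check is satisfied."""
    return [sum(h * w for h, w in zip(row, word)) % Q for row in H]

def decode(word):
    """Return (word, status) after a brute-force single-symbol repair."""
    if not any(syndrome(word)):
        return list(word), "clean"
    # Try every alternative value at every position until parity holds.
    for pos in range(len(word)):
        for value in range(Q):
            if value == word[pos]:
                continue
            trial = list(word)
            trial[pos] = value
            if not any(syndrome(trial)):
                return trial, "corrected"
    return list(word), "uncorrectable"

codeword = [1, 2, 0, 1]   # satisfies both parity checks
received = [1, 0, 0, 1]   # second symbol was disturbed

fixed, status = decode(received)
```

A search like this handles only one wrong symbol and can silently pick the wrong repair when several symbols are off, which is one reason a probabilistic, iterative decoder is attractive for the clustered errors seen in in-memory arrays.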

Silicon proof and impact on AI accuracy

To test the idea in practice, the team built a prototype chip in a 40-nanometer process that combines a resistive RAM array, lightweight analog-to-digital converters, and the new NB-LDPC decoder. With a configuration that protects 256 information symbols using 32 check symbols, the code keeps a high rate (256 of every 288 stored symbols carry data, about 0.89), and the decoder reaches a best measured power efficiency of roughly 88 terabits of corrected data per second per watt. Its area overhead is modest and can be further reduced by sharing one decoder among several memory macros. Simulations across many code sizes show that when protecting 1024 data symbols with 128 check symbols, the scheme can improve the bit error rate by nearly 60-fold. When applied to a ResNet-34 image classification model running on processing-in-memory hardware, the correction restores more than 20 percentage points of lost accuracy under challenging error conditions.
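
As a quick check on the storage cost, the snippet below works out the code rate for the two configurations mentioned, using the standard definition (data symbols divided by total symbols). The function names are illustrative, and the paper may report the figure with different rounding.

```python
def code_rate(data_symbols, check_symbols):
    """Fraction of stored symbols that carry data rather than parity."""
    return data_symbols / (data_symbols + check_symbols)

def storage_overhead(data_symbols, check_symbols):
    """Extra symbols stored per data symbol."""
    return check_symbols / data_symbols

rate_small = code_rate(256, 32)      # 256 data + 32 check symbols
rate_large = code_rate(1024, 128)    # 1024 data + 128 check symbols
overhead = storage_overhead(256, 32)

if __name__ == "__main__":
    print(f"rate (256+32):   {rate_small:.3f}")
    print(f"rate (1024+128): {rate_large:.3f}")
    print(f"overhead:        {overhead:.3f}")
```

Both configurations use one check symbol for every eight data symbols, so they share the same rate of 8/9 and differ only in block length.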

What this means for future AI chips

In plain terms, the work supplies processing-in-memory hardware with a robust “spell-checker” for its math, one that understands richer symbol sets and complex error patterns without slowing the data stream. By unifying protection for both stored data and on-the-fly calculations, and by demonstrating an efficient silicon implementation, the study shows that high-density, low-power in-memory accelerators do not have to sacrifice reliability. This kind of tailored error correction could become a key ingredient in making future neuromorphic and AI accelerators both energy-frugal and dependable enough for real-world applications, from mobile devices to large-scale data centers.

Citation: Shi, D., Fu, Y., Zhu, Y. et al. Correcting processing-in-memory multiply-accumulate arithmetic errors with LDPC. npj Unconv. Comput. 3, 14 (2026). https://doi.org/10.1038/s44335-026-00061-9

Keywords: processing-in-memory, error correction, LDPC codes, resistive RAM, neural network hardware