Clear Sky Science

LIMO: Low-power in-memory-annealer and matrix-multiplication primitive for edge computing

Smarter Routes and Leaner Chips

Every day, companies face puzzles like finding the shortest route for a delivery truck visiting thousands of stops or quickly scanning images to spot faces using a battery-powered camera. These problems strain today’s computers, which shuffle huge amounts of data back and forth between memory and processors. This paper introduces LIMO, a new kind of low-power computing block that keeps data in place while solving such hard route-planning tasks and running artificial intelligence (AI) models, making future edge devices faster and more energy-efficient.

Why Finding Good Routes Is So Hard

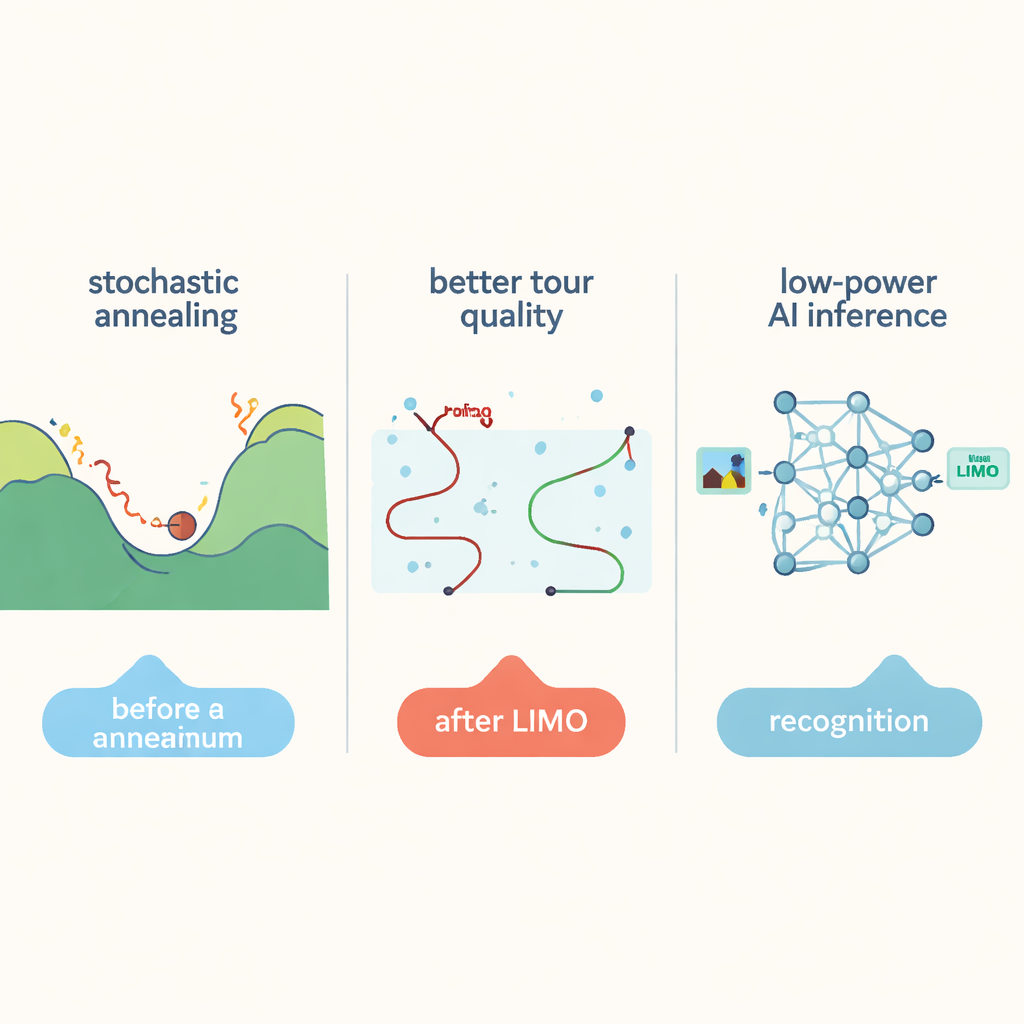

At the heart of this work is the famous Traveling Salesman Problem: given many cities, find the shortest tour that visits each city exactly once and returns to the start. For small maps, exact solvers can find the best answer. But as the number of cities grows into the tens of thousands, the number of possible tours explodes, and even powerful computers bog down. Heuristics like simulated annealing can search this vast space for good, though not perfect, tours by occasionally accepting worse intermediate routes to avoid getting stuck. However, on very large problems, standard approaches still explore the search space inefficiently and waste time moving data between memory and the CPU, hitting the so‑called “memory wall.”
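
To make the idea of "occasionally accepting worse routes" concrete, here is a minimal sketch of textbook simulated annealing with pair swaps. It is not the paper's method: the function names, the geometric cooling schedule, and all parameter values are illustrative assumptions.

```python
import math
import random

def tour_length(tour, pts):
    """Length of the closed tour, including the edge back to the start."""
    n = len(tour)
    return sum(math.dist(pts[tour[i]], pts[tour[(i + 1) % n]]) for i in range(n))

def accept(delta, temperature, rng):
    """Metropolis rule: always take improvements, sometimes take worse tours."""
    if delta <= 0:
        return True
    return rng.random() < math.exp(-delta / temperature)

def anneal(pts, steps=20000, t_start=1.0, t_end=1e-3, seed=0):
    """Textbook annealing over pair swaps (all parameters are assumptions)."""
    rng = random.Random(seed)
    tour = list(range(len(pts)))
    cur = tour_length(tour, pts)
    best, best_len = tour[:], cur
    for k in range(steps):
        # Geometric cooling: high temperature early, low temperature late.
        t = t_start * (t_end / t_start) ** (k / steps)
        i, j = rng.sample(range(len(tour)), 2)
        tour[i], tour[j] = tour[j], tour[i]      # propose swapping two cities
        new = tour_length(tour, pts)
        if accept(new - cur, t, rng):
            cur = new
            if cur < best_len:
                best, best_len = tour[:], cur
        else:
            tour[i], tour[j] = tour[j], tour[i]  # reject: undo the swap
    return best, best_len
```

Note that this sketch recomputes the full tour length after every swap, which is one simple illustration of why naive annealing becomes slow as the city count grows.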

A New Way to Search the Possibilities

The authors propose a new algorithm called Significance Weighted Annealed Insertion (SWAI) that reshapes how candidate tours are explored. Instead of constantly swapping pairs of cities, which scales poorly as the number of cities grows, SWAI builds tours step by step, inserting one new city at a time. At each step, it sometimes chooses the nearest next city (a greedy choice) and sometimes relies on controlled randomness that favors shorter candidate edges but does not completely exclude longer ones. This bias is tuned over time, starting more adventurous and becoming more conservative as the search progresses. Because the number of options examined at each step grows only linearly with the number of cities, the algorithm explores long-range improvements more effectively than traditional simulated annealing.
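
The sketch below illustrates the general flavor of such a search: a tour built one city at a time, mixing greedy picks with random picks biased toward short edges, with the bias sharpened over repeated rounds. It is a loose illustration, not the authors' SWAI algorithm. The exponential weighting, the fixed greedy probability `p_greedy`, the per-round temperature schedule, and every function name are assumptions made for this example.

```python
import math
import random

def closed_length(tour, pts):
    """Length of the closed tour, including the return edge."""
    n = len(tour)
    return sum(math.dist(pts[tour[i]], pts[tour[(i + 1) % n]]) for i in range(n))

def biased_construct(pts, temperature, p_greedy=0.5, rng=None):
    """Build one tour city by city (hypothetical stand-in, not the paper's rule)."""
    rng = rng or random.Random(0)
    unvisited = list(range(1, len(pts)))
    tour = [0]
    while unvisited:
        here = pts[tour[-1]]
        # One pass over the remaining cities: linear work per step.
        dists = [math.dist(here, pts[c]) for c in unvisited]
        dmin = min(dists)
        if rng.random() < p_greedy:
            nxt = unvisited[dists.index(dmin)]   # greedy: nearest city
        else:
            # Shorter edges get larger weights; longer ones stay possible.
            weights = [math.exp(-(d - dmin) / temperature) for d in dists]
            nxt = rng.choices(unvisited, weights=weights, k=1)[0]
        tour.append(nxt)
        unvisited.remove(nxt)
    return tour

def annealed_search(pts, rounds=50, t_start=1.0, t_end=0.01, seed=0):
    """Repeat the construction while lowering the temperature each round."""
    rng = random.Random(seed)
    best, best_len = None, float("inf")
    for r in range(rounds):
        t = t_start * (t_end / t_start) ** (r / max(1, rounds - 1))
        tour = biased_construct(pts, t, rng=rng)
        length = closed_length(tour, pts)
        if length < best_len:
            best, best_len = tour, length
    return best, best_len
```

With `p_greedy=1.0` the construction reduces to the plain nearest-neighbor heuristic. A high temperature makes the random picks nearly uniform, and a low temperature makes them nearly greedy.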

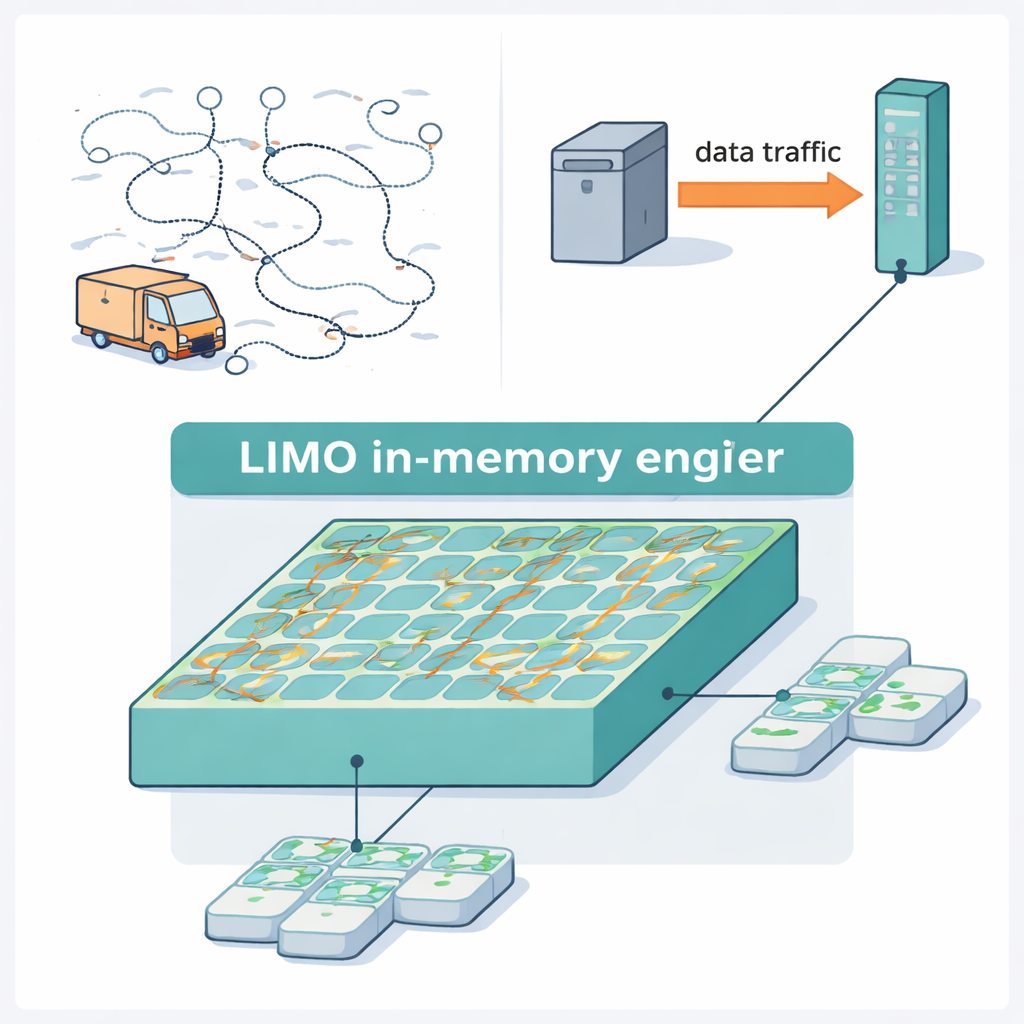

Computing Inside Memory With Built-In Randomness

LIMO turns this algorithm into hardware by tightly co-designing the circuitry and the search method. At its core is a modified memory array that stores both the current tour and the distances between cities, and performs the key update steps without constantly talking to a separate processor. Random choices needed by the algorithm come from tiny magnetic devices called spin-transfer-torque magnetic tunnel junctions, which naturally flip their state in an unpredictable way when driven with the right current. The designers convert this physical randomness into digital bits and use simple comparisons to implement the probabilistic decisions in the algorithm. Because most operations stay digital and occur directly inside memory, the system avoids bulky converters and fragile analog circuits, saving both power and area.
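
One common way to turn random bits into a probabilistic yes/no decision with only a digital comparison is sketched below. Here a software random number generator stands in for the magnetic devices, and the bits are assumed to be unbiased and independent. The function names and the thresholding scheme are assumptions for illustration, not a description of the LIMO circuit.

```python
import random

def random_word(n_bits, bit_source):
    """Pack n_bits calls of bit_source (each returning 0 or 1) into an integer."""
    word = 0
    for _ in range(n_bits):
        word = (word << 1) | bit_source()
    return word

def probabilistic_decision(p, n_bits, bit_source):
    """Return True with probability about p, using one integer comparison.

    The random word is uniform on [0, 2**n_bits), so it falls below the
    threshold with probability threshold / 2**n_bits.
    """
    threshold = int(p * (1 << n_bits))
    return random_word(n_bits, bit_source) < threshold

# Software stand-in for a physical random bit (assumption: fair coin).
rng = random.Random(42)
def software_bit():
    return rng.getrandbits(1)

accepted = probabilistic_decision(0.25, 8, software_bit)
```

More bits per word give finer control over the probability: with 8 bits, the achievable probabilities are multiples of 1/256.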

Breaking Big Problems Into Pieces

To tackle truly large route-planning tasks with up to 85,900 cities, the system uses a divide-and-conquer strategy. A lightweight geometric method groups nearby cities into clusters until each cluster is small enough to fit into a single LIMO block. The hardware solves many of these sub-routes in parallel, then stitches them back together into a complete tour. Extra refinement steps further polish the global route: segments of the tour are re-optimized by the hardware, and a classic “2-opt” cleanup on a regular processor removes remaining crossing paths. In tests on standard benchmarks, this combined approach produced higher-quality tours than previous specialized annealing machines, while reaching answers up to about five times faster on the largest problem.
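
Two pieces of this pipeline can be sketched in a few lines. The clustering below uses recursive bisection along the wider axis, which is only a stand-in, since this summary does not specify the geometric method. The `two_opt` function is the standard 2-opt heuristic. The function names and the `max_size` parameter are assumptions, and the stitching of sub-routes is not shown.

```python
import math

def split_clusters(indices, pts, max_size):
    """Recursively halve the point set until each cluster has <= max_size cities."""
    if len(indices) <= max_size:
        return [indices]
    xs = [pts[i][0] for i in indices]
    ys = [pts[i][1] for i in indices]
    # Cut across the wider dimension so clusters stay compact.
    axis = 0 if max(xs) - min(xs) >= max(ys) - min(ys) else 1
    ordered = sorted(indices, key=lambda i: pts[i][axis])
    mid = len(ordered) // 2
    return (split_clusters(ordered[:mid], pts, max_size)
            + split_clusters(ordered[mid:], pts, max_size))

def two_opt(tour, pts):
    """Reverse tour segments for as long as a reversal shortens the closed tour."""
    n = len(tour)
    improved = True
    while improved:
        improved = False
        for i in range(n - 1):
            for j in range(i + 2, n):
                if i == 0 and j == n - 1:
                    continue  # the two edges share a city; nothing to gain
                a, b = pts[tour[i]], pts[tour[i + 1]]
                c, d = pts[tour[j]], pts[tour[(j + 1) % n]]
                # Replace edges (a,b) and (c,d) with (a,c) and (b,d).
                gain = (math.dist(a, b) + math.dist(c, d)
                        - math.dist(a, c) - math.dist(b, d))
                if gain > 1e-12:
                    tour[i + 1:j + 1] = tour[i + 1:j + 1][::-1]
                    improved = True
    return tour
```

On a unit square, for example, `two_opt` turns the self-crossing tour `[0, 2, 1, 3]` into the perimeter tour `[0, 1, 2, 3]`.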

From Hard Routes to Efficient AI

LIMO is not limited to route planning. The same memory array can also act as a building block for neural networks by performing vector–matrix multiplications, the core operation behind image and pattern recognition. Instead of using power-hungry, precise converters to read analog signals, LIMO relies on very simple sensing circuits that capture only the sign of the accumulated signal, and compensates for this roughness by training the networks in a hardware-aware way. On image classification and face detection tasks, these networks reached accuracy close to standard software models, while reducing energy use and response time compared with conventional compute-in-memory chips. For everyday users, this means that cameras, drones, and other edge devices could one day solve complex planning tasks and run AI models longer on a battery, all thanks to smarter searching and computing directly where the data lives.
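
The sign-only readout can be pictured as a vector-matrix multiplication whose outputs are reduced to +1 or -1. The sketch below shows only this forward computation in plain Python. The function name, the column-wise weight layout, and the choice to map an exact zero to +1 are assumptions, and the hardware-aware training step is not shown.

```python
def sign_vmm(x, weight_columns):
    """Multiply input vector x by a matrix, keeping only the sign of each output.

    weight_columns is a list of columns; each column has one weight per input.
    """
    outputs = []
    for column in weight_columns:
        acc = sum(xi * wi for xi, wi in zip(x, column))  # accumulated signal
        outputs.append(1 if acc >= 0 else -1)            # 1-bit readout
    return outputs

x = [1, -1, 1]
weights = [
    [1, 1, 1],     # accumulates to  1 -> +1
    [-1, 1, -1],   # accumulates to -3 -> -1
    [1, -1, -1],   # accumulates to  1 -> +1
]
layer_out = sign_vmm(x, weights)
```

Because each output is already +1 or -1, it can be fed directly into another `sign_vmm` call as the input to the next layer.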

Citation: Holla, A., Chatterjee, S., Sen, S. et al. LIMO: Low-power in-memory-annealer and matrix-multiplication primitive for edge computing. npj Unconv. Comput. 3, 10 (2026). https://doi.org/10.1038/s44335-026-00054-8

Keywords: in-memory computing, traveling salesman problem, hardware annealing, low-power AI, edge computing