
Brain Inspired Probabilistic Occupancy Grid Mapping with Vector Symbolic Architectures

Robots That See the World as a Patchwork

Every self-driving car, warehouse robot, or home vacuum needs a quick mental picture of its surroundings: what’s solid, what’s empty, and what’s still unknown. This paper presents a new way for robots to build that picture—called an occupancy grid map—that borrows ideas from how brains might represent information, aiming to keep maps accurate while making them far faster and more efficient to compute.

Turning Raw Sensor Pings into a World Map

Robots often use laser scanners or other distance sensors to probe the world as they move, collecting point clouds of where objects are and where space is free. A classic technique, occupancy grid mapping, divides the environment into tiny cells, like pixels on a screen, and assigns each one a probability of being occupied. Traditional methods treat this as a heavy statistical problem, carefully tracking uncertainty but consuming lots of time and memory. Newer neural-network methods are faster and can fill in gaps, but they behave like black boxes, can be hard to trust in safety-critical settings, and usually must be retrained for each new environment.
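As a point of reference, the classic per-cell bookkeeping can be sketched in a few lines. This is the common textbook log-odds update, not the method proposed in the paper, and the sensor-model values `p_hit` and `p_miss` are illustrative assumptions rather than figures from the source.

```python
import math

def logit(p):
    """Convert a probability to log-odds."""
    return math.log(p / (1.0 - p))

def update_cell(log_odds, hit, p_hit=0.7, p_miss=0.4):
    """Add the evidence from one sensor reading to a cell's log-odds.

    p_hit and p_miss are assumed inverse-sensor-model values: the
    probability that the cell is occupied given a return (hit) or a
    beam passing through it (miss).
    """
    return log_odds + logit(p_hit if hit else p_miss)

def probability(log_odds):
    """Convert log-odds back to an occupancy probability."""
    return 1.0 / (1.0 + math.exp(-log_odds))

cell = 0.0  # log-odds 0 means probability 0.5, i.e. "unknown"
for hit in [True, True, False, True]:
    cell = update_cell(cell, hit)

p_occupied = probability(cell)  # three hits outweigh one miss
```

Each cell here is updated independently. Methods that also model how neighboring cells relate to one another are where much of the extra time and memory goes.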

A Brain-Inspired Middle Path

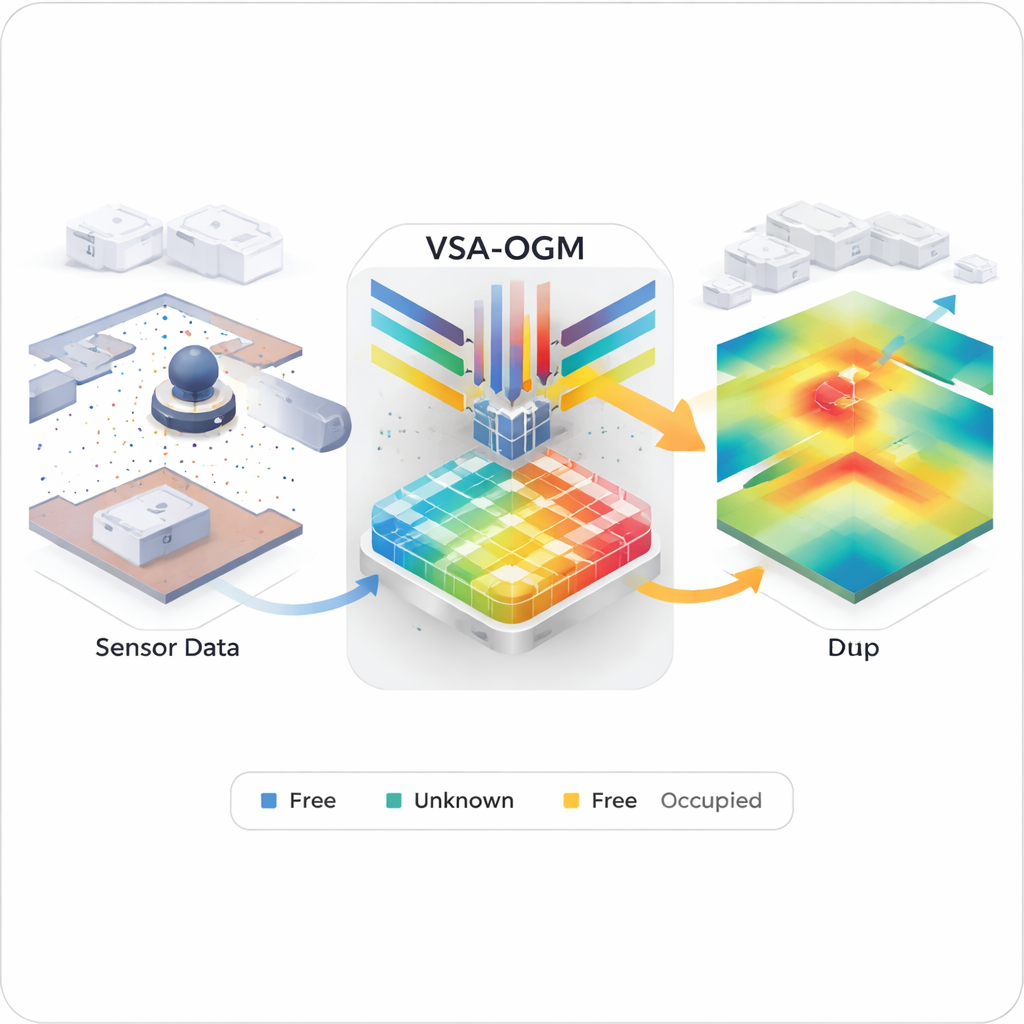

The authors propose a "neuro-symbolic" compromise called VSA-OGM, short for Vector Symbolic Architecture Occupancy Grid Mapping. Instead of storing every detail in a dense grid or burying structure inside millions of neural-network weights, the method encodes sensor readings as very long vectors in a high-dimensional space—a mathematical idea inspired by theories of how groups of neurons might represent concepts and locations. The environment is split into tiles, and each tile has vector memories for "occupied" and "empty" evidence. As the robot moves and collects point clouds, each observation is converted into one of these hyperdimensional vectors and bundled into the appropriate tile memory, efficiently accumulating information over time.
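The encode-and-bundle idea can be illustrated with a minimal sketch. It assumes one common way of turning coordinates into hypervectors (random-phase complex vectors, sometimes called fractional power encoding); the source does not say which encoding the authors use, and `DIM`, `TILE_SIZE`, `encode`, and `add_observation` are hypothetical names and values chosen for illustration.

```python
import cmath
import math
import random

DIM = 512         # hypervector length (assumed value)
TILE_SIZE = 10.0  # tile edge length in metres (assumed value)

rng = random.Random(0)
# One random phase per dimension and axis; these play the role of
# fixed "axis vectors" that every observation is encoded against.
PHASES = [(rng.uniform(-math.pi, math.pi), rng.uniform(-math.pi, math.pi))
          for _ in range(DIM)]

def encode(x, y):
    """Map a 2D point to a unit-magnitude complex hypervector."""
    return [cmath.exp(1j * (px * x + py * y)) for px, py in PHASES]

tiles = {}  # (i, j) -> {"occupied": memory, "empty": memory}

def add_observation(x, y, label):
    """Bundle (element-wise add) one encoded point into its tile memory."""
    key = (math.floor(x / TILE_SIZE), math.floor(y / TILE_SIZE))
    tile = tiles.setdefault(key, {"occupied": [0j] * DIM, "empty": [0j] * DIM})
    tile[label] = [m + v for m, v in zip(tile[label], encode(x, y))]
    return key

def similarity(memory, x, y):
    """Average real part of the inner product between a memory and a location."""
    probe = encode(x, y)
    return sum((m * p.conjugate()).real for m, p in zip(memory, probe)) / DIM

for point in [(1.0, 2.0), (1.1, 2.0), (1.0, 2.1)]:
    key = add_observation(*point, "occupied")

memory = tiles[key]["occupied"]
near = similarity(memory, 1.0, 2.0)  # close to the three bundled points
far = similarity(memory, 8.0, 7.5)   # same tile, but far from any of them
```

The memory stays the same size however many points are bundled in, which is the source of the memory savings. The cost is that each stored point adds a little noise to every query.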

From Noisy Vectors to Clear Maps

Of course, bundling many signals into a single high-dimensional memory risks creating a noisy, hard-to-read blob. VSA-OGM addresses this with a carefully designed decoding pipeline. First, it compares the tile memories against vectors that represent positions in space, producing rough "quasi-probabilities" for occupancy. Then it applies a series of non-linear steps and an information-theory tool, Shannon entropy, to tease out where the data strongly supports one class over the other. Finally, it uses a softmax function to convert these signals into true probabilities and combines them into a final map showing the signed difference between "occupied" and "empty". The result is a smooth occupancy grid that interpolates across sparsely measured regions while staying fully probabilistic and interpretable.
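A simplified sketch of the last part of that pipeline is shown below. It covers only the softmax, the Shannon entropy, and the signed difference for a single cell, and it does not reproduce the authors' exact sequence of non-linear steps, which the summary above does not specify. The input scores and the function `decode_cell` are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def shannon_entropy(probs):
    """Entropy in bits; 1.0 means a two-class distribution is maximally uncertain."""
    return -sum(p * math.log2(p) for p in probs if p > 0.0)

def decode_cell(occupied_score, empty_score):
    """Turn two quasi-probability scores into a signed occupancy value.

    The scores stand in for the similarity of a location to the tile's
    "occupied" and "empty" memories (assumed inputs).
    """
    p_occ, p_empty = softmax([occupied_score, empty_score])
    return {
        "signed": p_occ - p_empty,  # +1 occupied, -1 empty, 0 unknown
        "entropy_bits": shannon_entropy([p_occ, p_empty]),
    }

wall = decode_cell(2.9, 0.1)     # strong evidence for "occupied"
unknown = decode_cell(0.2, 0.2)  # evidence balanced, so no preference
```

Low entropy marks cells where one class clearly dominates, while entropy near one bit marks cells where the map should stay undecided.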

Faster Maps for One Robot—or Many

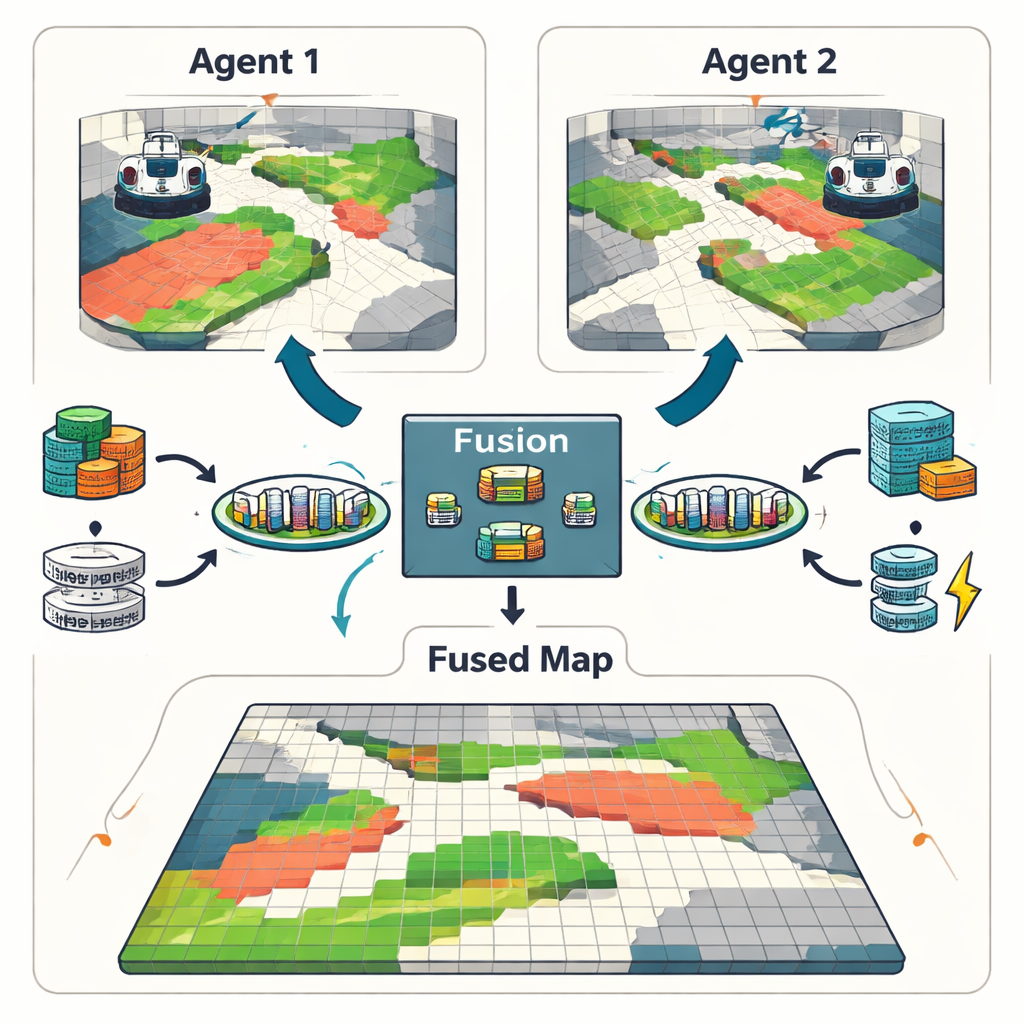

To test their approach, the researchers ran VSA-OGM on several simulated and real datasets, including a classic indoor robot map and a large-scale driving scenario. Against strong traditional baselines that carefully model spatial correlations, VSA-OGM achieved similar mapping accuracy while using about 400 times less memory and running up to 45 times faster. Compared with streamlined traditional methods that drop some statistical detail, it still matched their accuracy while cutting latency by roughly a factor of five. Against a neural-network system that requires hours of training and millions of parameters, VSA-OGM delivered comparable mapping quality with no pretraining and cut per-frame processing time by up to a factor of six. The framework also supports multiple robots: vector memories from different agents can simply be added together, producing fused maps with little loss of information.
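The multi-robot fusion step lends itself to a short sketch. It assumes both robots share the same encoding and the same tile coordinate frame, and it reuses a random-phase encoding that is an illustrative choice rather than a detail confirmed by the source. `build_memory` and `fuse` are hypothetical helper names.

```python
import cmath
import math
import random

DIM = 256  # hypervector length (assumed value)
rng = random.Random(1)
# Encoding phases that both robots are assumed to share.
PHASES = [(rng.uniform(-math.pi, math.pi), rng.uniform(-math.pi, math.pi))
          for _ in range(DIM)]

def encode(x, y):
    """Map a 2D point to a unit-magnitude complex hypervector."""
    return [cmath.exp(1j * (px * x + py * y)) for px, py in PHASES]

def build_memory(points):
    """Bundle a list of points into one tile memory by element-wise addition."""
    memory = [0j] * DIM
    for x, y in points:
        memory = [m + v for m, v in zip(memory, encode(x, y))]
    return memory

def fuse(memory_a, memory_b):
    """Fuse two robots' memories for the same tile by adding them."""
    return [a + b for a, b in zip(memory_a, memory_b)]

robot_a = [(0.5, 0.5), (0.6, 0.5)]  # points seen by the first robot
robot_b = [(3.0, 1.0)]              # points seen by the second robot

fused = fuse(build_memory(robot_a), build_memory(robot_b))
single = build_memory(robot_a + robot_b)

# Largest element-wise gap between the fused memory and one built
# by a single robot that saw every point.
max_gap = max(abs(f - s) for f, s in zip(fused, single))
```

Because bundling is plain addition, the fused memory in this idealized setting matches what a single robot would have built from all the points. The sketch does not model the small information loss the study reports, which may come from parts of the pipeline left out here.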

What This Means for Everyday Robots

In plain terms, this work shows that robots don’t have to choose between slow-but-trustworthy math and fast-but-opaque neural networks when building maps of the world. By using brain-inspired high-dimensional vectors, VSA-OGM keeps the clear probabilistic structure of classic methods while reaching the speed and efficiency needed for real-time operation on limited hardware. There are still challenges—such as handling extremely uneven data and very dense environments—but the approach points toward future robots that can safely and reliably understand their surroundings, even when running on modest onboard computers.

Citation: Snyder, S., Capodieci, A., Gorsich, D. et al. Brain Inspired Probabilistic Occupancy Grid Mapping with Vector Symbolic Architectures. npj Unconv. Comput. 3, 13 (2026). https://doi.org/10.1038/s44335-026-00052-w

Keywords: occupancy grid mapping, autonomous robots, vector symbolic architectures, probabilistic mapping, LiDAR sensing