Clear Sky Science

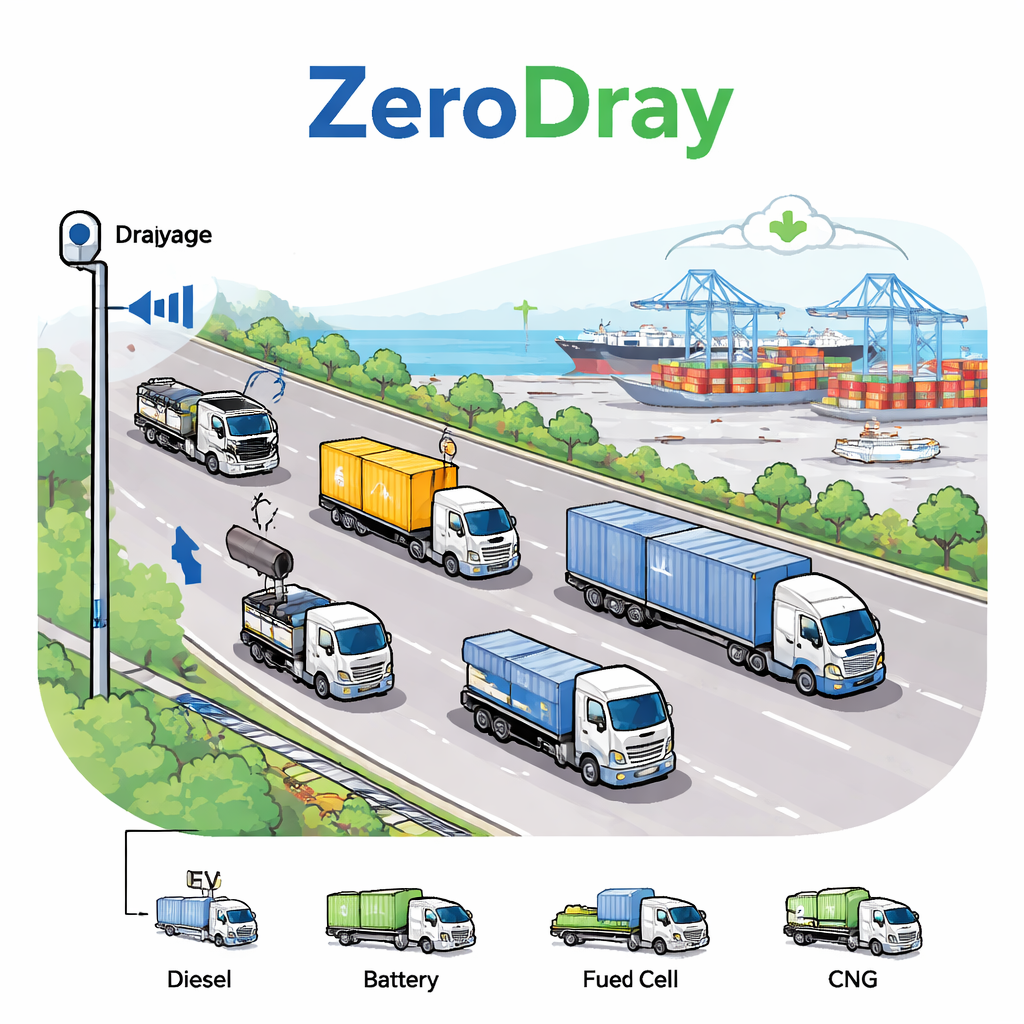

Domain informed vision language model for sustainable freight with drayage truck powertrain and cargo classification

Cleaner ports, smarter trucks

Ports move the goods that stock our stores, but the short‑haul trucks that shuttle containers in and out of terminals are also major polluters. This study shows how a new kind of artificial intelligence can watch these “drayage” trucks with roadside cameras and automatically tell which ones are still burning diesel and which use cleaner technologies—without any manual labeling of images. That kind of automated insight could help regulators, planners, and local communities track progress toward cleaner air around some of the world’s busiest ports.

Why port trucks matter for climate and health

In the United States, transportation is the biggest single source of greenhouse gas emissions, and heavy trucks punch far above their weight: they are a small share of vehicles but a large share of emissions. Nowhere is this more visible than around the Ports of Los Angeles and Long Beach, a pair of neighboring harbors that together handle about 40 percent of U.S. container imports and are also Southern California’s largest fixed source of air pollution. Drayage trucks—the rigs that haul containers between ports, rail yards, and warehouses—generate much of this pollution despite traveling relatively short, predictable routes. California has therefore ordered that by 2035, all port drayage trucks must be zero‑emission, relying on battery‑electric, hydrogen fuel cell, or cleaner gas technologies instead of conventional diesel.

Seeing what powers a truck and what it carries

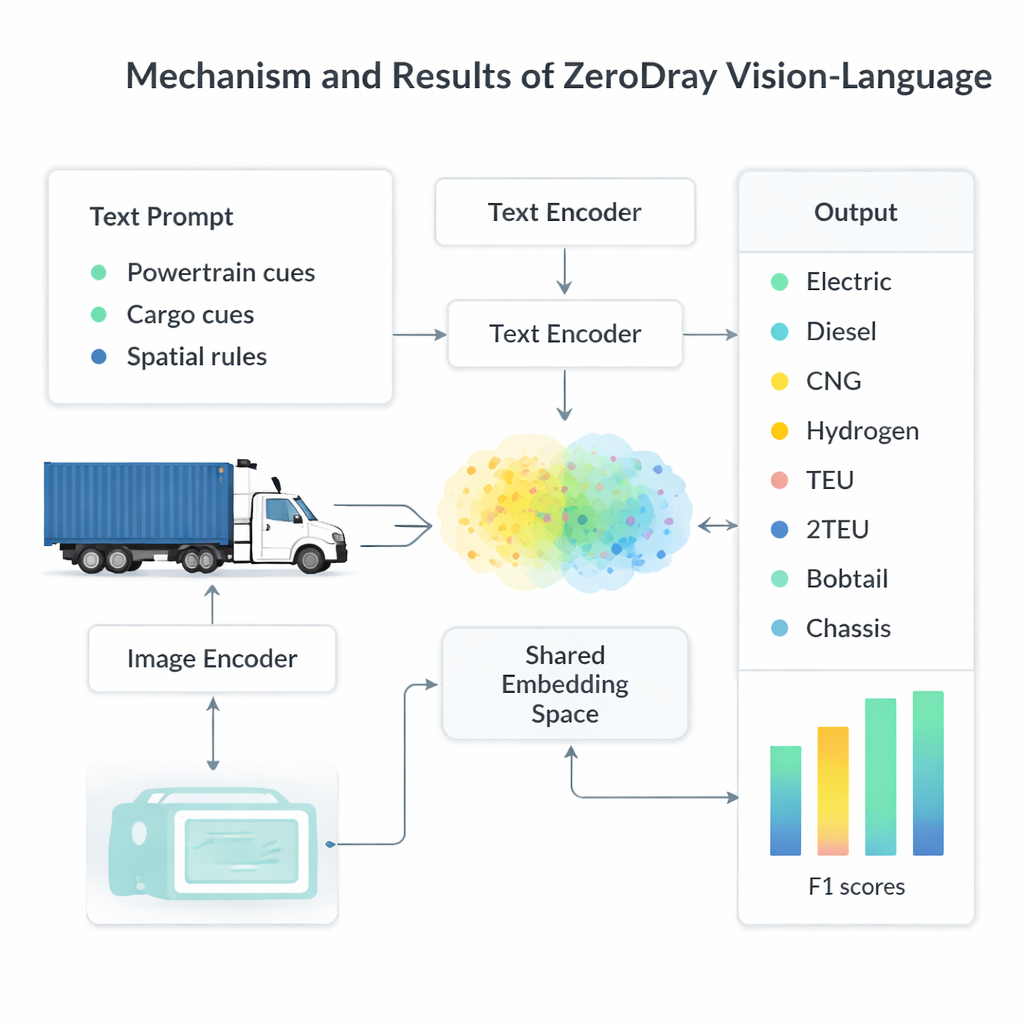

To know whether these policies are working, officials need to measure what kinds of trucks actually show up at port gates and highways: Are they diesel or electric? Are they hauling a full container, an empty frame, or no trailer at all? Traditionally, answering such questions requires building large, hand‑labeled image collections and training task‑specific models. The authors propose a different route, called ZeroDray, that uses a vision‑language model—an AI system that can understand both pictures and text—without any additional training. The model is given roadside camera images of passing trucks along a corridor serving the Los Angeles and Long Beach ports and must classify both the powertrain (diesel, electric, compressed natural gas, or hydrogen) and the cargo setup (single 20‑foot container, longer 40‑foot equivalent, empty chassis, or bobtail truck with no trailer).

Teaching AI to think like a truck expert

Out of the box, vision‑language models are generalists: they know a bit about everything from the internet but lack deep knowledge of niche topics like drayage trucking. ZeroDray bridges that gap by feeding the model carefully crafted prompts that encode expert hints. For powertrains, the prompts describe visual clues such as exhaust stacks and large fuel tanks for diesel, cylinder tanks for CNG, hydrogen tanks for fuel‑cell trucks, or the absence of exhaust hardware and EV badges for electric rigs. For cargo, the prompts tell the model to reason about the geometry of the scene: Does the container’s length noticeably exceed its height and the cab length, as in a long, 40‑foot load, or is it closer in size, as in a shorter 20‑foot container? By asking the AI to work through these cues step by step and to explain its reasoning in plain language, the framework makes its decisions more transparent and easier to check.
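The idea of encoding expert hints into a prompt can be sketched in a few lines of Python. This is a minimal illustration, not the authors' actual prompt: the cue tables paraphrase the hints described above, and the names `POWERTRAIN_CUES`, `CARGO_CUES`, and `build_prompt`, along with the exact wording of each instruction, are assumptions made for this example. The sketch only assembles the prompt text; sending it to a vision‑language model together with an image is left out because the article does not say which model or interface was used.

```python
# Hypothetical cue tables paraphrasing the expert hints described in the article.
# The authors' real prompt wording is not given in this summary.
POWERTRAIN_CUES = {
    "diesel": "exhaust stacks and large fuel tanks",
    "CNG": "cylinder-shaped gas tanks",
    "hydrogen": "hydrogen storage tanks",
    "electric": "no exhaust hardware, EV badges",
}

CARGO_CUES = {
    "20-foot container": "container length is close to the cab length",
    "40-foot container": "container length clearly exceeds its height and the cab length",
    "empty chassis": "trailer frame with no container on it",
    "bobtail": "tractor only, no trailer attached",
}


def build_prompt(powertrain_cues, cargo_cues):
    """Assemble a step-by-step classification prompt from expert cue tables."""
    lines = [
        "You are inspecting a roadside camera image of a drayage truck.",
        "Step 1: Identify the powertrain using these visual cues:",
    ]
    lines += [f"- {name}: {cue}" for name, cue in powertrain_cues.items()]
    lines.append("Step 2: Identify the cargo configuration using these spatial cues:")
    lines += [f"- {name}: {cue}" for name, cue in cargo_cues.items()]
    lines.append(
        "Step 3: Explain your reasoning in plain language, "
        "then give one powertrain label and one cargo label."
    )
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt(POWERTRAIN_CUES, CARGO_CUES))
```

Keeping the cues in plain dictionaries means a domain expert can revise a hint, or add a new truck type, without touching any model code, which is the practical appeal of a training‑free approach.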

Putting the system to the test on real port traffic

The researchers evaluated ZeroDray on 443 truck images captured over two days in February 2025 by a fixed roadside camera near the ports. Human observers provided ground‑truth labels for each truck’s powertrain and cargo configuration. The researchers then compared ZeroDray to a simpler baseline that supplied only bare‑bones class names to the same underlying model. With minimal guidance, the baseline already recognized some straightforward cases, such as diesel trucks with no trailer. But it struggled badly when distinctions depended on small visual differences or on spatial layout, often confusing diesel and electric tractors or mixing up short and long containers. Once the expert‑informed visual cues and spatial rules were added, accuracy jumped dramatically. Powertrain classification reached nearly 100 percent across diesel, electric, hydrogen, and CNG. Cargo recognition, especially the tricky distinction between single 20‑foot and longer 40‑foot‑equivalent containers, improved from about 50 percent correct to roughly 98 percent. Overall, across all 11 combined powertrain‑cargo categories, the enhanced ZeroDray framework achieved an average F1 score of 99 percent, far outpacing the baseline.
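For readers unfamiliar with the F1 score, the sketch below shows one common way to compute an averaged F1 across categories. It assumes that "average F1" means an unweighted (macro) average over classes, which this summary does not specify. The function name `macro_f1` and the toy labels are invented for illustration and are not data from the study.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores (assumed averaging scheme)."""
    labels = sorted(set(y_true) | set(y_pred))
    pairs = list(zip(y_true, y_pred))
    scores = []
    for label in labels:
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        precision = tp / (tp + fp) if (tp + fp) else 0.0
        recall = tp / (tp + fn) if (tp + fn) else 0.0
        # F1 is the harmonic mean of precision and recall.
        if precision + recall:
            scores.append(2 * precision * recall / (precision + recall))
        else:
            scores.append(0.0)
    return sum(scores) / len(scores)


# Toy labels made up for this example (combined powertrain|cargo categories).
truth = ["diesel|bobtail", "diesel|bobtail", "electric|40ft", "electric|40ft"]
guess = ["diesel|bobtail", "electric|40ft", "electric|40ft", "electric|40ft"]

if __name__ == "__main__":
    print(f"macro F1 = {macro_f1(truth, guess):.3f}")
```

Because every category counts equally in a macro average, a rare class such as hydrogen trucks weighs as much as the far more common diesel trucks, so a high score cannot be reached by getting only the common cases right.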

What this means for cleaner freight corridors

For non‑specialists, the key takeaway is that a general‑purpose AI, when guided with the right expert cues, can reliably “look” at roadside camera images and tell not only how trucks are loaded but also what powers them, without any expensive custom training. That capability could give port authorities and regulators a powerful new tool for monitoring the shift from diesel to zero‑emission drayage trucks, spotting where new charging or hydrogen stations are most needed, and reducing wasteful empty trips. While the current study used a modest dataset from a single camera under ideal conditions, the authors argue that the same strategy can be expanded to other freight hubs and more varied environments. If scaled up responsibly, systems like ZeroDray could make the invisible details of freight activity visible, helping communities and policymakers push freight corridors toward cleaner, more efficient operation.

Citation: Feng, G., Li, Y., Tok, A.Y.C. et al. Domain informed vision language model for sustainable freight with drayage truck powertrain and cargo classification. npj. Sustain. Mobil. Transp. 3, 15 (2026). https://doi.org/10.1038/s44333-026-00086-4

Keywords: zero-emission trucks, vision-language models, port drayage, freight emissions, sustainable transport