Clear Sky Science

A view-flexible deep learning framework for automated analysis of 2D echocardiography

Why heart scans need a helping hand

Ultrasound scans of the heart are a cornerstone of modern cardiology, but extracting reliable information from them usually takes years of training. In busy clinics, emergency rooms, and remote settings, that expertise is not always available, which can delay care for people with heart problems. This study explores whether artificial intelligence (AI) can interpret common heart ultrasound videos recorded from several standard viewing angles, making high‑quality heart assessments possible even when images are captured by less‑experienced users with handheld devices.

A new way to read moving heart pictures

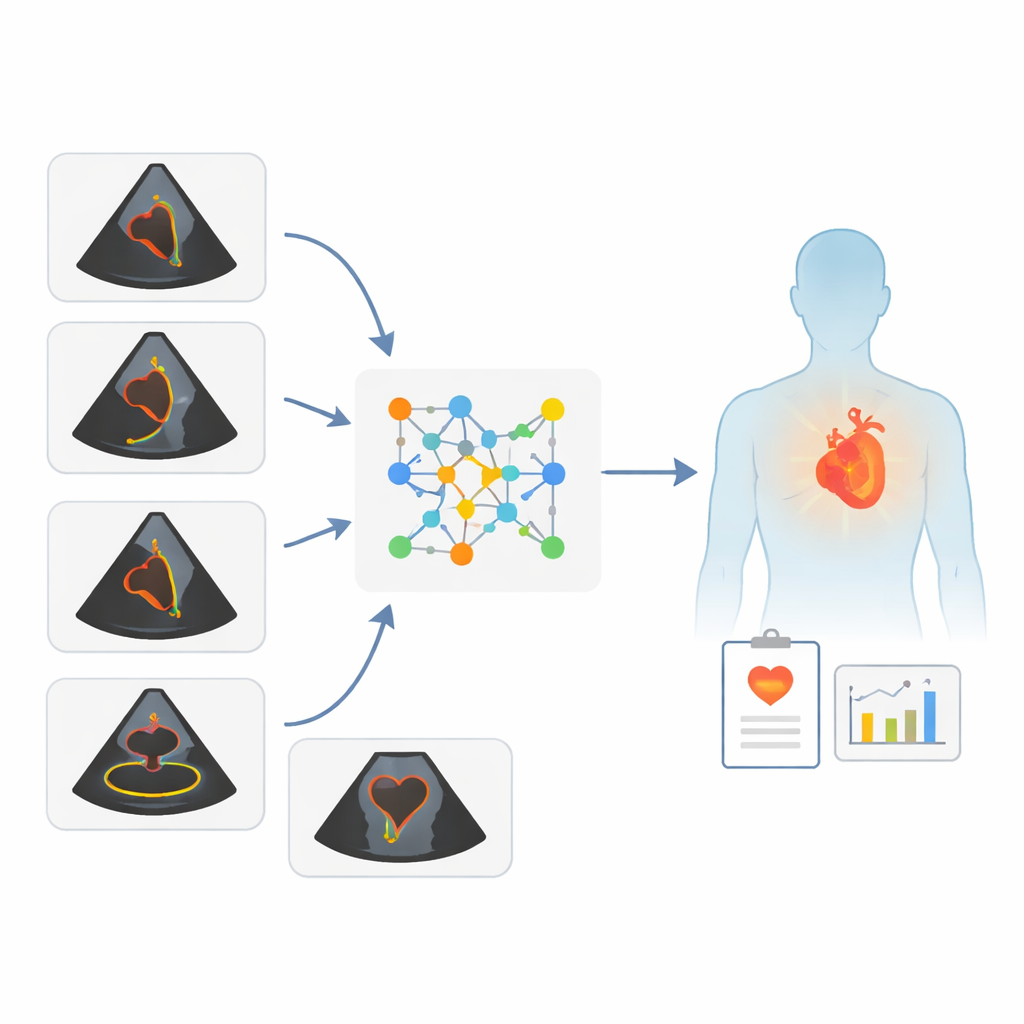

The researchers built a deep‑learning framework that can analyze short video clips from two‑dimensional echocardiograms—moving black‑and‑white images of the beating heart. Unlike traditional computer tools that expect a very specific camera angle, this system accepts several common views as long as the main pumping chamber, the left ventricle, appears in the picture. From these varied views, the AI estimates three things: how well the heart pumps blood (the left ventricular ejection fraction, or LVEF), the patient’s age, and the patient’s sex. The key idea is to free ultrasound from rigid view requirements so that good measurements can still be made even when images are less than perfect.

Testing the system on many kinds of patients

To see how well the framework works, the team trained it on tens of thousands of standard echocardiograms from Mayo Clinic sites in Minnesota and Wisconsin. They then tested it on several independent groups: additional patients from Arizona and Florida, a large public dataset from Stanford, and two handheld ultrasound collections. One handheld set came from patients who had both a standard machine exam and a handheld scan during the same visit. The other came from hospitals in the United States and Israel, where handheld images were recorded both by expert sonographers and by novices (nurses and medical residents who had completed a brief training course and used real‑time guidance software).

How accurate were the AI’s heart and body estimates?

Across these diverse datasets, the AI’s LVEF estimates closely tracked the values calculated by expert readers, differing by less than ten percentage points in the vast majority of cases. It also performed well on a key practical question: deciding whether the heart’s pumping was clearly reduced or not. For both standard machines and handheld devices, the system’s performance in flagging hearts with significantly low LVEF was similar to that of human specialists. Importantly, results stayed strong when images were captured with handheld scanners, and even when those scanners were operated by novices using guidance software. In only a small minority of cases did LVEF estimates from novice‑acquired clips differ meaningfully from those based on expert‑acquired clips of the same patient.
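
To make the comparison concrete: LVEF is conventionally the percentage of blood the left ventricle pushes out with each beat, computed as 100 × (end-diastolic volume - end-systolic volume) / end-diastolic volume. The short Python sketch below computes that quantity and one simple way of summarizing how closely AI estimates track expert values: the average absolute difference and the share of cases within ten percentage points. It is an illustration under stated assumptions, not the study's evaluation code; the function names and all numbers are hypothetical.

```python
from typing import Sequence, Tuple


def lvef_percent(edv_ml: float, esv_ml: float) -> float:
    """Ejection fraction (%) from end-diastolic and end-systolic volumes (mL)."""
    if edv_ml <= 0:
        raise ValueError("end-diastolic volume must be positive")
    # Share of the filled ventricle's volume that is ejected in one beat.
    return 100.0 * (edv_ml - esv_ml) / edv_ml


def agreement_summary(
    ai_lvef: Sequence[float],
    reader_lvef: Sequence[float],
    tolerance: float = 10.0,
) -> Tuple[float, float]:
    """Return (mean absolute difference, share of cases within `tolerance` points).

    Hypothetical helper for illustration; not taken from the study.
    """
    if len(ai_lvef) != len(reader_lvef) or len(ai_lvef) == 0:
        raise ValueError("need two non-empty sequences of equal length")
    diffs = [abs(a - r) for a, r in zip(ai_lvef, reader_lvef)]
    mean_abs_diff = sum(diffs) / len(diffs)
    share_within = sum(d < tolerance for d in diffs) / len(diffs)
    return mean_abs_diff, share_within


if __name__ == "__main__":
    # Made-up example volumes and LVEF values, for illustration only.
    print(lvef_percent(100, 40))   # a heart ejecting 60 of 100 mL
    ai = [58.0, 33.0, 52.0, 70.0]
    reader = [60.0, 35.0, 40.0, 68.0]
    print(agreement_summary(ai, reader))
```

With these hypothetical inputs, three of the four cases fall within ten points (a share of 0.75) and the mean absolute difference is 4.5 points; a single large disagreement pulls the average up without changing the "within ten points" share much.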

Hidden clues to age and sex inside heart motion

Beyond pumping strength, the AI was surprisingly good at estimating a person’s age and sex from their heart ultrasound alone. Estimated age correlated strongly with true age, whether images came from standard machines or handheld devices. Sex classification was also highly accurate across all test groups. Although these traits are already known in the clinic, the ability to infer them reliably from heart motion suggests that ultrasound images contain subtle patterns of aging and biological differences that human eyes do not routinely quantify. The authors suggest that mismatches between AI‑estimated and actual age, for example, might someday reflect a “biological heart age” and help identify people at higher cardiovascular risk.

What this means for future heart care

This study shows that a single AI framework can turn a wide range of routine heart ultrasound clips into useful clinical information without insisting on perfect camera angles or expert operators. By accurately gauging heart pumping function and extracting broader clues about patient characteristics from both standard and handheld scans, the approach could support faster triage in clinics, emergency departments, and even pre‑hospital care. Although the work still needs testing in more racially and ethnically diverse groups and in less controlled real‑world settings, it points toward a future where more caregivers armed with simple handheld scanners can obtain dependable insights into heart health at the bedside.

Citation: Anisuzzaman, D.M., Malins, J.G., Jackson, J.I. et al. A view-flexible deep learning framework for automated analysis of 2D echocardiography. npj Cardiovasc Health 3, 17 (2026). https://doi.org/10.1038/s44325-025-00100-7

Keywords: echocardiography, artificial intelligence, handheld ultrasound, ejection fraction, cardiovascular imaging