Advanced imaging techniques for tumor intraoperative navigation imaging

Seeing Cancer More Clearly in the Operating Room

Cancer surgery often comes down to a delicate trade-off: remove every last cancer cell while sparing as much healthy tissue as possible. This review article explains how a new generation of imaging tools helps surgeons see tumors and their margins in real time during an operation. The appeal is straightforward: these technologies promise fewer repeat surgeries, more precise tumor removal, and better chances of long-term survival, all by giving surgeons a clearer “map” while they work.

Why Better In-Surgery Vision Matters

Cancer is now one of the world’s leading causes of death, and surgery remains a cornerstone of treatment. Yet even the most skilled surgeon has long been limited to what can be seen with the naked eye, felt by hand, or read from scans taken days or weeks before the operation. Traditional tools such as ultrasound, CT, MRI, and PET help plan surgery, but they are often bulky, slow, or not suited to continuous use during an operation. As a result, it can be difficult to tell exactly where a tumor ends and healthy tissue begins, which raises the risk of leaving cancer behind or removing too much normal tissue. The review sets out how “intraoperative imaging” (live imaging used right in the operating room) is changing that picture.

Glowing Tumors and New Ways to Light Them Up

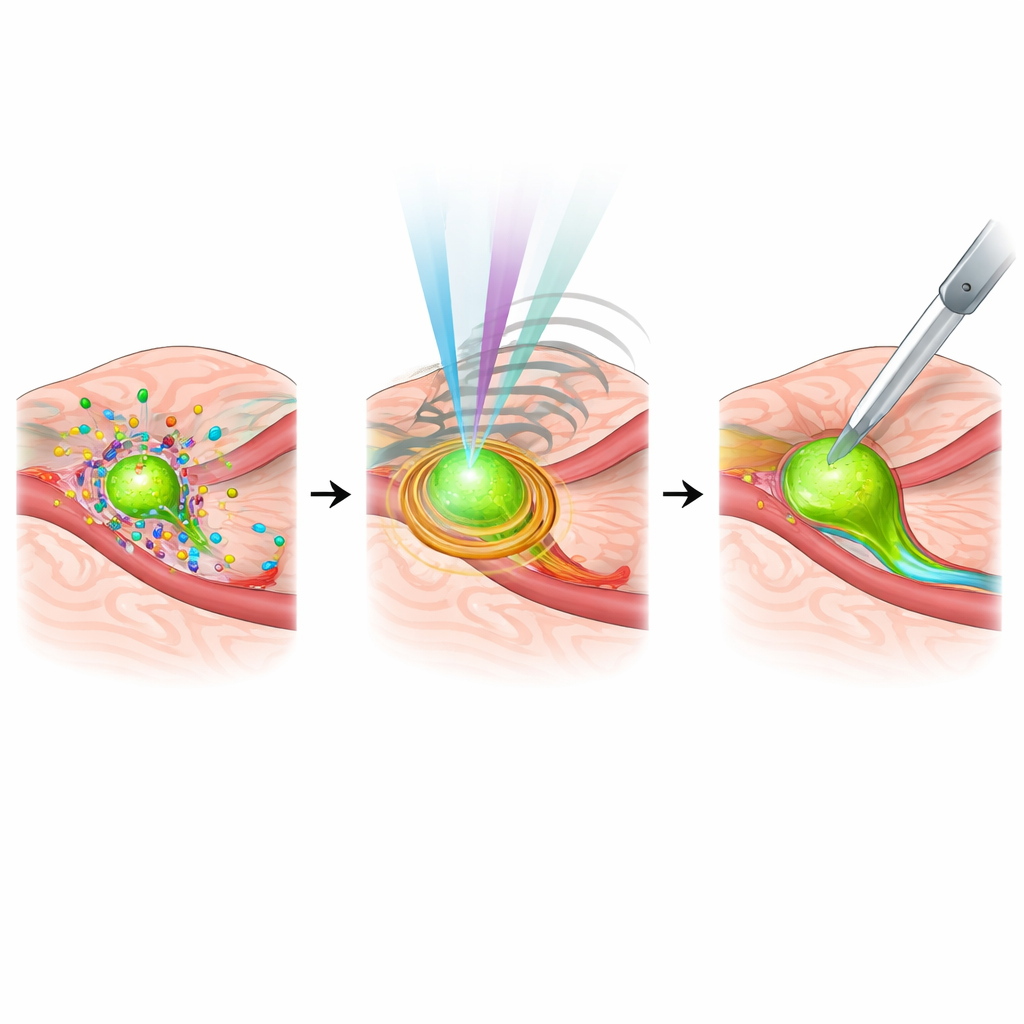

One major advance is fluorescence imaging, in which special dyes or molecular probes make tumors glow under near-infrared light. Older, non-targeted dyes such as indocyanine green have already helped surgeons outline tumors, trace lymph vessels, and find crucial lymph nodes in breast, liver, lung, and stomach cancers. Newer, targeted probes go further by homing in on molecules that are overproduced by tumor cells or their surrounding environment. Examples include probes that attach to growth factor receptors, immune checkpoints, or proteins abundant in the tumor’s supporting tissue or in low-oxygen regions. Some of these agents can even be linked to cancer drugs, pairing precise visualization with therapy in the same molecule. Early clinical trials show that such tracers can uncover hidden tumor deposits and reduce the need for repeat surgery after breast-conserving operations.

Beyond Glow: Sound, Light, and Many Colors

While fluorescence is central, the review highlights several complementary approaches that see different aspects of a tumor. Photoacoustic imaging uses pulses of light to generate sound waves inside tissue, combining the detail of optical methods with the depth reach of ultrasound, and has been able to reveal very small metastases that other scans miss. Multispectral and hyperspectral imaging split light into many bands, capturing subtle differences in how tissues absorb and reflect light; this can distinguish cancer from normal tissue with high accuracy in breast, cervical, and gastrointestinal tumors. Advances in ultrasound—including techniques that measure tissue stiffness—add depth information and help show how far cancer has infiltrated. Raman spectroscopy, which reads the chemical “fingerprint” of tissue based on how molecules scatter light, offers label-free, highly specific identification of cancer during surgery, especially when combined with other modalities.
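For readers who want a concrete picture of how multi-band light measurements can separate tissue types, here is a minimal sketch in Python. It uses the spectral angle, a common textbook way of comparing spectra, which is swapped in here for illustration and is not necessarily the method used in the studies the review covers. The five-band reflectance values, the labels, and the function names are all hypothetical.

```python
import math

def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cos)

def classify_pixel(spectrum, references):
    """Return the label whose reference spectrum has the smallest angle."""
    return min(references,
               key=lambda label: spectral_angle(spectrum, references[label]))

# Hypothetical reflectance in five wavelength bands (made-up numbers,
# not measurements from the review).
REFERENCES = {
    "normal": [0.30, 0.35, 0.50, 0.62, 0.70],
    "tumor": [0.22, 0.24, 0.30, 0.45, 0.66],
}

pixel = [0.21, 0.25, 0.31, 0.44, 0.63]  # one hypothetical image pixel
label = classify_pixel(pixel, REFERENCES)
print(label)
```

The spectral angle depends only on the shape of a spectrum, not on its overall brightness, so a dimly lit pixel and a brightly lit pixel of the same tissue are treated alike. Real systems typically use many more bands and trained classifiers.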

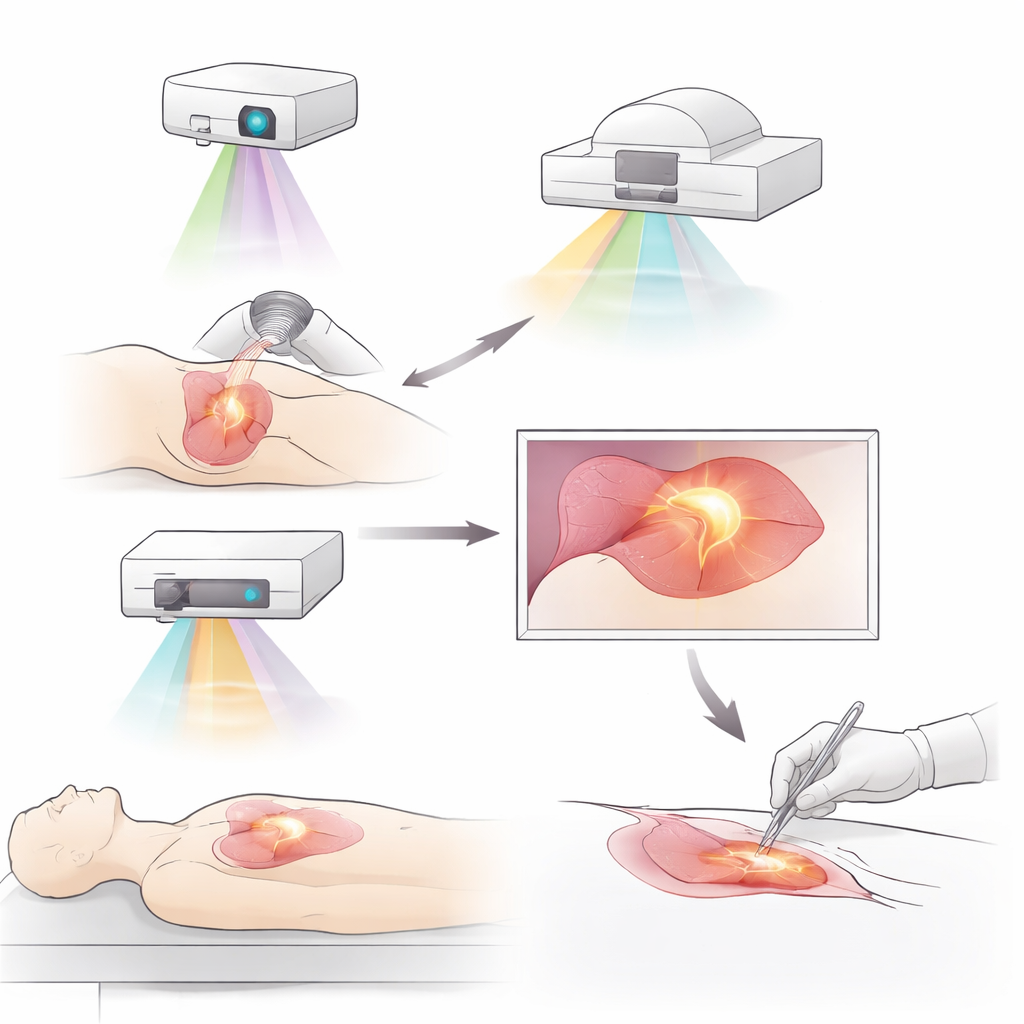

Building 3D Maps and Combining Multiple Views

Another theme of the article is combining images into three-dimensional and multimodal views that surgeons can read intuitively. Three-dimensional reconstructions of blood vessels, lymph channels, and organs, layered with fluorescent signals, help plan precise segmental liver and lung resections and guide difficult lymph node dissections. Hybrid systems that merge PET with optical imaging or pair nuclear medicine tracers with fluorescence allow the same probe to be used for preoperative whole-body scanning and intraoperative guidance. Emerging platforms integrate laser ablation, optical coherence tomography, and robotics to locate and treat lesions automatically and with high precision. These approaches aim to give surgeons both the “big picture” of tumor spread and the fine detail needed to cut along safe, clean margins.

Smarter Systems, Personalized Targets, and Remaining Hurdles

The review also looks ahead to the role of artificial intelligence and personalized medicine. Machine-learning models are already helping distinguish cancerous from normal tissue in real time, recognize critical anatomical structures, and even predict lymph node spread during pancreatic surgery, potentially reducing dependence on rapid pathology. At the same time, imaging probes are being redesigned to match the unique molecular signatures of each patient’s tumor, tying intraoperative images to genetic and molecular profiles. Yet obstacles remain: many systems are expensive, complex, and hard to fit into routine workflows; some require specialized contrast agents with carefully managed safety profiles; and standards for integrating all this data into navigation systems are still evolving.

What This Means for Patients

In accessible terms, the article’s conclusion is that surgeons are gaining something they have never truly had before: the ability to see living cancer with high clarity while they operate. By lighting up tumors, reading their chemistry, mapping them in 3D, and combining multiple types of images—often assisted by AI—these tools can help ensure that more of the tumor is removed and more healthy tissue is preserved. Although cost, training, and technology gaps must be addressed before such systems are widely available, the direction of travel is clear. Advanced intraoperative imaging is poised to become a key part of standard cancer surgery, offering patients more precise operations, fewer recurrences, and better chances of long-term control.

Citation: Li, K., Zhang, Y., Yang, H. et al. Advanced imaging techniques for tumor intraoperative navigation imaging. npj Imaging 4, 18 (2026). https://doi.org/10.1038/s44303-026-00150-1

Keywords: intraoperative imaging, fluorescence-guided surgery, tumor margin detection, multimodal cancer imaging, photoacoustic imaging