Clear Sky Science

Smart microscopy: adaptive microscope control to improve the way we see life

Seeing More by Letting the Microscope Think

Biologists use microscopes to watch living cells, embryos, and tissues in action, but every experiment is a juggling act: sharper, faster imaging usually demands more light, and more light means more damage to delicate samples. This article explains a new generation of “smart” microscopes that behave less like static cameras and more like self-driving cars for biology: systems that watch what is happening in real time and change how they image the sample on their own. It offers a glimpse of how automation and artificial intelligence are transforming the way we observe life, helping scientists capture fleeting events while keeping living samples healthier and experiments more efficient.

From Simple Lenses to Self-Adjusting Machines

The authors trace the story from the first light microscopes of the 1600s to today’s highly motorized, computer-controlled instruments. Over time, better optics, controllable light sources, precise motorized stages, and digital cameras turned microscopes into complex machines. Early automation, such as motorized stages and autofocus in the 1970s and 1980s, could move samples or keep them in focus, but these functions ran alongside image capture and did not change how an experiment unfolded. Only when open-source hardware, 3D printing, and flexible control software such as Micro-Manager and newer platforms arrived did it become practical for researchers to build custom systems that coordinate many parts of a microscope in real time. At this point, microscopes began to cross the line from passive recorders to active experimental partners.

What Makes a Microscope Smart

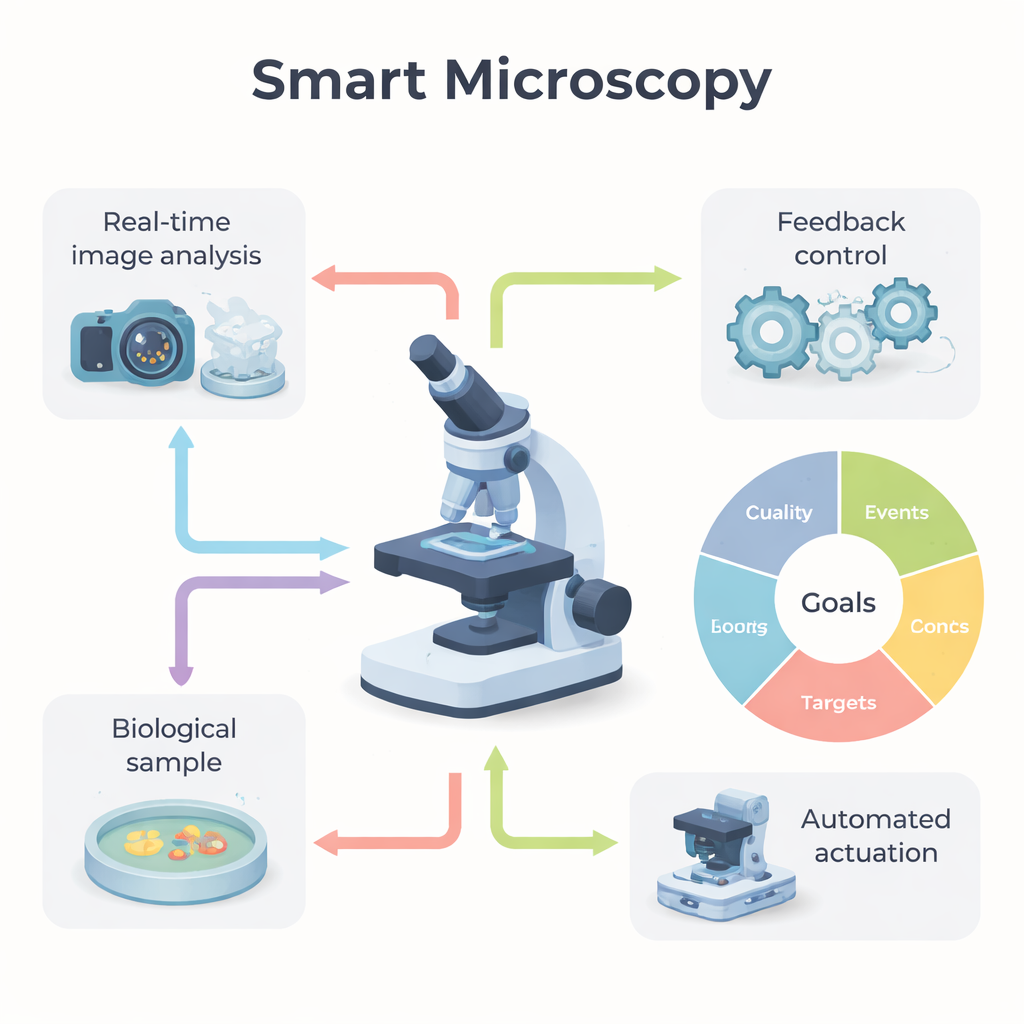

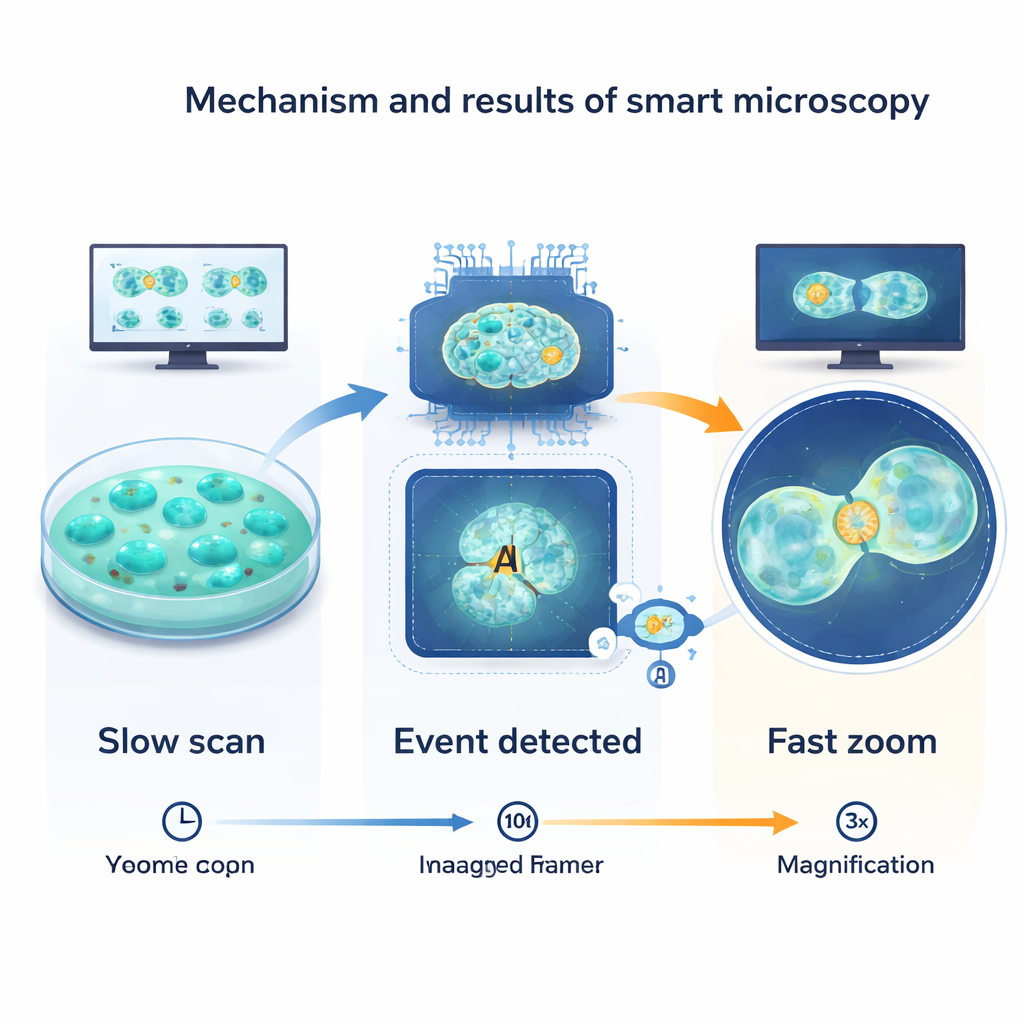

The review defines a “smart microscope” as one that combines three ingredients: real-time analysis of the images it is collecting, a feedback loop that uses those measurements to make decisions, and automated parts that can act on those decisions. Instead of running a fixed script, the system constantly asks: what am I seeing, and should I change how I am imaging? A classic example is watching cells progress through the cell cycle. Most of the time, the microscope can take gentle, infrequent snapshots to avoid light damage. When the system detects the telltale shape changes of a cell entering division, it automatically zooms in, speeds up the frame rate, and adjusts the field of view, capturing the fast event in detail while sparing the rest of the sample unnecessary stress.
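The analyze, decide, act loop behind the cell-cycle example can be sketched in a few lines of Python. This is a minimal toy, not the authors' software: each "frame" is assumed to be a list of per-cell roundness values, and the scoring function `division_score`, the two presets `GENTLE` and `INTENSIVE`, and the 0.8 threshold are all hypothetical. In a real system the score would come from a segmentation or classification model, and the chosen settings would be sent to the microscope's control software.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Settings:
    """Hypothetical acquisition preset (values are illustrative only)."""
    interval_s: float  # time between frames, in seconds
    zoom: float        # relative magnification
    label: str


GENTLE = Settings(interval_s=60.0, zoom=1.0, label="gentle")
INTENSIVE = Settings(interval_s=2.0, zoom=4.0, label="intensive")


def division_score(frame: List[float]) -> float:
    """Stand-in for real-time image analysis. A 'frame' here is a list of
    per-cell roundness values (0 to 1); adherent cells tend to round up as they
    enter mitosis, so the most rounded cell gives the score."""
    return max(frame)


def choose_settings(score: float, threshold: float = 0.8) -> Settings:
    """Feedback decision: use intensive imaging only when the score reaches the threshold."""
    return INTENSIVE if score >= threshold else GENTLE


def run(frames: List[List[float]]) -> List[str]:
    """Analyze each frame, decide, and log the preset the actuators would apply next."""
    log = []
    for frame in frames:
        settings = choose_settings(division_score(frame))
        # In a real system, the settings would be sent to the microscope here.
        log.append(settings.label)
    return log
```

For example, `run([[0.3, 0.4], [0.5, 0.85], [0.9, 0.4], [0.3, 0.2]])` returns `['gentle', 'intensive', 'intensive', 'gentle']`: imaging ramps up only while a rounded, presumably dividing, cell is present. A practical system would likely add hysteresis (separate on and off thresholds) so that a noisy score does not make the settings flicker between modes.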

Five Ways to Use Smarter Imaging

To help researchers design such experiments, the authors group smart microscopy into five practical goal types. Quality-driven systems continuously adjust settings to keep images sharp and bright, for example by correcting optical distortions during deep-tissue imaging or holding the focus steady as a specimen moves. Event-driven systems hunt for rare happenings—cell division, sudden signaling bursts, protein clumps—and only switch to intensive imaging when they appear. Target-driven approaches keep a chosen object, such as a single cell or worm, centered and properly illuminated over long periods. Information-driven microscopes use prior knowledge or population statistics to focus only on the most informative regions, such as automatically spotting unusual cells in a large field and then imaging them in more detail. Finally, outcome-driven systems go a step further: they not only watch but also intervene, using tools like light-activated proteins to steer cell behavior and adjusting their actions based on how the cells respond.
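For the quality-driven case, one common way to hold focus is to sweep the focal position, score each frame with a sharpness measure, and move to the best-scoring plane. The sketch below uses the Brenner focus measure on a one-dimensional line profile. The defocus model (a moving-average blur that widens by one pixel per micrometer of defocus), the `simulated_camera` helper, and the z values are hypothetical assumptions chosen so the example runs without hardware.

```python
from typing import Callable, List, Sequence


def brenner_sharpness(profile: Sequence[float]) -> float:
    """Brenner focus measure: sum of squared differences between pixels two
    apart. In-focus images have steeper edges, so they score higher."""
    return sum((profile[i + 2] - profile[i]) ** 2 for i in range(len(profile) - 2))


def box_blur(profile: Sequence[float], radius: int) -> List[float]:
    """Crude defocus model: moving average of the given radius, window clipped at the ends."""
    n = len(profile)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        window = profile[lo:hi]
        out.append(sum(window) / len(window))
    return out


def simulated_camera(true_focus_um: float) -> Callable[[float], List[float]]:
    """Hypothetical stand-in for 'move the focus to z and grab a frame'."""
    sample = [0.0] * 10 + [1.0] * 10 + [0.0] * 10 + [1.0] * 10  # stripes with sharp edges

    def grab(z_um: float) -> List[float]:
        radius = round(abs(z_um - true_focus_um))  # 1 px of blur per µm of defocus (assumed)
        return box_blur(sample, radius)

    return grab


def autofocus(grab: Callable[[float], List[float]], z_candidates: Sequence[float]) -> float:
    """Quality-driven step: sweep z, score each frame, return the sharpest z."""
    return max(z_candidates, key=lambda z: brenner_sharpness(grab(z)))
```

With `grab = simulated_camera(true_focus_um=3.0)`, `autofocus(grab, [0, 1, 2, 3, 4, 5, 6])` returns `3`. Real autofocus routines usually work on 2D images and refine the search around a coarse optimum; the exhaustive sweep here just keeps the idea visible.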

How Smart Microscopes Decide and Act

Under the hood, smart microscopy relies on three technical pillars. First, real-time image analysis extracts useful information from each frame: identifying cell shapes, tracking motion, measuring brightness, or classifying patterns. Recent advances in deep learning have made it much easier to segment cells, detect subtle events, and even predict what will happen next. Second, feedback control logic translates those measurements into decisions. Sometimes this is simple, such as turning a light source on or off, but more advanced setups use control theory or adaptive algorithms to continually nudge the system toward a desired state. Third, actuators carry out the decisions: motorized stages and optics shift the field of view or switch wavelengths, light or chemicals are delivered as controlled perturbations, data are processed or discarded on the fly to manage storage, and even user communication can be automated, for example by alerting a scientist when something interesting occurs.
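As one illustration of control logic that nudges the system toward a desired state, the sketch below uses a textbook proportional-integral (PI) controller to keep a steadily drifting object centered, as a target-driven system might. It is a hypothetical, noise-free simulation: the function `track_target`, the constant drift, and the gains `kp` and `ki` are illustrative choices, and the exactly computed offset stands in for the output of a real tracking algorithm.

```python
from typing import List


def track_target(drift_per_step: float, steps: int, kp: float, ki: float) -> List[float]:
    """Simulate keeping a drifting object centered with a PI controller.

    Each step: the object drifts, image analysis measures its offset from the
    field-of-view center (computed exactly here, i.e. noise-free), and the
    stage moves by kp * error + ki * accumulated_error.
    Returns the offset measured at each step (hypothetical units, e.g. µm)."""
    target, stage, integral = 0.0, 0.0, 0.0
    errors = []
    for _ in range(steps):
        target += drift_per_step              # sample drifts (e.g. thermal drift, cell motility)
        error = target - stage                # measured offset from the center
        integral += error                     # accumulated error for the integral term
        stage += kp * error + ki * integral   # actuator: motorized stage correction
        errors.append(error)
    return errors
```

With `drift_per_step=1.0` and `kp=0.5`, a proportional-only controller (`ki=0.0`) settles at a constant lag of 2.0 (the drift divided by `kp`), whereas adding the integral term (`ki=0.2`) drives the offset toward zero. This is a standard property of proportional versus PI control under constant drift; real systems must also cope with measurement noise and actuator delays.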

Hurdles, Community Efforts, and What Comes Next

Despite rapid progress, smart microscopy still faces key obstacles. Complex systems can be hard to set up and tune, and both human choices and algorithm training data can introduce subtle biases. Labs use a patchwork of hardware and software that often do not talk to each other smoothly, and huge data volumes strain storage and analysis pipelines. The authors argue that the future lies in interoperable standards, open interfaces, shared datasets, and community-built tools. They highlight initiatives such as SmartMicroscopy.org and working groups that collect protocols, code, and case studies to lower the entry barrier. For non-specialists, the main takeaway is that microscopes are becoming adaptable, collaborative tools: instead of simply taking pictures, they will increasingly help decide where, when, and how to look, turning raw image streams into richer and more meaningful views of living systems.

Citation: Rates, A., Passmore, J.B., Norlin, N. et al. Smart microscopy: adaptive microscope control to improve the way we see life. npj Imaging 4, 14 (2026). https://doi.org/10.1038/s44303-026-00145-y

Keywords: smart microscopy, adaptive imaging, bioimaging automation, AI in microscopy, live-cell imaging