Clear Sky Science

In their own words: case studies of adolescent smartphone language preceding suicide-related hospitalizations

Why Your Teen’s Texts May Matter More Than You Think

As smartphones become constant companions, they quietly capture the everyday words, moods, and worries of young people. This study asks a pressing question: can the language teens type on their phones, in the weeks and days before a mental health crisis, reveal when they are in serious danger of attempting suicide? By examining real-world texting patterns from high‑risk adolescents, the researchers explore whether artificial intelligence tools can help clinicians spot short‑term warning signs—and where those tools still fall short.

Following Five Teens Through a Dangerous Month

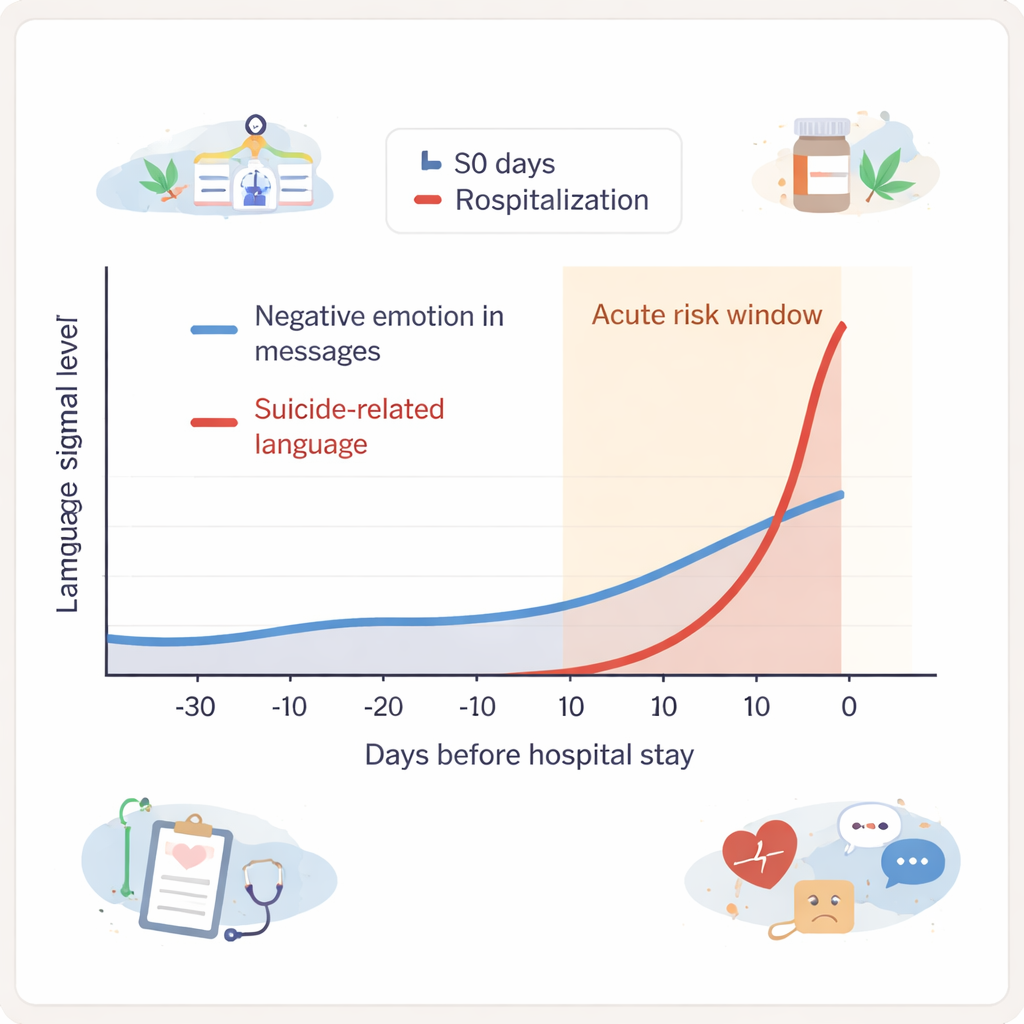

The study focused on five adolescents who were already considered at high risk for suicide and who later experienced a suicide-related hospitalization. For about six months, an app running quietly on their own phones recorded everything they typed on the keyboard (messages, searches, notes) while leaving out what others sent to them. On average, more than 21,000 outgoing text entries were collected from each teen and heavily de-identified to protect privacy. The researchers then zoomed in on the 30 days before each hospitalization, dividing this period into a 20‑day “baseline” phase and a 10‑day “acute risk” phase right before the hospital stay.
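To make the time windows concrete, here is a minimal sketch of how a typed entry's date could be labeled relative to a hospitalization. It assumes days are counted backward from the admission date and that the admission day itself falls outside the window. The function name `assign_phase` and these boundary choices are illustrative assumptions, not the researchers' code.

```python
from datetime import date
from typing import Optional

# Illustrative constants for the window described above:
# 30 days before admission = 20-day baseline + 10-day acute-risk phase.
WINDOW_DAYS = 30
ACUTE_DAYS = 10


def assign_phase(entry_day: date, admission_day: date) -> Optional[str]:
    """Label a typing day relative to a hospitalization (hypothetical helper).

    Returns "acute" for the 10 days immediately before admission,
    "baseline" for the 20 days before those, and None for any day outside
    the 30-day window. Excluding the admission day itself is an assumption.
    """
    days_before = (admission_day - entry_day).days
    if 1 <= days_before <= ACUTE_DAYS:
        return "acute"
    if ACUTE_DAYS < days_before <= WINDOW_DAYS:
        return "baseline"
    return None


admission = date(2024, 3, 31)
print(assign_phase(date(2024, 3, 25), admission))  # acute
print(assign_phase(date(2024, 3, 10), admission))  # baseline
print(assign_phase(date(2024, 2, 1), admission))   # None
```

Working in whole days keeps the sketch simple; a real pipeline would also have to decide how to handle time zones and entries typed late at night.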

What the Words Revealed Before Crisis

Using natural language processing (NLP), the team looked for several types of signals in the typed text. One set of tools searched for suicide-related language, using a youth-focused dictionary that recognized not only standard phrases but also slang and emojis. Another tool, based on modern AI models, estimated whether messages expressed negative emotion. A third method grouped messages into broad themes, such as school, treatment, sleep, substance use, or death. For four of the five teens, both suicide-related language and negative emotion increased in the 10 days before hospitalization, compared with each teen’s own average pattern across the study. Suicide-related language often jumped sharply in the final five days, while negative emotion rose more gradually across the last 10 days.
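Two ideas in this paragraph can be sketched in a few lines: flagging entries that match a lexicon (including slang and emoji), and comparing a teen's acute-phase rate with that same teen's overall rate. This is a minimal illustration under stated assumptions. The tiny `RISK_TERMS` list is a made-up placeholder, not the study's youth-focused dictionary, and the simple rate ratio stands in for whatever statistical comparison the authors actually used.

```python
import re
from typing import Iterable, List

# Placeholder lexicon for illustration only; the study's dictionary is not reproduced here.
RISK_TERMS = ("want to die", "kms", "no reason to live", "🪦")


def is_flagged(text: str, terms: Iterable[str] = RISK_TERMS) -> bool:
    """True if any lexicon term appears in the text (case-insensitive)."""
    lowered = text.lower()
    for term in terms:
        if term.isascii():
            # Whole-word/phrase match so "kms" does not fire inside longer words.
            if re.search(r"\b" + re.escape(term) + r"\b", lowered):
                return True
        elif term in lowered:
            # Emoji have no word boundaries, so use a plain substring check.
            return True
    return False


def flag_rate(entries: List[str]) -> float:
    """Fraction of entries flagged by the lexicon (0.0 for an empty list)."""
    if not entries:
        return 0.0
    return sum(is_flagged(e) for e in entries) / len(entries)


def acute_vs_own_average(acute: List[str], all_entries: List[str]) -> float:
    """Ratio of a teen's acute-phase flag rate to their own overall rate.

    Values above 1.0 mean the acute phase has proportionally more flagged
    entries than that teen's typical messages.
    """
    overall = flag_rate(all_entries)
    acute_rate = flag_rate(acute)
    if overall == 0.0:
        return float("inf") if acute_rate > 0 else 0.0
    return acute_rate / overall


all_entries = ["see u at practice", "ugh math test", "honestly want to die lol",
               "kms this homework", "ok", "dinner?", "tired", "night"]
acute = ["honestly want to die lol", "kms this homework", "tired", "night"]
print(acute_vs_own_average(acute, all_entries))  # 2.0
```

Note that a lexicon hit like "kms this homework" may well be a joke: word matching alone cannot tell casual exaggeration from genuine despair, which is one of the context problems the study describes.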

Signals of Risk—and Signals of Distress

The patterns were promising but complicated. The same warning signs—suicide language and gloomy tone—also appeared at other times outside the immediate crisis window. That suggests these signals may mark periods of serious distress, but not always moments when a suicide attempt is imminent. When clinicians reviewed the text histories directly, they saw that spikes in suicide-related language often lined up with suicidal thoughts, actual attempts, or urgent help‑seeking. Topic models picked up useful themes like substance use and treatment discussions, which sometimes coincided with risky moments.

What Computers Miss That Humans See

However, the AI tools frequently missed issues that clinicians viewed as central triggers, such as fights with friends or family, bullying, romantic conflict, or feelings of rejection. These situations unfolded across many short messages, and the models mostly treated each entry in isolation, without understanding the wider story. As a result, interpersonal conflicts, changes in how teens felt about key events, or subtle shifts from joking about suicide to expressing genuine despair often slipped past the algorithms. The researchers argue that future systems must do more than read single messages: they need to connect conversations over time, and ideally combine text with other passive data such as sleep patterns or movement to improve accuracy.
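One simple first step toward "connecting conversations over time" is to group short entries into sessions before analyzing them, so that a run of related messages is read together rather than one at a time. The sketch below is an illustrative assumption, not a method from the study: it starts a new session whenever more than 30 minutes pass between entries, and the function name and gap threshold are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def group_into_sessions(
    entries: List[Tuple[datetime, str]],
    max_gap: timedelta = timedelta(minutes=30),
) -> List[List[str]]:
    """Group (timestamp, text) entries into sessions split by long pauses.

    Entries are sorted by time; a new session starts whenever the gap since
    the previous entry exceeds max_gap (a hypothetical 30-minute default).
    """
    sessions: List[List[str]] = []
    last_time = None
    for ts, text in sorted(entries, key=lambda pair: pair[0]):
        if last_time is None or ts - last_time > max_gap:
            sessions.append([])
        sessions[-1].append(text)
        last_time = ts
    return sessions


entries = [
    (datetime(2024, 3, 20, 10, 0), "did u see what she posted"),
    (datetime(2024, 3, 20, 10, 5), "everyone is ignoring me"),
    (datetime(2024, 3, 20, 10, 20), "whatever"),
    (datetime(2024, 3, 20, 23, 0), "mom took my phone earlier"),
    (datetime(2024, 3, 20, 23, 10), "can't sleep again"),
]
for session in group_into_sessions(entries):
    print(" | ".join(session))
```

Grouping by time gaps only gathers nearby messages; understanding an unfolding conflict across sessions and days would still require models that track meaning over longer spans.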

Looking Ahead: Promise with Important Limits

This work shows that smartphone language can provide a rich, low‑burden window into what adolescents are experiencing between clinic visits. Automated methods already do a decent job of spotting obvious red flags (direct suicidal talk and strongly negative emotion), especially in the days just before a crisis. But they are much less capable of grasping the personal, social, and situational context that human clinicians use to judge risk. For families and health professionals, the message is twofold: digital language data could someday help deliver earlier, just‑in‑time support to youth in danger, but it must be developed with great care, strong privacy protections, and in partnership with clinicians, not as a replacement for them.

Citation: Treves, I.N., Bloom, P.A., Salem, S. et al. In their own words: case studies of adolescent smartphone language preceding suicide-related hospitalizations. NPP—Digit Psychiatry Neurosci 4, 5 (2026). https://doi.org/10.1038/s44277-026-00057-0

Keywords: adolescent suicide risk, smartphone language, digital phenotyping, natural language processing, mental health monitoring