Clear Sky Science

Influence of solution efficiency and valence of instruction on additive and subtractive solution strategies in humans, GPT-4, and GPT-4o

Why doing less is surprisingly hard

When we try to fix things in everyday life, whether rewriting an email, reorganizing a room, or redesigning a policy, we usually think about what to add, not what to remove. This quiet tendency to pile on rather than pare back can clog our lives with clutter, bloated software, and overcomplicated rules. The article explores how strong this “more is better” habit really is, and whether large language models such as GPT-4 and GPT-4o share, soften, or even intensify this human bias.

How adding beats subtracting in our minds

Psychologists have shown that people often overlook solutions that involve taking things away, even when subtraction would be simpler or more effective. Adding feels natural and is reinforced by culture and language: words like “more” and “higher” are linked with improvement and success, whereas “less” can sound like loss or failure. This bias shows up in many domains, from healthcare that favors additional treatments over stopping harmful habits, to environmental policies that emphasize recycling instead of simply producing less waste. The current research asks whether this human tilt toward addition also appears in powerful language models trained on huge text collections.

Testing people and AI on simple puzzles

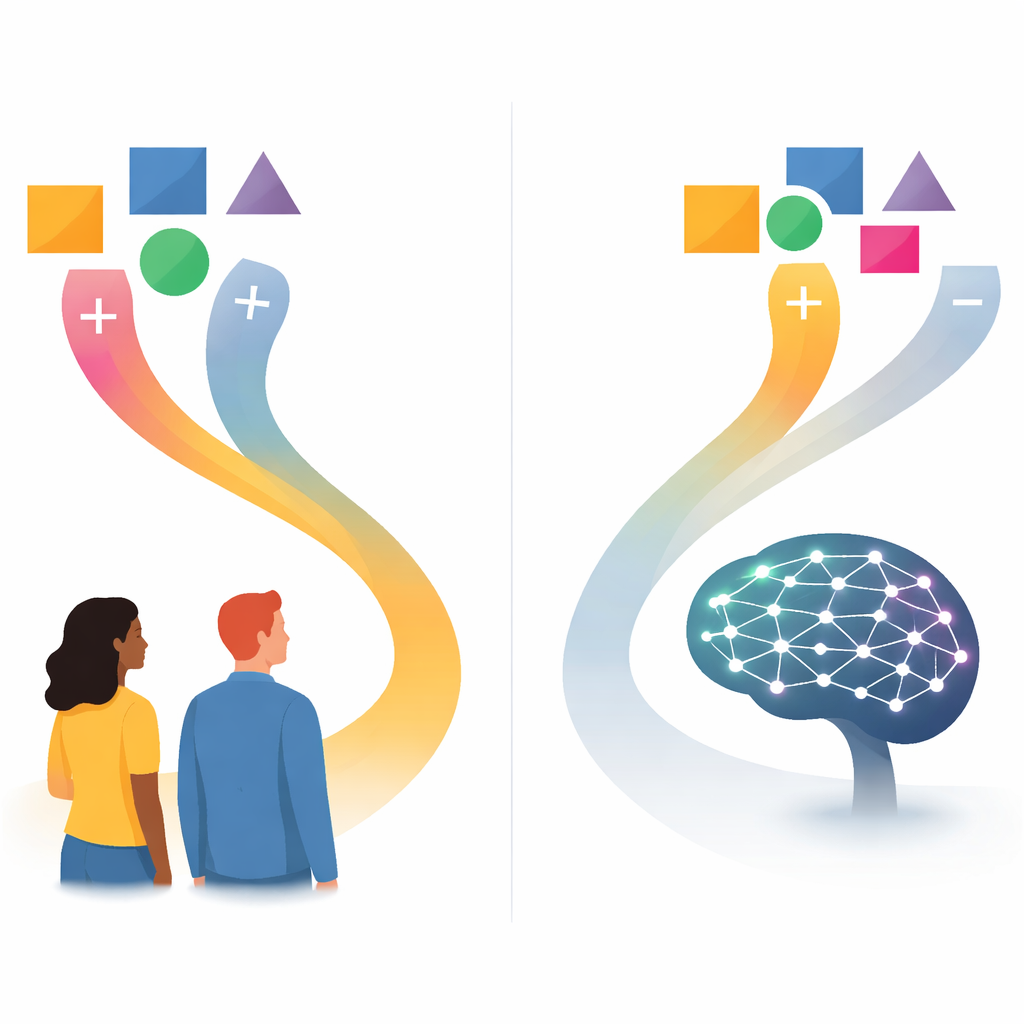

The researchers ran two large studies that compared human participants with GPT-4 and then with its successor GPT-4o. Both humans and AI faced two kinds of problems. In a spatial “symmetry” task, they had to make a small grid pattern perfectly symmetrical by toggling boxes on or off, which could be done either by filling extra boxes (addition) or clearing existing ones (subtraction). In a linguistic “summary” task, they received a news article and an existing summary and were asked to change it under word-count constraints, again allowing either adding or cutting words. The team also manipulated two key factors: whether adding and subtracting were equally efficient or whether subtraction clearly required fewer steps, and whether instructions were phrased in neutral terms (“change”) or with a positive spin (“improve”).
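To make the efficiency manipulation in the symmetry task concrete, here is a minimal Python sketch that counts how many box toggles an add-only and a remove-only solution would each need. It is an illustration only, not the researchers' materials. The grid size, the use of two mirror axes (left-right and top-bottom), and the helper name `solution_costs` are all assumptions, since this summary does not specify them.

```python
from typing import List, Tuple

Grid = List[List[int]]  # 1 = filled box, 0 = empty box


def solution_costs(grid: Grid) -> Tuple[int, int]:
    """Return (additive_steps, subtractive_steps) needed to make the grid
    symmetric across both its vertical and horizontal midlines.

    Assumption: symmetry is judged on both axes; the study's exact rule
    is not stated in this summary.
    """
    rows, cols = len(grid), len(grid[0])
    add_steps = 0
    sub_steps = 0
    seen = set()
    for r in range(rows):
        for c in range(cols):
            # All cells that must match (r, c) under the two mirror axes.
            group = {
                (r, c),
                (r, cols - 1 - c),
                (rows - 1 - r, c),
                (rows - 1 - r, cols - 1 - c),
            }
            if group & seen:
                continue  # this mirror group was already counted
            seen |= group
            filled = sum(grid[i][j] for i, j in group)
            if 0 < filled < len(group):
                add_steps += len(group) - filled  # fill the empty mirror cells
                sub_steps += filled               # or clear the filled ones
    return add_steps, sub_steps


# One stray box in a corner: removing it takes 1 step, mirroring it takes 3.
one_extra = [
    [1, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]

# Two boxes along the top edge: adding and removing both take 2 steps.
balanced = [
    [1, 0, 0, 1],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]

print(solution_costs(one_extra))  # (3, 1)
print(solution_costs(balanced))   # (2, 2)
```

In this toy setup, `one_extra` corresponds to a condition where subtraction clearly requires fewer steps, and `balanced` to one where both strategies are equally efficient.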

What people did versus what the machines did

Across both studies, a clear pattern emerged: humans and language models alike preferred additive solutions, but the models did so far more strongly. People showed a robust pull toward adding boxes or words, yet they still paid attention to efficiency. When subtraction was the faster route, they were noticeably more willing to remove elements. In contrast, GPT-4 often behaved in the opposite way, producing even more additive answers exactly when subtraction would have been more efficient. GPT-4o reduced this mismatch somewhat in the text-based summary task, where its choices more closely tracked human behavior, but in the grid task it still largely ignored efficiency. In many conditions, especially for GPT-4o, additive responses reached near-ceiling levels.

How positive wording nudges choices

The emotional tone of the instructions also mattered, but in specific ways. In the spatial grid task, changing the verb from neutral (“change”) to positive (“improve”) did not reliably alter strategies for either humans or models. In the summary task, however, the story was different. When the instructions repeatedly used positive wording, both GPT models and, in the second study, human participants produced more additive responses. This lines up with broader language statistics showing that words related to improvement are more often paired with ideas of adding rather than removing. It suggests that subtle emotional framing in prompts can push both people and AI toward “more” even when “less” would do.

Why these findings matter for everyday decisions

The key message is that people and the AI systems we build share a strong preference for solutions that add rather than subtract, and current language models often amplify this tendency. Humans still show some flexibility, adjusting when subtracting is clearly more efficient, but the models largely follow patterns embedded in the language they were trained on. As these systems increasingly help write policies, design systems, or suggest everyday improvements, they may quietly steer us toward more complex, more cluttered answers. Recognizing this shared “addition bias” is a first step toward designing tools and habits that remind us to ask not only “What can we add?” but also “What can we remove?”

Citation: Uhler, L., Jordan, V., Buder, J. et al. Influence of solution efficiency and valence of instruction on additive and subtractive solution strategies in humans, GPT-4, and GPT-4o. Commun Psychol 4, 41 (2026). https://doi.org/10.1038/s44271-026-00403-0

Keywords: addition bias, subtractive reasoning, large language models, human–AI comparison, decision making